Abstract

Introduction

Artificial intelligence (AI) is becoming omnipresent, dramatically transforming various fields globally and permeating our professional and personal lives in many areas. The travel industry is one of the main sectors affected by AI innovation. Indeed, the advancements in AI present great opportunities for tourism destinations and hospitality companies to promote their products and services and influence travel behavior (I. Tussyadiah & Miller, 2019). As a result, AI applications, including travel recommender systems (Borràs et al., 2014), service robotics (Hou et al., 2021; Tuomi et al., 2021), and other autonomous systems (I. P. Tussyadiah et al., 2020), are increasingly integrated into business processes and customer experience.

A key area of implementation of AI technology in the travel sector is artificially intelligent assistants (hereafter “AI assistants”). Powered by natural language processing and machine learning tools, which are two sub-domains of AI, AI assistants (also known as “chatbots,” “conversational agents,” “virtual assistants,” “personal assistants,” and “voice assistants”) are computer programs that interpret natural-language input from a user (in the form of text or voice commands, or both) and generate appropriate responses to the user (Khan & Das, 2018; Ling et al., 2021). AI assistants are implemented in various usage scenarios, helping users with basic cognitive tasks such as searching for information, planning/scheduling, and messaging (Maedche et al., 2019). Prominent examples include voice-based assistants, such as Google Assistant, Siri, Cortana, and Alexa, and text-based AI assistants (chatbots), usually embedded in websites and messaging apps such as Facebook Messenger and WhatsApp. The launch of OpenAI’s ChatGPT significantly extended the capabilities of chatbots by integrating generative language models and deep learning, and the widespread global adoption of ChatGPT has demonstrated an enormous range of use cases and contexts, including tourism and hospitality (Dwivedi et al., 2023). For example, Expedia recently integrated ChatGPT features into its mobile app to provide an interactive experience in travel planning (Whitmore, 2023).

AI assistants offer great value to consumers (e.g., 24/7 customer service, personalized recommendations, automated customer service tasks, and fast responses), and their ability to complete jobs more efficiently than humans makes AI-enhanced services appealing for businesses to consider (Balakrishnan et al., 2022). However, AI assistant technology has yielded mixed results, with high failure rates in the interaction process (Araujo, 2018). The conversational user interfaces of AI assistant applications face substantial challenges, such as capturing various open-ended questions (Brandtzaeg & Følstad, 2018). AI assistants do not always understand every word users speak and have not yet mastered sophisticated conversations.

Whilst existing research points to users perceiving intelligent agents to be human-like and smart (Moussawi & Koufaris, 2019), there are no established scales that measure user perceptions of AI assistants that can apply to both text-based chatbots and voice-based speakers embedded in various technological applications/devices. Current measures of perceived intelligence are mainly based on user perceptions of service robotics (Lu et al., 2019), with intelligence partly determined by the perceptions of the physical appearance and movement of robots, and with anthropomorphism, which focuses mainly on facial features and mental capacities (Moussawi & Koufaris, 2019). These measures do not capture the core features that make AI assistants unique. Especially for text-based chatbots, users’ perceptions of intelligence mostly depend on the dialog's textual characteristics due to the disembodiment property (Laban, 2021).

While measurement scales of AI assistants have been developed in marketing and business contexts (Israfilzade, 2021; Moussawi & Koufaris, 2019), scholars have not yet developed a scale of AI assistants in the travel context. As AI assistants have been increasingly implemented by tourism, leisure, hospitality, and airline companies and have played a vital role in providing convenient, 24/7 customer services such as hotel booking, airline ticketing, and recommendations for local tourism attractions (Calvaresi et al., 2023), understanding which key dimensions constitute an intelligent interaction with AI assistants is critical for successful implementation of the technology in travel settings. Notably, traveling is a complex process involving exchanges with multiple service providers, numerous decisions, various sources of information, and many different activities (e.g., planning, booking, navigation) at various stages of the travel journey. Users’ perceptions of AI assistants may vary depending on the specific context in which they are used. Certain features of AI assistants that may not be considered critical in online shopping or general information search, such as navigation or instant language translation, are likely to be highly salient in the travel context. Thus, the scales developed to measure AI assistants in other sectors may not be applicable in the travel context. More context-driven aspects must be considered in the scale development and in predicting its impacts on travelers’ behavioral outcomes. Therefore, it is necessary and timely to develop measures of perceived intelligence of AI assistants for travel purposes.

This study makes several innovative contributions to the literature and the tourism/hospitality industry. Based on DeLone and McLean’s (2003) updated Information System Success Model, this paper developed a new reliable and valid scale for assessing user perceptions of the intelligence of AI assistants in the travel industry. Given that research on user interaction with AI assistants in the travel context is nascent, the development of this scale provides a standardized and validated tool for future research to assess how travelers perceive the intelligence of AI assistants in a travel usage context and its subsequent impact on users’ attitudes and behaviors. Furthermore, this study provides developers with valuable insights and guidelines for designing AI assistants, and provides travel industry service providers/managers with guidance on implementing AI assistants and their associated marketing and communication strategies.

Literature Review

AI Assistants in Travel

Existing literature has commonly defined AI assistants based on the characteristics of personalization (i.e., ability to respond to user’s specific requests and provide information based on user-specific preferences and history), autonomy (i.e., ability to operate upon command and without the user’s continuous intervention), awareness of/reactivity to the environment (i.e., ability to detect conditions in the physical/virtual environment), learning and adaptation to change (i.e., ability to adapt its behavior based on prior events and new circumstances), communication (i.e., ability to interact via natural language processing and language production abilities), and task completion (i.e., ability to complete tasks within a favorable and expected timeframe for the user, and to find and process the necessary information for completing tasks) (Moussawi & Koufaris, 2019).

Extant research has discussed adopting and using AI assistants/chatbots for tourism and hospitality (Ivanov & Webster, 2020; Yu, 2020). Travel and hospitality companies such as online travel agencies (e.g., Booking.com, Expedia, and Kayak) and hotels (e.g., AccorHotels, Hyatt, and Marriott) have used AI assistants to deliver more convenient and faster responses to customers’ queries. These travel AI assistants (also known as “virtual travel agents” and “travelbots”) assist travelers throughout the entire journey, from pre-trip planning and travel booking to on-trip customer support and post-trip service processes, by making it easy for travelers to receive relevant and useful information (Rokou, 2018). For example, on an online travel agency’s website, an AI assistant, rather than a human staff member, provides information to potential tourists; this may include information regarding tourism products, itineraries, and so on, which is typically pre-determined by the online travel agency to ensure objective and accurate replies (Zhu et al., 2023). Thus, the AI assistant helps travelers make various decisions on flights, hotels, tour packages, attractions, etc. (I. Tussyadiah & Miller, 2019). Today, advanced generative AI such as ChatGPT integrated into travel booking apps can advise potential tourists on where to go, where to stay, and what to do, with broader knowledge and instant responses. Figure 1 shows an example of a conversation between a tourist and Expedia’s app with ChatGPT integration.

Figure 1. Conversation example with Expedia’s ChatGPT integration.

Additionally, an increasing number of digital voice assistants (e.g., Kayak’s integrated digital voice assistant, KLM’s “Blue Bot”) have been launched on major voice platforms such as Amazon Alexa and Google Assistant, providing travelers with customized booking assistance and packing tips according to destination information so that travelers can focus on planning their trip itineraries rather than travel logistics (Gutierrez & Khizhniak, 2018; VB, 2018). Many hoteliers consider voice-based AI assistants a top-impacting technology to enhance hotel services and streamline guests’ experiences (Buhalis & Moldavska, 2021). Jiménez-Barreto et al. (2023) recently explored tourists’ interactive experiences (information production and process) with Google Assistant when planning trips. As AI assistants are an emerging technology in the travel and tourism industry, this study seeks to better understand travelers’ behavioral intentions to use AI assistants for travel planning and booking.

Perceived Intelligence of AI Assistants

In the literature on artificial intelligence, the Turing test provides an operational definition of intelligence in computers whereby a machine is considered intelligent if it can deceive the human interrogator into thinking that it is not a computer but a human (Russell & Norvig, 2010). In the human-robot interaction literature, the perceived intelligence of AI assistants could be considered as their competence, efficiency, use, and capacity to process and produce natural language and deliver effectual output (Mirnig et al., 2017). Based on these characteristics of AI assistants, this study defines perceived intelligence of AI assistants as the formed perceptions about the extent to which an AI assistant is capable of understanding and processing the natural language inputs from users efficiently and logically and producing useful and goal-directed information that meets users’ demands (Moussawi & Koufaris, 2019). The cognitive nature of perceptions of AI assistants’ intelligence will impact users’ evaluations of their performance and usefulness as it relates to how effective the AI assistant is.

Warner and Sugarman (1986) developed an intellectual evaluation scale that consists of five seven-point semantic differential items: competence (incompetent/competent), knowledge (ignorant/knowledgeable), responsibility (irresponsible/responsible), intelligence (unintelligent/intelligent), and sensibility (foolish/sensible). However, Moussawi and Koufaris (2019) argued that using a single-item scale of “intelligence” does not provide enough depth in measuring this construct. Instead, perceived intelligence should be measured on a more comprehensive scale that captures a more multidimensional view. Moreover, as previously stated, the measurements of physically embodied robotics cannot reflect all the unique characteristics of AI assistants, where perceived intelligence is mainly dependent on the textual properties of conversation. Moussawi and Koufaris (2019) suggested the dimensions of intelligent agents’ intelligence include autonomy, physical world awareness, virtual world awareness, pro-activeness, completion time, communication ability, logical reasoning, learning ability, and output quality, all of which refer to the quality of information provided by AI assistants. However, most of the dimensions of this intelligence scale focused on intelligence competence, with less attention to output quality. Meanwhile, information quality has been widely utilized in the information system literature as a context-specific and decision-relevant construct to measure information systems’ success (Wieder & Ossimitz, 2015), notably as a key concept in DeLone and McLean’s Information System Success Model (DeLone & McLean, 2003). Therefore, a new scale capturing both the competence and output quality dimensions of intelligence is needed.

In the travel industry, AI assistants are expected to perform various tasks, such as searching for travel information, booking flights and accommodations, and providing information on activities to do in different destinations. These tasks require access to a wide range of information sources and application programming interface integration that allows different software applications to communicate and share data seamlessly. As a result, travelers may have different needs and expectations when interacting with AI assistants in this domain, which can impact their perceived intelligence of AI assistants, including their expectations of what constitutes intelligence (i.e., attributes and dimensions). Furthermore, the travel industry is constantly evolving. AI assistants need to be more sensitive and adapt quickly to new information and changes in situational contexts to generate accurate responses to users, which may not be a critical requirement in other use contexts. Therefore, this study aims to develop a new scale to measure the perceived intelligence of AI assistants, specifically in the travel industry context.

Scale Development and Validation

This study sought to develop a scale of perceived intelligence of AI assistants to measure the key dimensions that constitute users’ perceived intelligence of AI assistants. To achieve this goal, the study followed the procedures suggested by scholars from different fields, including management, marketing, sociology, and psychology (Bagozzi et al., 1991; Churchill, 1979; Hinkin, 1998; Hollebeek et al., 2014), with particular attention to the processes applied in tourism and hospitality research (e.g., Dedeoğlu et al., 2020; Kim et al., 2022). This paper adopted a multi-study method to develop and validate the scale. The procedure consisted of four studies involving four different samples: item generation, purification of the measurement scale, evaluation of the latent structure, and nomological validation (see Figure 2).

Figure 2. Scale development process.

Study 1: Item Generation

An initial pool of potential items was generated using both inductive and deductive approaches (Varshneya & Das, 2017). In the deductive approach, the items were derived from the findings of a systematic literature review (covering 18 papers) on the conceptualization of factors influencing users’ adoption and usage intention toward AI assistants (Ling et al., 2021). The inductive approach included in-depth interviews with 11 industry experts (Founder/Co-Founder/Managing Director of AI/chatbot companies or AI developers) and two intensive in-person workshops (with 31 participants in total) exploring the factors influencing AI assistant implementation and design. Each workshop included three focus group discussions with 5 to 6 participants in each group. Participants were recruited through a combination of purposive and snowball sampling methods. Participants were stakeholders from hotels, restaurants, tech companies, and academia, as well as general users, who worked in the fields of tourism, hospitality, and/or AI technology innovations in organizations based in the United Kingdom. The participants were chosen based on the sector that they were working in and could represent, giving access to a diverse group with varying levels of experience and perspectives (e.g., tourism and hospitality service providers, technology developers, customers/users) related to the use of AI assistants in travel services. Interview transcripts were analyzed, and potential items were identified through a combination of different methods, including vocabulary-based extraction, word frequency-based extraction, syntactic relation-based extraction, and topic-based extraction (Hernández-Rubio et al., 2019).
This procedure generated an initial list of 26 items (see Table 1), categorized into the following three dimensions: conversational intelligence (i.e., reflecting learning ability, natural language processing ability, pro-activeness, logical reasoning, awareness of physical/virtual world, and autonomy), information quality (i.e., accuracy, completeness, and currency), and anthropomorphism (i.e., reflecting the social and emotional psychological aspects).

Table 1. Item Sources.

Item deleted for the following exploratory factor analysis.

Humans tend to measure intelligence through accomplishments that demonstrate competence and capabilities/skills. However, intelligence and competence are distinct concepts. Intelligence is primarily related to knowledge, a (state of) high mental and cognitive capacity, while competence refers to the ability to apply that knowledge to practice effectively. Therefore, when evaluating the intelligence of AI assistants, it is crucial to consider both aspects of the system separately. As can be seen from Table 1, a set of conversational intelligence indicators primarily measure intelligence/cognitive ability in understanding human inputs/conversation context, whilst a set of information quality indicators primarily reflect the competence of the AI assistant in providing accurate and relevant information to the user. By considering both intelligence and competence, we can develop a more comprehensive understanding of the capabilities of AI assistants.

Conversational Intelligence

This study defines conversational intelligence as the degree to which AI assistants can understand users’ natural language inputs and conduct the conversation smoothly in a natural, proactive, and timely manner (Dinan et al., 2020). Sousa et al. (2019) proposed a set of architectural elements that should be considered when building intelligent interaction with chatbot technologies, including flow development, natural language understanding, natural language processing, conversational contexts, conversational memory, and machine learning. Natural language understanding and processing are core features and are now becoming mandatory criteria for assessing intelligent AI assistants. Various studies have demonstrated the importance of natural language understanding/processing ability in building an intelligent conversation with AI assistants (Chaves & Gerosa, 2021; Følstad et al., 2018). Smith et al. (2020) argued that being knowledgeable, engaging, and empathetic are all desirable general qualities in a conversational agent, and instead of being specialized in one single quality, a good open-domain conversational agent should be able to seamlessly blend them all into one cohesive conversational flow. Therefore, it could be argued that the intelligence of the conversation itself (focusing on natural language understanding/processing, machine learning, conversation context recognition and memory) is a key dimension constituting users’ general perception of the intelligence of AI assistants. Notably, human language and perception are context-dependent (Barbour, 1999). For AI assistants, linguistic and perceptual understanding are active processes strongly influenced by user expectations, purposes, and interests. The cognitive nature of perceptions of AI assistants’ intelligence will impact users’ evaluation of their performance and usefulness as it relates to how effective an AI assistant is. 
It is thus vital to understand how developmental and cognitive psychologies affect human responses to AI assistant behaviors and how AI assistant systems are expected to behave so that people can easily understand them and communicate smoothly and naturally.

Information Quality

The metric of information quality was first proposed by DeLone and McLean’s (1992) Information System Success model to examine what constitutes successful information systems. Setia et al. (2013, p. 268) defined information quality as “the accuracy, format, completeness, and currency of information produced by digital technologies.” Adapted from this definition, information quality in this study refers to the degree to which the information/recommendations provided by AI assistants are useful and effectually meet users’ expectations (Setia et al., 2013). While conversational intelligence is related to the conversation/interaction process (i.e., competence, responsiveness), information quality is mainly about the interaction outcome/content (i.e., intellectual value). As previously stated, the quality of output is also a vital dimension in forming intelligent assistants’ intelligence. Previous research (Følstad & Skjuve, 2019; Laban, 2021) highlighted the importance of high-quality content, such as quality of information, transparency, and providing adequate answers, in generating users’ positive perceptions. Hence, information quality is selected as a dimension of the perceived intelligence scale in this study. In the travel industry and in the context of AI assistants as an advisor/communication interface, information provided by AI assistants is vital in helping users obtain knowledge, identify options/alternatives, and make decisions. Hence, it can be predicted that information quality plays an even more critical role in the travel context, and users are likely to consider an AI assistant as intelligent if the system provides high-quality information.

Anthropomorphism

A key feature of AI assistants is that they aim to appear human-like while interacting with users (Daniel et al., 2019; Hill et al., 2015). While conversational intelligence and information quality are related to system competence and interaction content, the subjective aspects of intelligence are associated with the social dimensions and capabilities of AI systems, such as the capacity to demonstrate and respond to emotions and social information, as well as to understand and react to individual contexts (Laban, 2021). Anthropomorphism, which refers to the attribution of human-like psychological traits, emotions, intentions, motivations, and goals to non-human entities (AI assistants for the scope of this study) (Melián-González et al., 2021), is believed to be a key characteristic that distinguishes AI from non-AI applications (Troshani et al., 2021). Studies have found that users attribute human-like characteristics to AI assistants that display anthropomorphic features, leading to higher perceptions of their intelligence and ability to facilitate human-like interactions. For instance, Duffy (2003) showed that anthropomorphism contributes to a person’s increased perception of social capabilities, which is essential in contributing to people’s perception of another’s intelligence.

Humans have a strong tendency to anthropomorphize almost everything they encounter, including computers and robots; in other words, when humans see robots, an automatic process starts that tries to interpret the system as human. This tendency can affect users’ attitudes and acceptance of bots as a social category (Złotowski et al., 2015). It has been shown that such psychological essentialism is associated with prejudice and perceptions. Research has examined the appearance design of AI systems, robots, and chatbots and the attributes of human nature, including friendliness, emotion, and passion. Duffy (2003) argued that employing successful degrees of anthropomorphism with cognitive ability in social robotics will provide the mechanisms whereby the robot could successfully pass the age-old Turing Test for intelligence assessment. In our study, we argue that not only the visual design of a humanlike AI assistant but also its behavioral attributes can influence the impressions formed by human users. We emphasize the perception that AI assistants can act humanly and have the capacity to process human languages and understand users’ psychological needs. In Rijsdijk et al.’s (2007) scale development, humanlike interaction is a key dimension of product intelligence. It can be argued that people tend to interact with AI assistants that are capable of conducting conversations in a “human” way and perceive that an “intelligent” AI assistant would most likely be humanlike.

Item Refinement and Evaluation

To fine-tune the items and ensure face validity, the research team approached tourism experts to review the initial 26 items. In April 2020, a panel of 20 experts (PhD students and academic staff, from lecturers to professors, at a university in the United Kingdom) whose research focused on technological innovation in tourism, hospitality, and events, including AI research, and/or who had experience using scale development methods in tourism research, was given the conceptual definition of the construct dimension and asked to retain the items based on clarity of wording and relevance. The reviewers assessed how well the items represented the construct dimension definition by rating each item as “clearly representative,” “somewhat representative,” or “not representative.” All items were scored above the mean value in terms of representativeness and were thus retained (Haynes et al., 1995). Experts suggested wording modifications (i.e., changing “our” to “the,” “I” to “the user”) and the removal of two items (CI4: AI assistant is smart; CI6: AI assistant acts like human), indicating concerns about clarity. To support the face validity of the scale, these two items were removed, resulting in 24 items.

Study 2: Exploratory Factor Analysis and Scale Purification

To purify the perceived intelligence AI assistant scale, a pilot test was conducted in October 2020 via an online questionnaire distributed through Amazon Mechanical Turk to US residents who have traveled (overnight stay) in the past 24 months. The 24 items were converted into the questionnaire with a 5-point Likert-type scale ranging from 1 (strongly disagree) to 5 (strongly agree). To ensure each participant was familiar with AI assistants and had a basic understanding of how AI assistants could be used in the context of travel, a YouTube video (https://www.youtube.com/watch?v=8195qemqp-0) depicting a typical interface example between a user and Google Assistant in the context of hotel booking was embedded in the survey as an introduction. Respondents were required to watch the video for 150 s before the questions were displayed. Lastly, demographic questions were presented to facilitate the profiling of the participants.

This procedure yielded a total of 201 usable observations after the deletion of incomplete and unqualified responses. Of these respondents, 54.2% were male. In total, 184 participants (91.5%) had used at least one type of AI assistant in the past, and more than half of the participants (62.7%) had used AI assistants for travel purposes. Table 2 presents the participants’ demographics.

Table 2. Participant Demographics (Study 2).

Exploratory Results

To examine the underlying dimensions of the perceived intelligence AI assistant scale, exploratory factor analysis using principal axis factoring with varimax rotation method via SPSS 25.0 (Soulard et al., 2021) was carried out, and the results are presented in Table 3. The Kaiser-Meyer-Olkin result showed a value of 0.928, indicating an adequate sample for conducting the factor analysis, further substantiated by a significant result on Bartlett’s Test (

Table 3. Exploratory Factor Analysis Results (

Extraction method: Principal axis factoring.

Item deleted for composite confirmatory analysis.

Afterward, a 23-item scale emerged. Three factors were formed from the 23 items, representing conversational intelligence, information quality, and anthropomorphism. Kaiser’s criterion was employed to determine the optimal number of factors, with an auxiliary interpretation based on scree plots and the percentage of extracted variance. The factorial structure of the three dimensions explained 54.224% of the variance. Subsequently, Cronbach’s alphas were computed for each factor to determine scale reliability. All values were relatively high, ranging from 0.879 to 0.917, and thus greater than the suggested threshold of 0.7 (Nunnally & Bernstein, 1994), providing evidence for the reliability of the scale using this factorial structure.
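For readers who wish to reproduce the reliability check, Cronbach’s alpha for one factor can be computed directly from the respondent-by-item score matrix. The following is a minimal sketch rather than the procedure run in SPSS for this study; the function name `cronbach_alpha` and the small response matrix are hypothetical and serve only as an illustration.

```python
import numpy as np


def cronbach_alpha(scores):
    """Cronbach's alpha for a (respondents x items) matrix of item scores."""
    scores = np.asarray(scores, dtype=float)
    k = scores.shape[1]                          # number of items in the factor
    item_var = scores.var(axis=0, ddof=1)        # sample variance of each item
    total_var = scores.sum(axis=1).var(ddof=1)   # variance of the summed score
    return (k / (k - 1)) * (1.0 - item_var.sum() / total_var)


# Hypothetical 5-point Likert responses (6 respondents, 3 items); not study data.
demo_scores = np.array([
    [4, 5, 4],
    [2, 2, 3],
    [5, 5, 5],
    [3, 3, 2],
    [4, 4, 4],
    [1, 2, 1],
])

alpha = cronbach_alpha(demo_scores)
print(f"alpha = {alpha:.3f}; meets 0.7 threshold: {alpha >= 0.7}")
```

The same function would be applied separately to the items of each of the three factors.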

Study 3: Evaluation of the Latent Structure

This phase of the scale development aimed to confirm the latent structure obtained in phase 2 by conducting a composite confirmatory analysis with a new set of data. A questionnaire with 23 items along with demographic questions was posted on Amazon Mechanical Turk (the built-in Qualification function was used to prevent repeat participation from Study 2). The data collection followed the same procedure as Study 2. After removing incomplete and unqualified responses, 202 responses were used. Within the sample, 47% were female, and most respondents (84.7%) were between 25 and 54 years old, with 11.4% older than 54 years and 4% younger than 25 years. Most respondents had used AI assistants in the past (93.6%), and 74.3% of respondents had used AI assistants for travel purposes. Participants’ demographics are shown in Table 4.

Table 4. Participant Demographics (Study 3).

Composite Confirmatory Analysis

The validity and reliability of the perceived intelligence AI assistant scale were evaluated by performing composite confirmatory analysis in SmartPLS version 4.0. Table 5 reports the results of reliability and validity assessment. This study used Cronbach’s alpha coefficient (Cronbach, 1951; critical acceptance value = .7) and Composite Reliability Index (Fornell & Larcker, 1981; threshold value = .7) to evaluate the internal consistency reliability of the measurement instrument. The Cronbach’s alpha values and composite reliability index for all three constructs met the abovementioned threshold.
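As an illustration of the second reliability index, composite reliability is commonly computed from standardized loadings as the squared sum of loadings divided by that quantity plus the summed error variances. The sketch below assumes standardized loadings, so that each item’s error variance equals one minus its squared loading; the function name and loading values are hypothetical and are not taken from the SmartPLS output of this study.

```python
import numpy as np


def composite_reliability(loadings):
    """Composite reliability from standardized outer loadings of one construct."""
    lam = np.asarray(loadings, dtype=float)
    squared_sum = lam.sum() ** 2             # (sum of loadings)^2
    error_var = (1.0 - lam ** 2).sum()       # summed error variances (assumes standardized items)
    return squared_sum / (squared_sum + error_var)


# Hypothetical loadings for a three-item construct; not study data.
demo_loadings = [0.8, 0.8, 0.8]
cr = composite_reliability(demo_loadings)
print(f"composite reliability = {cr:.3f}; meets 0.7 threshold: {cr >= 0.7}")
```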

Table 5. Composite Confirmatory Analysis Results for Refined Measurement Items (

Extraction method: Principal axis factoring.

Item deleted for Main Study.

Construct validity was evaluated based on convergent and discriminant validity. The factor loadings of individual items ranged from 0.502 to 0.830. According to Awang et al. (2015), the factor loading for every item should ideally exceed 0.6. Therefore, IQ4, IQ9, IQ11, IQ12, and AN4 were removed. Additionally, the average variance extracted (AVE) of each construct was above the 0.5 cut-off point recommended by Fornell and Larcker (1981), supporting convergent validity.
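The screening logic described above can be sketched as follows: items whose loading does not exceed 0.6 are dropped, and the AVE of the remaining items is the mean of their squared standardized loadings. The item labels and loading values here are hypothetical, and in practice the model would be re-estimated after removing items rather than reusing the original loadings.

```python
import numpy as np

LOADING_CUTOFF = 0.6   # ideal minimum loading (Awang et al., 2015, as cited above)
AVE_CUTOFF = 0.5       # Fornell and Larcker (1981) cut-off, as cited above


def screen_items(loadings, cutoff=LOADING_CUTOFF):
    """Keep only items whose loading exceeds the cutoff."""
    return {item: lam for item, lam in loadings.items() if lam > cutoff}


def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings of one construct."""
    lam = np.asarray(list(loadings), dtype=float)
    return float(np.mean(lam ** 2))


# Hypothetical items and loadings for a single construct; not study data.
demo = {"X1": 0.82, "X2": 0.74, "X3": 0.55, "X4": 0.68}

retained = screen_items(demo)
ave = average_variance_extracted(retained.values())
print(sorted(retained), f"AVE = {ave:.3f}", ave > AVE_CUTOFF)
```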

Furthermore, this study assessed the Heterotrait-Monotrait ratio to confirm the discriminant validity. The results range from 0.085 to 0.844 (i.e., below the suggested 1.0), indicating the establishment of discriminant validity (Hair et al., 2014; Henseler et al., 2015). Taken together, the preceding statistical tests suggest that the items were valid and reliable measures of the latent constructs.
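For two constructs, the Heterotrait-Monotrait ratio can be computed from the item correlation matrix as the mean between-construct item correlation divided by the geometric mean of the two average within-construct item correlations. The sketch below follows that definition using raw (not absolute) correlations; it is not the SmartPLS implementation, and the function name, index lists, and correlation matrix are hypothetical. Each construct needs at least two items.

```python
import numpy as np


def htmt(corr, idx_a, idx_b):
    """HTMT ratio for two constructs given an item correlation matrix.

    idx_a and idx_b list the row/column positions of each construct's items.
    """
    corr = np.asarray(corr, dtype=float)

    # Mean correlation between items of different constructs (heterotrait).
    hetero = corr[np.ix_(idx_a, idx_b)].mean()

    def mean_within(idx):
        # Mean correlation among distinct items of the same construct (monotrait).
        block = corr[np.ix_(idx, idx)]
        return block[np.triu_indices(len(idx), k=1)].mean()

    return hetero / np.sqrt(mean_within(idx_a) * mean_within(idx_b))


# Hypothetical correlations: items 0-1 form construct A, items 2-3 form construct B.
demo_corr = np.array([
    [1.00, 0.64, 0.28, 0.28],
    [0.64, 1.00, 0.28, 0.28],
    [0.28, 0.28, 1.00, 0.49],
    [0.28, 0.28, 0.49, 1.00],
])

ratio = htmt(demo_corr, [0, 1], [2, 3])
print(f"HTMT = {ratio:.3f}")
```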

Theoretical Background and Hypotheses Development

Information System Success Model

DeLone and McLean’s Information System Success Model, initially developed in 1992, is a well-accepted theory for explaining usage behavior and consists of six variables: system quality, information quality, information system use, user satisfaction, individual impact, and organization impact. DeLone and McLean (2003) later updated the model by incorporating one new variable: service quality. While other models, such as the Technology Acceptance Model and the Unified Theory of Acceptance and Use of Technology, are widely applied in the information system literature, the updated Information System Success Model has been considered most appropriate to assess the success of AI assistant systems in various use contexts, including e-commerce (Ashfaq et al., 2020), banking (Trivedi, 2019), e-government (Tisland et al., 2022), and tourism (Pereira et al., 2022).

To test the predictive validity of the perceived intelligence of AI assistants scale in the next step, and based on the data obtained in the previous phases, this study applies the updated Information System Success Model to develop hypotheses because its two critical technical aspects (i.e., system quality/conversation and information quality) are prominent in assessing the interactions between users and AI systems. Indeed, past studies claimed that system quality and information quality are crucial determinants of any information system platform’s success (e.g., Gao et al., 2015; Veeramootoo et al., 2018). Accordingly, considering AI assistants as an information system application, the current study incorporates two quality dimensions (system quality and information quality) and one impact dimension (usage intentions) to examine how system quality and information quality can shape users’ perceptions of intelligent interactions with AI assistants and their effects on behavioral intentions.

Although system quality and information quality in the Information System Success Model represent key technical aspects that determine information system success, simply combining these two aspects to examine AI assistants’ intelligence and its effects on users’ behavioral intentions may not be sufficient to explain what is distinctive about the AI-based chatbot service context, particularly the perceptions arising from a social perspective, because the Information System Success Model treats all technologies alike, regardless of specific design characteristics. As a result, this model may be inadequate to capture the extent to which the defining features of the technology under consideration influence users’ attitudes toward AI assistants in the travel decision-making context. Notably, the interaction systems between users and AI assistants rely largely on the conversation/dialog quality, and AI assistants are known for their intelligent architecture designed for productive conversations with users (Moussawi et al., 2020).

Generally, system quality tends to concentrate on the overall performance (technical and functional aspects of an information system) and reliability of the system itself; however, interactions with AI assistants, which are open domain and sometimes include task-oriented/goal-directed dialog systems (e.g., restaurant booking), need to classify user intents, recognize and extract entities mentioned by the users from a knowledge base and reasoning, and update the information and beliefs about user intents after getting new input (Burtsev & Logacheva, 2020). Such systems are often modular and focus on user input or interactions, engaging in contextually relevant conversations with users and emphasizing the “interpersonal” aspect of interactions. Especially in the travel industry, travelers’ interactions with AI assistants are primarily conversational. Therefore, while integrating system quality from the Information System Success Model, which focuses on the technical and functional aspects of an information system, we adapted the model to the context of AI assistants in travel by taking a user-, interaction-, and context-centric approach and using conversation intelligence as the representation of system quality in the conceptual model of this study. In addition, we added a technology-specific aspect: anthropomorphism. The updated Information System Success Model indeed comprises a commercial element (service quality); in the context of this study, where services are delivered by AI assistants, users expect AI assistants to be able to provide customer service as good (i.e., intelligent) as that provided by human beings. It has been suggested that an anthropomorphic representation of intelligent agents will allow for a rich set of easily identifiable behaviors, which are important signals for social interactions (S. Han & Yang, 2018; Kiseleva et al., 2016), and research has shown that agents with human-like characteristics tend to be evaluated higher on intelligence (King & Ohya, 1996). Thus, it can be suggested that the more human-like the AI assistant is designed to be, the more likely users are to consider the AI assistant capable of delivering “human-to-human”-like customer service. Therefore, the current study includes a social element (anthropomorphism) in conceptualizing the research model.

Overall, while past research on the AI system evaluation scale mainly focused on intelligence competence, this study posits that the quality of information/output is also key in measuring users’ perceptions of AI assistants and in assisting users in making travel decisions. In addition, to highlight the importance of human nature in forming users’ perceptions of intelligent AI assistants, we also included anthropomorphism as a social element in the scale. Therefore, this study aims to contribute to travel and information system research by integrating anthropomorphism, system quality, information quality and individual use from the Information System Success model to generate new knowledge in understanding AI assistants in travel.

Hypotheses Development

Prior research found perceived intelligence to be one of the key antecedents of the intention to adopt AI applications such as personal intelligent agents (Moussawi et al., 2020) and hotel service robots (I. P. Tussyadiah & Park, 2018). In travel research, travel behavioral intentions are widely investigated through the willingness of travelers to visit and/or revisit, to purchase or repurchase, their word-of-mouth recommendations, and their feedback to travel service providers (Tavitiyaman et al., 2021). Travel organizations employ AI assistants to provide users with travel information and customer support, aiming to enhance staffing efficiency and automate the booking process. Hence, this study focuses on the intention to use AI assistants to search for travel information and the intention to make travel bookings as the representation of “usage intention” integrated from the Information System Success Model.

Information search in travel refers to travelers’ efforts (i.e., time spent) in obtaining travel information (Schul & Crompton, 1983). Tavitiyaman et al. (2021) argued that when travelers obtain either positive or negative information from various sources online, the information can encourage or discourage their decision-making process and influence their travel behavior. In the context of AI assistants, numerous businesses have employed AI assistants as advisors for online customer service, providing customers with information and aiding them in the decision-making process. According to Fitzsimons et al. (2008), a highly responsive AI assistant can produce a sense of being agile, intelligent, and sophisticated while searching for information. It is plausible that an intelligent and well-implemented AI assistant may enhance positive experience and consequently result in increasing users’ confidence in decision-making. Past studies have investigated the impact of AI assistants triggering consumer purchase intention in e-commerce (Lo Presti et al., 2021; McLean et al., 2020). Pillai and Sivathanu (2020) revealed that AI assistants are useful in providing real-time tailored solutions to make travel bookings and, consequently, the perceived intelligence of AI assistants leads to the adoption intention of AI assistants in travel. Therefore, this study posits the following hypotheses:

H1.

H2:

Furthermore, considering the aforementioned three dimensions of perceived intelligence, it can be proposed that conversational intelligence, information quality, and anthropomorphism directly influence travelers’ intention to search for travel information and book travel services using AI assistants.

Past research demonstrated the attributes of

H3:

H4:

H5:

H6:

Finally,

H7:

H8:

In addition to information quality and system quality, the Information System Success Model includes other constructs; however, they were not included in this study as the aim was not to identify comprehensively what contributes to AI Assistant adoption and user satisfaction. Instead, the above hypotheses were developed to test the predictive validity of the perceived intelligence scale.

Study 4: Nomological Validity

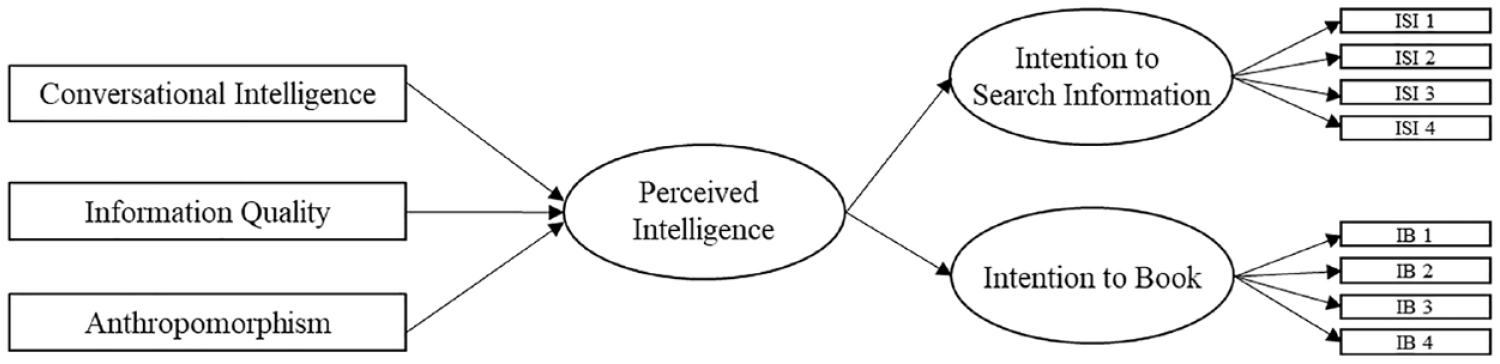

To assess nomological validity, defined as the degree to which predictions in a formal theoretical network are confirmed (Hagger et al., 2017), a theoretical relationship was anticipated between each dimension of the perceived intelligence of AI assistants and behavioral intentions of using AI assistants for travel, and it was tested using a structural equation modeling technique. Two constructs, intention to book (IB) and intention to search for information (ISI), were included in the model. The four items under intention to book (IB) and the four items under intention to search for information (ISI) were adapted from studies by Dodds et al. (1991) and Xu and Schrier (2019).

After investigating inter-construct correlations, predictive validity was assessed to determine whether scores related to perceived intelligence could predict scores on booking intention and intention to search for information. The structural equation model was estimated by the partial least squares method due to the exploratory nature of the study. Following Wang et al. (2019), a two-stage approach was adopted to estimate the model in SmartPLS 4.0. The first-stage model (see Figure 3) was assessed based on reflective constructs; the second-stage model applied formative and reflective construct measures. In particular, perceived intelligence was evaluated as a formative construct (formed by conversational intelligence, information quality, and anthropomorphism) in the second-stage model (see Figure 4). Attention check questions were used in the survey to prevent data contamination.

First-stage Model.

Second-stage Model.

Description of Study 4 Respondents

The data collection followed a similar procedure to the previous studies, resulting in 643 usable responses. Data were collected in December 2020 using two platforms, with 203 usable responses from Amazon Mechanical Turk (the built-in Qualification function was used to prevent repeat participation from previous studies) and 440 usable responses from a survey panel run by a professional market research company. The purpose of combining these platforms was to avoid potential common method variance bias (Chang et al., 2020) and to benefit from a larger sample pool with participants from more diverse backgrounds. Table 6 presents the participant demographics.

Participants Demographics (

Composite Confirmatory Analysis Results of Study 4

A composite confirmatory analysis was performed, and Table 7 reports the reliability and validity assessment results. The Cronbach’s alpha values for all five constructs are above the acceptance value of .7, with their composite reliability over .8 (threshold value = .7) (Fornell & Larcker, 1981). In addition, construct validity was evaluated based on convergent and discriminant validity. The factor loadings of individual items range from 0.767 to 0.941 (greater than the suggested 0.6 cut-off). The five constructs each had an average variance extracted value above the 0.5 cut-off point recommended by Fornell and Larcker (1981), indicating strong convergent validity. Discriminant validity was examined by assessing the Heterotrait-Monotrait ratio, with all values below the 1.0 threshold, indicating the establishment of discriminant validity (Hair et al., 2014; Henseler et al., 2015). Overall, these results confirmed the validity and reliability of the latent constructs. Furthermore, the standardized root mean square residual values for the first- and second-stage models are 0.04 and 0.08, respectively, meeting the threshold of 0.08 (Henseler et al., 2016) and showing that the proposed model had a good fit.
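As an illustration of the two reliability statistics reported above, the following sketch computes Cronbach’s alpha from raw item scores and composite reliability from standardized loadings using their standard textbook formulas. The scores and loadings are hypothetical and do not come from the study; the function names are illustrative assumptions.

```python
from statistics import variance
from typing import Sequence


def cronbach_alpha(items: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha; items[k] holds every respondent's score on item k."""
    k = len(items)
    if k < 2:
        raise ValueError("at least two items are required")
    # Total score per respondent across all items.
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(variance(column) for column in items)
    return (k / (k - 1)) * (1 - item_variance_sum / variance(totals))


def composite_reliability(loadings: Sequence[float]) -> float:
    """Composite reliability from standardized loadings of one construct."""
    loading_sum_sq = sum(loadings) ** 2
    error_variance = sum(1 - lam ** 2 for lam in loadings)
    return loading_sum_sq / (loading_sum_sq + error_variance)


# Hypothetical responses: three items answered by five respondents.
example_items = [
    [1.0, 2.0, 3.0, 4.0, 5.0],
    [2.0, 2.0, 3.0, 5.0, 5.0],
    [1.0, 3.0, 3.0, 4.0, 4.0],
]
alpha = cronbach_alpha(example_items)
cr = composite_reliability([0.8, 0.8, 0.8])  # hypothetical loadings
print(round(alpha, 3), round(cr, 3))
```

Both statistics would then be compared against the .7 threshold mentioned in the text.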

Composite Confirmatory Analysis Results for Main Study Measurement Items (

Extraction method: Principal axis factoring.

Assessing Second-Order Formative Hierarchical Model

In assessing the second-order model, as shown in Table 8, this study confirmed the suitability of modeling perceived intelligence as a second-order latent construct formed by three first-order latent constructs: perceived intelligence-conversational intelligence, perceived intelligence-information quality, and perceived intelligence-anthropomorphism. The composite confirmatory analysis results showed that all the lower-order underlying constructs weighted significantly (

Composite Confirmatory Analysis of Formative Second-order Latent Factors.

Moreover, the coefficients of determination (

Structural Model and Hypotheses Testing

As suggested by Hair et al. (2017), the bootstrapping routine was performed using a sample of 5,000 to calculate the

Estimated Path Coefficients, Effect Size: First-Stage and Second-Stage Model (

In addition to evaluating

Other than evaluating the predictive power of the model using

Furthermore, the hypothesis testing results implied that the perceived intelligence of AI assistants positively predicts future behavior with AI assistants. In particular, perceived intelligence was found to positively influence users’ intention to search for travel information and to make travel bookings through AI assistants (H1 and H2 were supported). This aligns with previous studies (Coskun-Setirek & Mardikyan, 2017; Kuberkar & Singhal, 2020), in which users are likely to adopt AI assistants if the system can understand users’ queries and the actual performance can meet user needs. It also supports Pillai and Sivathanu’s (2020) findings that perceived intelligence positively influences the adoption of AI assistants in tourism, specifically in travel planning, whilst demonstrating a positive impact of perceived intelligence on travel booking intentions.

Positive relationships were also found between each dimension of perceived intelligence and behavioral intentions. First, conversation intelligence had a significant influence on intention to book travel and intention to search for travel information (H3 and H4 were supported), consistent with prior research arguing for a positive relationship between AI assistant/chatbot conversation intelligence and usage intentions (Melián-González et al., 2021). Second, information quality positively affects users’ intention to search for travel information using AI assistants and their intention to book travel with AI assistants (H5 and H6 were supported). This illustrates that if AI assistants can provide good-quality travel information (i.e., accurate, consistent, reliable, timely, and tailored) or travel recommendations, users will be willing to search for travel information from AI assistants when planning trips, and they are likely to make direct travel bookings through the conversation with AI assistants. This is also in line with extant research (Ashfaq et al., 2020; Forsgren et al., 2016; Wixom & Todd, 2005) showing that information quality positively influences user satisfaction and behavioral intentions, including booking intention (Hwang et al., 2018; Koivumäki et al., 2008). Third, this study showed that the anthropomorphism of AI assistants has direct and positive effects on both users’ intention to search for travel information and users’ intention to book travel (H7 and H8 were supported), which is consistent with many past studies suggesting that anthropomorphism plays a positive role in shaping consumers’ (re)use intentions of AI applications/AI assistants (Lv et al., 2021; Moriuchi, 2021), purchase intentions through AI assistants/chatbot commerce (M. C. Han, 2021), and chatbot adoption intention in travel planning and travel booking (Pillai & Sivathanu, 2020).

Conclusion and Implications

Summary of Findings

As AI assistants are increasingly implemented in frontline services, understanding what constitutes intelligent interactions with AI assistants that foster users’ intentions and behavior is vital to travel organizations. This study conceptualized the perceived intelligence of AI assistants by clarifying the unique characteristics of AI assistants and their interactions, and developed the associated scale following a mixed-methods approach. The dimension construction was based on a systematic review, 11 semi-structured interviews with AI assistant developers, and six focus group discussions with travel practitioners, while the scale development, validation, and nomological test were based on 1,046 survey responses collected from end users in three separate studies. This integrated method with multiple data sources enables us to understand the key characteristics of AI assistants’ intelligence from both users’ and organizations’ perspectives, avoiding the bias emanating from a single view.

Specifically, in Study 1, the results from a systematic review and open codes from interviews and focus group discussions were gradually clustered into axial and selective codes, forming a three-dimensional perceived intelligence scale: conversational intelligence, information quality, and anthropomorphism. In Study 2 and Study 3, the items for these three dimensions were developed in three phases. After checking the content validity of the initial scale, the scale was refined based on the factor loadings, reliability, and validity of three rounds of international survey data. Consequently, an 18-item perceived intelligence scale was obtained with good reliability and validity. First, six items of conversational intelligence represent attributes of natural language understanding/processing ability, conversation memory, smooth conversation flow, context recognition, and natural conversation logic. Second, nine information quality items represent information attributes that meet users’ needs and are accurate, relevant, sufficient, up-to-date, reliable, credible, justifiable, and personalized. Third, three anthropomorphism items represent attributes of human resemblance, natural communication, and the ability to understand users. All three dimensions (conversational intelligence

Theoretical Implications

This paper is the first to develop and validate a scale of perceived intelligence of AI assistants for travel, providing valuable contributions to the tourism and hospitality literature and human-chatbot interaction literature in several ways. First, this paper fills the knowledge gap regarding the dimension classification of AI assistants’ perceived intelligence. The existing measures of perceived intelligence are mainly based on user perceptions of service robotics (intelligence partly determined by the perceptions of physical appearance, movements, facial features, and mental capacities), which cannot be fully applied to AI assistant technologies/services (intelligence largely dependent on the textual characteristics of the dialog). This is especially true in travel contexts involving complicated planning and booking processes. This paper developed and refined a new reliable and valid scale tailored explicitly to the travel industry to measure perceived intelligence of AI assistants in travel, which includes three dimensions: conversational intelligence, information quality, and anthropomorphism. The measurements of perceived intelligence of AI assistants scale reflect the unique technical characteristics of AI assistants, such as natural language understanding/processing and machine learning capabilities. For example, the conversation/interaction process (dialog development) and conversation outcome (information quality) are important dimensions in shaping users’ perceptions of the intelligence of AI assistants. This is in line with various past research arguing the critical role of capacities such as natural language understanding/processing, conversation flow and memory in building up intelligent conversations with AI assistants and users’ attitudes/evaluations toward the intelligence (Følstad et al., 2018; Sousa et al., 2019). This scale provides a holistic understanding of interactions/conversations between humans and AI assistants.

Second, in contrast to existing scales designed for broader marketing or business contexts (e.g., Israfilzade, 2021; Moussawi & Koufaris, 2019), the innovation in the conceptualization of our scale lies in its tailored focus on the travel industry. Due to the intangibility and heterogeneity of tourism products/services, the interaction with AI assistants in travel is unique. It makes travel decision-making more dynamic and complex, involving a range of interconnected elements such as transportation, accommodations, and experiences. Travelers conduct more extensive searches for information than consumers looking to purchase tangible goods (Uthaisar et al., 2023). AI assistants in travel must adapt to this complexity, providing context-dependent information/assistance. Our scale was purposefully crafted to capture the intricacies and specific needs of travelers, their interactions with AI assistants in travel planning and booking contexts, and the requirements of travel service providers. While previous scales may assess generic perceptions of AI intelligence, our perceived intelligence AI assistants scale, which focuses on conversational intelligence, information quality, and anthropomorphism, is tailored to address the specific user expectations, experiences, and interactions of using AI assistants in the travel domain, aligning with the unique demands and dynamics of the tourism and hospitality sector. The dimensions and measurement items of our scale, grounded in the context of travel and derived from the tourism and hospitality stakeholders, distinctively delve into the travel-related dimensions of perceived intelligence of AI assistants, such as its ability to provide personalized travel recommendations, anticipate complex traveler needs, adapt to diverse cultural preferences, and facilitate travel booking processes. 
For instance, conversational intelligence gauges AI assistants’ capacity to understand traveler needs (related to a range of resources) and engage travelers in meaningful interactions during their entire complicated travel journeys (before-, during-, and post-travel), which is a highly critical dimension in the travel industry.

Third, whereas the extant literature has primarily investigated the role/characteristics of perceived anthropomorphism and perceived intelligence of AI assistants/service robots separately (Balakrishnan et al., 2022; Bartneck et al., 2009; Troshani et al., 2021), our work is a pioneer in investigating the formative relation between anthropomorphism and perceived intelligence of AI assistants in travel. We argued that users are likely to perceive AI assistants as intelligent if the AI assistants have human-like/anthropomorphic features (i.e., act like human beings). Hence, we proposed a formative relationship and empirically evaluated anthropomorphism as a critical design attribute of AI assistants that can form users’ perceptions of an AI assistant’s intelligence.

Fourth, the developed perceived intelligence of AI assistants scale provides usable measurement instruments for scholars to conduct future empirical research on user behavior when interacting with AI assistants. This study develops an integrated evaluation system of perceived intelligence, including system quality (conversational intelligence of the interaction itself) and information quality (outcome of the interaction), as well as users’ perception of human-like intelligence. Hence, the scale provides measurements for scholars in different disciplines, including those within the tourism and hospitality management fields, to evaluate the performance of AI assistants serving as a marketing or customer service tool.

Lastly, this study integrates the Information System Success Model to build a research model analyzing the factors constituting the perceptions of AI assistants’ intelligence and their impacts on travel information search and booking intentions. By doing so, it highlights the development of AI assistants through conversation intelligence, information quality, and anthropomorphic design. The well-established model provides a more comprehensive understanding of AI assistant services research, especially emphasizing anthropomorphism as a key social element in influencing users’ behavioral intentions. Current literature in technology-based services research has chiefly explored frameworks adapted from the Technology Acceptance Model, the Unified Theory of Acceptance and Use of Technology, and the Information System Success Model. This research moves ahead to provide additional insights into the Information System Success Model by also testing a social element (anthropomorphism) along with technical system and information factors in the context of AI assistants.

Practical Implications

This research provides valuable insights and guidelines to AI assistant developers as well as travel industry service providers/managers by highlighting the importance of considering system factors (conversation) and information factors (information quality) when designing AI assistant services and of employing social elements (anthropomorphism). First, the dimensions of the perceived intelligence scale play a vital role in different stages of AI assistant development. The dimensions can be used as a reference and checklist to identify key factors of intelligent interaction with an AI assistant, to design key attributes of the product (AI assistant), and to evaluate the success of its implementation (i.e., interaction). Second, travel businesses can use these scale dimensions as a reference to assess the competence of an AI assistant as an “employee” providing high-quality customer service and/or as a marketing tool to enhance business performance. This research found that conversational intelligence and information quality are key attributes forming users’ perceptions of the intelligence of AI assistants, which positively affects travelers’ information search and travel booking intentions. That is, when travelers seek local recommendations or require language support, itinerary changes, or real-time assistance, AI assistants with strong conversational capabilities can provide information better tailored to travelers’ needs. Therefore, AI assistant developers and travel service providers such as online travel agencies, hotels, airlines, and tourism attractions need to understand customer needs better and must ensure that AI assistants can understand users’ needs/intents and provide reliable, up-to-date, context-dependent, accurate, relevant, sufficient, and tailored information to enhance users’ travel planning process. For example, when travelers search for visa/flight information for a trip, online travel agencies must ensure that their AI assistants can provide accurate and up-to-date information (e.g., the latest travel restrictions and visa requirements) and allow travelers to book flight tickets and hotels directly through the conversation, while at the same time remembering users’ enquiry history, ID/ticket number/booking information, etc. If users are unable to obtain the information they want from the AI assistant, they may consider such a system unintelligent and useless, which will harm customer satisfaction, brand image, and reputation and, in turn, discourage customers from using it further (Ashfaq et al., 2020).

Moreover, this research points to the value of incorporating more human-like characteristics in AI assistant design and implementation. This study found that anthropomorphism forms users’ perception of the intelligence of AI assistants and that it significantly influences travelers’ intentions to search for travel information and make travel bookings. Hence, it is suggested that AI assistant designers, managers, and service marketers should make use of important and contextually relevant anthropomorphic design elements (e.g., gender, appearance, and personality traits reflected by attitude, tone, language, and communication style) to create solutions for travel businesses that will resonate with customers’ needs and ultimately foster usage and facilitate travel bookings. It is worth noting that anthropomorphic design is not only about making AI assistants physically resemble human beings but also about giving them the cognitive ability to understand users’ needs in different situations and to answer complicated queries that a human agent can solve. In today’s competitive business market, where customers look for personalized solutions, it is imperative for businesses to utilize AI assistants as a powerful solution to assist customers like well-trained and thoughtful marketers, salespeople, or managers. In addition, the study provides insights into how customers perceive the intelligence of AI assistants in the travel industry, which can inform the development of more effective marketing and communication strategies. For instance, the study found that customers perceive AI assistants to be more intelligent when they provide accurate and up-to-date travel information, suggesting that emphasizing the accuracy and reliability of AI assistants in marketing materials of travel products may be an effective strategy to improve customers’ perceptions of AI assistants’ intelligence and enhance travel booking intentions.

Notably, although the data were collected both before and during the COVID-19 pandemic, the study findings are considered applicable to pandemic as well as pre- and post-pandemic situations. During the COVID-19 pandemic, AI assistants have been used in numerous cases ranging from supporting clinicians to aiding customer service (e.g., mitigating social distancing, identifying coronavirus symptoms, providing health advice, sharing travel restriction information, offering emotional support, and promoting remote work environments). Hence, some end users might have become more familiar with AI assistants, albeit without using them for travel purposes during the pandemic. Therefore, the results of this study become even more important and can be used in multiple disciplines and areas of application.

Limitations and Future Research

Despite its contributions to theory and practice, as with all scholarship, this study is not without limitations. First, considering that AI assistants were a relatively new technology when this research was conducted, it was assumed that not many people had experience using AI assistants for travel purposes. To ensure participants were familiar with the research topic, and due to the COVID-19 pandemic situation when collecting data, an explainer video was presented in the survey, and participants evaluated the AI assistants based on the given video. This may pose a challenge to the generalizability and external validity of the results, as they may vary if participants were to provide their evaluations based on actual first-hand experiences. Future research could target participants with real-world past interaction experiences with AI assistants for travel purposes and use multiple stimuli in the research design. Second, this scale was developed within the context of travel (i.e., by contextualizing its use in the research design). Researchers are encouraged to test the scale in different sectors to obtain context-based findings. In addition, the anthropomorphism measurements in our study examined the qualities that AI assistants are perceived to need for human-like intelligent interaction, with a focus on the “conversation,” such as the naturalness of the conversation and the contextual understanding of users. Future research can investigate other aspects of perceived anthropomorphism, such as agent appearance, emotional intelligence, and behavioral characteristics. Moreover, this study did not consider individual differences and situational factors in investigating AI assistant adoption. Extant literature proposes that user acceptance of technologies also depends on gender, age, prior experience, and innovativeness (Venkatesh et al., 2012). 
Future research can investigate how users’ behavioral intentions toward AI assistants may vary across demographic groups. Lastly, some data were collected at the height of the COVID-19 pandemic, which may have impacted respondents’ answers, and hence, the findings of the study. It is important to test this model with data collected after the pandemic to understand whether there is a significant behavioral change regarding the use of AI assistants for travel purposes and whether conversation intelligence, information quality, and anthropomorphic design of AI assistants continue to be crucial in enhancing perceptions of AI assistants’ intelligence and determining travelers’ intentions to use AI assistants for travel information search and travel bookings.