Abstract

Keywords

Introduction

When controlling dynamical engineering systems, predictions are used to form expectations of future system behavior and enable a more controlled environment. These predictions are limited by the mathematical model computing the prediction process. During the operation and observation of a system, deviations between a measured actual state and a planned state might trigger a corresponding reaction or a targeted need for action by engineers. The defined plan value can be determined via a simulation using a prediction process and is used as a target value. If the actual state deviates measurably, relevantly, and significantly from a desired plan state, the system will indicate either a warning or a fault. Thus, the risk of a shutdown exists, especially in the case of a long-term inability to correct a deviation. Simulations are hereby a powerful tool to predict and classify malfunction states in advance, to avert possible malfunctions during regular operations, or to start countermeasures in advance. Nevertheless, during the operation and observation of a system, states might still occur, which were not predicted in advance and can represent malfunctions. These would generally be recognized as an anomaly in the data, defined as a substantial deviation from the norm (Mehrotra et al., 2017).

From a system planner’s point of view, the anomaly detection process, as well as the evaluation of the precision of a prediction, is therefore a comparison of the expected system state (a planned value) with the actual system state (an actual value). In this definition, anomalies are not the result of noisy data, expected malfunctions, or errors using the prediction models but rather novel deviations not explainable by the underlying prediction process (Spoor et al., 2022). Therefore, anomalies in the measured data are the limitations of these prediction models, if measurement and prediction differ in a substantial manner from the normal data model (Mehrotra et al., 2017). Hereby, global anomalies are referred to as systematic differences between measurements and prediction within the whole time series and local anomalies are distinguishable time frames and spots containing anomalous values. Local anomalies can be further divided into point outlier, contextual anomalies, and collective anomalies (Lindemann et al., 2021). Future challenges in anomaly detection for time series in Internet of Things application are given by Cook et al. (2020) as the development of unsupervised methods, real-time processing, and the generalization of methods.

We propose a novel methodology for the detection and evaluation of global and local anomalies in systems with a discrete measurement and prediction process for multivariate time series data. The idea is to compare the measured covariance matrix and a theoretically derived covariance matrix for which is assumed that only noise conceals the measurements. The comparison is conducted by an entropy measure for finding global anomalies and the application of the Wasserstein metric is used as a measure to compare the amount of anomalous behavior of different predictions. In addition, a local anomaly detection is conducted by applying the Mahalanobis distance using the theoretical noise covariance matrix. This methodology improves the state-of-the-art of anomaly detection of multivariate time series by enabling a local as well as global anomaly detection without prior clustering, training data, or the use of a correct time series as baseline. Furthermore, this methodology provides a novel measure for selecting the best prediction function to improve model selection for nonlinear systems.

This contribution starts with an overview of the current literature for anomaly detection in time series data. Subsequently, the theoretical derivations for the methodology and the setup of the theoretical noise covariance matrix are discussed. Based on the derived methodology, a simulation study using logistic growth as an example of a nonlinear time series is conducted to prove the capabilities of our proposed method for a local and global anomaly detection. In addition, a use case is discussed to compare the amount of anomalous factors in predictors of satellite orbits using the Wasserstein metric. Thereafter, our proposed method is discussed and a conclusion is given.

Literature review

Multiple papers discuss anomaly detection in multivariate and univariate time series. In the case of a local anomaly detection in multivariate time series, Blázquez-García et al. (2021) distinguish model-based approaches (either by prediction or estimation), methods using histograms (for point outliers), and dissimilarity-based approaches. For a global anomaly detection, Blázquez-García et al. (2021) name dissimilarity-based approaches and dimensionality reduction as techniques.

Since information entropy is used as a metric to estimate system complexity (Pincus, 1991), local outliers are detected or anomaly affected areas identified within univariate time series by using the Shannon entropy (He et al., 2021). With this approach, no global anomalies can be detected. However, the Shannon entropy (Germán-Salló, 2018) or the permutation entropy (Bandt and Pompe, 2002) are proven to be, in principle, useful measures to detect anomalies within time series.

Wang et al. (2011) use the correlation of a suspected anomaly affected signal and a known correct signal without anomalies so that global anomalies in the suspected signal are detected. This approach requires an identified second correct system and no local anomaly detection is conducted. Similar to the correlation of two signals, autocorrelation in anomaly detection is widely used (Izakian and Pedrycz, 2013). However, these methods lack the possibility for a global anomaly detection and are applied for univariate time series.

Li et al. (2021) use clustering of multivariate time series and they analyze the data points with a distance measure so that local outliers are detected. The clustering is conducted using a Gaussian Mixture Model solved through the usage of an EM-algorithm, which is enabled by the Mahalanobis distance. In other methods and applications, the Mahalanobis distance provides good results, but a prior clustering is necessary (Sperandio Nascimento et al., 2015) or the data sources are contextually clustered beforehand (Titouna et al., 2019). In the case of clustering, the covariance matrix of a time series is estimated using the priorly set up clusters. In some approaches, the covariance matrix of nonlinear systems is approximated using simulations and evaluated using the Mahalanobis distance (Burr et al., 1994).

Concluding, machine learning is another approach. An advantage of machine learning is that no model assumptions of the analyzed time series are necessary. However, supervised approaches from machine learning, for example, a Support Vector Machine as implemented by Rodriguez et al. (2010), require labeled data sets. Approaches using unsupervised neural network architectures, for example, an Autoencoder as implemented by Audibert et al. (2020), require a prior training phase and the assumption of a training data set without or with only very small amount of anomalies. In recent years, architectures based on long short-term memory (LSTM) are developed but also require extensive training data and sometimes labeling (Lindemann et al., 2021). LSTMs are also used for creating predictions which are then evaluated using a local average with adaptive parameters to detect local anomalies (Tan et al., 2020).

Theoretical derivation of methodology

Applied measurement and prediction model

Following the proposed system description by Spoor et al. (2022), a system state is given by the multivariate description

We assume, for most applications, white noise under a normal distribution and an expected mean of zero

In the case of a variance depending on the feature, the variance in the following derivations can simply be adjusted to

When modeling a system, a precise knowledge of function

Noise and measurement inaccuracy

Ignorance of the real features of the system

Ignorance of the effects of the real operations

To create a precise prediction of future system states, the goal of an engineer is to select

Noise in measurements results in distorted predictions of the system

Ignorance of the real

Ignorance of the effects of the real operation

(a) Observable features

(b) Unobservable features

Complexity of the model and limitation of the model due to computational power. Therefore, not all effects on the observable features are precisely modeled

Reason 4 is an additional explanation to reason 1–3 since even if reason 1–3 could be solved, limitations due to computational power still apply and decrease the precision of the predictions and result in noteworthy discrepancies of the expected state and the measured state. Therefore, reason 4 is more of a technical expansion of reason 1–3.

These items describe the reasons for unexpected states despite extensive simulations and knowledge of the system. This raises the question of how these influences can be incorporated into a model. Most notably the Kalman filter enables a correction of predictions, which improves the predictions and state estimations without bias. However, the Kalman filter offers no applicable metric in measuring and comparing the performance of two models and evaluating how inaccurate a model is from the real states. Therefore, it becomes an important task not only to correct the prediction but also to spot the anomalous behavior of a prediction, that is, in which cases the prediction is more inaccurate than in other cases and to define a measure to evaluate the precision or anomalous behavior of the prediction. This evaluation is set up by viewing the occurring deviations from the prediction as a distribution and comparing this distribution with sample distributions only affected by noise and not the ignorance of the real features and operations.

Derivation of theoretical noise covariance matrix

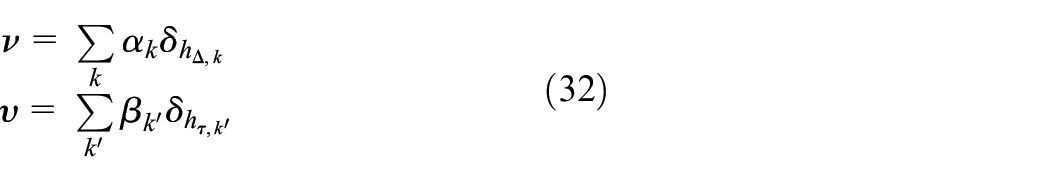

If the knowledge of the underlying operations

Furthermore, we know the distribution

The distribution is also influenced by an unknown distribution of the ignorance of function

For further analysis,

If the prediction function

Since

Therefore,

It should be noted that

The term

Both time series are uncorrelated but not independent. Since the time series of

If we want to calculate the noise term

From

The covariance for two different features

From

For the series

Since in the case of white noise

From

If the state

The covariance matrix of the time series

Using equations (15), (17), and (20), the diagonal sub-covariance matrices are constructed as

The matrix

It should be noted that for linear systems the covariance matrix

This theoretical covariance matrix can be used as a test measure if the measured

Global anomaly detection via entropy of the density distribution

For an anomaly detection, the existence of the ignorance must be tested. It is deduced that an anomaly is present within the system when the empirical covariance

If this hypothesis is rejected, an unknown covariance matrix with a density distribution

If an analysis of a time series with enough data points

The cross-entropy between the two density distributions of

The density distributions are not measured directly, but the density distributions can be approximated by using a histogram. If the measured values of pairs of

In conclusion, the KL-divergence is adjusted to

Since the KL-divergence is not symmetric, both directions should be calculated and added together for analyzing the comparison. If both distributions are identical,

As a test value, the comparison of entropies using

A sample distribution

Evaluation and comparison of predictions via Wasserstein metric

It is not only important whether a prediction differs from the measurements so that an unknown system behavior is assumed, but it is also important to measure how strong the anomalous behavior and difference between the prediction and the measurement is. Thus, a metric is necessary to measure how inaccurate a prediction is. For time series and nonlinear systems, the influence of parameters and the Wasserstein metric as evaluation criterion of such systems is studied by Muskulus (2010). The metric is based on the comparison of both distributions

The Wasserstein metric can be visualized as the optimal transport flow between the two observed distributions. One distribution acts as

Thus, the histogram bins act as sources of entries flowing toward sinks. Therefore, the amount of values of all bins of the first distributions are the sources and the values of all bins of the second distribution are the sinks. This results in source and sink conditions

The first-order Wasserstein distance becomes as follows

The value

Local anomaly detection via Mahalanobis distance

If only single data points when measuring

This distance metric is applicable to all states

Since the Mahalanobis distance follows the chi-square distribution (Fauconnier and Haesbroeck, 2009), the chi-square distribution with 2D degrees of freedom is applied to test the measured

Since the covariance matrix is known beforehand and can be computed in advance to the measurement using the function

When counting the amount of detected outliers, the detected amount is compared to the expected amount of false-positive detected outliers. Using the significance level

Proposed algorithm

As algorithm for a functional global anomaly detection, the pseudo code of Algorithm 1 is proposed. The assumption is that if

As algorithm for a local outlier detection, Algorithm 2 is proposed. This algorithm can be applied to a time series in real time since only the function

In general, the parameters for the algorithms are comparably easy to estimate. Regarding the amount of simulations of the pure noise distributions, a sufficient sample size

Simulation study: Anomaly detection in logistic growth

Applied global and local anomaly detection

For an analysis with synthetic data, we assume a system with a system state

Since only the observed features are known, the prediction function

As soon as

The operation

Delta between measured and expected values of feature

With the knowledge that the additive signal follows a sine wave function, the signal can sometimes be guessed within the

For the purpose of a better visualization of the relation between state

Theoretical noise density distribution and empirical density distribution of state

As a second step, the white noise must be estimated. Therefore, we apply a halting operation as proposed. Within our time series, it is also applicable to measure the time’s standard deviation directly since it is not concealed by a signal and follows a linear relation. The measured standard deviation of the

When analyzing the entropy for the distribution of the time measurement, no significant differences occur between the empirical density and the theoretical noise density. This is expected since there is no signal concealing the time measurement. Since the time is linear, we can cross-check the analysis with the measured correlation of time between state

The entropy of the distributions of the features

An entropy of

Using Algorithm 2, a local anomaly detection is conducted. Since the sine wave signal is only intense compared to the noise in the minimum and maximum values, we expect outliers to occur right after these extreme values. Other data points in the time series might be more compatible with the noise assumptions. The detected outliers are marked in Figure 3 using an

Identified outliers of feature

It is visible that some marked outliers occur directly after the high and low points of the concealing signal feature

Using a signal intensity

Sensitivity analysis of global anomaly detection via entropy

If the signal strength

Therefore, the significance of the anomaly detection is validated by the evaluation of the entropy using varying

Mean theoretical and measured entropy for feature

Figure 4 shows that the empirical entropy of feature

For different amounts of executions of the time series

Sensitivity analysis of the proposed algorithm using varying sample sizes

Use case: Evaluation of numeric predictors for satellite orbits

Orbital mechanics

Since satellites follow an easy to predict path using physical models, that is, newton mechanics, they also have a prediction and a measurement process, which is necessary for implementing our proposed method. Furthermore, satellites follow an elliptic path in orbit and are therefore not a linear system. In order to demonstrate the proposed method, satellite data already researched by Puente et al. (2021) and provided by the International Data Analysis Olympiad (IDAO, 2020) are used. The data are given for the period of January 2014 for 600 satellites. The previous research makes the data set a good choice for benchmarking and comparison.

First, the physical models need to be set up. The main information in the data set are the coordinates of the satellites along the

Each satellite has a specific radius

A common solver for these differential equations is the Euler method or the Runge–Kutta method of order 4 (RK4). The RK4 and Euler method use first-order differential equations. As an additional solver, a LSODA method, a variant with automatic method selection of the Livermore Solver for Ordinary Differential Equations (LSODE), as implemented by Hindmarsh (1983) is used as a very precise predictor of the orbits.

Derivation of applied predictions

The Euler method is the historic way to calculate orbits and is the simplest of the family of Runge–Kutta methods, but it therefore has a high error-proneness for computing the orbits. The prediction of the velocity for step

The prediction of the radius uses the prediction of the velocity

The deviations are calculated so that the full theoretical covariance matrix is constructed. As an example and to keep the covariance matrix smaller, only the x-coordinate is checked for anomalies while the error in time is neglected. Thus, the theoretical covariance matrix

with

The theoretical covariance matrix of the velocity is given as follows

with

The theoretical covariance matrix is used to compute the theoretical noise density distributions, which is compared with the empirical density distribution in order to spot anomalous behavior in the

A more precise method is the Runge–Kutta method of order 4. Therefore, the RK4 is used in comparison to the Euler method. The difference between the theoretical noise density distributions and the measured empirical density distribution of the Euler method is assumed to be greater than in the case of the RK4 method, marking the RK4 as a more viable prediction method. For a defined time step

This results in predictions of the state

Either the theoretical covariance matrix of these predictions is computed or the noise is simulated

By evaluating the prediction using the numeric solution at an infinitesimal change, the resulting values are used to construct the theoretical covariance matrix. This estimation is used for computing the theoretical covariance matrix of the LSODA predictions.

For real-time applications using Algorithm 2, a full calculation or estimation of the covariance matrix is necessary, while for those using Algorithm 1, an amount of sample runs under noise is sufficient.

Evaluation and comparison of prediction methods for satellite orbits

The satellite orbits are assumed to be quite anomalous since the simple two-body problem as presented in equations (41) and (42) does not include other astronomical objects, that is, the moon and the sun, as well as man-made satellites and other objects within Earth’s orbit. Furthermore, it assumes that Earth is a point mass and neglects any relativistic effects. Since it is expected that the proposed method will find anomalies quite easily and that these anomalies can even be spotted by a visual comparison of the delta values without further analysis, the evaluation is rather a comparison of the precision of the Euler method prediction, the RK4 prediction, and the prediction using LSODA. This is achieved by applying the Wasserstein metric between the empirical and theoretical (or by equation (54) estimated) density distributions for each prediction and comparing the resulting distances. It is assumed that the Euler method performs worst and the LSODA prediction best. Also, the more stable the orbit is, the better the predictors are.

Two satellite orbits with ID 1 and ID 2 are analyzed in detail. The orbit of satellite 1 is quite unstable and is subject to strong other effects besides Earth’s gravity and satellite 2 is stable in its orbit around Earth. A visualization of the first 30 and last 30 orbits after the measurements in January is given in Figure 5(a) and (b). It is easily visible that the orbits of satellite 1 are very different after the time frame, while the orbits of satellite 2 are still overlapping.

Orbits of satellites with ID 1 and 2 using cartesian coordinates in kilometers of the first and last 30 observations. Earth is at point (0, 0, 0). (a) Satellite orbit of satellite ID 1 and (b) satellite orbit of satellite ID 2.

Equation (45) is used for the calculation of the noise covariance matrix in the case of the Euler method. Equation (54) is applied for the estimation of the noise covariance matrix of the RK4 method and LSODA. For a better visualization, only the prediction of the

Even for the stable satellite orbit 2, the deviations between measurement and predictions are important and visible without further analysis within the data only by observation of the empirical density plots. The difference between predictions and measurements of RK4 and LSODA are within the same magnitude as the noise of the theoretical covariance matrix. The difference between predictions and measurements of the Euler method are, as assumed, multiple times the magnitude of the noise. The evaluation is plotted using the density distribution histograms. The histograms for satellite ID 2 are given exemplary for the RK4 in Figure 6. The empirical density distribution again highlights the necessity of using histogram bins and the Wasserstein metric since the covariance matrix would not fully encompass the complexity of the distribution.

Theoretical noise density distribution and empirical density distribution of the

By applying the proposed anomaly detection, all prediction methods would be classified as anomalous. Since it is not relevant in this case whether an anomaly is present but rather which prediction method is a better predictor, the Wasserstein metric is applied using the implementation by Flamary et al. (2021) with the sliced 2D Wasserstein metric by Bonneel et al. (2015) to determine which prediction is the most precise. The results are given in Table 2.

Evaluation of the satellite orbit predictions of ID 1 and 2 using the 2D sliced Wasserstein metric by Bonneel et al. (2015).

The results of the metric are as expected, with the exception that the Euler predictor performs more precisely in the unstable orbit of satellite 1 than in the stable orbit. This might be the result of the worse performance of the Euler method in orbits with high eccentricity since satellite 2 has a less round shape with a higher eccentricity. For the LSODA and RK4, the predictor performs better for the stable orbit. In addition, the analysis suggests that the LSODA performs better than the RK4 method, while the Euler method performs significantly worse than the other methods. This result is no surprise since the Euler method is a Runge–Kutta Method of order 1 and therefore lacks the precision of a higher order method. Also, the RK4 is considered a less precise method than the more advanced LSODA predictors, which is reflected by our results. A reason for the worse performance of the RK4 are some very high outliers of the

Discussion

The use case and simulation study show the capabilities of our proposed methodology. However, some limitations exist. First, the expected function

Second, the measurement noise must not be so large that the true operation is completely obscured. In this case, the model would be insufficient to obtain information about the true operation. The focus in the application would then be to first eliminate the measurement noise or to increase the number of samples.

Third, the runtime scales linearly with the number of samples

Fourth, a prediction function

As a main difference to other methods, this contribution focuses on the prediction function as the subject of interest for anomaly detection and thus, error correction. Therefore, our proposed method emphasizes the validation of a system model using a measurement and prediction process. This model can be based on physical properties and derived differential equations but also on, for example, autoregressive models. It is not discussed nor are the cases differentiated within our method, whether the cause for differences between prediction and measurement is explained by inaccurate predictions or external factors creating an anomaly.

The procedure is able to precisely detect unexpected influences in the operations of a system and to assign them to the corresponding features and operation. No assumptions have to be made about the underlying distribution, and the necessary parameters are relatively easy to estimate in order to initialize the model. In addition, the approach is unsupervised and does not require any prior analysis of the results or a labeling of data points. However, the methodology assumes modeling and thus knowledge of the normal or expected system state. A further advantage is that the covariance matrix is computed analytically and no estimation with a prior clustering is necessary. This improves the real-time detection of outliers in time series with prior known prediction functions since the Mahalanobis distance is a well-researched and tested measure for outlier detection. In comparison to other models, this enables a global and local anomaly detection and a model identification process.

Conclusion

This work contributes to performing predictive anomaly detection more efficiently since the analysis is conducted without a clustering or other estimations, except a prior knowledge of the prediction function. Moreover, a contribution could be made especially for anomaly detection in nonlinear systems for which many of the conventional methods of prediction formation and anomaly detection have limitations. Furthermore, the systematic evaluation of prediction functions is an important task for practitioners setting up and controlling complex dynamical systems. Therefore, a main contribution of our approach is that it provides a useful measure to compare prediction functions using the Wasserstein metric, enabled by the analytically derived covariance matrix and the distribution of deltas via a histogram.

Through the knowledge of the unexpected states in a system and the affected features, a system engineer is subsequently able to transfer the unexpected states into a prediction formation to perform better simulations. Thus, the proposed anomaly detection and prediction evaluation improve the prediction formation in dynamical and nonlinear systems. Further research regarding possible applications within engineering and a benchmarking of the performance in different use cases compared to other models and algorithms for time series needs to be conducted. In addition, we want to analyze the possibility of using the information about the existence of an outlier or a global anomaly in the time series in order to develop a methodology to systematically improve the prediction function and, therefore, improve the capability of a system engineer to run simulations.