Abstract

1. Introduction

Online and administrative sources yield substantial data rich in pertinent information that can be leveraged to enhance the understanding of labor market dynamics, as demonstrated by previous research (M. E. Beręsewicz et al. 2021; Hersh and Harding 2018). In particular, data from online job advertisements (OJA) have been employed extensively in the tracking of changes in vacancies (see, e.g., Beręsewicz and Pater 2021; Hershbein and Kahn 2018; Turrell et al. 2021). The analysis of such information allows for the examination of the impact of current global shocks on labor demand. While the estimation of the number of jobs is a crucial aspect, the analysis and forecasting of structural change represents a significant challenge in economics during labor market transformation. The analysis of skill demand necessitates a disaggregate approach, as the general definition of skills is unable to capture the specificities in firms’ demand for skills.

Lightcast (https://lightcast.io/about/data), the largest global company providing statistics on OJA, classifies occupations but does not disclose the models or the quality of said models (e.g., classification errors). The European Union agency, Cedefop, is responsible for the collection of OJA data across all EU member states, which is then made available for analysis through the Skills-OVATE tool (see https://www.cedefop.europa.eu/en/tools/skills-online-vacancies/occupations/). Cedefop classification of occupations is based on the International Standard Classification of Occupations (ISCO) up to the unit group level. Initially, they employed Support Vector Machines (SVMs), which Cedefop identified as the optimal approach in terms of classification accuracy (cf. Boselli et al. 2017; Colombo et al. 2018).

A more recent machine learning approach dedicated to job offers was proposed by Turrell et al. (2022). The researchers employed a corpus of 15.2 million advertisements posted between 2008 and 2016 on Reed.co.uk for the purpose of classifying a job offer according to the 3-digit codes of the UK Office for National Statistics’ (ONS) Standard Occupational Classification (ninety minor occupational groups). In order to achieve this, the researchers employed a fuzzy matching technique. The researchers report that manual assignment of occupations yielded 76% correctness (on a sample of 330 job offers), while their algorithm achieved 91% accuracy (on a sample of 67,900 job offers). However, the authors also indicate that their assessment was based on only 34% of the initially collected job offers due to the inability of the ONS algorithm to produce a confident label. In their study, European Commission (2022) employed a pre-trained multilingual XLM-RoBERTa (Conneau et al. 2020) transformer model (a type of deep neural network; see, e.g., Vaswani et al. 2017) to map national occupational classifications to the European Skills, Competences, Qualifications and Occupations (ESCO) framework. In addition to national qualifications and the ESCO dictionary, the authors make use of descriptions drawn from the Qualification Dataset Register and European Employment Services (EURES) online job advertisements. The authors conclude that the model demonstrates promising results when applied to work in a variety of languages. Chen and Mason (2024) put forth the utilization of explainable AI (XAI) methodologies to develop a lexicon-based approach. The method was applied to identify traditional and green energy jobs in online advertisements, with a reported precision rate of 82%. In 2024, Eurostat, in collaboration with Cedefop, launched a text classification competition at the ISCO level. They provided a hand-coded dataset comprising 26,000 OJA, with the highest score for the lowest common ancestor at 58% (for further details, see https://statistics-awards.eu/). It should be noted that neither the data nor the algorithms are disclosed, so they cannot be used by researchers or the official statistics community.

In this study, we propose a hierarchical conditional probability estimator and a classifier of job advertisements for the purpose of estimating job vacancy statistics. The methodology is then applied to predict the probability and classify the advertisements across occupations according to the KZiS, which organizes occupations into a five-level hierarchy of job categories, represented by a 6-digit code. Each digit encodes the child category, with the exception of the final level, which is encoded by two digits. The 1-digit code represents the widest major category, while the 6-digit code corresponds to the fifth level, the most narrow category. This imposes a classical hierarchical multi-class classification problem. Similarly to the approach taken in European Commission (2022), our classifier is based on a pre-trained transformer model, which we have carefully fine-tuned for the specific task of job classification. The approach was tested using XLM-RoBERTa and HerBERT, a transformed model pre-trained on a large multilingual corpus that included the Polish language (Mroczkowski et al. 2021). The classifier was trained and evaluated using a dataset of online job advertisements gathered from Polish online job boards and administrative records.

Our contribution can be summarized as follows:

We propose and develop a hierarchical conditional probability estimator and classifier that takes into account the structure of the official classification of occupations. This approach may serve as a significant complement to traditional hand-coding techniques and facilitate the utilization of big data sources for official statistics.

We employ a novel data source: large-scale administrative data comprising job offers that have been hand-coded by experts from public employment offices. In addition to the data itself, we provide a detailed description of its quality, along with the experts’ coding, which is often absent or unavailable in the existing literature.

We developed a model for twenty-four languages included in the European Union, with a view to studying the performance of these languages.

The open-source software and models are made available to the official statistics community so that they may benefit from the proposed methodology, either by utilizing the classifiers or by modifying them to suit their own purposes.

The remaining part of the paper is as follows. Section 2 describes Polish Classification of Occupations and Specialties (hereinafter KZiS) and its relation to the International Standard Classification of Occupations (ISCO). Section 3 provides theory for the proposed approach that takes into account the hierarchical structure of official classifications. Section 4 describes the dataset used for training and testing, in particular administrative data sources, hand-coded online job offers and other available sources. Section 5 contains information about the quality of the data, in particular how accurate are the experts’ coding. Section 6 presents experimental setup and its implementation. Section 7 presents results for the proposed classifiers for Polish, bilingual (Polish and English) and multilingual datasets. The paper concludes with a discussion and an exploration of the potential for extending the approach to other classifications, including the Occupational Information Network (O*NET), the European Skills, Competences, Qualifications and Occupations (ESCO) framework, and the Standard Occupational Classification (SOC). The Supplemental Material provide a brief tutorial on the use of the software, as well as detailed results.

2. Classification of Occupations and Specializations

The KZiS classification is created by the Ministry of Family, Labour and Social Policy (2014) and provides basic information about job type. It is based on ISCO established by the International Labour Organization (ILO). The current classification, available since 2023 is based on ISCO-08 and updated with new occupations, especially from the vocational education system, that are annually announced by the Ministry of National Education (see https://www.gov.pl/web/edukacja/zawody-szkolnictwa-branzowego).

The KZiS is a 6-digit, hierarchically structured classification system. The hierarchy encompasses a range of occupational groups, from 1-digit major groups to 6-digit occupations (Table 1). It develops the ISCO classification, which ends with 4-digit codes (unit groups). In this case, it is similar to the later established European Skills, Competences, Qualifications and Occupations (ESCO) classification. The KZiS classification encompasses over 2,500 occupations, which are grouped into ten major categories. In addition to the Armed Forces (group 0), the classification is primarily delineated by the level of job position (or skill) through a process of alignment with the UNESCO International Standard Classification of Education (ISCED 2013) and the levels of the Polish Qualifications Framework, as applicable to the majority of occupations within a given group.

Structure of the Classification of Occupations and Specializations (as of 01.01.2023).

The majority of occupations within major group 1 and 2 require individuals to have completed a tertiary education qualification. These roles are associated with the highest skill levels, which entail the worker engaging in intricate practical and technical tasks (skill level 3), problem-solving, decision-making, and creativity (skill level 4). The majority of occupations within Groups 3 to 5 typically necessitate upper secondary education, frequently in a vocational context. These occupations are associated with ISCO skill levels 2 and 3. Skill level 2 encompasses the ability to perform tasks such as operating machinery, mechanical and electronic equipment, vehicles, and the manipulation, ordering, and storage of information. In order to perform tasks associated with ISCO skill level 2, secondary education is required for occupations within groups 6 to 8. Positions within major group 9 typically require a secondary education at most. Workers in these roles typically engage with tasks at levels 1 to 3 of the ISCO skill spectrum. Level 1 is associated with the completion of routine and elementary physical and manual tasks. The following section presents an overview of our methodology, which incorporates a consideration of the hierarchical structure of the occupation classification.

3. Hierarchical Multi-Class Classification

Hierarchical multi-class classification is a classical machine learning problem in which an object can be assigned a set of classes that are hierarchically organized in the form of a tree (Silla and Freitas 2011; Sun and Lim 2001), and a maximum of one class can be predicted per hierarchy level. Additionally, it is often required that every prediction must be coherent with respect to the hierarchy, meaning that for every predicted class, the classifier must also predict all its ancestors in the hierarchy. In other words, hierarchical multi-class classification can be seen as a problem of selecting a single path from the tree root to a leaf. Because we are interested in population analysis of the job market, we not only require the classifier to assign discreet classes but also provide probability estimates of an online job offer belonging to the specific class at each level of the hierarchy.

3.1. Formal Setting

Let

For

An example of class hierarchy with eight classes and their assigned codes

Let

Our goal is to find a classifier

where

In particular, as our goal is to use the classifier for population analysis, we aim at estimating the conditional probabilities of classes

Then the expected class-wise log loss for a single

Therefore, it is easy to see that the point-wise optimal prediction for the

Let us notice that under class hierarchy and the assumption that only one leaf node is assigned to instance

The conditional probability of any parent class is equal to a sum of conditional probabilities of its children:

Since only a single class per hierarchy level can be relevant, the sum of all conditional probabilities of classes on the same hierarchy level (with the same length of the codes

3.2. Modeling Probabilities Under Class Hierarchy

We are not only interested in possible accurate estimates of conditional probabilities

We refer to the first approach as a “bottom-up” (Barbedo and Lopes 2007). The bottom-up approach is one of the simplest to deal with the hierarchical classification problem. In this type of classifier, the conditional probability estimator of classes is trained only for classes in the leaf nodes of the hierarchy tree (i.e., 6-digit KZiS codes), and information about the class hierarchy is ignored. Because, out of these classes, only one can be positive at for instance, one can use categorical cross-entropy loss (a generalization of log loss) to train the probability estimator:

where

We refer to the second approach we consider as the “top-down” approach. In this algorithm, the probability of a given class is determined by a sequence of decisions made by node classifiers that predict subsequent values of the code word. By using the chain rule of probability, we obtain:

Let us notice that since we deal here with a multi-class distribution, we have that:

The classifier is then formed by the training probability estimator for every

3.3. Statistical Challenge of Long-Tail Distribution

It is common in classification tasks with a large number of labels that labels are highly unbalanced—only a few classes have a large number of data points, while the vast majority is represented by a much smaller number of examples. This kind of distribution is said to be long-tailed (Babbar and Schölkopf 2017; Bhatia et al. 2015). The long-tail distribution imposes statistical challenges in modeling the conditional probability distribution using classical machine learning algorithms like logistic regression or naive Bayes classifier, as they are not performing well under such a low data regime.

In our task, the classifier must predict a set of classes out of 2,911 possible ones. Figure 2 illustrates the long-tail distribution present in the dataset, which is described in detail in Section 4. It is evident that almost one-third of the classes are represented by less than ten examples, while only around 250 classes have more than 100.

Last level classes (codes) frequency in the study datasets. The

3.4. Proposed Model Architecture

To address long-tail distribution, instead of training the classifier from scratch, we opted for using a pre-trained transformer model. We are focusing on the popular family of models based on the Bidirectional Encoder Representations from Transformers (BERT; Devlin et al. 2019) architecture.

BERT is a pre-trained language model that has revolutionized natural language processing (NLP). It is an encoder-only model, meaning it is designed to create representations of an input text in the form of a vector that can be used for a variety of NLP tasks such as sentiment analysis, spam detection, or any other text classification task. BERT model uses bidirectional Transformer architecture. This mechanism helps the model weigh the importance of each word in the input sequence by considering its relationship with all other words. It allows BERT to capture context in a bidirectional way, meaning it considers both the left and right context when processing words.

It can be pre-trained on vast amounts of data in an unsupervised manner and can be fine-tuned for downstream tasks such as considered text classification. BERT models are usually pre-trained using two key strategies:

Masked Language Modeling (MLM): A portion of the input tokens are randomly masked, and the model is trained to predict these masked tokens based on their context.

Next Sentence Prediction (NSP): This task trains the model to understand sentence relationships by predicting whether two sentences appear consecutively in the original text.

In this work, we take a pre-trained BERT model and use its predicted text representation (hidden representation of [CLS] Token) combined with bottom-up or top-down output with linear models for modeling conditional probabilities of classes. We carefully fine-tune the resulting architecture in an end-to-end fashion—both partners of bottom-up and top-down outputs are updated, as well as the parameters of the BERT transformer model. This way, we obtain a model that is well-adjusted to the task while retaining its vast general language knowledge, that is, about words and concepts unseen in the training set used for fine-tuning. This should allow it to perform well also on a long-tail—classes with a small number of training examples. In the next section we describe the data used to train and test the proposed models.

4. Data Sources

4.1. Official Classifications and Definitions

This dataset has been prepared for classification using the official classifications and accompanying documentation available on the website of the Ministry of the Family, Labour and Social Policy.

Job description search engine (available at https://psz.praca.gov.pl/rynek-pracy/bazy-danych/klasyfikacja-zawodow-i-specjalnosci/wyszukiwarka-opisow-zawodow)—This service provides a comprehensive description of all occupations, including the name, code, synthesis, and a list of elementary and additional job tasks. For illustrative purposes, please refer to Figure 3. It is important to note that the descriptions of the occupations exhibit considerable variation in terms of both detail and completeness.

INFOdoradca+webservice (available at https://psz.praca.gov.pl/rynek-pracy/bazy-danych/infodoradca)—The service comprises a curated list of 1,000 occupations, offering a comprehensive overview of the profession or job role in question. It provides detailed information on the specific skills, qualifications and authorizations required for entry into the profession or job role, as well as the tasks and competencies necessary for success in the field. For an illustrative example, please refer to Figure 3, which depicts a description from the INFOdoradca+ web service.

Example screenshots of the job description engine and INFOdoradca webservice.

In addition to the official classifications, the following datasets were employed:

thesaurus created by Statistics Poland (see Available at https://stat.gov.pl/Klasyfikacje/doc/kzs/slownik.html)—contains 1,338 unique occupations and their synonyms, with the number of synonyms varying from 2 (equivalent to approximately 40% of all occupations) to 82 (for one occupation 813101 “Aparatowy procesów chemicznych” [Operator of chemical processes equipment]) with mean and median equal to 4.7 and 3 respectively.

KZiS-ESCO linkage database—list of selected occupations from KZiS linked to ESCO proposed by Stęchły and Woźniak (2023). This dataset contains 557 unique codes along with its descriptions downloaded from the ESCO website. The value of this crosswalk is providing occupation descriptions from the ESCO classification and connecting occupations with skills, what has not been done in the KZiS. To connect KZiS with ESCO authors used an expert method supported with language modeling and semantic similarity based on Sentence-BERT model. They determined the relations for all KZiS occupations by matching existing ESCO occupations to KZiS. In linking both classifications, the authors used several types of semantic relations: exact match, broader match, narrower match, and close match. They excluded false friend (no) matches and weak matches (see available at https://esco.ec.europa.eu/en/classification/occupation_main). In our dataset, we only used exact matches (about 8.7% of all KZiS codes).

Civil service job offers database (KPRM)—2,941 job offers from the civil service (https://nabory.kprm.gov.pl) for twelve occupation codes (121101, 121904, 214921, 242208, 242211, 242213, 261103, 315202, 315209, 325504, 334102, 421402) that meet the official definitions. This database was selected based on clerical review of matching job titles and descriptions.

For the official classifications (dictionary and INFOdoradca+), KZiS-ESCO and KPRM we have developed web-scraping algorithms that collected all available information on a given occupation.

In order to ensure the accuracy of online job advertisement classification, it is essential to utilize a comprehensive range of resources beyond official dictionaries or a limited number of advertisements from the Civil Service. Consequently, we have chosen to employ actual job postings from two primary sources: the Central Job Offers Database (hereinafter ePraca; previously known as CBOP), which encompasses all job advertisements submitted to Public Employment Offices (PEOs), and hand-coded job advertisements from multiple online sources. The subsequent subsections will delineate the characteristics of these datasets.

4.2. Central Job Offers Database: ePraca

Public employment services in Poland include public employment offices (PEOs), which operate at LAU1 (Local Administrative Units) level and are responsible for registering and managing unemployment. This means that there are two sources of data about employment in Poland: (i) the Labor Force Survey (LFS) and (ii) registered data collected by PEOs. The register maintained by PEOs contains detailed characteristics of unemployed persons and job offers. The number of registered unemployed may be higher than the LFS estimate because registration is connected with free health insurance and unemployment benefits.

The structure of job offers from PEOs differs from the general population of job vacancies. There is an over-representation of jobs from companies that have an incentive to advertise their vacancies through public employment offices, for example in the case of refunded internships or publicly-subsidized workplaces for the disabled (M. H. Beręsewicz et al. 2021). Public entities, in particular, are more willing to publish job offers in PEOs because they are often obliged to do so by their own internal regulations. Finally, low-payed jobs are more often sent to PEOs because people with lower qualifications often rely on public institutions to help them find a job. Better-paying jobs are more often advertised on job boards, in media or through private HR agencies, which charges fees for their services (Gałecka-Burdziak and Pater 2015; Radzikowski 2023).

Job offers may be submitted via a paper or online form, following a highly structured format. For an illustrative example, please refer to this website: https://warszawa.praca.gov.pl/zgloszenie-oferty-pracy (in Polish). In Supplemental Materials we provide an example paper form in Polish and English translated using DeepL software. The requisite information encompasses the entity’s name, address, and REGON/NIP identifier; the job title; the number of vacancies; the type of contract; a description of the responsibilities and requirements for the applicants. An example of a job posting is provided in Figure 4. It is important to note that the option to specify the job occupation according to the official classification is available on the online form, though this is not a mandatory requirement. In accordance with the findings of our discourse with PEOs, it has been established that the majority of employers refrain from populating this field, and instead, PEOs personnel undertake the coding of job advertisements according to the official KZiS classification manually. Regrettably, we are unable to present any numerical results to substantiate our claims, as this information is not accessible through ePraca database. In Section 5, we examine the quality of ePraca coding using a sample of job ads. Information about the occupation is policy-relevant. The Ministry of National Education annually announces the forecast of the demand for employees in vocational education occupations on the national and regional labor markets (see https://www.gov.pl/web/edukacja/prognoza-zapotrzebowania-na-pracownikow-w-zawodach-szkolnictwa-branzowego-na-krajowym-i-wojewodzkim-rynku-pracy-2024). Based on the forecast the Ministry differentiates the educational part of the general subsidy for vocational education students between local government units. The regional governments consider the forecast in preparing an annual list of occupations for which the costs of vocational training for young employees is reimbursed. The forecast is also used by regional labor market councils in issuing opinions on the validity of education in occupations.

Example job posting from ePraca.

The ePraca data is accessible via the API for registered users (more information is available at the following website https://oferty.praca.gov.pl/portal/index.cbop\#/dlaInt). Each job advertisement comprises 174 fields in JSON format, including an occupation code according to the KZiS and descriptions provided by the employer or recruitment agency. The data used in this study was downloaded on a daily basis from the beginning of 2022 to the end of 2023. The data was then subjected to a process of cleaning, whereby erroneous codes or descriptions with missing data in all fields were removed. The codes used for this purpose are available for download online at https://github.com/OJALAB/CBOP-datasets. Following this, the data set comprised over 822,000 fully labeled ads with 2,468 unique occupation codes and job ads descriptions, based on the aforementioned criteria.

The distribution of KZiS codes was markedly uneven, with 145 codes having only a single example, 128 codes having two examples, and codes with over 5,000 examples. The five most prevalent codes were: The following job titles were identified: 522301 “Sprzedawca” (Sales Assistant), 432103 “Magazynier” (Warehouse Operator), 931301 “Pomocniczy robotnik budowlany” (Construction Worker’s Helper), 911207 “Pracownik utrzymania czystości” (Cleaner), 515303 “Robotnik gospodarczy” (Maintenance Worker). To reduce the size of the ePraca database and speed up training, a stratified sample of the ePraca dataset was created, with strata defined by KZiS code and the number of characters in the description. A total of 11,572 strata were formed. Table 2 presents information regarding the number of advertisements by the number of characters in the advertisement description.

Number of ePraca Ads by the Number of Characters in the Description.

From each stratum, a simple random sample was selected, with the size determined as

4.3. Hand Coded Datasets

4.3.1. The 10,000 Ads Dataset

For our study, we have used two datasets of hand-coded job advertisements. The first consisted of 10,000 job advertisements randomly selected from a large dataset of web-scraped advertisements. The second dataset consisted of 1,000 job advertisements selected from one of Poland’s largest online recruitment services using specific keywords to match a range of occupations. We selected the IT sector for its diversity, particularly during the analyzed period, and for jobs that are less prevalent in online recruitment portals. This study focuses on the 10,000 dataset.

As a basis for the sample, we have used 1,805,967 job ads scrapped between 2021-06-28 and 2021-11-08 from eighteen sources. Information about the number is presented in Table 13 in Supplemental Materials. This dataset contained information on the web service (the source), url, language of title and description according to a given website, job title, employer, region, job type, position level, contract type, salary, category (according to a given source), web-scraping date and job description. Note that some columns contained missing data (e.g., salary).

Before sampling, the data was cleaned to remove rows that had no job description (233,464 observations), had zero words or less than five characters. The number of words was counted using

Next, we have created two stratification variables. The first was based on the identification of specific occupations: “sprzedawca” or “kasjer” (seller or cashier), “doradca klient” (customer consultant), “magazynier” (warehouse worker), “przedstawiciel” (representative), “pracownik produkcji” (production worker), “pomoc kuchenna” or “kucharz” (kitchen help or chef), “biuro” (bureau), “sprzątacz” or “sprzatacz” (cleaner with and without Polish-specific letter), and “specjalista” (specialist). If none was matching “other” category was assigned.

The second stratification variable was based on the number of words in the description that were categorized into the following groups:

In order to code the dataset, three experts from PEOs were recruited in February 2022. Due to budgetary limitations, the sample size was set at 10,000, with each expert responsible for coding 3,333 advertisements. However, due to rounding, the final sample size was 10,002. The sample size was selected using a stratified systematic sampling design, with probabilities proportional to the number of characters in a given ad. The sample was selected using

A total of 3,233 advertisements were selected for each expert, with an additional 100 advertisements sampled for cross-validation of expert coding. It should be noted that for four ads we also had information from ePraca. Each expert was given an Excel spreadsheet, available via Google Sheets, containing all the relevant information. The experts were tasked with coding the advertisements into 6-digit codes and providing comments where necessary. They were given a one-month period to complete the coding of the advertisements into occupation codes.

4.3.2. The 1,000 Ads Dataset

In January 2023, an additional dataset of 1,000 advertisements was selected for manual coding by a single expert. The sample was selected from a comprehensive dataset of over 543,000 ads scraped from one of the largest services in Poland. In the case of this particular dataset, we were able to identify in excess of 227,000 unique descriptions. We then proceeded to implement a sample selection process in accordance with the following scheme: the specification of a search phrase (including regular expression notation) and the subsequent selection of the most appropriate samples. The information pertaining to the aforementioned phrases, the selected advertisements and the number of cases in one of the largest services in Poland is detailed in Supplemental Table 16.

The rationale behind the selection of the phases is as follows. Firstly, the decision was taken to include advertisements for positions related to computer science, data science, and information technology. Secondly, the intention was to incorporate occupations that were not previously covered by our existing sources, such as pharmacist, sworn translator, or piano repairer.

4.4. The Multilingual Dataset

All datasets (over 200k rows) were translated into English and then to twenty-two other languages: Bulgarian, Czech, Danish, German, Greek, Spanish, Estonian, Finnish, French, Irish, Hungarian, Italian, Lithuanian, Latvian, Dutch, Portuguese, Romanian, Russian, Slovak, Slovenian, Swedish, Ukrainian. This procedure was done in two steps.

Firstly, the Polish text was translated into English using Google Translate. This was achieved by utilizing the Google Sheets application and the

Secondly, the ads translated to English with Google Translate descriptions were translated into twenty-two languages using the Argos Translate software. The principal rationale for this approach was the scarcity of human resources (there were no assistants available to undertake the translation process using Google Sheets) and time constraints, given that access to GPUs was available, as supported by the Argos Translate open-source library.

Due to budgetary constraints, an assessment of the quality of automated translation was not conducted. However, based on a discussion with PEO experts, it was evident that if they were to code English or other language-based descriptions, they would likely utilize Google Translate or other free translation services.

5. Quality of the Input Data

5.1. Hand-Coded Data Quality

5.1.1. The 1,000 Dataset

The 1,000 dataset was coded by a single expert, and thus, cross-validation was not available. The expert identified 968 advertisements for which a single occupation code was deemed appropriate, while thirty-two advertisements were assigned multiple codes (twenty-seven with two codes, three with three codes, and one with four codes). Therefore, the final dataset comprised 1,035 advertisements with 226 codes, with the most prevalent being: “Programista aplikacji” (Applications programmer, 125 occurrences; 251401), “Analityk baz danych (data scientist)” (Data scientist, 77 occurrences; 252102), and “Specjalista do spraw cyberbezpieczeństwa” (Cybersecurity specialist, 63 occurrences; 252902). See Supplemental Table 17 for the information on the number of unique 6-digit and 1-digit codes for a given stratum defined by the search phrases.

5.1.2. The 10,000 Dataset

The process of assessing the quality of the hand-coded data was an iterative one. Information about the coding is presented in Table 3. From the final sample, thirty-two advertisements with a description in a language other than Polish were removed, although they had been coded by experts.

Information About the Quality of Hand-Coded Data.

It was observed by experts that for thirty-eight advertisements (0.38%; thirteen from the first expert, thirteen from the second, and two from the third), it was not possible to ascertain the occupation due to a lack of pertinent information or because no occupation in the official classification matched the description. In one instance, the expert indicated that the advertisement describes two distinct occupations. In fifty-nine cases (representing 0.6% of those with codes), experts noted that multiple codes align with the description. Rather than removing these fifty-nine cases, we selected to flag them as potential codes for the same description. Consequently, the final dataset comprised 9,932 ads (prior to accounting for multiple codes).

The subsequent stage of the study involved an investigation into the quality of the coding produced by experts based on a set of 100 ads that exhibited a certain degree of overlap. Two of the experts did not provide a code for one of the advertisements, whereas the others did. Accordingly, for the purposes of comparison, the total number of ads considered was 98. Table 4 presents the data on the rate of agreement of expert coding at the 1-, 2-, 4-, and 6-digit levels (section “Before clerical review”) along with 95% confidence interval using sampling weights using the

Point and 95% Interval Estimates of the Rate of Agreement of Expert Coding at 1, 2, 4, and 6 Digits.

In general, pairs of experts agree at the level of 86% at the first digit of occupation codes, with this figure decreasing to 73% and approximately 67% at six digits. The level of agreement among all experts is 80% for the first digit and decreases to approximately 60% at the sixth digit. The findings were presented to the experts, who were invited to provide comments and suggest potential amendments. Expert 1 elected to alter ten of their original codes, Expert 2 chose to modify thirteen of their initial codes, and Expert 3 revised six codes (including the addition of two missing codes). The results of the clerical review are presented in Table 2 (section “After clerical review”). As anticipated, the agreement rate increased, although some discrepancies between experts remained. The overall agreement on a single digit increased to 85%, while for six digits it reached 68%. The primary source of discrepancy was the lack of relevant information about the education or skills. For all pairs of experts, the Kappa coefficient for agreement of one digit codes shows strong agreement with levels close to 85%.

Next, we focus on the assessment of the quality of ePraca coding, as in our sample, we had 236 ads from this source. We were able to link 213 unique ads to the ePraca using the hash identifier present in the ad’s URL. Table 5 presents information on the quality of ePraca coding according to the experts (point and 95% CI for the rate of agreement) and Kappa coefficient for each expert and ePraca as well as all experts (treated as one group) and ePraca as another (the last row denoted as

Point and 95% Interval Estimates of the Rate of Agreement of Expert Coding at 1, 2, 4, and 6 Digits for ePraca Ads.

Subsequently, an assessment of discrepancies in coding was conducted by experts. Two experts consented to participate in the study and provided comments. The second expert determined that six of the twenty-nine advertisements provided by ePraca were accurate, differing from the expert’s initial assessment. Additionally, four of the twenty-nine advertisements exhibited a dual accuracy, with both codes being correct. Consequently, the expert’s agreement rate at the 6-digit level would increase to 76%, while at the 1-digit level, it would increase slightly, given that the disagreements are at more detailed levels. The third expert determined that in seven of the thirty advertisements, both codes are accurate. However, the expert highlighted that the lack of sufficient information precludes the ability to distinguish between the two. Consequently, for this expert, the agreement rate at the 6-digit level would increase to 72%, while at the 1-digit level, a slight increase is observed due to the disagreements occurring at more detailed levels. This also results in lower Kappa coefficients around 75% in comparison to about 85% presented in Table 4 but they are not significantly different if we compare confidence intervals.

Table 6 presents a comparison of agreements at the 1-digit level. The figures in the table refer to the precision of the classification of ePraca ads. With the exception of the 4th group, the agreement rate for groups 2 to 9 is above 70%. However, as there is only one example in the first group, we refrain from commenting on it. Overall, it can be observed that the ePraca codes are characterized by a high level of confidence, with the main discrepancies occurring between the second and fourth groups.

Comparison of Codes at the 1-Digit Groups Between ePraca and Experts Coding.

The assessment of the quality of hand-coded data is of paramount importance in the construction of a classifier, as the input codes (labels) must be accurate. In our approach, we utilize the codes in their original form; however, it is essential to consider the potential for uncertainty in the preparation of training datasets when interpreting the final results. Further research and the development of algorithms that take this into account will be required to address this issue in greater depth.

5.2. General Description of the Data

In this section, we present information on the quality of input data. The combined dataset contained 200,875 cases out of which 9,200 cases refer to the official dictionary. Note that this number is larger than the number of codes because for certain codes more than one case is provided. Table 7 presents the coverage of occupation 6-digits occupation codes by main groups and source. The last row presents information about the number of rows for a given dataset. Datasets varied in terms of coverage. The most complete was ePraca with coverage close to 80% of all 6-digit codes within given occupation group. Due to sample size, hand-coded data covered only a small fraction of available codes. The combined dataset was missing 186 occupation codes. Detailed information: 1 group: 9, 2 group: 84, 3 group: 46, 4 group: 1, 5 group: 5, 6 group: 1, 7 group: 27, 8 group: 8, 9 group: 5). List of these codes is provided here https://github.com/OJALAB/job-ads-datasets/blob/main/data/codes-not-coveted.csv. It should be noted that for the training dataset the complete dictionary with all 2,911 codes was used.

Coverage of Six Digit Codes by Main Groups and Sources (Excluding Official Dictionary).

Another factor that should be taken into account is the varying number of cases for each code. For instance, the largest number of cases was present for Industrial and craft workers (7) and Professionals (2) with close to 40k and 34k cases respectively. The less represented were Public officials, senior officials and managers (1), Farmers, horticulturists, foresters, and fishermen (6), and Armed Forces (0).

Sources varied in terms of a number of words describing a given code. Table 8 presents information on the distribution of the number of words in the combined dataset for each group separately. The median number of words was 39 and varied between from 31 for the 9th to 83 for the 1st group. A comparison of the number of words in the descriptions from the two sources reveals that the ePraca descriptions contain, on average, approximately fifty words, while the descriptions from online sources contain more than 120 words on average. The detailed information for each input dataset is presented in the Supplemental Material in Table 18.

Number of Words for a Given Group for the Combined Dataset (Official Dictionary Included).

Finally, for building the classifier, we have created training and test data according to the following algorithm:

Step 1: Combine datasets: INFO+, KPRM, HAND (10k), HAND (1k), ESCO, and ePraca into one dataset.

Step 2: Calculate the size of 70% of cases stratified by source and 6-digit code.

Step 3: Strata with only a single case were removed and used for testing.

Step 4: Strata with

Step 5: The final training dataset was created by combining 70% of the dataset from Step 4 with an official dictionary and GUS thesaurus.

Step 6: The final test dataset was created by combining the dataset from Step 3 with 30% sample from Step 4.

Table 9 presents information on the number of cases and 6-digit codes in train and test datasets by source. The training dataset contains close to 143k cases while testing 58k. The main contribution is from the ePraca dataset. The number of codes in the training dataset is equal to the number of codes in the official classification, while in the test data not all codes are available.

Number of Cases and 6-Digit Codes in Train and Test Data by Source.

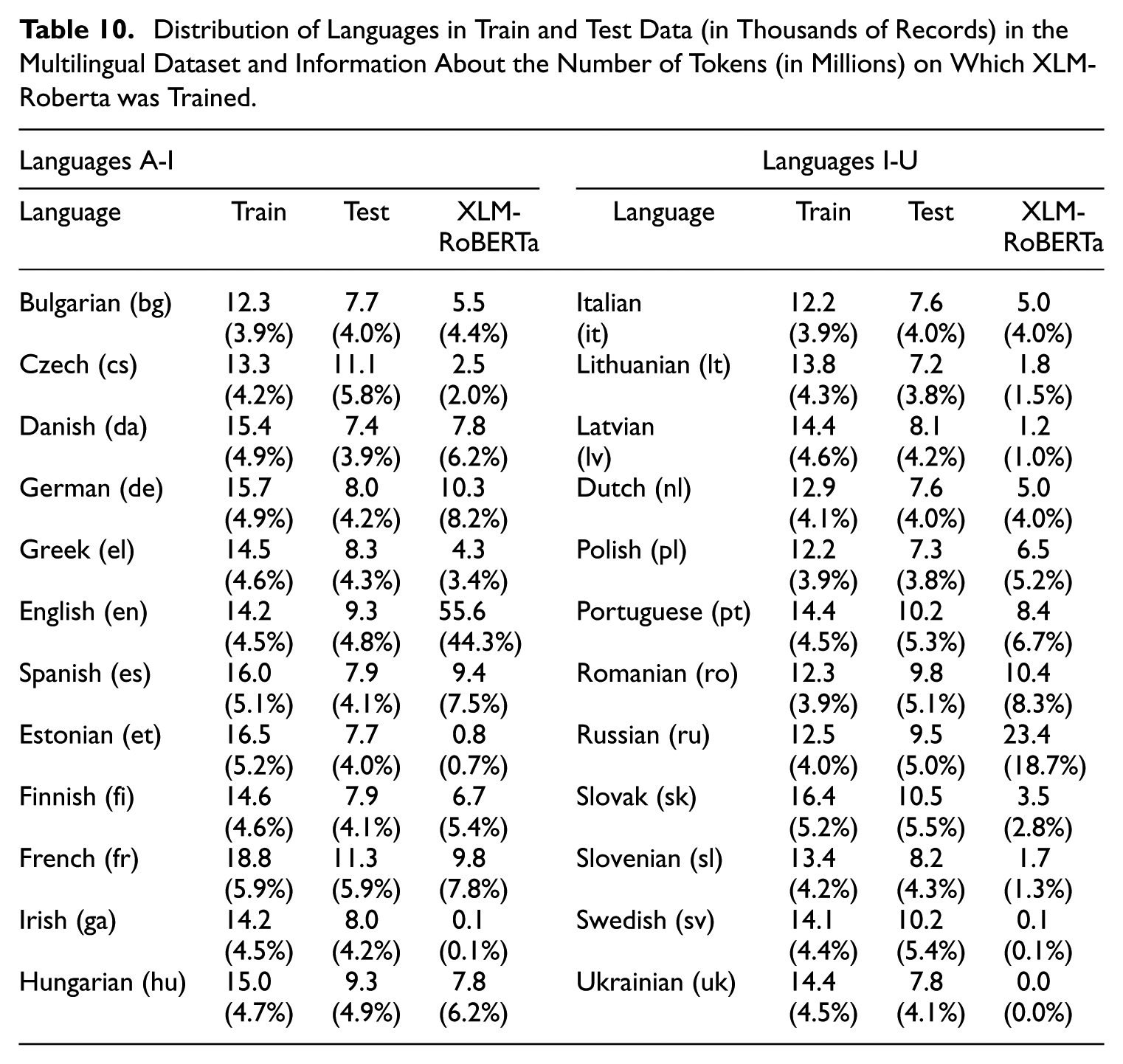

In order to verify the approach we translated train and test data from English (based on Google Translate) to twenty-two languages (all UE languages) with Argos Translate. This dataset over 3,4 mln records for training and close to 1,4 mln for test data. In order to avoid overfitting of the multilingual classifier we selected a stratified random sample of both datasets independently with 10% and 15% from each stratum created by 6-digit occupation code and source type. Information about the number of cases for each dataset (train and test are presented in Table 9 while detailed information about the distribution of languages are presented in Table 10).

Distribution of Languages in Train and Test Data (in Thousands of Records) in the Multilingual Dataset and Information About the Number of Tokens (in Millions) on Which XLM-Roberta was Trained.

The number of cases for a given language varies between the two sources. To illustrate, in the case of hand-coded test data, Polish was the least represented language, with 287 cases, while French was the most represented, with 1,348.

Table 10 contains information about the number of tokens used to train XLM-RoBERTa. The primary objective is to demonstrate that some languages are markedly under-represented in the XLM-RoBERTa dataset, including Estonian, Irish, Swedish, and Ukrainian, while others are over-represented, such as German and Russian. This may suggest that the quality of classification may vary between languages.

6. Experimental Setup

6.1. Models and Implementation

In the empirical study, we considered the following model variants of bottom-up and top-down approaches described in Subsection 3.2:

As a baseline, we use bottom-up and top-down with simple linear models (i.e., logistic regression with linear part) for modeling probabilities using TF-IDF representations of texts.

We compare it with the transformer-based architecture as described in Subsection 3.4, in both bottom-up and top-down variants fine-tuning them based on the following pre-trained models:

– HerBERT-base (https://huggingface.co/allegro/herbert-base-cased, with 110M parameters) and HerBERT-large (https://huggingface.co/allegro/herbert-large-cased, with 336M parameters)—fine-tuned BERT for Polish language developed by Allegro,

– XLM-RoBERTa-base (https://huggingface.co/FacebookAI/XLM-roberta-base, with 279M parameters) and XLM-RoBERTa-large (https://huggingface.co/FacebookAI/XLM-roberta-large, with 561M parameters)—multilingual models developed by Facebook/Meta.

As the baseline implementation, we use

For the implementation of the Transformer architecture, we use PyTorch (Paszke et al. 2019), PyTorch Lightning (Falcon and Team 2019), and Huggingface Transformers (Wolf et al. 2020) libraries. We fine-tune transformer-based models using AdamW optimizer (Loshchilov and Hutter 2019), with the base learning rate equal to

6.2. Evaluation Metrics

To evaluate the quality of obtained classifiers, we primarily report a per hierarchy level logistic loss (cross-entropy) to investigate if the proposed approach improves the quality of obtained estimates of conditional probabilities of classes

where

7. Results

7.1. Results for Country Specific Classifier (Trained on Polish Language Only)

Figures 5 to 7 compares the accuracy of different classification approaches for job codes across various levels of the KZIS occupation classification system, ranging from 1-digit to 6-digit codes, with ISCO classification at the 4-digit level. Across all datasets (Overall, ePraca, and Hand-coded job offers), transformer-based models consistently outperform linear models. Larger transformer models (HerBERT-large and XLM-RoBERTa-large) achieve the highest accuracy scores across all levels and datasets but are slightly better than base models.

Results for the recall@1 classification of Polish job advertisements by classifier (Overall).

Results for the recall@1 classification of Polish job advertisements by classifier (ePraca only).

Results for the recall@1 classification of Polish job advertisements by classifier (Hand-coded only).

Notably, the top-down approach consistently yields better results than the bottom-up approach for all models and coding levels. This improvement is particularly pronounced for hand-coded data, indicating that taking the hierarchical structure of occupational codes into account during model training significantly enhances prediction accuracy. For instance, for the hand-coded dataset for HerBERT-base the difference at ISCO level is close to 1.5 p.p. The same relation is present for the linear model where the differences between top-down and bottom-up are close to 2 p.p. (e.g., ISCO level).

As expected, the accuracy of all models decreases as the level of detail in the occupational codes increases. For example, the best-performing model (XLM-RoBERTa-large and HerBERT-large with top-down approach) achieves around 88.6% accuracy for 1-digit codes but drops to about 61% for 6-digit codes. This trend is consistent across all models and approaches, reflecting the increasing difficulty of classification as the categories become more specific.

Results for country-specific models suggest that large models perform similarly as base ones and country-specific BERT models performs slightly better than the multilingual BERT model. It should be noted that the XLM-RoBERTa-base model consist of 279M parameters while HerBERT 110M so comparison between these models it not entirely fair. However, it suggest that a smaller model for a specific language may perform similarly as larger model trained on multi-language dataset. In the next section, we will compare the multilingual model where the dataset contains two languages—Polish and English.

7.2. Results for Bilingual Classifier (Trained on Polish and English Languages)

7.2.1. Overall Results

Figure 8 compares the accuracy (measured as Recall@1) of different classifiers for occupational coding across various levels of detail, from 1-digit to 6-digit codes, as well as ISCO codes. The classifiers include different transformer models (HerBERT and XLM-RoBERTa) using both top-down and bottom-up approaches. The plot also compares these automated methods to hand-coded results.

Results for the recall@1 classification of Polish job advertisements by multilingual classifier (XLM-RoBERTa-base).

The results show that across all levels of occupational coding detail, the models using Google Translate consistently outperform those using Argos Translate. This difference is particularly noticeable for more detailed coding levels. For example, at the 6-digit level, the best-performing Google model (Polish Transformer XLM-RoBERTa-base, bottom-up) achieves about 88% accuracy, while the corresponding Google Translate model achieves only about 86.4% accuracy. Notably, the HerBERT model, which was specifically designed for Polish, performs poorly when applied to English text, highlighting the importance of using language-appropriate models.

Interestingly, the Polish versions of the models tend to perform slightly better than their English counterparts for both Argos and Google translations. This suggests that maintaining the original language (Polish in this case) or using a closely related language for translation might preserve more relevant information for occupational coding tasks. The poor performance of HerBERT on English text further supports this observation, emphasizing the need for language-specific or truly multilingual models in cross-lingual tasks.

As observed in the previous plot, the top-down approach generally yields slightly better results than the bottom-up approach, although the difference is less pronounced here. The automated methods significantly outperform hand-coding across all levels of detail, with the gap widening for more granular classification tasks. The substantial difference in performance between Argos and Google translations highlights the importance of choosing appropriate translation tools in multilingual occupational coding tasks, with the closed-source Google Translate showing superior results in this context. This finding, combined with the language-specific performance differences, underscores the complexity of cross-lingual occupational coding and the need for careful selection of both translation tools and language models.

7.2.2. Detailed Results for the Hand-Coded Data

This section presents a more detailed analysis of the combined hand-coded data set, comprising 10,000 and 1,000 records. The data primarily come from online sources, and the classifier will be employed for further analysis of this type of data. Table 11 contains the corresponding data for the transformer model. The results are presented separately for the training and test data. For the training dataset, the transformer model achieves over 92% agreement for each group, while the logistic model performs significantly worse for the 1, 3, 4, and 9 groups.

Confusion Matrix for One Digit Occupation Groups (Codes) for the Transformer Model with Top-Down Approach on the Test Data.

In order to evaluate the efficacy of a given approach, it is essential to consider the results of the tests conducted. Once again, the transformer model demonstrated superior performance for all groups, with a precision rate exceeding 68% (with the exception of Occupation Group 6). In contrast, the logistic model exhibited lower precision rates for some groups, with a difference of approximately 10 percentage points. For instance, the logistic model for Group 3 achieved a precision rate of 57.5%, while the transformer model surpassed this rate, reaching over 68%. These results indicate that the transformer model is the optimal choice. Furthermore, we emphasize that the model’s performance can be validated using out-of-sample data, particularly new advertisements and clerical reviews with expert coding.

The confusion matrices for the XLM-RoBERTa-base transformer model reveal its performance in occupational coding tasks across Polish and English languages. Overall, the model demonstrates high accuracy, with the majority of predictions correctly falling on the diagonal for both languages. Notably, Occupation Groups 2, 5, and 7 consistently show over 80% correct classifications, indicating the model’s strength in identifying these occupational groups. However, some common misclassification patterns emerge, such as Group 2 being mistaken for Group 1, and Group 3 for Group 2, suggesting potential similarities or overlaps in these occupational definitions that challenge the model’s discriminative capabilities.

Interestingly, while the overall performance is comparable between Polish and English, some nuanced differences are observed. For instance, Occupation Group 1 is more accurately classified in English (78.9%) compared to Polish (76.7%), whereas Group 5 shows superior performance in Polish (85.2%) over English (81.3%). These variations highlight the potential impact of language-specific nuances on occupational coding accuracy, emphasizing the importance of considering linguistic context in model development and application.

The matrices also reveal challenges in classifying low-frequency categories, exemplified by Occupation Group 6, which has only seven samples and exhibits poor performance with a high misclassification rate to Group 9. This underscores the persistent challenge in machine learning of accurately handling rare or underrepresented classes, particularly in specialized domains like occupational coding.

Despite these challenges, the consistency in misclassification patterns across both languages suggests that many errors stem from inherent similarities between certain occupational categories rather than language-specific issues. This insight is valuable for future refinements of occupational classification systems and the development of more robust coding models. The strong overall performance of the XLM-RoBERTa-base model across languages demonstrates its potential as a powerful tool for automated occupational coding, while also highlighting areas for future improvement, particularly in distinguishing between similar categories and handling low-frequency occupations. Detailed results are presented in Supplemental Materials.

7.3. Results for Multilingual Dataset (Twenty-Four Languages)

In this section, we present the results for a multilingual dataset that has been trained with XLM-RoBERTa models. Table 12 presents the results for the test dataset only. The table also includes two approaches to modeling the hierarchy for 1, 2, 4 (ISCO) and 6 (KZiS) digits. The results are comparable to those observed for the Polish and bilingual models. The overall classification rate for 1-digit codes is approximately 84% (approximately 2–4 p.p. lower than the first two approaches), and the hand-coded classification rate is approximately 78%. As previously noted, the inclusion of the hierarchy improves predictions for the hand-coded data as the hierarchy is traversed.

Recall@1 Metric for the Multilingual Test Data by Dataset and Transformer Approach.

As indicated in Subsection 5.2, the sample size for languages differs considerably in the test data. Accordingly, in order to evaluate the accuracy of the multilingual classifier, we present the results for each translated test data set, including the complete data set and the sample utilized for training. Figure 9 depicts the accuracy (recall@1) for each language across 1-digit, 4-digit (ISCO) and 6-digit (KZiS) occupation codes, employing both the bottom-up and top-down approaches. The figures illustrate the results for the complete and sampled hand-coded test data sets.

Results for the multilingual model for 1-digit, 4-digits, and 6-digit codes by the approach (bottom-up and top-down) for hand-coded test data (whole and sampled). Note

As expected, the highest classification was observed for English (translated with Google Translate, the most prevalent language in the XLM-RoBERTa dataset) and Polish (original), with a near 60% accuracy at 6-digit codes and a near 80% accuracy at 1-digit codes. The top-down method yielded improvements in classification for 67% of languages at the 1-digit level and 20% at the ISCO level. The top-down approach yielded an average improvement of 0.3 p.p. at the 1-digit code level, while exhibiting a decline of −0.3 p.p. at the 4-digit code level. Notably, this approach consistently enhanced classification outcomes for Polish and English languages, suggesting that the impact on other languages may be diminished due to potential discrepancies in translation from English to other languages via the Argos library. Moreover, the degree of accuracy at the 6-digit level exhibits a notable disparity, with a near 60% success rate for English and a figure approaching 50% for Irish. This trend persists at the ISCO level, while the variance at the 1-digit level is less pronounced.

The results for the sampled part of the test data diverge significantly from the results for the entire dataset. For instance, the French result for 6-digit codes was approximately 40%, while the overall test data yielded a figure exceeding 55%. Conversely, the Polish and English results exhibited a reversed pattern, with values of over 80% and 90%, respectively, in comparison to approximately 80% for both.

A modest correlation was observed between the accuracy of the XLM-Roberta model and the coverage of languages. This is evidenced by the Pearson’s and Spearman’s correlation coefficients between accuracy and the share of tokens, which were .26 and .17, respectively. The highest Pearson’s and Spearman’s correlation coefficients were observed for the share of cases in the test data (.37 and .22, respectively). The detailed results for this classifier are presented in the Supplementary Materials.

8. Discussion

In this paper, we propose a classifier and conditional probability estimator of occupation codes for job vacancy statistics that takes into account the hierarchical structure of the occupation classification. Occupations as proxies of job-related skills seem to be especially important in explaining the technological, demographic, and social transformation in the labor markets. The proposed classifier can be used for automated occupational coding by official statistics or labor market institutions that collect unstructured information about labor demand and formulate policies. To our knowledge this is the first publicly available model for twenty-four languages along with detailed description on the data and predictions quality.

The paper highlighted the importance of data quality as an input to the training and testing models. We have shown that the hand-coded and administrative data can introduce additional uncertainty due to misclassification of labels and hierarchical classification. This process should certainly be included in the National Statistical Quality Assurance Frameworks and the European Statistics Code of Practice in order to progressively include indicators on the quality of training and testing data used for official statistics.

Our approach can be used with information-abundant online and administrative data to enrich survey-based job vacancy data. This is especially important nowadays, when survey research faces the problems of non-response and under-reporting. The use of online job advertisements is the main job search method and often also recruitment. They contain plenty of unstructured information. While statistical offices lack methods to classify such text, we provide one. Our approach is dedicated to job offers, and our procedure shows how to incorporate country-specificity on the example of Poland.

We use the Polish Classification of occupations and specializations, which is six digits, going one level further than the international ISCO classification, and is similar to the ESCO classification. We compare models trained on English and Polish languages and show the limitations of applying generic methods based on English language. We find that the country-specific model performs better than the multilingual one. This confirms the need for language-specific models, as it outperformed the generic ones that were only prepared for our task, and not trained with Polish language. However, there were examples of occupations (e.g., managers) that were well classified in English. This might result from the fact that some occupational titles have English origin and are often used in English form in job advertisements. This may be an example for other language, and institution-specific countries.

In this paper, our focus is on the Polish KZiS and ISCO. However, it should be noted that other classifications may be of interest to users, including O*NET, ESCO, and SOC. One potential avenue for investigation is the utilization of publications that align with one another in terms of their respective classifications. For example, Eurostat has published a report entitled “The crosswalk between ESCO and O*NET” (see https://esco.ec.europa.eu/en/about-esco/data-science-and-esco/crosswalk-between-esco-and-onet), which may be used to align our predictions for ISCO with the O*NET classification system. An alternative approach may be to draw upon the work of Stęchły and Woźniak (2023), who establishes a link between the Polish KZiS and ESCO classifications. Ultimately, researchers may utilize the pre-trained models presented here and develop their own classifiers.

Supplemental Material

sj-pdf-1-jof-10.1177_0282423X251395400 – Supplemental material for Multilingual Hierarchical Classification of Job Advertisements for Job Vacancy Statistics

Supplemental material, sj-pdf-1-jof-10.1177_0282423X251395400 for Multilingual Hierarchical Classification of Job Advertisements for Job Vacancy Statistics by Maciej Beręsewicz, Marek Wydmuch, Herman Cherniaiev and Robert Pater in Journal of Official Statistics

Footnotes

Acknowledgements

We would like to thank Natalie Shlomo and Piotr Chlebicki for their valuable feedback on the previous draft of this paper.

Author Contributions

Marek Wydmuch and Maciej Beręsewicz contributed equally to the paper. Marek Wydmuch was responsible for developing and coding the classifier, Maciej Beręsewicz was responsible for preparing training and testing datasets, computation, preparation of the manuscript, and assessing the results. Herman Cherniaiev collected and initially processed the data on job offers. Robert Pater reviewed the literature and provided the economic and the educational context of research.

Information on Software and Models

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Cherniaiev Herman, Pater Robert, Wydmuch Marek work and research were conducted in the Educational Research Institute—National Research Institute (IBE-PIB) in Warsaw, and funded by the Polish Ministry of Education and Science within a project

Supplemental Material

Supplemental material for this article is available online.

Received: November 5, 2024

Accepted: October 21, 2025

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.