Abstract

Introduction

With the development of the economy, an increasing amount of time-to-event data is being observed and recorded, and the analysis of such data is widely applied in various fields, including medicine research, financial studies, and social science investigation. In biomedical research, time-to-event data are also known as survival data, and the analysis of time-to-event data is referred to as survival analysis. Survival analysis investigates the relationship between certain characteristics of study subjects (e.g. patients) and the time until an event occurs, such as death of the study subject, failure time of certain experiments or recovery. A common model for analyzing such data in statistical research is the hazard model, which associates the risk of an event (usually the onset of a disease or death) with one or more covariates related to that event. The Cox proportional hazards model is a very common type of hazard model and a popular model for analyzing time-to-event data with censored data and covariates. 1

An important assumption of the Cox proportional hazards model is that the hazard remains constant over time. For example, taking a specific drug may halve a person’s possibility of getting a disease, or changing the specific material of certain manufacturing components may triple the possibility of their failure. However, in real-life application, this assumption of constant hazard may not be realistic. In clinical medicine, such non-constant hazard phenomena are quite common. For example, when studying the occurrence of mild cognitive impairment (MCI) related to time to death, the presence of MCI among participants may change over time. One explanation is the existence of covariates that change over time, which are referred to as time-varying covariates. Leemis et al. 2 proposed an algorithm for generating lifetimes when survival models include time-varying covariates, primarily based on inverting the cumulative hazard function to generate survival times. Austin 3 studied the data generation process for the Cox proportional hazards model with time-varying covariates under exponential, Weibull, or Gompertz distributions. Yan and Huang 4 proposed an adaptive lasso method for variable selection in Cox models with time-varying covariates. Ngwa et al. 5 used the Lambert W function to generate survival time data for the Cox proportional hazards model with time-varying covariates that follow exponential or Weibull distributions, and demonstrated that reliable and robust estimation can be performed through simulation studies. Cygu et al. 6 studied the penalized Cox proportional hazards model in the presence of a large number of time-varying covariates and proposed a variant of the gradient descent algorithm to fit the penalized Cox model.

Another extension of the Cox proportional model is the expansion of coefficients, transitioning from originally fixed coefficients to time-varying coefficients. In this case, it is generally assumed that the varying coefficients depend on certain covariates, such as scores for disease severity. Typically, the variation of coefficients is related to the variation of time

Functional data analysis (FDA) has become increasingly popular in many fields, such as medical research, magnetic resonance imaging (MRI), meteorology and public health. The most common method for modeling functional data is the functional linear model (FLM). There are many articles about the extension of functional linear models. Shin 14 introduced the partially functional linear regression model (PFLRM). Peng et al. 15 extended the partially functional linear model and proposed the varying coefficient partially functional linear regression model (VCPFLM) based on Shin 14 . Wang et al. 16 proposed the functional partially varying coefficient zero-inflated model (FPVCZIM). When modeling Cox hazard models, the covariates related to the interesting event include various types, commonly binary and numerical types, which are relatively simple to model. As data complexity increases, functional data is also incorporated into survival analysis models. Modeling functional data is more complex than modeling general numerical data. Some scholars have combined functional data with Cox models for modeling. Lee et al. 17 proposed a Bayesian functional linear Cox regression model (BFLCRM) with functional and scalar covariates. Kong et al. 18 proposed a functional linear Cox regression model to describe the association between event occurrence time data and a set of functional and scalar predictor variables, and developed an algorithm to calculate the maximum approximate partial likelihood estimation of unknown finite and infinite-dimensional parameters. In the final real data analysis, they showed that high-dimensional hippocampal surface data may be an important marker for predicting the time to conversion to Alzheimer’s disease.

According to our knowledge, there are few articles that combined Cox hazard models with functional data, and those articles only considered modeling with functional covariates and fixed coefficients. However, in real life applications, varying coefficients are more common than fixed coefficients. In this article, we therefore propose the so-called functional varying-coefficient Cox (FVC-Cox) model, which extends the analysis of Cox hazard models to a framework that simultaneously incorporates both varying and fixed coefficients, as well as functional data. This extension can be better adapted to practical applications, and is more interpretive and flexible. In this model, functional principal components (FPCs) are used to approximate the functional covariates, B-spline methods are used to approximate the varying coefficient covariates. The performance of the proposed method is evaluated through simulation studies. A real application using Alzheimer’s disease neuroimaging initiative (ADNI) data is utilized to illustrate the practicality of the proposed model.

Functional varying-coefficient Cox (FVC-Cox) model and estimation

Functional varying-coefficient Cox (FVC-Cox) model

Assume that

For functional data, we assume that the response variable

Model (1) is commonly referred as the functional linear model (FLM), where

Kong et al. (2018) proposed the functional linear Cox regression model (FLCRM) which introduced functional data into the Cox regression model to describe the relationship between survival outcome and a set of finite-dimensional and infinite-dimensional predictors. The corresponding hazard model is defined as follows:

In this model, the hazard function is related to the functional covariate

As

According to Mercer’s Theorem, we have

It is noteworthy that the sequence

Similarly, we can obtain the decomposition:

Substituting Equation (3) into Equation (2) yields:

The term

The effectiveness of this approximation depends on whether the first

For the varying coefficient part, local polynomials or B-splines are commonly used for approximation. Since B-spline approximation is global and computationally efficient and fewer parameters need to be estimated, we adopt B-spline approximation for the varying coefficient part in this paper. Let

We outline the estimation procedure for the parameters in the model as follows:

Step 1: Using B-splines to smooth the function

Step 2: Using FPC Analysis to decompose the functional sequence

For selecting the value of

Step 3: Substituting the approximated results from Steps 1 and 2 into the proposed FVC-Cox Model. The parameter

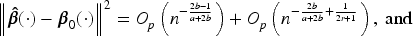

In this section, we will study some properties of the estimators. Given

We consider the following conditions (i.e. (A1)–(A5)) on the considered survival data. Here, Conditions (A1)–(A4) can be regarded as a direct extension of some standard conditions in the literature.

(A1)

(A2) Let

(A3) The functions

(A4) The matrix

(A5) For any

(A6)

(A7)

(A8) For all

(A9) For

(A10) The truncation number

(A11) For all

(A12) Varying coefficient

(A13) The number of knots

(A14) For

Here, (A6)–(A10)are very common in the functional linear regression models.20,21 . (A6) prevents the spacing between eigenvalues from being too small to identify

Simulation studies

Model setting

In this section, simulation studies are conducted to study the performance of the FVC-Cox model. First, we assume that the data are coming from the following model:

Analysis of simulation results

In this section, we consider scenarios with sample sizes of 500, 1000, and 2000, and simultaneously evaluates the proposed model under censoring rates of 0.1, 0.3, and 0.5. For all nine scenarios, we assess the performance of the model and estimators based on the root of average squared error (RASE) and integrated bias (IBIAS), which are defined as follows:

Table 1 shows the corresponding simulation results for the nine scenarios. As expected, all RASEs and IBIASs increase with censoring rate and decrease with sample size. Figure 1 plots the true and estimated values of the varying coefficient part at three different quantiles when the sample size is 500. According to Figure 1, there are insignificant discrepancies between the true values and the estimated values of the varying coefficients obtained from our proposed method. These results suggest that our model and estimation method are highly effective for estimating Cox models with varying coefficients.

RASE and IBIAS for sample sizes of 500, 1000, and 2000 at censoring rates of 0.1, 0.3, and 0.5 (SZ: sample size; CR: censoring rate).

RASE and IBIAS for sample sizes of 500, 1000, and 2000 at censoring rates of 0.1, 0.3, and 0.5 (SZ: sample size; CR: censoring rate).

The true and estimated values of the varying coefficient part at three different quantiles, sample size is 500.

When the data is under the same model setting, a comparison can be made between the results of simulations with and without varying coefficients. To save space, only the results under fixed coefficient settings with censoring rates of 0.1, 0.3, and 0.5, and sample sizes of 500, 1000, and 2000 are presented in Table 2.

RASE and IBIAS for fixed coefficient settings at censoring rates of 0.1, 0.3, and 0.5, and sample sizes of 500, 1000, and 2000 (SZ: sample size; CR: censoring rate).

RASE and IBIAS for fixed coefficient settings at censoring rates of 0.1, 0.3, and 0.5, and sample sizes of 500, 1000, and 2000 (SZ: sample size; CR: censoring rate).

According to Table 2, it can be seen that when the varying coefficient part of the model is replaced with fixed coefficients, there is a significant difference between the simulated results and the true results. Compared to Table 1, the RASEs and IBIASs in Table 2 become much larger. These results clearly suggest that for varying coefficient functional data, the original functional Cox model cannot provide a good fit and estimation of the data. The FVC-Cox model proposed in this paper, however, can not only encompass both functional covariate effects and varying coefficient effects but also perform parameter estimation more effectively than the functional Cox model.

The data used in this paper were obtained from the ADNI database (https://adni.loni.usc.edu/). The primary objective of ADNI is to test whether a combination of serial magnetic resonance imaging (MRI) and other biomarkers can be used to measure the progression of mild cognitive impairment (MCI) and early Alzheimer’s disease (AD) since early and more accurate diagnosis of AD is considered an important therapeutic measure. This is the goal of researchers because therapeutic interventions are more likely to be beneficial during the early stages of the disease. At the same time, identifying sensitive and specific markers of early AD progression aims to help researchers and clinicians develop new treatments, monitor their effectiveness, and reduce the time and cost of clinical trials.

The hippocampus is one of the key brain regions affected by AD. The data set contains clinical and imaging measurements from 373 MCI individuals in ADNI, using them to predict the time from MCI conversion to AD. Among the 373 MCI individuals, 161 MCI individuals progressed to AD before the study was completed, while the remaining 212 individuals did not convert to AD by the end of the study. Therefore, the time from MCI to AD conversion can be regarded as time-to-event data,and the outcomes are interval censored.

In this paper, we use the proposed FVC-Cox model to fit the ADNI dataset. Here, the covariates include gender (1

Here, the interaction between ADAS-Cog score and age was considered, meaning that the coefficient of ADAS-Cog score is a function of age, denoted as

The variation of the varying coefficients

Functional coefficients

Estimated values of the linear component

From Figure 2, the analysis results show that the coefficient of the ADAS-Cog score is a non-linear curve. For data with varying coefficients, using only fixed coefficients Cox analysis can lead to biased coefficient estimates and other issues. Therefore, the FVC-Cox model proposed in this paper is appropriate. Table 3 reports the parameter estimates and their corresponding standard errors. It can be seen that ADAS-Cog score is a significant factor, which is consistent with the conclusions from Kong et al. 18 This result suggests that the ADAS-Cog score provides a good predictive power for the conversion from MCI to AD.

As data become more complex, the demand for model flexibility becomes higher. The functional Cox model incorporates functional data into the Cox model, increasing model flexibility and expanding the scope of application for functional data. Building on the functional Cox model, we further incorporate varying coefficients, which further enhances the flexibility of the survival model. Analysis of simulated data shows that this model performs better in scenarios where both functional data and varying coefficients exist at the same time than the functional Cox model. Our proposed model and estimation method also perform well in the real data analysis.