Abstract

Introduction

Algorithms permeate decision making at each intercept of the criminal justice system. In turn, the algorithm used at one intercept produces a data point that informs an algorithm at the next intercept. The Pennsylvania Additive Classification Tool (PACT) is an algorithm used by the Pennsylvania Department of Corrections (PADOC) during prisoner intake and recalculated annually. This carceral algorithm was implemented thirty years ago, in 1991, to generate a raw score that determines a person's custody level. Custody level dictates what type of prison a person is assigned to (in Pennsylvania this ranges from minimum to maximum security housing of prisoners). The numerical score the PACT calculates equates to a custody level within the prison. Scholars have focused on algorithms used during sentencing, bail, and parole, but little work explores the algorithms that are used

As we demonstrate, algorithms and data co-produce one another. The PACT also represents a data point that informs other predictive algorithms used at other links in the system. The purpose of the PACT is not to determine parole decisions, but PACT scores determine one's life in prison. Consequently, the PACT is not only a variable used in parole algorithms “upstream”; it also enacts the future data points of the incarcerated person. We thus find it necessary to consider algorithms and data in dialectical interplay.

In what follows, we first qualitatively analyze the PACT tool to consider the institutional goals that are embedded in its calculation. Since neither the algorithm nor the data it draws from are publicly available, this qualitative analysis of oral histories and policy documents is essential to demonstrate the competing and, even, antithetical goals of the PACT. Second, we analyze the underlying data that informs the score using an otherwise unavailable data set provided to us by the Pennsylvania Department of Corrections (PADOC) 1 .

Since this algorithm is used only for incarcerated people, the PADOC collects the data, owns the algorithm, and makes decisions based on the data, and yet the data is still incomplete and of low quality. Using this data set, we find that the algorithm, despite the relatively closed nature of the data set, draws from incomplete and missing data to produce its output. The underlying data is only the beginning of the fallibility. Third, we use that data set to analyze the PACT calculations generated by the PADOC. If the policy promises of a “smart,” data-driven approach to corrections were true, then the increased reliance on sophisticated classification tools such as the PACT should quell disparate outcomes in the PADOC. To date, we find that it has not. Instead, through an analysis of the descriptive statistics of the data set, we argue that the PACT enacts and cements the lives of incarcerated people. Our mixed-method audit of this “black box” algorithm and its emphasis on what happens to people while they are incarcerated demonstrates the longer history of this type of encoding.

Literature review

The relationship between data and algorithms in criminal justice

In order to understand the power and role of algorithms in society, it is necessary to understand how they are generated by data and how they generate data. An algorithm embedded in the carceral experience is an ideal case from which to gain this vantage point. Predictive algorithms are historically rooted in the century-long scientific rationalization of criminal justice. Sociologist Ernest W. Burgess, as early as 1928, asserted that predictability was feasible and, by 1935, parole prediction instruments were being used in the US (Harcourt, 2006). In the past 30 years or so, computational tools have cemented the actuarial method into criminal justice decisions, and algorithms have been embraced as “a more efficient, rational, and wealth-maximizing tool to allocate scarce law enforcement resources” (Harcourt, 2006: 2). Data driven, “smart on crime” reforms almost always involve the “incorporation of dynamic risk and needs assessment into justice processes” (Schrantz et al., 2018). As the smart on crime approach has grown in popularity, it has been easy to lose sight of the longer history of actuarial methods in criminal justice. Further, criminal justice algorithms that digitize the actuarial approach play a pivotal role in the rise of predictive methods across society (Wang, 2018). Even though predictive modeling has long been used in the criminal justice system, the system remains replete with bias against the poor and people of color at every node, from arrest (Beckett et al., 2006) to sentencing (Starr, 2014) to parole (Winerip et al., 2016).

Although the actuarial approach cemented by algorithms is concurrent with a justice system proven to be overwhelmingly ineffective and replete with bias, there remains a deep underlying faith among practitioners and policy makers that dynamic models can reduce costs, quell human bias, and maintain public safety (Braga, 2017; Ramachandran, 2017). Reliance on data driven algorithms by policy makers continues and their implementation is presumed to make the criminal justice system more efficacious and more just, inherently (Harcourt, 2006; Završnik, 2019). Yet, structural inequity remains. In our analysis of the PACT, we reveal how the algorithm itself enacts, cements, and entrenches bias through its dialectical relationship to data. The PACT offers this vantage point precisely because of this situatedness. To date, there is a great deal of attention to algorithms in criminal justice, but this scholarship primarily attends to sentencing and parole. There is hardly any study of algorithms and data that iteratively produce and control a person's experience

Our attention to the PACT algorithm as productive of data requires a mixed method approach along the lines of an algorithm audit (Brown et al.) that considers the embedded history of the tool in consultation with quantitative understandings of both the data it uses and the data it generates. This mixed methods approach relies on the scholarship in critical data studies that situates (big) data in time and place (Dalton and Thatcher, 2014). Dourish and Gómez Cruz (2018), attuned to the social and historical nature of data, call for increased attention to the narration of data. Algorithms are often produced by data, and they in turn create data. Carceral algorithms create data in two important ways. First, they generate additional data points via their output. Second, as the PACT exemplifies, they determine someone's future position and thus structure the human behavior that will be further captured as data. Building on this point in her investigation of carceral capitalism, Jackie Wang cautions, “predictions…

Further, data is not an objective recording of that mistake; it is replete with the biases and priorities of the system that recorded the data point. Algorithmic improvements are “sold as morally superior because they purport to rise above human bias, even though they could not exist without data produced through histories of exclusion and discrimination” (Benjamin, 2019: 10). The historical and situational context of the data that informs the PACT reveals that the tool is rife with systemic biases inherited from the data upon which it relies. This creates what is functionally a feedback loop in which predictive tools enact the future data set and then “enshrine bias because they use datasets that are themselves tarnished by racial bias” (Wang, 2018: 50). Such scholarship demonstrates the propensity of data-driven criminal justice to reinforce pervasive inequities rather than alleviate them. Scholars identify new algorithmic technologies in myriad systems that, upon closer investigation, “reflect and reproduce existing inequalities,” even though they “are promoted and perceived as more objective or progressive than the discriminatory systems of the previous era” (Benjamin, 2019: 5–6). While a great deal of work examines bias within the algorithm, little of this work examines carceral algorithms and the underlying data that informs them (O’Neil, 2016). However, a

Racial bias is far from the only issue with criminal justice algorithms. They are “black box” algorithms that embed some priorities over others. We not only look at the data and PACT calculations; we also examine the values embedded in the PACT through qualitative analysis of publicly available oral histories with corrections officials and policy documents. Some scholarship signals the underlying values, but little explicitly examines them (Harcourt, 2006; Green, 2020). Ironically, at the same time, some scholars note that data that could be used to evaluate the effectiveness of algorithms and implement meaningful reforms does not exist (Christin et al., 2015). This understanding frames additional study on the algorithmic calculations of the PACT (Dhar et al., 2021, 2022).

In this paper, we examine the PACT calculations in the data we requested from the PADOC, not the algorithm itself. 2 Our analysis examines the underlying data and the custody levels assigned to incarcerated people. In our examination of the PACT, we build on research that establishes the problems with criminal justice algorithms by focusing specifically on the PADOC data and their calculations. Many scholars have examined biased outcomes in criminal justice algorithms. Software used across the United States to predict criminal behavior is shown to be biased against Black people (Angwin et al., 2016). Despite such well documented cases of racial bias specific to criminal justice, predictive algorithms continue to play a central role in nearly all the nodes of the US criminal justice system. Christin et al. (2015) note, “Instruments such as LSI-R and COMPAS are used for many purposes, including the security classification of prison incarcerated persons but also their eligibility for parole and levels of probation and parole supervision.” We contend that the underlying data and the data generated by the algorithm also warrant deeper analysis.

Data issues underlying criminal justice algorithms

Attention to the iterative nature of data additionally necessitates an exploration of data quality, completeness, and accuracy. The aforementioned literature explores the social life of data, but criminal justice data sets are rife not only with biased data but also with incorrect data. In other words, the data is not only biased but also fallible. Logan and Ferguson (2016) highlight how fallible data in the hands of government decision-makers has negative impacts on individuals (loss of employment, unlawful arrests, etc.) and on the government systems themselves (loss of trust from the governed, inefficiencies in the system, etc.). In fact, according to a 2001 report from the Bureau of Justice Statistics, “[i]n the view of most experts, inadequacies in the accuracy and completeness of criminal history records is the single most serious deficiency affecting the Nation's criminal history record information systems” (Belair et al., 2001). In this project, we seek to explore the specificities of this concern in the context of the PADOC and how it informs the PACT.

Ferguson (2017) discusses a framework for data quality in predictive policing that we apply to understand the PACT and PADOC data. He distinguishes between errors that result from human error and errors that arise because data is fragmented and biased. In any large scale data set generated by human activities and collected and catalogued by humans, there is ample opportunity for something that was otherwise accurate to be entered incorrectly. This results in things like illogical birthdates, incorrect addresses, and other such mistakes. The quality of criminal history records, a key component of prison decision-making, is also susceptible to human error (Ferguson, 2017). States have a backlog of unprocessed disposition forms, rap sheets have been shown to contain incorrect information, and gang and sex offender registries have been shown to be error-prone in addition to being biased (Ferguson, 2017).

Ferguson (2017) also identifies methodological concerns in the way complex statistical tools are used in the decision-making process. Namely, he identifies internal validity, external validity, and error rates as three important concerns. Internal validity is the extent to which a piece of evidence supports a claim of cause and effect. Threats to internal validity such as history, changes in instrumentation, and selection bias are all present in the context of data-centric criminal justice. External validity is the extent to which conclusions reached in one context can be applied to other contexts. Additionally, criminal justice data is fragmented because it is collected by a variety of organizations at a variety of levels (state, local, country). Due to the fragmented nature of criminal justice data, there is a great deal of overgeneralization. Conclusions reached in one context (the current governor of the state, the current director of the department of corrections, the current national attitude towards the carceral system) may not hold in another. Before asserting that an algorithm is biased, it is first necessary to establish the veracity of the underlying data. While research examines this in criminal justice writ large, we use descriptive statistics to focus explicitly on these issues as they arise during a person's period of incarceration.

Case study: The PACT

The PACT's implementation exemplifies Pennsylvania's position as a vanguard of national prison reform. Home to the first modern penitentiary, Eastern State Penitentiary in Philadelphia, Pennsylvania has long been a national leader in correctional reforms and innovations. When the state introduced the PACT in 1991, the PADOC was celebrated by policy makers as a leader in nationwide efforts to create “objective” prisoner classification systems (NIC, 2015). The Justice Reinvestment Reform Initiatives of the past decade derive from the same techno-moralism that led to the implementation of the PACT. Given its ongoing positioning as a national model, the PACT is an important algorithm to study in order to understand the role of algorithms in criminal justice reforms nationally. Further, the PACT is exclusively for incarcerated people. It is used to make decisions within a “closed” system, meaning it is theoretically an ideal system for a complete data set.

The PACT, as indicated in Figure 1, is a confidential algorithm. In other papers (Dhar et al., 2021, 2022), we examine the algorithm, but in this paper we look at the PADOC's constitution of the tool by reviewing its documents, the underlying data, and descriptive statistics. Since the algorithm is not publicly available, we review policy documents and oral histories to understand the institutional priorities and goals that shape the tool. By then looking at the PADOC data obtained through our request for data related to parole outcomes, we are able to evaluate how effective the tool is at achieving those goals. Finally, we analyze the assigned custody levels at intake and reclassification, as well as overrides. We find that these exhibit a variety of racial disparities.

Figure 1. Redacted section of the reception and classification manual that contains the PACT algorithm.

The PACT in historical context

The PACT is historically situated in the rising need to manage burgeoning prison populations and securitize prisons. It was implemented as part of a sweeping set of reforms in response to an uprising in the Camp Hill State Correctional Institution that began on October 25, 1989. This disruption, now known as the Camp Hill Riots 3 , lasted for three days. When the literal dust settled, “more than 100 people were injured including 69 corrections officers and 41 incarcerated persons” (Kiner, 2018). It resulted in over $50 million of damage to the facility. Over the next year, additional disruptions happened in several other prisons across the state (Stanford, 2005). These disruptions, of which Camp Hill is the most well-known, are understood to be the result of extremely overcrowded institutions. The PADOC was not able to keep pace with the growth of the prison population in the 1980s. This growth had reached a tipping point when the uprising began. This incident played a notable role in reshaping PADOC policy, in part because Camp Hill also houses the central offices of the Department of Corrections. It was therefore witnessed by decision makers who otherwise would not have had day to day experiences within the walls of the prison.

In 2019, to commemorate the 30th anniversary of the Camp Hill Riots, the Pennsylvania Department of Corrections Communications Director, Susan McNaughton, conducted 43 oral history interviews with people who were PADOC employees during the riots 4 . As seen in Figure 2, an image from the commemoration page, the commemoration draws a direct line between the implementation of the PACT and the riots. The list underscores the significance of the Camp Hill Riots for shaping the PADOC, and the oral histories give a unique window into the historical context that underlies the implementation of the PACT. This compendium, to its credit, reflects the data-informed, reflexive tendencies of the PADOC in its willingness to recall and review the incident. Such tendencies have made the PADOC a leader in corrections across the country. At the same time, it helps deepen an understanding of the priorities in the PACT, which is essential for a “black box” algorithm.

Figure 2. Changes since the riot (Department of Corrections, 2019).

The commemoration implies the PADOC is now “fixed” because it is well equipped to manage its much larger population. Part of the “fix” is the PACT, which, according to the PADOC, is a direct response to the Camp Hill Riots. What is “fixed” from the perspective of the PADOC? Notably, it is considered beyond the purview of the PADOC to question the population size constructed by legislatures. Rather, the development of new classification tools to manage and classify incarcerated people is the solution to an overcrowded, unsecured institution. The oral histories highlight the gaps in control and other systemic deficiencies that motivated the implementation of the PACT. Our analysis specifically identifies security and technological innovation as values that emerge from the riots and guide subsequent reforms like the PACT. The lived experience of the riot for those at the PADOC central office had indelible impacts on their own understanding of the prison and the expanding incarcerated population. Many of the PADOC personnel interviewed who witnessed or experienced the riot recollect traumatic scenes that still impact them thirty years later. When the riot happened, Lee Ann Labecki had recently finished her internship and was working as a program analyst at PADOC. She exemplifies the lingering intensity of the experience for corrections staff and decision makers: “I just was only a 26-year-old kid at the time, but that moment is seared in my brain. When I think back to the riot… just that… the incarcerated person swinging that shovel and then laughing. I don’t think I’ll ever get rid of that picture in my mind.” - Lee Ann Labecki (2019), October 3, 2019, Pennsylvania Department of Corrections Employee Oral History Collection Project

Labecki went on to direct the Planning, Research and Statistics Office, and her oral history highlights the pressures created by the rapid growth in the prison population. In reflecting on what caused the Camp Hill Riots, she explains the fundamental problem of overcrowding in prisons across the state: “I remember in the months prior to the riot, in central office, we had been preparing a number of planning documents on the level of overcrowding in the system generally. And certain institutions in particular, one of them being Camp Hill, because the people that were long-termers kept saying, “It's not a matter of if. It's a matter of when.”…We had some institutions, like Camp Hill and others, that were at 145% of design capacity and rated capacity we were up to 180… and they were putting beds everywhere… in dorm rooms… in gymnasiums. [We] were developing plans with other departmental staff on how to address crowding. We’re sending things over to the Governor's Office to make them aware.” - Lee Ann Labecki, October 3, 2019, Pennsylvania Department of Corrections Employee Oral History Collection Project

For the most part, correctional personnel focus on the loss of control that this overpopulation generates. The oral histories note the inability to deal with overpopulation given the lack of resources and staffing and the limited ability to manage prisoners as a population. Our analysis of the oral history interviews reveals that the word “control” is one of the most used words in the corpus, ranking 44th and mentioned 185 times in the interviews. That count does not include the phrase “control center,” which was mentioned another 57 times. Even this phrase, which refers to the impromptu command center that was created in response over the three days, reveals the focus on the micro-spatial geographies of control and how the PADOC operated to contain the uprising. From the perspective of the PADOC, while overcrowding created deplorable conditions for incarcerated people, the crisis of the Camp Hill Riots is fundamentally due to the loss of control of the population. As one oral history describes it: “I’ll be honest… this is true for me for all three days… it kind of felt helpless. What can we do? There's nothing we can do but sit here and watch. At that point it was just corrections personnel, state police then, of course, came in force… other police, first responders. It was like they had to take control of the institution and there was nothing we could do.” - Dave Rudon, August 20, 2019, Pennsylvania Department of Corrections Employee Oral History Collection Project
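The word count described above can be illustrated with a minimal Python sketch. It is not the procedure used for the oral history corpus: the tokenizer, the function names, and the toy `sample` text are all assumptions introduced for illustration, and the transcripts themselves are not reproduced here. The sketch counts a target word, keeps the uses that form a two-word phrase (such as “control center”) in a separate tally, and reports the word's rank by raw frequency.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into word tokens (letters and apostrophes)."""
    return re.findall(r"[a-z']+", text.lower())


def count_word_excluding_phrase(tokens, word, following):
    """Return (standalone, phrase) counts for `word`.

    `phrase` counts the uses of `word` that are immediately followed by
    `following`; `standalone` counts every other use.
    """
    standalone = 0
    phrase = 0
    for i, tok in enumerate(tokens):
        if tok != word:
            continue
        if i + 1 < len(tokens) and tokens[i + 1] == following:
            phrase += 1
        else:
            standalone += 1
    return standalone, phrase


def frequency_rank(tokens, word):
    """Return the 1-based rank of `word` by raw frequency (ties share the best rank)."""
    counts = Counter(tokens)
    target = counts[word]
    return 1 + sum(1 for c in counts.values() if c > target)


# Toy stand-in for the interview transcripts (hypothetical text).
sample = (
    "We lost control of the block. The control center called the state police. "
    "They had to take control of the institution."
)
tokens = tokenize(sample)
standalone, phrase = count_word_excluding_phrase(tokens, "control", "center")
rank = frequency_rank(tokens, "control")
```

Note that `frequency_rank` here ranks on all occurrences of the word, including those inside the phrase, and applies no stop-word filtering. Whether the reported rank was computed before or after such exclusions is not stated in the text, so either choice is an assumption.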

In addition to the technological sophistication in prison construction, the PACT was implemented as an algorithmic method to streamline the intake and management of incarcerated people. It was essential that the PADOC become more efficient and predictive in classifying their population in order to retain control. Figure 2 first notes: “Elimination of the treatment versus security struggle by changing to a facility management and centralized services structure.” The riot precipitated a shift, in a Foucauldian sense, to the organized management of a prison population instead of the individual management of prisoners. This can be read as a fundamental shift in which prisoners become seen as a population to be managed and controlled.

While we do not know the details of how the PACT calculates custody levels, reviewing documentation by the PADOC leading up to the implementation of the PACT reveals its underlying orientation. The PACT extends predictive, actuarial methods to quickly and efficiently manage the larger population. It is a tool to regain control, prioritizing incapacitation and security. While rehabilitation may play a role in the considerations, without access to the tool itself, we have no way to know the weight placed on those variables. In Figure 3, we can see that only two nodes are related to programming and rehabilitation. Based on the historical context that preceded the implementation and our review of policy reports in the next section (Hardyman et al., 2004; PADOC, 2011; Raymond J. Smolsky v. Department of Corrections, 2011; Stanford, 2005), we assume they are not given much weight. Discursively, security and control are the fundamental concerns that drive reforms in the early 1990s and the PACT is part of this effort. In a report offering the PACT as a model for other jurisdictions, the NIC celebrates the techno-sophistication of the tool noting, “It was developed by an interdisciplinary team as a risk management tool for placing prisoners in the least restrictive custody while providing for the safety of the public, community, staff, other prisoners, and institution guests and visitors and for the orderly operation of the institution” (Hardyman et al., 2004: 37). This makes clear that the central purpose of the PACT is to systematize population management to maintain control and secure the prison. Goals such as rehabilitation, eliminating racial bias, and addressing community scale structural issues are not emphasized. Architectural design, programming, or variables that are oriented toward restoration and rehabilitation (Byrne, 2019; Fleetwood, 2020) likely would have also quelled unrest, but this is not the path pursued by the PADOC. The emphasis on population management and control is historically embedded in the algorithm.

Figure 3. A flowchart of the process to assign an incarcerated person their initial custody level. A similar process holds for the annual reclassification.

The PACT today

Still in use today, the PACT is meant to standardize the custody assignment process and to systematically sort prisoners based on a prediction of their institutional behavior. The process has four main steps: the PACT tool is applied and an initial score is derived (we do not know how this score is calculated or what ranges it can take on). That score, which reduces the infinite variables of a human life, highlighting their interactions with police and the courts, is then summarized as an incarcerated person's initial classification score, an integer from 1 to 5. That initial classification score can be overridden for administrative reasons and then a custody level score is determined. This custody level score determines a great deal about an incarcerated person's experience in the system. The flowchart in Figure 3 is color coded as follows: Grey boxes represent numbers whose calculation is either explicitly confidential or generally mysterious and obscure. Blue boxes represent data about an individual that are known to be biased (e.g. since Black men are known to be over-policed and get harsher sentences, we know there is a bias in those two inputs to the PACT tool). The green box corresponding to stability factors encompasses features that seem notably subjective and value laden as they include things like an incarcerated person's marital status, employment status before being incarcerated, etc. The resulting diagram suggests that the system is neither objective nor free from bias, but rather emphasizes a moralistic notion of stability.

The PACT number equates to a custody level where CL-1 is the least restrictive and CL-5 is the most restrictive (see Table 1). These custody levels have dramatic consequences for prisoners, as they determine their “housing, programming, and freedom of movement” within the prison and even which facility a person is located in (Hardyman et al., 2004: 37). The PACT thus follows a long actuarial tradition that deterministically structures the lives of incarcerated people (Harcourt, 2006). The report further discloses the way in which the PACT supposedly removes prison staff from the decision, naturalizing the PACT as a neutral reflection of the prisoners: “PACT was designed to be objective and behavior driven and to ensure that a prisoner's custody level is based on his/her compliance with institutional rules and regulations and participation in work, education, treatment, and vocational programming. Thus, in theory, compliance by the prisoner will facilitate his/her movement to less restrictive custody levels. PADOC discourages negative behavior by providing consequences for infractions, escapes, and nonparticipation in programs” (Hardyman et al., 2004: 37–38).

Table 1. Custody levels (compiled using Hardyman et al., 2004 and PADOC, 2011).

While qualitative analysis situating the PACT as a reaction to the Camp Hill Riots helps to understand the priorities embedded in the calculation, the public facing explanation of the PACT redacts the algorithm (see Figure 1 above and PADOC, 2011). It is thus difficult to fully analyze the extent and force of embedded bias. A major justification for this redaction is a concern that prisoners could game the algorithm (Raymond J. Smolsky v. Department of Corrections, 2011). This, of course, inadvertently reveals that the PACT is not as objective and free of human intervention as we are invited to assume. By intersecting existing research on areas of demonstrated bias in criminal justice with the data that informs the PACT, we highlight in Figure 3 all of the feeding variables and algorithms that have documented biases.

This vulnerability to manipulation along with consideration of the social and historical factors that led to the development and deployment of PACT indicate that the PACT is fallible and subjective. The use of overrides further highlights fallibility of the tool. The NIC report cited above notes the use of overrides that reclassify prisoners after calculating the PACT score and generating a custody level: Administrative overrides—based on the prisoner's legal status, current offense, and sentence—can change classification recommendations. Discretionary overrides by the case manager are permitted based on the prisoner's security threat group affiliation; escape history; nature of current offense; and behavior, mental health, medical, dental, and program needs. Information about cases for which discretionary overrides are recommended is forwarded electronically to the appropriate staff for approval. Multiple levels of review by classification supervisory staff and the central office are required for all overrides. (Hardyman et al., 2004)

While the primary focus of this paper is not algorithmic bias per se, explicit attention to priorities and lack thereof gives a window into why and how bias persists. While the state has a long tradition of actuarial policies and technological sophistication, reducing bias is not prioritized. The system remains rife with bias (Jasanoff, 2017; Kramer and Ulmer, 2008; Schwirtz et al., 2016). Algorithms are not likely to address problems that they were not expressly designed to address. Nevertheless, what emerges is an assumption by state officials that through increased efficiency, analysis with more data points, and models making decisions, the scourge of biases and injustices is inherently eliminated at a cost savings to taxpayers. The impetus to be “data driven” first implicates the use of data in creating and justifying reform policies. Data driven decisions help legislatures calculate savings and redirect resources in presumably more efficacious ways. The faith in an enhanced prison through data driven decision making has permeated the governing logic of the institution. Take, for example, the case of a concerned citizen in a town hall facilitated by the Pennsylvania Legislative Black Caucus. The citizen asserted to PADOC Secretary John Wetzel that incarcerated people were not receiving sufficient portions of food. Wetzel offered the following response: “The Department of Corrections’ Master Menu is analyzed using computer software. The DOC menu, as written, when served the standard portion sizes as indicated on the menu, meets the current Recommended Dietary Allowances and Dietary Reference Intakes, Males and Females, 18 to 50 years, as identified by the Food and Nutrition Board of the National Research Council. Every facility uses measured portion utensils, pre-portioned items, or scales to ensure the standard portion is served. Many people are not used to managing or limiting their food portions to those recommended for a healthy lifestyle. It is our hope that incarcerated persons can learn what healthy portions look like so they can in turn carry that forward once released” (Pennsylvania Legislative Black Caucus, 2018).

Given the rightness of the

Data driven methods and results

A hallmark of arguments in favor of “smart” tools in decision making is the claim that the tools are immune to human subjectivity, bias and error. As we note above, bias in criminal justice algorithms writ large is well established. However, attention to the PACT offers a unique window into how algorithms remain subjective and error riddled

Unreliable data for an unreliable calculation

To begin, we found the data to be both expansive and vacuous. The data we received from our request included 20 files, a data dictionary and codebook. Such information about the data collected on incarcerated people has not previously been published or analyzed. The data dictionary that accompanied the dataset contained information about all the variables the PADOC collects and collates, including the date each variable came into existence. This means the PADOC maintains even more data than the 7.24 gigabytes we received. The data, while all held by the PADOC, is highly fragmented. The PADOC collates things like gravity scores, programming needs, and even disciplinary reports. These are all input by different offices with different goals.

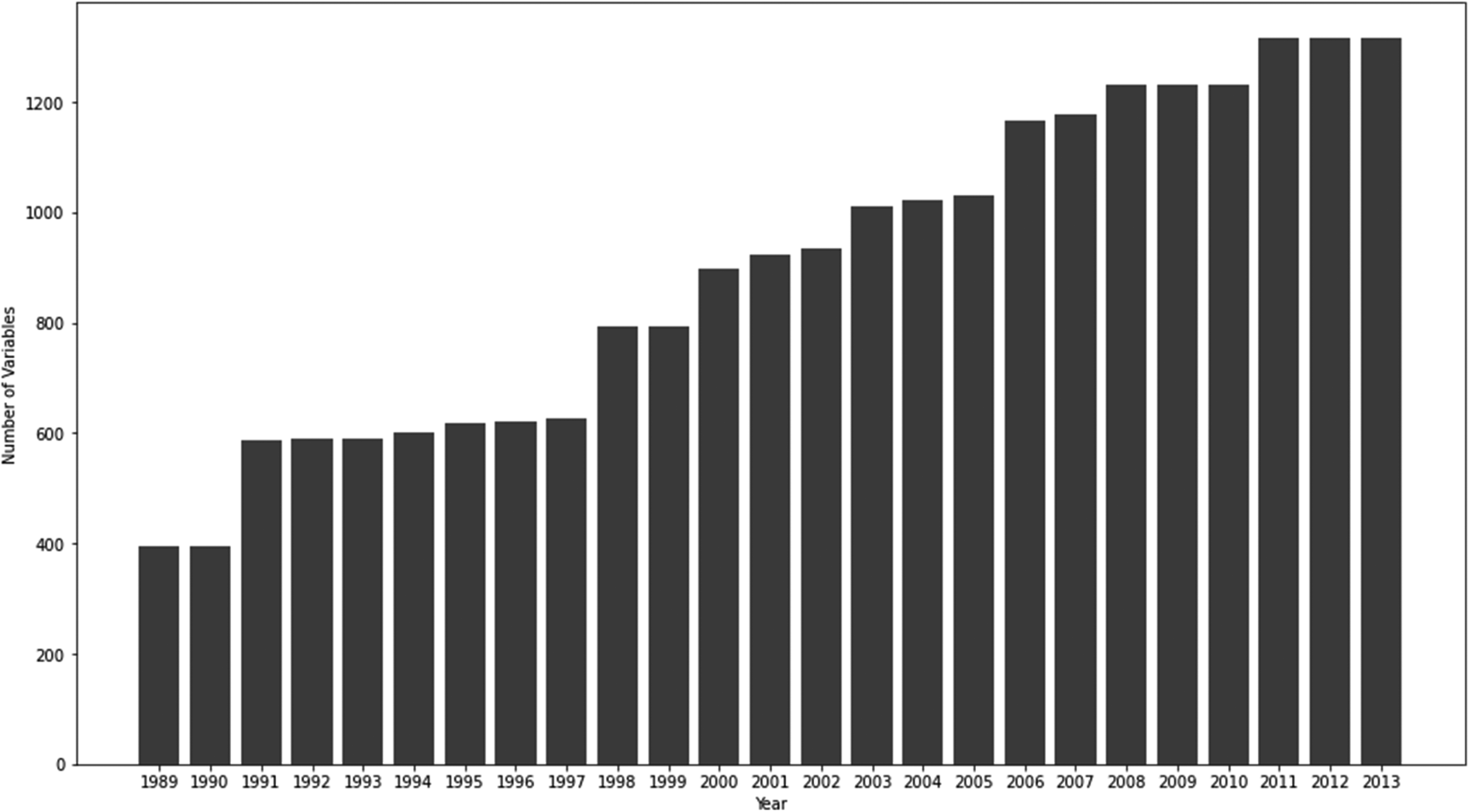

The PADOC collects details spanning almost the entirety of an incarcerated person's life in and outside the prison ranging from their date of birth to any notes recorded by their therapist during a treatment session. A good example of the reach of the data is the fact that, according to the data dictionary, the PADOC is collecting data about the criminal history of anyone who visits an incarcerated person. Figure 4 illustrates the growth in the number of variables starting in 1989, the year of the Camp Hill Riots. Currently the PADOC collects data for more than 1200 variables for each incarcerated person and the number of variables being collected increases over time.

Cumulative number of variables collected by the PADOC data set by year.

This increase over time equates to an increase in the amount of data. Figure 5 shows an increase in the sheer amount of data the PADOC is collecting system wide about the people it incarcerates. We measure the amount of data as the number of cells in the database that could be filled in, which is essentially the product of the number of incarcerated persons and the number of variables. Though a lot of variables are being collected about each incarcerated person, the data itself is incomplete. Figure 5 also illustrates that there are roughly as many empty cells as there are cells with values. In a real sense, about half of the possible data is missing. If we focus our attention on those variables that are used in making important decisions (e.g. custody level score, parole), there is a great deal of missing data there, too. In Figure 6 we see that the custody level score, the output of the PACT tool, is missing for a large number of incarcerated persons. The tool is supposed to be “smart” but it does not appear to record what it knows. It is not clear how the PACT is meeting even its own purported goals with so much missing data, nor why so many people are missing a score.

Total number of empty and non-empty cells over time.

Missingness of the initial custody level. The shaded areas are the proportion of cells that contain data.
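The cell-based measure of missingness described above can be illustrated with a minimal sketch. The records, the variable names, and the convention that `None`, a blank string, or an absent key counts as an empty cell are all hypothetical assumptions made for illustration; they do not reflect the PADOC schema or the procedure actually used for Figures 5 and 6.

```python
from typing import Any


def is_empty(value: Any) -> bool:
    """Treat None and blank strings as empty cells (an assumption)."""
    return value is None or (isinstance(value, str) and value.strip() == "")


def missingness(records: list[dict[str, Any]], variables: list[str]) -> dict[str, float]:
    """Count possible cells, empty cells, and the share of empty cells.

    Possible cells = number of persons x number of variables.
    A variable absent from a record is counted as an empty cell.
    """
    total = len(records) * len(variables)
    empty = sum(is_empty(rec.get(var)) for rec in records for var in variables)
    share = empty / total if total else 0.0
    return {"total": total, "empty": empty, "share_empty": share}


# Hypothetical toy data: 3 persons x 4 variables = 12 possible cells.
variables = ["birth_date", "marital_status", "custody_level", "gravity_score"]
records = [
    {"birth_date": "1980-01-01", "marital_status": "SIN",
     "custody_level": 3, "gravity_score": None},
    {"birth_date": "1975-06-30", "marital_status": "",
     "custody_level": None, "gravity_score": 7},
    {"birth_date": None, "marital_status": "MAR", "custody_level": None},
]

summary = missingness(records, variables)
print(summary)
```

Restricting `variables` to a single field, such as the hypothetical `custody_level`, gives the per-variable share of missing values of the kind plotted in Figure 6.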

In addition to these issues of missing data, there are also instances of illogical values. For example, a variable for maximum court sentence in months should range from 0 to 12, but some values are greater than 100. Marital status, one of the stability factors in Figure 3, should take on the values DIV, MAR, SEP, SIN, UNK, WID, but we found 20 different values, including clear typos like WED and WIS that are likely meant to be WID, and some values that are not defined anywhere. There are not many instances of each of these values, but they exist nonetheless. This problematizes the PACT calculation on its own terms. Despite such errors, the PADOC keeps a record of the quality of each variable in its data set, and, as shown in Figure 7, the vast majority are recorded as high quality. This signals an overconfidence in the PACT calculation itself inasmuch as there is overconfidence in the underlying data.

Quality of variables added to DOC: 5 is the highest quality and 1 is the lowest.
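The kind of value check that surfaces the illogical entries described above can be sketched as follows. The allowed marital status codes and the 0 to 12 range for the months variable come from the text; the field names `marital_status` and `max_sentence_months` and the toy records are hypothetical, and this is an illustration, not the audit code used for this paper.

```python
from collections import Counter

# Codes and range as stated in the text; field names below are hypothetical.
MARITAL_CODES = {"DIV", "MAR", "SEP", "SIN", "UNK", "WID"}
MONTHS_MIN, MONTHS_MAX = 0, 12


def audit_values(records):
    """Return undefined marital codes (with counts) and out-of-range months."""
    bad_codes = Counter()
    bad_months = []
    for rec in records:
        code = rec.get("marital_status")
        if code is not None and code not in MARITAL_CODES:
            bad_codes[code] += 1
        months = rec.get("max_sentence_months")
        if months is not None and not (MONTHS_MIN <= months <= MONTHS_MAX):
            bad_months.append(months)
    return bad_codes, bad_months


# Hypothetical toy records containing the kinds of errors described above.
records = [
    {"marital_status": "WID", "max_sentence_months": 6},
    {"marital_status": "WED", "max_sentence_months": 120},
    {"marital_status": "WIS", "max_sentence_months": 0},
    {"marital_status": "WED", "max_sentence_months": None},
    {"marital_status": None, "max_sentence_months": 12},
]

bad_codes, bad_months = audit_values(records)
print(dict(bad_codes), bad_months)
```

Missing values are deliberately skipped here so that invalid entries are counted separately from empty cells.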

Figure 8 summarizes the racial demographics, sex demographics, and age distribution of the people for whom we have data in each of the 6 years that we requested in the original data pull. Note that recently the population has trended younger, the ratio of the number of male incarcerated people to the number of female incarcerated people has remained stable, and the ratio of the number of Black incarcerated people to the number of White incarcerated people has changed from roughly 3:2 in 1997 to approximately 1:1 in 2017. This disparity remains in spite of Black people making up only about 11% of Pennsylvania's general population. Simply put, this table verifies the underlying skews of the state's criminal justice system.

Basic demographic data for incarcerated people in the system in the years 1997, 2002, 2007, 2012, 2017. Race and ethnicity categories are by first letter and correspond with Figures 10 and 11.

Unreliable calculations

The calculation of the PACT score is reliant on problematic data. It therefore also produces another unreliable data point that informs other algorithms in the system. The process outlined in Figure 3 results in one of five categories of custody level being assigned to an incarcerated person. Figure 9 shows the distributions of custody levels assigned to incarcerated persons during the initial classification, after overrides of the initial classification, during an annual reclassification, and after overrides of the reclassification. Reclassification scores tend to lower an incarcerated person's custody level but also simultaneously increase the range of custody levels. The initial classification distribution is somewhat symmetric. After the administrative overrides are completed, incarcerated persons tend to have a lower custody level and there is a wider range of custody levels. Although overrides are generally considered a best practice when using algorithms in criminal justice, their use means that algorithms do not remove human subjectivity. Further, the need for overrides reveals that the process is labor intensive, rudimentary, and poorly standardized.

Distribution of the output of the PACT and the override process for incarcerated people in the system in the years 1997, 2002, 2007, 2012, 2017.

The unreliability stems not only from the underlying data and overrides. Our analysis of the PACT scores calculated by the PADOC found that the score outcomes are racially disparate. For people incarcerated in 2017, we have the following descriptive statistics. The mean initial custody level for a Black person was 3.64 and for a Nonblack person it was 3.27; the mean institutional adjustment score (how this is determined is unclear) was 3.33 for a Black person and 2.95 for a Nonblack person. As for the reclassification process, the mean difference between initial custody level and reclassified custody level was −0.37 for a Black person and −0.07 for a Nonblack person (suggesting that Black people are probably being classified initially to too high a custody level), and the mean number of disciplinary reports was 2.23 for a Black person and 2.06 for a Nonblack person.
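To make the size of these differences explicit, the following minimal sketch computes a mean by group and then the gaps implied by the 2017 means reported above. The helper `mean_by_group`, the field names, and the toy records are hypothetical; only the values in `reported` come from the text, and the gaps are simple differences, not tests of statistical significance.

```python
from statistics import mean


def mean_by_group(records, group_key, value_key):
    """Mean of value_key per group, skipping records with a missing value."""
    groups = {}
    for rec in records:
        value = rec.get(value_key)
        if value is None:
            continue
        groups.setdefault(rec[group_key], []).append(value)
    return {group: mean(values) for group, values in groups.items()}


# Hypothetical toy records, only to show how group means are formed.
toy = [
    {"group": "Black", "initial_level": 4},
    {"group": "Black", "initial_level": 3},
    {"group": "Nonblack", "initial_level": 3},
    {"group": "Nonblack", "initial_level": 2},
    {"group": "Nonblack", "initial_level": None},  # skipped: missing score
]
toy_means = mean_by_group(toy, "group", "initial_level")

# 2017 means as reported in the text: (Black, Nonblack).
reported = {
    "initial_custody_level": (3.64, 3.27),
    "institutional_adjustment": (3.33, 2.95),
    "reclassification_change": (-0.37, -0.07),
    "disciplinary_reports": (2.23, 2.06),
}

# Gap = Black mean minus Nonblack mean, rounded to two decimals.
gaps = {name: round(black - nonblack, 2)
        for name, (black, nonblack) in reported.items()}
print(toy_means, gaps)
```

On the reported figures, the gap in mean initial custody level (0.37) is of similar magnitude to the larger average downward adjustment that Black incarcerated persons receive at reclassification (a gap of −0.30).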

As discussed above, “smart” tools are marketed as being able to remove or reduce biases in decision making processes. These tools have now had 30 years to achieve this goal. Collectively, Figures 10–11 and Tables 2–3 show that this goal has not been achieved. As we see in Figure 10 and Table 2, about 84% of White incarcerated persons have their custody level reduced by 1 or more points for administrative or discretionary reasons, whereas the same can be said for only 79% of Black incarcerated persons. While there may be a range of reasons for this that are not inherent to the weighting of the calculation, this discrepancy suggests, at minimum, that the PACT is reinforcing different treatment for the two groups. However, the fact that 13.3% of Black incarcerated persons have their custody level reduced by two points or more, while the same can be said for only 8.5% of White incarcerated persons, suggests that perhaps the PACT tool assigns scores that are “too high” to Black incarcerated persons.

Distribution by race of the difference of initial custody level and override custody level.

Distribution by race of the difference of reclassification custody level and override reclassification custody level.

Difference between initial custody level override and initial custody level by race.

Differences between reclassification custody level override and reclassification custody level by race.
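The tabulations behind these distributions can be sketched as follows: for each person, take the override custody level minus the calculated custody level, then compute, within each group, the share of people whose level was reduced by at least one or at least two points. The field names, the group labels "A" and "B", and the toy records are hypothetical; this is an illustrative sketch under those assumptions, not the code used to produce the figures and tables.

```python
from collections import Counter, defaultdict


def override_shares(records):
    """Share of each group whose override lowered the level by >=1 and >=2."""
    counts = defaultdict(Counter)
    for rec in records:
        # Negative difference = the override lowered the custody level.
        diff = rec["override_level"] - rec["calculated_level"]
        counts[rec["group"]][diff] += 1

    shares = {}
    for group, counter in counts.items():
        n = sum(counter.values())
        shares[group] = {
            "reduced_1_or_more": sum(c for d, c in counter.items() if d <= -1) / n,
            "reduced_2_or_more": sum(c for d, c in counter.items() if d <= -2) / n,
        }
    return shares


# Hypothetical toy records for two groups labeled "A" and "B".
records = [
    {"group": "A", "calculated_level": 4, "override_level": 3},
    {"group": "A", "calculated_level": 4, "override_level": 2},
    {"group": "A", "calculated_level": 3, "override_level": 3},
    {"group": "A", "calculated_level": 3, "override_level": 2},
    {"group": "B", "calculated_level": 3, "override_level": 2},
    {"group": "B", "calculated_level": 3, "override_level": 3},
]

shares = override_shares(records)
print(shares)
```

Comparing both thresholds matters because, as in the figures reported here, one group can be ahead on reductions of one point or more while the other is ahead on reductions of two points or more.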

Figure 11 and Table 3 show disparities in the case of reclassification. Overrides lowered the custody level score for 25.6% of Black people, whereas the same is true for 30.8% of White people. Also, 21.1% of White people had their reclassification overridden to a score two points lower, whereas the same is true for 12.0% of Black people. This suggests that, perhaps, White people are being reclassified at a higher rate than Black people relative to where they should be according to administrative requirements. In other papers we examine the weighting of the calculations; here, the skewed proportions and overrides in the PADOC records further underscore the emphasis on securitization. The PACT is celebrated as successful because prisons are more sophisticated and secure than they were in 1989. However, the data show that the PACT is reproducing

Turning to the PACT score as a data point, we find it is replete with racial bias. First, in its calculations, we find that Black incarcerated persons are more likely to receive higher custody levels. People with higher custody levels do not enjoy as many privileges or as much access to programs while incarcerated. Consequently, a higher custody level could make it more difficult to avoid disciplinary action and to meet the requirements for parole. Second, when manual overrides are made, we again see them favoring White incarcerated persons. Finally, White incarcerated persons are more likely to find themselves in a lower custody level and to avoid the need for a manual override in the first place. Manual overrides are frequently used, and this is disconcerting in relation to the claims of algorithmic certainty made by smart on crime proponents. On the one hand, overrides could signal that the PACT is not accurate. On the other, overrides could signal that even if the algorithm does reduce bias, human users are given the opportunity to add it back in. In either instance, overrides highlight the human relationality of the algorithm and the subsequent constitution of data.

Discussion

In lieu of many other pathways, the PACT is part of a comprehensive system to more efficiently manage a population in order to maintain security and control within the prison. Efficiency is prioritized in a shift to a population management approach that is less individualized. The historic prioritization of security and incapacitation embedded in a tool that shapes the carceral experience is troubling. The increased reliance on “smart” algorithmic tools serves a reductive function for each individual in the care of the PADOC. Further, the desire for security and control as the prison population boomed highlights the PACT as an algorithm that prioritizes incapacitation and population control – like many algorithms used in corrections.

Our qualitative review of the PACT's historical context invites a “reading for absence” (Hesse-Biber, 2012), as we look at what the PACT collects and analyzes and to what end. It is equally important to consider what it does not collect and analyze, and the ends that are thereby left unserved. Incapacitation is the driver of the collection and weighting of the data. This means much is lost along lines of individuality that could better reveal one's potential as a member of society. What cultural and social assets and skills do incarcerated individuals have that could be developed to ensure future success? Of the minimal data of this kind that is collected, none appears in the PACT calculation. We can imagine, for example, that mental health data might be kept and treated differently if the algorithmic priority were rehabilitation instead of incapacitation. A tool that prioritized rehabilitation from the start would collect vastly different variables and enact a considerably different future. While there are strong justifications for the importance of security, it must be acknowledged as the priority that structures the PACT at the expense of other priorities. Thus, we find the PACT is not an objective tool; it is a historically and socially constituted tool that reflects subjective decisions and values, specifically securitization and control. Future research, particularly the emergent methodologies of algorithmic audits (Brown et al., 2021), should further examine what is

Our specific attention to the PADOC data about the people it incarcerates reveals the myth of data driven approaches to criminal justice. In practice, the data is replete with issues. The data is fragmented. It is not consistently or uniformly collected over time or in discrete periods. The data is also incomplete. We find that over time there are more variables being added to the system, but the collection of the data remains inconsistent and error riddled. We find that the PADOC is overconfident in the quality of the data. The actuality of the data reveals that the PACT score is not only reductive, it is also unlikely to generate a reliable calculation. So, we find that the PACT is an algorithm that prioritizes control, but we also find that the data likely undermines the effectiveness of even this stated goal.

The consequences are best illuminated if the PACT is thought of as both a data point and an algorithm. In our tracing of the PACT, we seek to further advance a study of data and algorithms as iteratively co-productive of one another. This way we can better understand how bias gets baked into the varied nodes of the criminal justice system. We can begin to understand feedback loops between the various algorithms being deployed. Our analysis reveals how the PACT perpetuates racial disparity and reduces the humanity of incarcerated people through a data-driven placement process. By considering the calculations as data points, we can understand the compounding problems in other algorithms (such as parole and sentencing algorithms) that are flawed by extension of their reliance on the PACT score.

A close examination of the PACT reveals the decades long stake in both more data and more sophistication to improve the system. However, without addressing the issues embedded in mass incarceration, this technology simply reproduces the same entrenched inequalities through a different, emergent form of supervision. The “smart” approach ultimately works to create a false sense of confidence in the equity and justice of the “data-driven” decisions being made. When one examines the variables that inform prisoner classification, the consistent finding that technological sophistication both obscures and cements bias is rooted in the limitations of the data. These limitations are rooted in the algorithm's inherent humanness and the history of its original construction. Continued work is needed on data and algorithms that explicitly seek to reduce bias, encourage rehabilitation and reduce recidivism. Such work must also interrogate the underlying data infrastructure, given the long history of incomplete data and embedded bias.

Given all that we find and all the other scholars who have identified bias in the criminal justice system, it is ultimately not a surprise that racial bias in the PACT remains. There is little difference in the PACT scores and calculations over time. Thirty years into a holistic application of algorithmic technologies to intake prisoners, the system as a whole remains skewed along racial lines and recidivism rates remain high. Our findings point out that even in the least transparent part of a person's interaction with the PADOC, the data is problematic, the decisions are biased and its usefulness is questionable.