Abstract

Introduction

Anxiety disorders, bipolar disorder, schizophrenia, and post-traumatic stress disorder (PTSD) rank among the most significant and persistent global public health challenges. According to the World Health Organization (WHO), nearly one in eight individuals globally is affected by a mental health condition, contributing substantially to the overall burden of disease and to disability-adjusted life years (DALYs). 1 Despite increased global awareness and the implementation of mental health policies, service provision remains critically under-resourced, particularly in low- and middle-income countries (LMICs). Key barriers include a severe shortage of trained mental health professionals, persistent stigma surrounding mental illness, and a lack of coordinated and continuous care delivery systems. In this context, artificial intelligence (AI) technologies offer a promising avenue for transforming mental healthcare delivery, with the potential to enhance early diagnosis, optimize therapeutic interventions, and support long-term patient monitoring and engagement.2,3

AI refers broadly to computational systems that emulate aspects of human intelligence, such as learning, reasoning, and decision-making. In healthcare, AI has already demonstrated transformative potential in areas such as radiology, genomics, drug discovery, and personalized treatment planning. Mental health, though more complex and context-dependent, is increasingly becoming a focus of AI research and application. 4

A key driver behind the growing role of AI in mental health is the digitization of behavioral data. Individuals today generate massive volumes of data through smartphones, wearable devices, social media platforms, and online interactions. 5 These digital footprints can provide valuable insights into psychological states, behavioral patterns, and social functioning. AI systems can harness such data streams to detect mental health risks in real time, often before clinical symptoms become apparent. 6

Another compelling advantage of AI in this domain is its ability to uncover latent patterns in complex data sets. Mental health conditions are inherently multifactorial, shaped by genetic, neurobiological, psychological, and environmental variables. 7 AI models, particularly those using machine learning (ML) and deep learning (DL), can integrate diverse data modalities, from text and speech to neuroimaging and genomic profiles, to identify predictive markers and stratify patient subgroups. 8

The integration of AI into mental health care is not without challenges. One major concern is the quality and representativeness of training data: mental health data sets often suffer from small sample sizes, demographic imbalances, and subjective labeling. 9 Mental health data are also highly sensitive, raising important questions about privacy, consent, and data governance. In addition, the opaque nature of many AI algorithms, often described as black boxes, poses ethical and clinical concerns. Without clear interpretability, clinicians may hesitate to rely on AI-generated outputs, especially when those outputs contradict clinical intuition or patient narratives. 10

Another layer of complexity is the social and cultural context of mental health. Emotional expression, symptom presentation, and help-seeking behavior vary widely across cultures, so AI models developed in one context may fail to perform accurately in another. This highlights the need for culturally aware and inclusive AI development. Furthermore, the human elements of mental health care, such as empathy, rapport, and the therapeutic alliance, cannot be easily replicated by machines. AI should therefore be viewed not as a replacement for human clinicians but as a complementary tool that enhances care delivery. 11

The COVID-19 pandemic further accelerated interest in AI-enabled mental health solutions. Lockdowns, social isolation, and economic uncertainty led to a surge in mental health issues, while simultaneously disrupting access to in-person care. 12 Digital health platforms saw unprecedented growth during this period, and AI-based tools played a pivotal role in expanding access and managing patient loads. As we move into a post-pandemic world, the role of AI in supporting mental health systems is expected to become even more pronounced. 13

Given this backdrop, the objective of this review is to provide a comprehensive overview of how AI is currently applied in mental health, focusing on three primary domains: diagnosis, therapy support, and patient monitoring. The paper begins by outlining the core AI technologies and methods commonly used in mental health applications. It then examines key use cases, including early detection of depression, AI chatbots for therapy, and wearable-based mood prediction. The review also discusses ongoing challenges (ethical, technical, and societal) and concludes with a discussion of emerging trends and future research directions.

In choosing a narrative review format, this article does not aim to exhaustively catalog every study or quantitative result. Instead, it synthesizes themes and developments from the current literature to provide a broad yet insightful picture of the AI-mental health intersection. Such a perspective can be particularly valuable for researchers, clinicians, developers, and policymakers who seek to understand both the opportunities and limitations of deploying AI in this sensitive and vital domain.

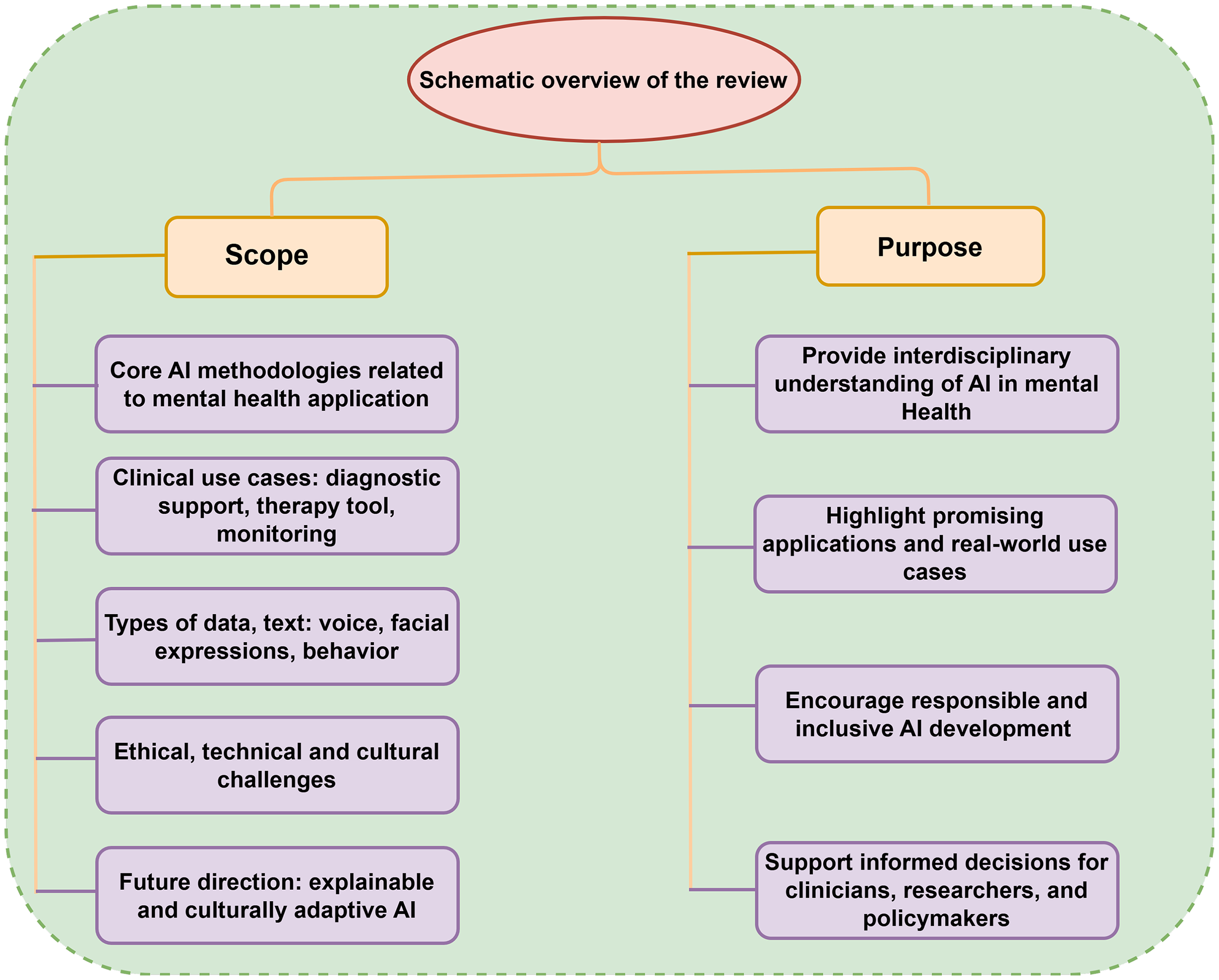

Scope and purpose of this review

Figure 1 outlines the aims and scope of this narrative review, which explores the evolving role of AI in mental health care and focuses on how AI technologies are being applied to enhance diagnosis, therapy, and patient monitoring. Emphasizing non-generative AI methods such as ML, DL, and natural language processing (NLP), the review synthesizes current research and practical implementations to provide a clear picture of ongoing advancements and challenges.

An overview of the review’s scope and purpose in artificial intelligence (AI) for mental health.

Scope

The scope of the review covers:

- Core AI methodologies relevant to mental health applications.
- Clinical use cases, including diagnostic support, AI-based therapy tools (e.g. chatbots), and real-time monitoring through mobile and wearable technologies.
- Types of data used in AI models, such as text, voice, facial expressions, and behavioral patterns.
- Ethical, technical, and cultural challenges, including privacy, bias, and interoperability.
- Future research directions, such as explainable AI (XAI) and culturally adaptive systems.

Purpose

- Provide interdisciplinary readers with a foundational understanding of AI in mental health.
- Highlight promising applications and real-world use cases.
- Encourage responsible and inclusive AI development.
- Support informed decision-making by clinicians, researchers, and policymakers.

Contributions to the literature

This review makes several key contributions to the growing body of literature at the intersection of AI and mental health care:

- Interdisciplinary synthesis: It integrates insights from computer science, clinical psychiatry, and digital health to provide a holistic overview of AI applications in mental health that is accessible to both technical and non-technical audiences.
- Focus on practical applications: Unlike many technically focused reviews, this paper emphasizes clinically relevant use cases, including AI-based diagnosis, therapy support tools (e.g. chatbots), and behavioral monitoring, thereby bridging the gap between research and real-world implementation.
- Highlighting non-generative AI methods: The review concentrates on non-generative AI techniques, such as ML, DL, and NLP, clarifying their distinct roles and limitations in mental health contexts.
- Ethical and cultural contextualization: It addresses not only the technical potential but also the ethical, social, and cultural considerations necessary for the responsible deployment of AI in mental healthcare settings, particularly in diverse populations.
- Forward-looking perspective: The article outlines emerging research directions, such as multimodal emotion recognition and culturally adaptive AI systems, offering a roadmap for future interdisciplinary collaboration.
- How this review adds value: Recent 2025 systematic reviews focus on specific modalities or settings. Our goal is complementary: a cross-modal, application-focused synthesis (diagnosis, therapy workflows, and in-the-wild monitoring/ecological momentary assessment (EMA)) that accounts for deployment realities (governance, interoperability, and model stewardship). Where systematic reviews exist, we acknowledge and contrast them, then extend them with practical guidance for implementation.

Positioning and novelty

Several 2025 systematic reviews synthesize AI in mental health. Dehbozorgi et al. 14 survey broad AI applications and ethical issues; Cruz-Gonzalez et al. 2 structure evidence across diagnosis, monitoring, and intervention up to February 2024; and Wang et al. 15 focus on generative AI (large language models (LLMs)). In contrast, our narrative review: (i) concentrates on non-generative AI (ML/DL/NLP), with governance and implementation as core through-lines; (ii) is updated to June 2025; (iii) adds a representativeness analysis (high-income countries (HICs) vs. LMICs; age groups) and a deployment readiness checklist (data-centric practices, external validation, model reporting, and fairness/privacy); and (iv) includes a modality-outcome mapping table to support clinical translation. Table 1 compares these recent reviews with this narrative review.

Comparison of recent reviews on AI in mental health and this narrative review.

AI: artificial intelligence; ML: machine learning; DL: deep learning; NLP: natural language processing; LLMs: large language models.

Methods

Eligibility criteria

AI techniques in mental health

The integration of AI into mental health care has opened new avenues for diagnosis, treatment, and monitoring. Key AI techniques, namely ML, DL, NLP, and multimodal AI, have shown promise in enhancing mental health services. This section reviews these techniques, highlighting recent advancements and applications.

Machine learning

ML involves algorithms that learn from data to make predictions or decisions. In mental health, ML has been utilized for:

Symptom classification and risk prediction

One of the most impactful applications of ML in mental health is its ability to classify psychiatric symptoms and predict an individual’s risk of developing mental health disorders. 19 By leveraging structured clinical data, behavioral patterns, and even unstructured digital traces from social media or smartphone usage, ML models can identify early warning signs of conditions such as depression, anxiety, and suicidal ideation. 20 Recent studies have demonstrated the efficacy of supervised learning algorithms such as support vector machines (SVMs), random forests, and logistic regression in detecting depressive symptoms with high accuracy. 21 Other studies report robust supervised-learning performance for depression detection and severity estimation, including SVMs (e.g. electroencephalogram (EEG)-based classification), random forests (e.g. biomarkers combined with clinical features), and logistic regression baselines in electronic health record (EHR) prediction pipelines. 22
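To make the supervised-learning idea concrete, the sketch below fits a logistic regression, the simplest of the classifiers named above, by batch gradient descent. It is a minimal illustration rather than any cited study's method: the two features (a hypothetical sleep-disruption score and a hypothetical social-withdrawal score, both scaled to [0, 1]) and the six labelled examples are synthetic, and a real model would need validated measures, far more data, and proper evaluation.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic function mapping a real-valued score to a probability."""
    return 1.0 / (1.0 + math.exp(-z))

def train_logistic_regression(X, y, lr=0.5, epochs=2000):
    """Fit weights and a bias by batch gradient descent on the mean log-loss."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(d):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b

def predict_risk(w, b, x):
    """Return the predicted probability of the positive (elevated-risk) class."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)

# Synthetic, hypothetical features: [sleep_disruption, social_withdrawal], scaled to [0, 1].
X = [[0.1, 0.2], [0.2, 0.1], [0.15, 0.3], [0.8, 0.9], [0.9, 0.7], [0.7, 0.8]]
y = [0, 0, 0, 1, 1, 1]  # 1 = elevated risk label from a (hypothetical) clinical screen

w, b = train_logistic_regression(X, y)
print(round(predict_risk(w, b, [0.85, 0.75]), 2))  # high on both features
print(round(predict_risk(w, b, [0.1, 0.15]), 2))   # low on both features
```

In practice, a library implementation such as scikit-learn's `LogisticRegression`, with regularization and cross-validation, would normally replace hand-written gradient descent.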

ML models are increasingly used in real-time digital platforms to enhance continuous mental health monitoring. A notable example is a study that integrated sentiment analysis with mobile-based ML algorithms to detect early signs of depression and anxiety from users’ social media activity and text messages. 23 The system, trained on longitudinal data, sent automated alerts to mental health professionals when risk thresholds were exceeded, enabling timely outreach. These developments suggest that AI can act not only as a diagnostic aid but also as a preventive tool that bridges the gap between symptom emergence and clinical diagnosis, especially in underserved populations or high-stigma settings where traditional mental health services are less accessible. 24
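The threshold-based alerting step can be sketched as a rolling-average rule over daily risk scores. This is an assumption-laden illustration, not the cited system's logic: the scores are presumed to come from an upstream classifier, and the 3-day window and 0.6 threshold are hypothetical values. The rule fires once when the average first crosses the threshold and re-arms only after it falls back below, so professionals are not alerted on every day of a sustained episode.

```python
from collections import deque

def risk_alert_days(daily_scores, threshold=0.6, window=3):
    """Return the day indices on which the rolling mean risk first rises to or above threshold."""
    recent = deque(maxlen=window)  # keeps only the last `window` scores
    alerts, above = [], False
    for day, score in enumerate(daily_scores):
        recent.append(score)
        if len(recent) < window:
            continue  # not enough history yet
        mean = sum(recent) / window
        if mean >= threshold and not above:
            alerts.append(day)  # new crossing: raise one alert
            above = True
        elif mean < threshold:
            above = False  # re-arm once the rolling risk subsides
    return alerts

scores = [0.2, 0.3, 0.4, 0.8, 0.9, 0.85, 0.3, 0.2, 0.1]
print(risk_alert_days(scores))  # → [4]
```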

Treatment response prediction

One of the most promising applications of AI in mental health is the prediction of treatment response, which aims to identify how individual patients will respond to specific therapeutic interventions before those treatments are fully administered. By leveraging ML algorithms trained on historical clinical data, symptom profiles, and biometric information, AI systems can forecast the likely efficacy of antidepressants, psychotherapy, or other interventions. For instance, a recent study demonstrated that combining early EEG recordings with ML models could predict antidepressant treatment response with over 70% accuracy within the first week of therapy. Such predictive capabilities enable clinicians to tailor treatment plans to individual patients, potentially shortening recovery times and reducing adverse effects. 25

These AI-based predictive models often utilize a variety of features, including genetic data, patient demographics, baseline symptom severity, and digital phenotyping data from smartphones or wearables. DL models have also been employed to capture nonlinear interactions among these variables, improving predictive performance. 26 However, while the results are encouraging, real-world clinical implementation remains limited due to challenges such as data set heterogeneity, lack of external validation, and ethical concerns regarding algorithmic transparency. 27
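Because headline figures such as "70% accuracy" depend heavily on class balance, it can help to report sensitivity and specificity alongside accuracy, ideally on an external cohort as well as the development data. The sketch below computes these metrics from binary labels; the cohort is hypothetical. It illustrates a general caveat rather than a criticism of any cited study: in a cohort where 3 of 10 patients respond, a model that always predicts "non-responder" still scores 70% accuracy while detecting no responders.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, sensitivity (recall of class 1), and specificity (recall of class 0)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    nan = float("nan")  # undefined when a class is absent
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn) if tp + fn else nan,
        "specificity": tn / (tn + fp) if tn + fp else nan,
    }

# Hypothetical cohort: 1 = treatment responder, 0 = non-responder.
y_true = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
always_no = [0] * 10
print(classification_metrics(y_true, always_no))
# → {'accuracy': 0.7, 'sensitivity': 0.0, 'specificity': 1.0}
```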

Multimodal data integration

Multimodal data integration refers to the process of combining multiple data sources such as speech, facial expressions, physiological signals, and textual input to create a more comprehensive and accurate representation of a person’s mental state. 28 This approach is particularly valuable in mental health, where symptoms are often expressed through diverse behavioral and physiological cues. 29 For example, recent work demonstrated that integrating audio, video, and text modalities significantly improved the accuracy of depression detection compared to single modality models. 30

Multimodal integration plays a critical role in remote and real-time mental health assessment systems. In teletherapy or virtual consultations, AI systems can synthesize facial micro-expressions, vocal tone, and verbal content to infer a patient’s emotional state, supporting clinicians in making more informed evaluations even without in-person interaction. 31 Wearable devices and smartphone sensors further expand the scope of multimodal data by providing continuous, passive monitoring of behavioral patterns such as movement, sleep, and social interaction. Platforms such as RADAR-MDD use multimodal fusion techniques to detect mood changes and relapse risk in patients with depression. As these systems evolve, they promise scalable, personalized, and context-aware mental health support, while also raising important considerations around data privacy, interoperability, and ethical deployment. 29
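One simple way to combine modalities, as in the audio, video, and text example above, is late (decision-level) fusion: each modality-specific model outputs its own probability, and the probabilities are averaged with per-modality weights. The sketch below assumes such upstream models exist; the weights and probabilities are hypothetical, and the cited studies may use other fusion schemes (e.g. feature-level or attention-based fusion). Renormalizing over the modalities actually present lets the same rule handle a missing camera or microphone stream, a common situation in passive monitoring.

```python
def late_fusion(modality_probs, weights):
    """Weighted average of per-modality probabilities, renormalized over available modalities."""
    available = [m for m in modality_probs if m in weights]
    if not available:
        raise ValueError("no weighted modality available")
    total = sum(weights[m] for m in available)
    return sum(weights[m] * modality_probs[m] for m in available) / total

weights = {"audio": 1.0, "video": 1.0, "text": 2.0}  # hypothetical reliability weights
print(round(late_fusion({"audio": 0.62, "video": 0.55, "text": 0.81}, weights), 4))  # → 0.6975
print(round(late_fusion({"audio": 0.60, "text": 0.80}, weights), 4))  # video missing → 0.7333
```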

Deep learning

DL, a subset of ML, utilizes neural networks with multiple layers to model complex patterns in data. In mental health, DL has been applied to:

Speech and audio analysis

Speech and audio signals are rich sources of behavioral and emotional information, making them valuable for the early detection and monitoring of mental health conditions. 32 Recent advances in DL, particularly recurrent neural networks (RNNs) and long short-term memory (LSTM) architectures, have enabled automatic analysis of prosodic features such as pitch, tone, speaking rate, and pauses. One recent study developed a speech-based depression detection system using a hybrid LSTM-convolutional neural network (CNN) framework, achieving high classification accuracy on both clinical and non-clinical data sets. Such models allow passive, non-intrusive mental health screening through regular voice recordings, offering a scalable alternative to traditional diagnostic interviews. 33
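Prosodic features such as pauses are often computed explicitly before, or alongside, DL modeling. The sketch below derives a pause ratio and a count of long pauses from per-frame energy values. It assumes the audio has already been split into fixed 10 ms frames with one energy value per frame; the silence threshold and the 200 ms minimum pause length are hypothetical and would need calibration for each microphone and recording setting.

```python
def pause_features(frame_energies, frame_ms=10, silence_threshold=0.01, min_pause_ms=200):
    """Pause ratio and number of long pauses from per-frame energy values."""
    silent = [e < silence_threshold for e in frame_energies]
    pause_ratio = sum(silent) / len(silent)
    long_pauses, run = 0, 0
    for is_silent in silent + [False]:  # trailing False flushes a final silent run
        if is_silent:
            run += 1
        else:
            if run * frame_ms >= min_pause_ms:
                long_pauses += 1
            run = 0
    return {"pause_ratio": pause_ratio, "long_pauses": long_pauses}

# Synthetic energies: speech, a 300 ms pause, speech, a 100 ms pause, speech.
energies = [0.2] * 50 + [0.001] * 30 + [0.3] * 40 + [0.002] * 10 + [0.1] * 20
print(pause_features(energies))  # pause_ratio ≈ 0.267, long_pauses = 1
```

Features like these could feed a conventional classifier or serve as an interpretable check on what an LSTM-CNN model learns from the raw signal.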

Beyond depression detection, speech analysis is also being applied to evaluate the severity and progression of disorders such as schizophrenia, bipolar disorder, and PTSD. Temporal patterns in spontaneous speech and the semantic coherence of verbal responses can provide indicators of cognitive disorganization or emotional distress. 34 Moreover, mobile applications are increasingly incorporating voice analysis tools for real-time assessment, offering continuous support outside clinical settings. As these technologies mature, integrating speech-based models into telepsychiatry and mobile health (mHealth) platforms holds immense potential to improve access, continuity of care, and early intervention in mental health treatment. 35

Facial expression recognition (FER)

FER has emerged as a powerful tool in the assessment of mental health conditions, particularly for detecting subtle affective cues that may be overlooked during traditional clinical evaluations. 36 By leveraging computer vision and DL techniques, especially CNNs, FER systems can automatically analyze facial muscle movements, micro-expressions, and gaze patterns to infer emotional states. 37 Recent advances have enabled these systems to capture nonverbal indicators of depression, anxiety, and emotional dysregulation with increasing accuracy. CNN-based models trained on annotated facial data sets can identify sadness, apathy, or avoidance behaviors, which are common in mood disorders. These systems are particularly valuable in telehealth settings, where clinicians may have limited visual access to patients, and in longitudinal studies requiring non-intrusive, automated monitoring. 38

FER has also been integrated into multimodal AI systems to enhance diagnostic robustness by combining facial cues with vocal tone, linguistic features, and physiological data. This approach has shown promise in improving the early detection of disorders such as major depressive disorder (MDD) and schizophrenia. 39 For example, Li et al. 37 used a multimodal framework combining FER and speech emotion recognition to detect depressive symptoms in real time, achieving over 85% accuracy in clinical simulations.

Multimodal data integration

Multimodal data integration in mental health AI (Figure 2) refers to the combined analysis of diverse data streams, such as facial expressions, speech patterns, text input, physiological signals, and behavioral metrics, to capture a comprehensive picture of an individual’s mental state. Unlike unimodal approaches, which analyze a single type of input (e.g. text or audio), multimodal systems can detect complex, subtle patterns by correlating signals across modalities. 40 For instance, an individual’s voice tone, facial micro-expressions, and word choices during a therapy session may each suggest only mild distress, but analyzing them together can significantly increase the reliability and sensitivity of detecting early signs of depression or anxiety. 41 Recent research has demonstrated that such integrative methods improve the performance of emotion recognition and mood prediction systems, particularly in naturalistic settings where single-modality data may be noisy or incomplete. 42

Integration of multimodal data for mental health.

Advancements in DL architectures, particularly transformer-based models and attention mechanisms, have enabled more sophisticated fusion of multimodal inputs. These models dynamically weigh the importance of each input channel (e.g. visual, auditory, and textual) to make real-time inferences.43,44 Applications include telepsychiatry platforms that automatically assess affective states during video consultations and wearable-integrated apps that track movement, heart rate, and verbal interactions to identify changes in mood or stress levels. 45
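The dynamic weighting of input channels can be illustrated with scaled dot-product attention pooling: a query vector scores each modality embedding, a softmax turns the scores into weights, and the fused representation is the weighted sum. In a trained transformer the query, keys, and values come from learned projections; here, as a simplifying assumption, the embeddings serve directly as keys and values, and all vectors are toy values rather than outputs of real encoders.

```python
import math

def attention_fuse(query, modality_embeddings):
    """Fuse modality embeddings with softmax(q·k / sqrt(d)) weights (keys = values = embeddings)."""
    d = len(query)
    names = list(modality_embeddings)
    scores = [sum(q * k for q, k in zip(query, modality_embeddings[n])) / math.sqrt(d) for n in names]
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    weights = {n: e / z for n, e in zip(names, exps)}
    fused = [sum(weights[n] * modality_embeddings[n][i] for n in names) for i in range(d)]
    return fused, weights

embeddings = {"visual": [2.0, 0.0], "audio": [0.0, 2.0], "text": [1.0, 1.0]}  # toy 2-D embeddings
fused, weights = attention_fuse([1.0, 0.0], embeddings)
print({n: round(w, 3) for n, w in weights.items()})
```

Because the weights are recomputed for every query, a channel that is uninformative for a given input (for example, a dark video frame) can receive little weight without being discarded permanently.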

Natural language processing

NLP enables machines to understand and interpret human language. In mental health, NLP has been instrumental in:

Sentiment analysis and mood detection

Sentiment analysis and mood detection are among the most widely used NLP techniques in mental health research and practice. These methods analyze textual data, ranging from social media posts and text messages to clinical notes and therapy transcripts, to identify emotional tone and infer psychological states. 46 By detecting linguistic patterns associated with sadness, hopelessness, anxiety, or agitation, sentiment analysis tools can serve as early warning systems for conditions such as depression and anxiety. 47 ML-based sentiment classifiers trained on large data sets of labeled mental health content have achieved high accuracy in detecting depressive symptoms from Twitter and Reddit posts. Recent studies have also shown that sentiment scores tracked over time can reveal mood fluctuations and correlate strongly with clinically validated depression scales, suggesting their potential for continuous digital mental health monitoring. 48
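The idea of tracking sentiment over time can be sketched with a deliberately simple lexicon-based scorer and a trailing moving average. This stands in for the trained ML and transformer classifiers described here; the word lists and messages are hypothetical, not a validated clinical lexicon, and a simple word count like this cannot handle negation or sarcasm.

```python
import string

# Hypothetical toy word lists; real systems use validated lexicons or trained classifiers.
NEGATIVE = {"sad", "hopeless", "tired", "worthless", "alone", "anxious"}
POSITIVE = {"happy", "calm", "hopeful", "rested", "grateful"}

def message_score(text):
    """Net sentiment per token: (positive hits - negative hits) / number of tokens."""
    tokens = [t.strip(string.punctuation).lower() for t in text.split()]
    pos = sum(t in POSITIVE for t in tokens)
    neg = sum(t in NEGATIVE for t in tokens)
    return (pos - neg) / max(len(tokens), 1)

def rolling_mood(messages, window=3):
    """Trailing moving average of message scores, to track mood over time."""
    scores = [message_score(m) for m in messages]
    trend = []
    for i in range(len(scores)):
        recent = scores[max(0, i - window + 1): i + 1]
        trend.append(sum(recent) / len(recent))
    return trend

messages = [
    "Feeling calm and rested today",
    "Bit tired but okay",
    "I feel sad and alone",
    "Everything seems hopeless",
    "So tired, sad, worthless",
]
print([round(s, 2) for s in rolling_mood(messages)])
```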

In clinical settings, sentiment analysis is also being integrated into electronic health records (EHRs) and patient-reported outcome platforms to support therapists and psychiatrists. 49 By automatically highlighting emotionally charged language or sudden shifts in tone, these tools can assist clinicians in identifying emerging psychological crises or treatment response. Moreover, recent advancements in transformer-based language models, such as BERT and RoBERTa, have further improved the granularity and contextual accuracy of mood detection. 50 These models can capture subtleties in expression, such as sarcasm, hesitation, or emotional masking, that traditional NLP methods often miss. As sentiment analysis continues to evolve, its integration with multimodal systems (e.g. voice tone and facial expression) holds promise for building more comprehensive and real-time mental health assessment tools that can augment both self-monitoring applications and professional care. 51

Chatbot development for therapy support

In recent years, AI-powered chatbots have emerged as scalable tools for delivering mental health support, particularly interventions based on cognitive behavioral therapy (CBT). These systems leverage NLP and ML algorithms to simulate therapeutic conversations, offering users real-time emotional support, psychoeducation, and mood tracking. Popular examples include Woebot, Wysa, and Tess, which have been deployed globally to support individuals experiencing depression, anxiety, or stress. These conversational agents are trained on large data sets of therapy interactions and psychological scripts to provide structured, empathetic responses.

Several studies have demonstrated the efficacy and acceptability of such chatbots in both clinical and non-clinical populations. 52 A randomized controlled trial found that users engaging with a CBT-based chatbot reported significant reductions in anxiety symptoms over a four-week period compared with control groups. Moreover, chatbots offer a degree of anonymity and accessibility that lowers barriers for individuals hesitant to seek traditional therapy due to stigma or logistical constraints. 53 While these tools are not intended to replace professional care, they serve as valuable adjuncts, especially in contexts with limited mental health resources. Ongoing advancements in NLP, emotion recognition, and personalization are further enhancing the responsiveness and clinical utility of AI-driven mental health chatbots. 54

Clinical documentation analysis

Clinical documentation, including progress notes, discharge summaries, and psychiatric evaluations, contains a wealth of unstructured data that is critical for understanding patient history and mental health trajectories. NLP techniques have emerged as powerful tools for extracting clinically relevant information from these narratives. 55 By parsing linguistic patterns, identifying named entities (e.g. symptoms, medications, and risk factors), and mapping text to standardized ontologies such as SNOMED CT or ICD-10, NLP models can convert qualitative records into structured data suitable for analysis. This capability supports mental health professionals in diagnosing conditions, tracking symptom progression, and tailoring treatment plans with greater precision. 56

Recent studies have demonstrated the effectiveness of NLP in automating and enhancing clinical workflows in mental health settings. For example, NLP-based models have accurately identified markers of depression, anxiety, and suicidal ideation in clinician notes, improving both screening efficiency and diagnostic accuracy. 57
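The extraction step described in this subsection can be sketched with a small dictionary matcher plus a simple negation rule in the spirit of NegEx, so that "denies suicidal ideation" is not recorded as a positive finding. The concept labels below are hypothetical placeholders, not SNOMED CT or ICD-10 codes, and the negation logic (a cue anywhere earlier in the same sentence) is deliberately simplified; production systems rely on curated terminologies and more robust scope detection.

```python
import re

# Hypothetical mini-lexicon: surface term -> placeholder concept label.
CONCEPTS = {
    "low mood": "SYMPTOM:depressed_mood",
    "insomnia": "SYMPTOM:insomnia",
    "suicidal ideation": "RISK:suicidal_ideation",
    "sertraline": "MEDICATION:sertraline",
}
NEGATION = re.compile(r"\b(denies|no|without|negative for)\b")

def extract_concepts(note):
    """Return (concept, status) pairs; a concept is negated if a cue precedes it in its sentence."""
    results = []
    for sentence in re.split(r"(?<=[.;])\s+", note.lower()):
        for term, concept in CONCEPTS.items():
            idx = sentence.find(term)
            if idx == -1:
                continue
            negated = NEGATION.search(sentence[:idx]) is not None
            results.append((concept, "negated" if negated else "affirmed"))
    return results

note = "Patient reports low mood and insomnia. Denies suicidal ideation. Continues sertraline 50 mg daily."
for concept, status in extract_concepts(note):
    print(concept, status)
```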

Multimodal AI

Multimodal AI systems integrate data from multiple sources such as text, audio, video, and physiological signals to provide a comprehensive assessment of mental health. Applications include:

Emotion recognition

Emotion recognition is a critical capability within AI-enabled mental health systems, aiming to identify an individual’s emotional state from observable cues such as facial expressions, vocal tone, and linguistic patterns. 39 Traditional approaches relying on a single modality, such as text-only sentiment analysis, often fail to capture the full spectrum of human emotion, especially in complex mental health contexts where symptoms may be subtle or atypical. 40 Multimodal AI overcomes this limitation by integrating inputs from multiple channels, resulting in a more robust and nuanced understanding of affective states. For example, when a patient’s facial expression suggests neutrality but their vocal tone and word choice indicate sadness or distress, a multimodal model can reconcile these signals to classify the emotional state more accurately. 58

Wearable technology integration

Wearable technologies have emerged as powerful tools for real-time monitoring of mental health states by continuously collecting physiological and behavioral data. Devices such as smartwatches, fitness bands, and biosensors can track parameters like heart rate variability, sleep quality, physical activity, skin conductance, and even voice tone. These data streams offer insights into emotional regulation, stress levels, and depressive symptomatology. 59
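As one concrete example of a wearable-derived signal, heart rate variability is often summarized by RMSSD, the root mean square of successive differences between consecutive RR (beat-to-beat) intervals: RMSSD = sqrt( (1/(N-1)) * sum over i of (RR[i+1] - RR[i])^2 ) for N intervals. The sketch assumes clean RR intervals in milliseconds are already available; consumer devices typically estimate them from optical sensors and need artifact filtering first, which is omitted here.

```python
import math

def rmssd(rr_intervals_ms):
    """Root mean square of successive differences between RR intervals (in ms)."""
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two RR intervals")
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))

print(round(rmssd([800, 810, 790, 805]), 2))  # successive diffs 10, -20, 15 → 15.55
```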

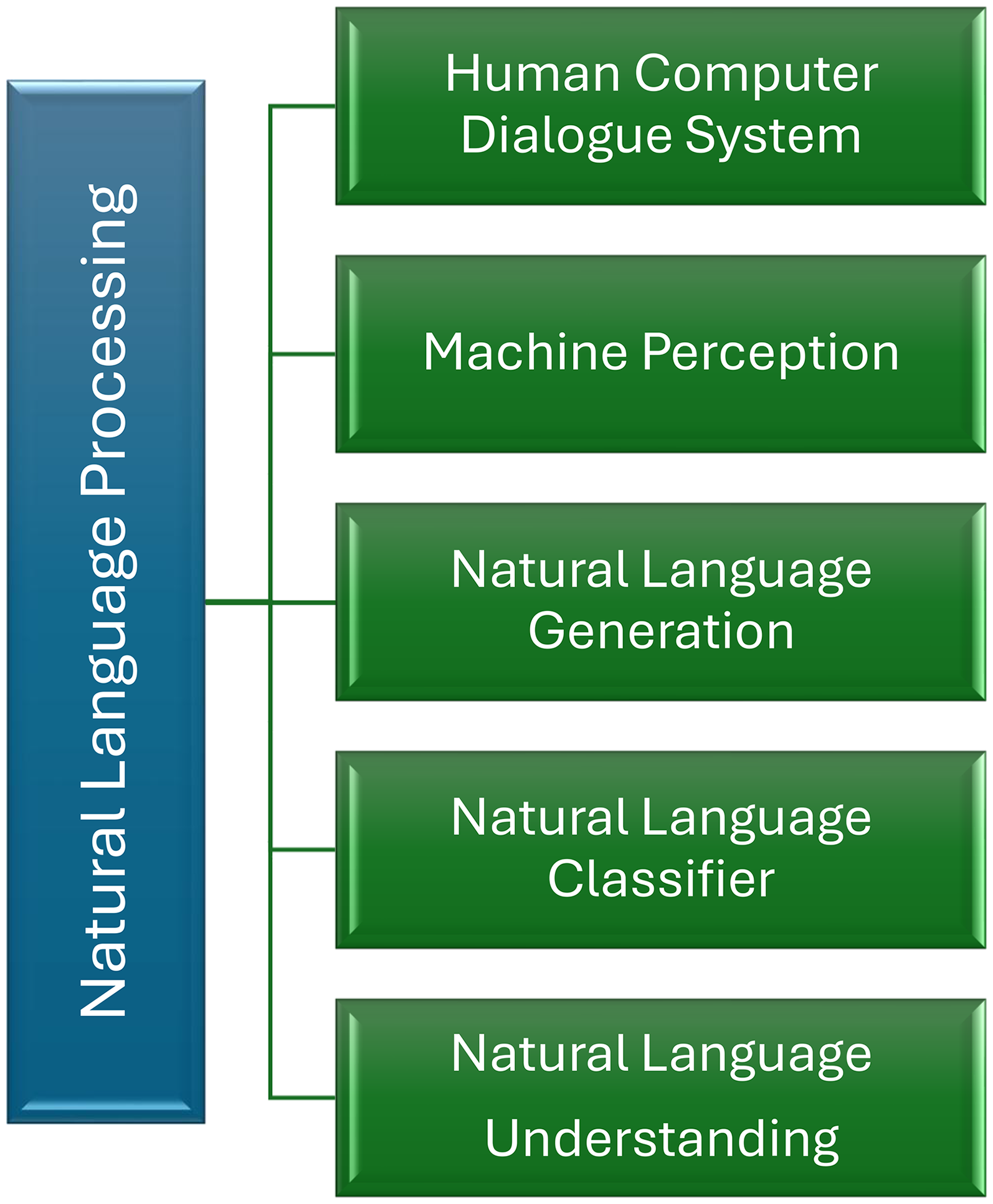

Moreover, fusing data from multiple wearable sensors facilitates the development of personalized and adaptive interventions that align with the user’s daily life and environment. Figure 3 highlights how NLP serves as a cornerstone in processing multimodal inputs from wearables, supporting key functionalities such as natural language understanding, classification, and interactive dialogue systems. Recent studies show that AI-enhanced wearable systems can outperform traditional assessment tools by identifying subtle, moment-to-moment fluctuations in mood and behavior that might be missed during routine clinical visits. 60 As wearable devices become increasingly affordable and ubiquitous, their role in population-level mental health monitoring is likely to grow, offering scalable and non-invasive means to support early detection, self-management, and remote care delivery. 61

Core components of NLP in wearable AI systems for mental health. NLP: natural language processing; AI: artificial intelligence.

Telepsychiatry enhancements

In telepsychiatry, clinicians can miss nonverbal cues that are easier to observe during in-person assessment. Multimodal AI has emerged as a powerful tool to bridge this gap by integrating audio, video, and text-based data in real time. These systems analyze vocal features (such as tone, pitch, and speech rate), facial expressions, and linguistic patterns simultaneously, giving clinicians access to a richer and more objective set of indicators. For example, AI can flag subtle speech hesitations or facial micro-expressions associated with anxiety or depression, signals that might be missed in a standard video call. Such capabilities not only augment the clinician’s assessment but also support early detection of mood fluctuations and emotional distress. 61

Moreover, advanced AI models can operate passively during virtual consultations to generate continuous mental health risk scores, enabling clinicians to monitor patients over time without increasing session length or clinician workload. Recent studies have demonstrated the efficacy of combining NLP with facial and speech analytics to improve diagnostic accuracy in telehealth settings. 62 These tools can also be adapted for culturally diverse populations by training models on language- and context-specific data sets, thus improving inclusivity and reducing diagnostic bias. As telepsychiatry continues to expand, integrating multimodal AI can make remote mental health care more data-driven, accessible, and personalized, especially for underserved or geographically isolated populations.63,64

Applications in mental health

AI has increasingly become integral to mental health care, offering innovative solutions for diagnosis, therapy support, and continuous monitoring. The following sections examine these applications, highlighting recent advancements and their implications. Figure 4 illustrates potential domains of AI application in mental health care, and Table 2 summarizes AI techniques and their applications in mental health.

Potential domains of artificial intelligence (AI) application in mental healthcare, highlighting both opportunities and challenges.

Summary of AI techniques and applications in mental health.

AI: artificial intelligence; BERT: bidirectional encoder representations from transformers; GPT: generative pre-trained transformer; DL: deep learning.

Diagnosis

AI technologies have revolutionized the diagnostic process in mental health by enabling the analysis of complex data sets, including speech patterns, text inputs, and behavioral activities. These tools assist in identifying conditions such as depression, anxiety, PTSD, and bipolar disorder.

Speech and text analysis

Speech and text analysis have become essential tools in the application of AI to mental health diagnostics. ML algorithms can extract and interpret acoustic and linguistic features, such as tone, prosody, word choice, sentence structure, and speech pace, to detect subtle cues associated with psychological conditions. 62 These models have shown significant promise in identifying early indicators of depression, anxiety, and PTSD, especially when traditional clinical assessments are unavailable or infeasible. For example, variations in speaking rate, reduced lexical diversity, and increased use of negative emotion words are commonly observed in individuals experiencing depressive episodes. 65

Federated learning, a decentralized approach, enables collaborative model training on data distributed across devices, making it particularly suitable for mental health applications where confidentiality is paramount. One such system demonstrated improved accuracy in detecting both depression and anxiety by training on diverse, real-world language samples without aggregating sensitive personal data. This innovation not only advances the technical frontiers of AI-based diagnosis but also addresses critical concerns related to ethics, data protection, and clinical applicability in digital mental health tools. 66
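Decentralized training of this kind is commonly implemented with federated averaging (FedAvg): each device trains on its own data, and a server averages only the resulting model weights, weighted by each device's sample count. The sketch below is an assumption-based illustration, not the cited system: it uses a toy one-feature linear model and two hypothetical clients whose raw data never leave `local_update`. Real deployments typically add secure aggregation, client sampling, and often differential privacy.

```python
def local_update(weights, data, lr=0.1, epochs=5):
    """Gradient descent on one client's private (x, y) pairs for the model y ≈ w0 + w1 * x."""
    w0, w1 = weights
    n = len(data)
    for _ in range(epochs):
        g0 = sum((w0 + w1 * x) - y for x, y in data) / n
        g1 = sum(((w0 + w1 * x) - y) * x for x, y in data) / n
        w0, w1 = w0 - lr * g0, w1 - lr * g1
    return [w0, w1]

def federated_average(updates):
    """FedAvg aggregation: average client weight vectors, weighted by local sample counts."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]

def run_fedavg(clients, rounds=200):
    """Each round, clients train locally from the global weights; the server averages the results."""
    global_w = [0.0, 0.0]
    for _ in range(rounds):
        updates = [(local_update(global_w, data), len(data)) for data in clients]
        global_w = federated_average(updates)  # only weights and counts reach the server
    return global_w

# Two hypothetical devices; both datasets follow y = 1 + 2x but are never pooled.
client_a = [(0.0, 1.0), (0.5, 2.0), (1.0, 3.0)]
client_b = [(1.5, 4.0), (2.0, 5.0)]
print([round(w, 2) for w in run_fedavg([client_a, client_b])])  # → [1.0, 2.0]
```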

Behavioral activity monitoring

Behavioral activity monitoring has become a critical application of AI in mental health care, leveraging data from wearable devices and smartphone sensors to gain real-time insights into an individual’s physical and social behaviors. These technologies continuously collect metrics such as step count, heart rate variability, sleep duration, screen time, and geolocation. AI algorithms then process this high-frequency, high-dimensional data to identify patterns and anomalies that may be indicative of emerging mental health issues. By detecting these behavioral changes early, AI systems offer the possibility of proactive, rather than reactive, mental health interventions. 67
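A simple way to detect the behavioral changes described above is to compare each new day against the person's own baseline. The sketch flags days whose step count lies more than two standard deviations from the mean of a 14-day baseline. The baseline length, threshold, and step counts are hypothetical; real systems must also handle missing sensor data, weekday and weekend cycles, and gradual drift.

```python
import statistics

def flag_behavior_changes(daily_values, baseline_days=14, z_threshold=2.0):
    """Flag days deviating from the personal baseline by more than z_threshold standard deviations."""
    baseline = daily_values[:baseline_days]
    mu = statistics.mean(baseline)
    sd = statistics.stdev(baseline)  # sample standard deviation of the baseline window
    flagged = []
    for day in range(baseline_days, len(daily_values)):
        z = (daily_values[day] - mu) / sd
        if abs(z) > z_threshold:
            flagged.append((day, round(z, 1)))
    return flagged

# Hypothetical daily step counts: a 14-day baseline, then a sharp drop in activity.
steps = [8000, 8500, 7800, 8200, 7900, 8100, 8300, 7700, 8050, 8400, 7950, 8150, 8250, 7850,
         8100, 7900, 3000, 2800]
print([day for day, _ in flag_behavior_changes(steps)])  # → [16, 17]
```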

ML models can classify mood states and predict mental health deterioration by analyzing multimodal behavioral signals, even in the absence of explicit self-reporting. 68 Research using ecological momentary assessment (EMA) integrated with smartphone sensor data has demonstrated promising results in predicting short-term suicide risk and depressive relapse. While behavioral monitoring presents a powerful tool for continuous mental health surveillance, its effectiveness depends on data quality, user compliance, and ethical handling of sensitive personal information. Ensuring transparency, user consent, and cultural adaptability remains essential for the responsible deployment of AI-based behavioral monitoring systems. 69

Multimodal data integration

Multimodal data integration has emerged as a critical approach in enhancing the diagnostic accuracy of mental health conditions by leveraging diverse data streams. Traditional assessments often rely on self-reported symptoms or clinician observations, which can be limited by subjectivity and recall bias. In contrast, integrating speech patterns, textual inputs (such as social media posts or therapy transcripts), and behavioral metrics (like sleep, movement, or phone usage) provides a richer, more objective picture of an individual’s mental state. 70

One study underscored the significant potential of passive sensing data collected from smartphones and wearable devices in predicting suicidal ideation and high-risk behaviors. Its findings highlight that combining data modalities not only improves predictive performance but also enables more proactive mental health care. 71 For example, a model that merges GPS location data with communication frequency and voice sentiment can identify social withdrawal, a key indicator of depression, far earlier than traditional clinical assessments. 72
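A common way to combine modalities is late fusion: each modality-specific model outputs its own risk probability, and the scores are then merged. The sketch below uses a weighted average that renormalizes when a modality is missing (for example, when a user has no wearable). The modality names and `MODALITY_WEIGHTS` are hypothetical. In practice the weights would be learned, for instance by a stacking model on validation data, and early fusion of raw features is an equally common alternative.

```python
from typing import Dict, Optional

# Hypothetical per-modality weights; in practice these would be learned.
MODALITY_WEIGHTS = {"speech": 0.3, "text": 0.3, "behavior": 0.4}


def late_fusion(scores: Dict[str, Optional[float]],
                weights: Dict[str, float] = MODALITY_WEIGHTS) -> float:
    """Combine per-modality risk probabilities into one score, skipping missing modalities."""
    available = {m: s for m, s in scores.items() if s is not None and m in weights}
    if not available:
        raise ValueError("no modality scores available")
    total_weight = sum(weights[m] for m in available)
    # Renormalize so the weights of the modalities actually present sum to 1.
    return sum(weights[m] * s for m, s in available.items()) / total_weight


full = late_fusion({"speech": 0.2, "text": 0.4, "behavior": 0.9})
no_wearable = late_fusion({"speech": 0.2, "text": 0.4, "behavior": None})
```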

Therapy support

AI-based tools have expanded access to therapeutic resources, particularly through chatbots offering CBT techniques and emotional support.

AI chatbots

AI-powered chatbots such as Woebot and Wysa are increasingly being integrated into digital mental health interventions, offering users immediate, interactive platforms to manage emotional distress and psychological challenges. These chatbots leverage NLP to engage users in conversational exchanges that mimic human interaction, providing real-time coping strategies, mood tracking, and CBT-based guidance. 73

Empirical evidence supports the effectiveness of these AI chatbots in reducing symptoms of mental distress. A recent study demonstrated that users of Woebot experienced significant reductions in depression and anxiety symptoms, along with high rates of user satisfaction and continued engagement. 74 Similarly, Wysa has been adopted by millions globally, with users reporting improved emotional resilience and well-being over time. Despite these promising results, experts emphasize that chatbots should not replace human therapists but rather serve as complementary tools within a stepped-care model, providing scalable, low-intensity support while flagging more severe cases for professional intervention. 75
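The stepped-care idea of flagging more severe cases can be sketched as a simple routing rule. The example assumes users complete the PHQ-9, a widely used nine-item depression questionnaire scored 0 to 3 per item (total 0 to 27). The routing thresholds, route names, and the `stepped_care_route` function are hypothetical choices for illustration, not a validated clinical protocol.

```python
from typing import List


def stepped_care_route(item_scores: List[int]) -> str:
    """Route a chatbot user based on PHQ-9 item responses (nine items, each scored 0-3)."""
    if len(item_scores) != 9 or any(s not in (0, 1, 2, 3) for s in item_scores):
        raise ValueError("PHQ-9 requires nine item scores between 0 and 3")
    total = sum(item_scores)
    # Item 9 asks about thoughts of self-harm: any positive answer escalates at once.
    if item_scores[8] > 0 or total >= 20:
        return "escalate_to_professional"
    # Hypothetical middle tier: keep the chatbot but add clinician review.
    if total >= 10:
        return "chatbot_with_clinician_review"
    return "self_guided_chatbot"
```

Placing the self-harm item check before any score threshold reflects the stepped-care principle that risk indicators override the low-intensity default.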

Accessibility and cost-effectiveness

AI-powered chatbots provide a scalable, cost-effective solution to the growing demand for mental health support, particularly in regions where access to professional care is limited. These chatbots, available around the clock, can engage users in therapeutic conversations, deliver evidence-based interventions such as CBT, and assist with mood tracking and emotional regulation. 76 By lowering geographical and financial barriers, AI chatbots have widened access to mental health services, especially for individuals in rural or low-resource settings where mental health professionals are scarce or overburdened. 77

Recent trends in countries like Taiwan and China highlight the increasing reliance on AI chatbots among younger populations. Faced with long wait times, stigma surrounding mental illness, and a shortage of clinicians, many individuals are turning to digital tools as the first step toward managing their mental health. These chatbots are perceived as nonjudgmental, private, and easily accessible, qualities that are especially appealing in cultures where open discussion of emotional distress may be discouraged. However, experts caution that while AI chatbots can provide immediate, supportive care, they should be used as a complement to, not a replacement for, professional mental health services.78,79

Integration with professional care

While AI-powered chatbots have demonstrated significant promise in offering immediate, accessible mental health support, they are not intended to replace the expertise of licensed mental health professionals. These tools can effectively deliver cognitive behavioral strategies, emotional check-ins, and self-care prompts, making them valuable for early intervention and ongoing self-management. 85 However, their responses are limited by programmed algorithms and cannot fully replicate the nuanced understanding, empathy, and clinical judgment of human therapists. As such, chatbots are best positioned as supplementary resources that can support individuals between therapy sessions or during periods when traditional care is inaccessible. 11

Mental health experts consistently emphasize the importance of integrating AI systems within a broader, professionally guided treatment framework. When used in conjunction with clinical care, AI tools can enhance therapeutic outcomes by providing continuous engagement, tracking patient-reported outcomes, and identifying early warning signs of relapse or crisis. 86 However, over-reliance on these technologies without human oversight may lead to misinterpretation of symptoms or neglect of complex psychological issues. Therefore, a hybrid model combining the scalability of AI with the personalized care of mental health professionals offers the most promising path for sustainable, effective mental health support. 65

Monitoring and risk prediction

Continuous monitoring of mental health through AI enables early detection of mood swings and potential crises, facilitating timely interventions.

Wearable devices

Wearable devices have become increasingly vital in the real-time monitoring of physiological and behavioral markers associated with mental health. Smartwatches, fitness bands, and rings are equipped with sensors that track heart rate variability, sleep quality, movement, and even skin temperature, factors closely linked to emotional regulation and stress levels. 87 These continuous, non-invasive data streams allow for the detection of early signs of mood fluctuations, anxiety, or depressive episodes. For example, irregular sleep patterns or reduced physical activity captured over days or weeks can signal an impending mental health crisis, prompting timely intervention. These tools are particularly valuable for individuals at risk of relapse or those with limited access to clinical care, providing clinicians with objective, longitudinal data for informed decision-making. 88
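As an example of how a raw wearable signal becomes a feature, heart rate variability is often summarized by RMSSD, the root mean square of successive differences between consecutive heartbeat (RR) intervals. A minimal sketch, assuming the device already provides artifact-free RR intervals in milliseconds (real pipelines first filter ectopic beats and motion artifacts):

```python
import math
from typing import List


def rmssd(rr_intervals_ms: List[float]) -> float:
    """Root mean square of successive differences between RR intervals, in ms."""
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two RR intervals")
    # Differences between each interval and the next one.
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


rr = [800.0, 810.0, 790.0, 800.0]
value = rmssd(rr)
```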

Advancements in wearable technology have introduced a new generation of home-based devices specifically tailored for mental health and sleep disorders. One such innovation is a smart ring capable of high-fidelity sleep pattern monitoring, designed to aid in the early detection and treatment of obstructive sleep apnea, which is often comorbid with mood disorders. 89 These wearables not only enhance patient engagement by providing feedback on daily wellness but also integrate seamlessly with mobile applications that analyze and visualize mental health trends. As these tools evolve, they offer promising potential for more personalized, data-driven mental healthcare, though their adoption must be accompanied by strict adherence to privacy, consent, and ethical guidelines. 90

Smartphone applications

Smartphone applications have become a valuable tool in the assessment and support of mental health by leveraging the ubiquitous presence of mobile devices in daily life. 91 By applying ML algorithms to the data streams these devices passively collect, such as screen time, geolocation, and physical activity, smartphone-based platforms can detect early signs of psychological distress, mood fluctuations, and behavioral changes associated with conditions such as depression and anxiety. This passive sensing allows for unobtrusive, real-time mental health monitoring, providing a scalable solution for early intervention, especially in settings where access to clinicians is limited. 92

Recent research underscores the clinical impact of these technologies. A study demonstrated that individuals on waiting lists for traditional therapy experienced significant reductions in depression, anxiety, and suicidality after using evidence-based mental health apps in combination with wearable sensors. 93 These digital tools offered users immediate coping strategies, self-assessment features, and behavioral feedback, bridging the gap in care during critical waiting periods. However, experts emphasize the need for careful validation, personalization, and data privacy safeguards to ensure these tools are both effective and ethically responsible in long-term mental health management. 94

Ecological Momentary Assessment (EMA)

EMA is a data collection method that captures individuals’ moods, thoughts, and behaviors in real time and within their natural environments. Unlike retrospective assessments, EMA minimizes recall bias and provides a more accurate, dynamic view of a person’s psychological state. By prompting users to record their experiences multiple times a day via smartphones or wearable devices, EMA generates rich, temporally sensitive data. This approach is especially valuable in mental health research, where symptoms such as mood fluctuations or stress responses can vary significantly throughout the day and are influenced by contextual factors. 95
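EMA studies commonly schedule these prompts with stratified random sampling: the waking day is split into equal blocks and one prompt is sent at a random moment within each block, so responses cover the whole day without becoming predictable. A sketch with hypothetical parameters (four prompts between 09:00 and 21:00); the `ema_schedule` function is an illustrative name, not a specific app's API.

```python
import random
from datetime import datetime, timedelta
from typing import List


def ema_schedule(day_start: datetime, day_end: datetime, prompts: int,
                 rng: random.Random) -> List[datetime]:
    """Stratified random schedule: one prompt at a random time inside each equal block."""
    block = (day_end - day_start) / prompts
    times = []
    for i in range(prompts):
        # Random offset within block i, so prompts are spread out but unpredictable.
        offset = timedelta(seconds=rng.uniform(0, block.total_seconds()))
        times.append(day_start + i * block + offset)
    return times


rng = random.Random(42)
start = datetime(2024, 5, 1, 9, 0)
end = datetime(2024, 5, 1, 21, 0)
schedule = ema_schedule(start, end, prompts=4, rng=rng)
```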

Recent advancements in AI have enhanced the utility of EMA by enabling predictive modeling of acute mental health risks. Studies have shown that ML algorithms applied to EMA data can effectively identify patterns associated with near-term suicidal ideation, stress episodes, or depressive relapses. This integration of EMA and AI paves the way for proactive and personalized mental health interventions, allowing for early support before a crisis occurs. However, challenges remain regarding data privacy, adherence, and the need for culturally sensitive algorithms that generalize across diverse populations. 96

Data-centric AI

Privacy-preserving learning (federated learning and secure enclaves) and synthetic data can widen data access but create new risks (shifted distributions and disclosure through rare combinations). Projects should document data lineage, audit drift post-deployment, and evaluate fairness on subgroups, not only on overall metrics.
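Evaluating fairness on subgroups can be as simple as computing the same metric separately for each group. The sketch below reports sensitivity (recall) per group, a natural choice for screening, where missed cases are costly. The labels, predictions, and group names are hypothetical. In this toy example the overall recall is 0.75, which hides a 1.0 versus 0.5 gap between groups.

```python
from collections import defaultdict
from typing import Dict, List


def recall_by_group(y_true: List[int], y_pred: List[int], groups: List[str]) -> Dict[str, float]:
    """Sensitivity (recall) per subgroup: the share of true cases the model detects."""
    hits: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        if t == 1:
            positives[g] += 1
            hits[g] += int(p == 1)
    return {g: hits[g] / positives[g] for g in positives}


y_true = [1, 1, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 0, 1]
groups = ["A", "A", "B", "B", "A", "B"]
per_group = recall_by_group(y_true, y_pred, groups)
```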

By keeping data local, federated learning provides robust privacy protections, but it has limitations as well. Particularly in low-resource environments, heterogeneity among sites, such as differences in sample distributions, device quality, or data collection procedures, can result in models that are inconsistent or biased. Furthermore, it is still difficult to guarantee equal participation across devices, and underrepresented groups could unintentionally be left out of training. Similarly, generating synthetic data might increase access to training data sets but also presents ethical and legal challenges: synthetic samples may unintentionally reproduce hidden biases or, in rare instances, enable re-identification through distinctive feature combinations. Ambiguity also remains around disclosure and authenticity rules, because existing frameworks such as GDPR and HIPAA offer only partial guidance. Therefore, to protect trust and accountability in mental health platforms, data-centric AI technologies should be paired with transparent governance, such as data set lineage tracking, fairness audits, and clear disclosure of synthetic data use.

Challenges and limitations

The integration of AI into mental health care offers promising advancements in diagnosis, treatment, and patient monitoring. However, several challenges and limitations must be addressed to ensure ethical, equitable, and effective implementation; Table 3 summarizes them, and each is discussed in detail below. Key concerns include data privacy, bias and fairness, and the interpretability of AI systems.

Challenges and limitations in applying AI to mental health.

AI: artificial intelligence; DL: deep learning.

Data privacy

Mental health data is inherently sensitive, encompassing personal narratives, behavioral patterns, and clinical diagnoses. The digitization and analysis of such data by AI systems raise significant privacy concerns.

Risks of data breaches and unauthorized access

The potential for data breaches presents a profound threat to the integrity and confidentiality of mental health records. Unlike general medical data, mental health information often includes intimate personal disclosures, therapy notes, emotional histories, and behavioral patterns. When such sensitive data is compromised, it can lead to significant emotional harm, social stigma, and even legal consequences for affected individuals. In one widely reported case, a breach not only violated patient privacy but also led to blackmail attempts and widespread public distress, illustrating the uniquely high stakes of mental health data security. 105

Such incidents underscore the urgent need for robust cybersecurity infrastructure and strict data governance frameworks in AI-powered mental health systems. AI applications often require extensive data sets to function effectively, increasing the attack surface for malicious actors. 106 As mental health services become more digitized, with mobile apps, online counseling platforms, and wearable devices collecting data, the complexity and vulnerability of these systems grow. Developers and healthcare providers must implement end-to-end encryption, secure cloud storage, and anonymization protocols. 107
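Anonymization protocols often begin with pseudonymization: direct identifiers are replaced with a keyed hash, so records can still be linked across data sets without exposing the identifier itself. A minimal sketch using Python's standard `hmac` and `hashlib` modules; the key handling shown is an assumption (in practice the key would live in a separate key-management system). Pseudonymization alone is not full anonymization, since quasi-identifiers such as age, location, or free-text notes can still enable re-identification.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace a direct identifier with an HMAC-SHA256 pseudonym (64 hex characters)."""
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical key for illustration only; a real key comes from secure storage.
key = b"example-key-do-not-use"
token = pseudonymize("patient-00123", key)
```

A keyed HMAC is used instead of a plain hash because identifiers such as patient numbers come from a small space and an unkeyed hash could be reversed by brute force.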

Regulatory challenges

The regulatory landscape surrounding AI in mental health is still in its formative stages, struggling to keep pace with the rapid development and deployment of these technologies. As AI tools become more prevalent in mental health diagnostics and therapy, they often operate in legal gray areas regarding consent, data usage, and cross-border data flows. 108 The lack of standardized guidelines makes it difficult for developers and healthcare institutions to determine acceptable practices, especially when sensitive patient data is involved. While general frameworks such as the EU’s General Data Protection Regulation (GDPR) offer baseline protections, they do not yet account for the unique ethical and clinical nuances associated with AI-based mental health interventions. 109

A notable example highlighting the urgent need for more tailored regulation is the case in which Italy’s data protection authority fined Luka Inc., the company behind the Replika AI chatbot, €5 million. The fine was issued for violations including the collection and processing of user data without a legal basis and the absence of effective age verification mechanisms. As mental health applications increasingly involve emotionally vulnerable populations, strong oversight mechanisms are essential to ensure ethical compliance and to foster public trust.110,111

Public perception and trust

Public trust in AI technologies is a cornerstone for their successful integration into mental health care. While many users express curiosity and optimism about the role of AI in expanding access and efficiency, they often remain skeptical about the safety of their personal and psychological data. This skepticism is further amplified in the context of mental health, where users may share highly intimate and stigmatized information, making them particularly sensitive to issues of data misuse or exposure. 112

Moreover, the perception of AI systems as impersonal or emotionally detached can also impact trust, especially in therapeutic settings where empathy, nuance, and human connection play central roles. Users may question whether AI-driven interventions can truly understand or support their psychological struggles. Building trust requires active user involvement, culturally sensitive design, and communication strategies that clarify the benefits and limitations of AI systems. As digital mental health tools become more prevalent, aligning technological capabilities with ethical and user-centered design will be key to gaining sustained public confidence. 113

Mitigation strategies

To address growing concerns around the privacy of mental health data, developers and researchers are increasingly adopting privacy-enhancing technologies such as federated learning and differential privacy. Federated learning enables AI models to be trained across decentralized devices or servers that hold local data samples, without transferring the raw data to a central repository. 114 This technique is especially relevant for mental health applications that involve mobile phones or wearable devices, where user data can remain on the device while contributing to global model improvement. In parallel, differential privacy adds calibrated statistical noise to data sets or outputs so that the presence or absence of any single user has only a bounded effect on what is released; because each query consumes part of a finite privacy budget, repeated queries must be tracked to preserve that guarantee. These technologies collectively offer robust protection mechanisms, allowing developers to uphold user confidentiality while still extracting meaningful insights from sensitive data sets. 115
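The Laplace mechanism is the textbook way to add calibrated noise to a numeric query. For a count, adding or removing one person changes the result by at most 1 (sensitivity 1), so noise drawn from a Laplace distribution with scale 1/ε gives ε-differential privacy for that single release. The sketch below samples Laplace noise as the difference of two exponential draws, because Python's standard `random` module has no Laplace sampler. The records, the predicate, and the helper names are hypothetical.

```python
import random
from typing import Callable, Iterable, TypeVar

T = TypeVar("T")


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two i.i.d. exponentials with mean `scale`."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records: Iterable[T], predicate: Callable[[T], bool],
                  epsilon: float, rng: random.Random) -> float:
    """Release a count under epsilon-differential privacy (sensitivity 1, scale 1/epsilon)."""
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)


rng = random.Random(0)
# Hypothetical data: 100 users, 30 of whom reported a symptom.
users = [{"reported_symptom": i < 30} for i in range(100)]
noisy = private_count(users, lambda u: u["reported_symptom"], epsilon=1.0, rng=rng)
```

Smaller ε means more noise and stronger privacy; under basic composition, answering k such queries at ε each spends a total budget of kε.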

Transparent consent mechanisms, user control over data sharing, and the ability to withdraw participation are critical components of trustworthy AI systems in mental health care. Furthermore, collaborations with legal experts and compliance with regulations such as the GDPR or HIPAA are essential to align AI deployments with privacy laws. Recent initiatives have also called for “privacy-by-design” frameworks, where data protection is integrated at every stage of AI system development. 116

Bias and fairness

AI systems in mental health care are susceptible to biases that can lead to unfair or inaccurate outcomes, particularly for underrepresented groups.

Sources of bias

Bias in AI models often originates from imbalanced or non-representative training data sets, which fail to capture the diversity of the populations they are intended to serve. In mental health contexts, this can result in models that perform well for certain demographic groups, such as white, English-speaking populations, but poorly for others, including ethnic minorities or non-native speakers. 117 When AI models are trained on such narrow data sets, their outputs may systematically underdiagnose or misclassify mental health conditions in marginalized communities, reinforcing existing healthcare disparities rather than alleviating them. 118

Impact on diverse populations

AI systems used in mental health often reflect the biases inherent in the data sets on which they are trained. When these data sets underrepresent certain demographic groups, such as racial minorities, non-native language speakers, or individuals from low-income communities, the resulting models tend to perform poorly for those populations. For instance, studies have shown that AI models trained to detect depression via social media text achieved high accuracy in white populations but exhibited significantly reduced performance when applied to posts by Black Americans. 119 These discrepancies are attributed to differences in linguistic expression, cultural context, and the use of vernacular language, which are often not captured adequately in training data. This kind of systemic bias risks excluding or misclassifying vulnerable populations, leading to inequitable access to AI-supported mental health care. 120

Cultural variation in the expression and conceptualization of mental illness further complicates AI deployment across diverse groups. What constitutes a symptom in one culture may be normalized behavior in another, resulting in either overdiagnosis or underdiagnosis. Language barriers, dialect differences, and varying norms about help-seeking behavior can affect the inputs that AI systems rely on, particularly those using NLP or voice analysis. If these systems are not trained with culturally diverse data or adapted to recognize such variability, their conclusions may be misleading or even harmful. 121

Mitigation strategies

Addressing bias in AI systems for mental health care requires a multifaceted approach that begins with the construction of diverse and representative data sets. Models trained exclusively on homogeneous populations, whether by geography, ethnicity, age, or socioeconomic status, are more likely to perpetuate inequalities and produce unreliable results for underrepresented groups. 122 Efforts must be made to include data from varied demographics to ensure that AI models capture the full spectrum of human behavior and cultural nuances. This includes leveraging community-based data collection, partnering with global research institutions, and obtaining informed consent in ethically appropriate ways. Moreover, regular audits of training data sets for imbalances or exclusionary patterns should become standard practice in AI development workflows. 123

In parallel, the use of fairness-aware algorithms and post-hoc bias correction techniques can mitigate disparities in model predictions. These methods adjust for known biases either during model training (e.g. reweighting samples) or after training (e.g. recalibrating outputs). Equally important is continuous model monitoring to assess performance across different subgroups over time. Regulatory oversight, such as mandatory fairness reporting and model explainability standards, can further enforce accountability, while interdisciplinary ethics boards in AI projects help ensure that social, cultural, and legal dimensions are adequately addressed. Together, these strategies foster transparency, improve model trustworthiness, and contribute to more equitable mental health outcomes. 124
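Sample reweighting, one of the in-training corrections mentioned above, can be sketched as inverse-frequency weights that make each demographic group contribute equally to the training loss. The group labels are hypothetical. The resulting list could be passed to any learner that accepts per-sample weights, such as the `sample_weight` argument of scikit-learn's `LogisticRegression.fit`.

```python
from collections import Counter
from typing import List


def inverse_frequency_weights(groups: List[str]) -> List[float]:
    """Per-sample weights so that every group's weights sum to the same total."""
    counts = Counter(groups)
    n_samples, n_groups = len(groups), len(counts)
    # Each group's weights sum to n_samples / n_groups, whatever its size.
    return [n_samples / (n_groups * counts[g]) for g in groups]


groups = ["majority"] * 6 + ["minority"] * 2
weights = inverse_frequency_weights(groups)
```

Because the weights still sum to the number of samples, the overall scale of the loss is unchanged; only the balance between groups shifts.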

Interpretability

The “black-box” nature of many AI models poses challenges for their adoption in clinical settings, where transparency and explainability are paramount.

Challenges of black-box models

One of the most critical limitations of advanced AI systems, particularly DL models, lies in their inherent lack of transparency, often referred to as the “black-box” problem. These models, including CNNs and RNNs, can identify subtle patterns across vast, high-dimensional data sets such as voice recordings, social media posts, or facial micro-expressions. However, the internal logic by which they arrive at specific predictions or classifications is typically not accessible or interpretable to human users. In the context of mental health care, where clinical decisions must be justified and understood, this opacity poses a significant barrier to adoption. Unlike traditional diagnostic tools that are based on interpretable criteria (e.g. DSM-5 checklists), black-box AI outputs cannot be easily explained to clinicians, patients, or regulatory bodies, raising questions about accountability and clinical validity. 130

This gap between model output and clinical understanding can result in missed opportunities for intervention, when clinicians discount alerts they cannot verify, or in inappropriate reliance on potentially flawed outputs. Moreover, legal and ethical implications emerge when decisions are made based on systems that cannot offer a rationale. As mental health professionals are ultimately responsible for patient outcomes, reliance on non-transparent tools without clear decision paths may conflict with clinical guidelines and malpractice standards. 131

Recent studies have emphasized the importance of bridging this gap by developing models that balance predictive power with interpretability. For example, attention-based neural networks and post-hoc explanation methods such as SHAP and LIME have been applied in mental health contexts to clarify which features (e.g. tone of voice, specific keywords, or behavioral patterns) contributed to a prediction. 132 However, these approaches are still evolving and may not fully resolve the trust deficit. Until interpretability becomes a standard feature of AI tools used in psychiatry and psychology, the black-box challenge will remain a significant obstacle to widespread clinical integration and acceptance. 130

XAI approaches

XAI has emerged as a critical area of research in response to the “black-box” nature of many AI models, particularly DL architectures. In the context of mental health care, where clinical decisions must be transparent and justifiable, XAI offers a way to make complex model outputs more understandable to clinicians, patients, and regulatory bodies. Unlike traditional ML models, which may rely on a small number of human-readable features, many DL systems generate decisions from millions of parameters, making it difficult to trace their reasoning. This opacity has raised concerns about accountability, especially when AI tools are used to support sensitive diagnoses like depression, anxiety, or suicidal ideation. 133

One study proposed an XAI-enhanced framework for mental health screening, demonstrating how model predictions could be accompanied by visual or textual explanations. The system integrated SHAP values to highlight which input features, such as changes in language sentiment, vocal tone, or behavioral data, contributed most significantly to a diagnosis of depression or anxiety. By surfacing these model contributions, the framework enabled clinicians to better understand the rationale behind each prediction, improving confidence in AI-assisted tools. Importantly, the model was evaluated not only on accuracy but also on the clarity and usefulness of its explanations from the perspective of practicing mental health professionals. 131

Beyond SHAP, other XAI techniques such as LIME, attention visualization in neural networks, and counterfactual reasoning are being explored for mental health AI. These methods allow developers and clinicians to examine how small changes in input data can alter predictions, revealing underlying model behavior and potential biases. For instance, an AI model trained to assess social media data for depression risk could use LIME to show which phrases or keywords were most influential in the model’s classification. This can help identify whether the model is relying on clinically relevant indicators or spurious correlations, thereby guiding improvements in model design and data curation. 134
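The perturbation idea behind LIME can be illustrated with an even simpler technique, leave-one-out occlusion: remove each word in turn and measure how much the model's score drops. The sketch below applies it to a hypothetical linear keyword scorer (`TOY_WEIGHTS`) standing in for a trained classifier. For a linear model the attribution simply recovers each word's weight, whereas LIME fits a local surrogate model over many random perturbations and SHAP averages contributions over feature coalitions.

```python
from typing import List, Tuple

# Hypothetical linear keyword weights standing in for a trained classifier.
TOY_WEIGHTS = {"hopeless": 1.2, "tired": 0.6, "alone": 0.8, "friends": -0.5}


def score(tokens: List[str]) -> float:
    """Toy risk score: sum of the weights of known keywords."""
    return sum(TOY_WEIGHTS.get(t, 0.0) for t in tokens)


def occlusion_attributions(text: str) -> List[Tuple[str, float]]:
    """Leave-one-token-out attribution: score drop when each token is removed."""
    tokens = text.lower().split()
    base = score(tokens)
    attributions = []
    for i, tok in enumerate(tokens):
        reduced = tokens[:i] + tokens[i + 1:]
        attributions.append((tok, base - score(reduced)))
    # Most influential tokens first, whether they raise or lower the score.
    return sorted(attributions, key=lambda pair: abs(pair[1]), reverse=True)


ranked = occlusion_attributions("feeling hopeless and tired without friends")
```

A negative attribution (here for "friends") means the word pushed the score down, which is exactly the kind of signal a clinician could check for clinical plausibility.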

Despite these advancements, the implementation of XAI in clinical mental health settings still faces significant hurdles. One challenge is the trade-off between model complexity and interpretability: simpler models are easier to explain but may lack the predictive power of more complex architectures. Additionally, there is a risk that superficial interpretability tools might lend a false sense of transparency, potentially leading to overreliance on flawed models. As such, future efforts must prioritize not only the development of technically sound XAI methods but also the co-design of these tools with mental health practitioners to ensure they align with real-world clinical reasoning and decision-making processes.135,136

Clinical integration

For AI systems to be effectively integrated into mental health care, they must align with existing clinical workflows and complement, rather than disrupt, clinician decision-making. Mental health professionals rely heavily on clinical judgment, therapeutic relationships, and nuanced understanding of individual patient histories. If AI tools are perceived as intrusive, opaque, or inconsistent with standard practices, their utility in real-world settings diminishes. Therefore, seamless integration requires AI models to provide actionable insights in a format that clinicians can readily interpret and incorporate into their care routines. 137

Interpretable models play a critical role in fostering clinician trust and adoption. Unlike “black-box” systems that offer predictions without explanation, interpretable AI enables mental health professionals to trace how and why a model arrived at a particular conclusion. For example, attention-based neural networks or decision trees can highlight which symptoms or behavioral indicators contributed most to a diagnosis or risk score. This level of transparency not only improves clinical confidence but also facilitates more informed discussions with patients, particularly when AI-generated insights influence treatment recommendations. 138

Moreover, clinical integration involves more than model transparency: it also requires interoperability with EHR systems, alignment with ethical guidelines, and adaptability across diverse patient populations. AI tools must be rigorously validated in clinical trials, tested across multiple settings, and continuously monitored for performance drift over time. 139 Institutions should also provide training for mental health professionals on how to interpret and apply AI outputs. By ensuring these systems are intuitive, reliable, and context-aware, we can bridge the gap between cutting-edge technology and compassionate, patient-centered care. 140
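Monitoring for performance drift often begins by checking whether the inputs or risk scores seen in deployment still resemble the validation data. The population stability index (PSI) is one common summary: the sketch below bins a reference sample and a live sample with the same cut points and sums (live - reference) × ln(live / reference) over the bins. The cut points, scores, and function name are hypothetical, and the frequently quoted rule of thumb that a PSI above about 0.25 signals a major shift is a heuristic, not a standard.

```python
import math
from bisect import bisect_right
from typing import List, Sequence


def population_stability_index(reference: Sequence[float], live: Sequence[float],
                               cut_points: List[float]) -> float:
    """PSI between a reference sample and a live sample, binned by sorted cut points."""
    n_bins = len(cut_points) + 1

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * n_bins
        for v in values:
            # bisect_right maps each value to a bin; the outermost bins are open-ended.
            counts[bisect_right(cut_points, v)] += 1
        # A small floor avoids division by zero and log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    ref, cur = proportions(reference), proportions(live)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


reference_scores = [0.1] * 50 + [0.6] * 50
live_scores = [0.1] * 10 + [0.6] * 90
psi = population_stability_index(reference_scores, live_scores, cut_points=[0.5])
```

A rising PSI does not prove that accuracy has fallen, but it is a cheap trigger for re-checking performance against fresh labeled data, including per subgroup.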

Representativeness and selection bias

The evidence synthesized here is disproportionately drawn from high-income countries (HICs) and academic/tertiary care settings, with comparatively fewer studies originating from LMICs and community services. Many cohorts reflect convenience samples with stable access to smartphones, wearables, or well-curated EHRs, which may under-represent populations experiencing digital exclusion or fragmented care. Age distributions are often adult-dominant, with limited youth and older-adult representation, and demographic reporting is inconsistently granular, constraining subgroup assessment. As a result, model performance and implementation findings may not generalize across health systems, languages, devices, or culturally mediated symptom expression.

Language and indexing bias

Our search was restricted to English-language sources, which likely excluded relevant studies published in other languages or local journals/repositories, particularly from LMIC contexts. This introduces language and indexing bias and may over-weight HIC evidence. Future reviews should incorporate multilingual searches (e.g. regional databases) and translation workflows, and primary studies should report standardized demographics and conduct external validation across diverse sites to strengthen generalizability.

Future directions

Table 4 summarizes future directions for AI in mental health, highlighting key objectives, implementation needs, and expected impact. The table also lists relevant references supporting each direction, guiding the development of more ethical, interpretable, and inclusive AI systems in mental health care.

Key future directions in AI applications for mental health, with associated goals and implementation needs.

AI: artificial intelligence; DL: deep learning; XAI: explainable artificial intelligence; EHRs: electronic health records.

Culturally sensitive AI

As AI systems become more integrated into mental health care, ensuring cultural sensitivity is paramount. Mental health experiences and expressions vary widely across cultures, and AI models must account for these differences to provide effective and equitable care.

Recent studies highlight the importance of training AI models on diverse data sets that encompass various cultural contexts. For instance, a systematic review emphasized that generative AI tools often lack cultural competency, leading to misinterpretations or inappropriate recommendations in mental health assessments. To address this, researchers advocate for the inclusion of culturally diverse data during model development and the incorporation of feedback from diverse user groups.141,142 As illustrated in Figure 5, the integration of AI into EHRs offers numerous advantages, such as predictive analysis, NLP, and improved data fetching, each of which can contribute to culturally sensitive and efficient mental health care.

Future prospects of AI in EHRs, highlighting potential benefits across clinical and analytical domains. AI: artificial intelligence; EHRs: electronic health records.

Moreover, initiatives like the NIH-funded project on improving patient-provider cultural attunement using AI are exploring ways to enhance therapeutic alliances through culturally sensitive AI interventions. These efforts aim to ensure that AI tools not only understand linguistic nuances but also respect cultural values and norms, thereby improving inclusivity in mental health care. 143

Multimodal emotion analysis

Understanding human emotions is a complex task that benefits from analyzing multiple modalities, such as facial expressions, speech tone, and textual content. Multimodal emotion analysis leverages these diverse data sources to provide a more comprehensive assessment of an individual’s mental state. 144

Advancements in this area include the development of models that integrate EEG and electrocardiogram data with traditional modalities to enhance emotion recognition accuracy. Systematic reviews have identified effective AI-based multimodal dialogue systems capable of emotion recognition, highlighting the potential of these technologies in mental health interventions. 144

However, challenges remain in ensuring the accuracy and reliability of these systems across diverse populations. Future research should focus on developing models that can adapt to individual differences and cultural variations in emotional expression, thereby enhancing the effectiveness of AI-based mental health assessments.

Figure 6 illustrates the general workflow of an AI-driven multimodal emotion analysis system. It begins with the analysis of input data such as text, speech, and physiological signals, then proceeds through understanding, feature extraction, and algorithmic processing stages to generate emotion-related outputs.

Artificial intelligence (AI)-driven pipeline for multimodal emotion analysis, illustrating five key stages from input analysis to output generation.
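Figure 6 does not specify how the modalities are combined. The sketch below assumes one common option, decision-level (late) fusion: each modality is first scored by its own upstream classifier (not shown), and the resulting probability distributions are combined by a weighted average before the output stage picks a label. The emotion label set, the modality names, the weights, and the input probabilities are all hypothetical and chosen only for illustration.

```python
from typing import Dict, Tuple

# Hypothetical emotion label set; a real system would define its own.
EMOTIONS = ("neutral", "sad", "anxious", "happy")


def normalize(probs: Dict[str, float]) -> Dict[str, float]:
    """Rescale one modality's scores so they sum to 1 over EMOTIONS."""
    total = sum(probs.get(e, 0.0) for e in EMOTIONS)
    if total <= 0:
        raise ValueError("modality scores must sum to a positive value")
    return {e: probs.get(e, 0.0) / total for e in EMOTIONS}


def late_fusion(
    modality_probs: Dict[str, Dict[str, float]],
    weights: Dict[str, float],
) -> Dict[str, float]:
    """Weighted average of per-modality probabilities (decision-level fusion).

    Modalities missing from the input, or without a positive weight, are
    skipped, and the remaining weights are renormalized to sum to 1.
    """
    fused = {e: 0.0 for e in EMOTIONS}
    weight_sum = 0.0
    for modality, probs in modality_probs.items():
        w = weights.get(modality, 0.0)
        if w <= 0:
            continue  # ignore modalities without a positive weight
        p = normalize(probs)
        for e in EMOTIONS:
            fused[e] += w * p[e]
        weight_sum += w
    if weight_sum == 0:
        raise ValueError("no weighted modality available")
    return {e: v / weight_sum for e, v in fused.items()}


def predict_emotion(
    modality_probs: Dict[str, Dict[str, float]],
    weights: Dict[str, float],
) -> Tuple[str, Dict[str, float]]:
    """Output stage: return the top fused label and the full distribution."""
    fused = late_fusion(modality_probs, weights)
    label = max(fused, key=fused.get)
    return label, fused


# Illustrative (made-up) outputs of hypothetical per-modality classifiers.
inputs = {
    "text": {"neutral": 0.1, "sad": 0.6, "anxious": 0.2, "happy": 0.1},
    "speech": {"neutral": 0.2, "sad": 0.3, "anxious": 0.4, "happy": 0.1},
    "physiological": {"neutral": 0.3, "sad": 0.2, "anxious": 0.4, "happy": 0.1},
}
weights = {"text": 0.5, "speech": 0.3, "physiological": 0.2}

label, fused = predict_emotion(inputs, weights)
print(label, {e: round(p, 2) for e, p in fused.items()})
```

With these made-up inputs the fused distribution favours "sad" (about 0.43), even though the speech and physiological channels lean toward "anxious", because the text channel carries the largest weight. Late fusion keeps each modality's model independent, so an unavailable channel (for example, no wearable data) can simply be dropped; the trade-off is that it ignores cross-modal interactions that feature-level (early) fusion could capture.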

Clinician-AI collaboration

Integrating AI into clinical practice requires a collaborative approach where AI serves as a decision support tool rather than a replacement for human clinicians. This collaboration can enhance trust and effectiveness in mental healthcare settings.

Studies show that AI-enabled clinical decision support systems (AI-CDSS) have considerable potential to assist healthcare professionals by providing personalized treatment recommendations and enhancing shared decision-making between clinicians and patients. These systems can analyze large volumes of patient data to generate evidence-based insights, potentially improving diagnostic accuracy and treatment outcomes. However, integrating AI-CDSS into routine clinical workflows presents significant challenges. A primary concern among clinicians is the trustworthiness of AI-generated outputs, especially in high-stakes medical situations. Many healthcare providers remain reluctant to rely solely on AI recommendations and stress the need for human validation and oversight. The prevailing view is that AI should complement, not replace, clinical judgment. This underscores the need for transparency, interpretability, and effective collaboration between AI systems and medical professionals to ensure successful adoption and seamless integration into clinical practice. 145

To address these concerns, future efforts should prioritize the development of explainable AI models that offer clear and interpretable insights. Additionally, comprehensive training programs that teach clinicians to use AI tools effectively, combined with incorporating clinician feedback into AI system design, can promote smoother integration. By positioning AI as a complement to human expertise, mental health services may achieve improved diagnostic accuracy and more tailored treatment planning.

Conclusion

AI is transforming the field of digital health by enabling earlier detection of mental health conditions, offering scalable support tools, and providing continuous, real-time monitoring of emotional and behavioral changes. Our analysis of non-generative AI techniques (ML, DL, NLP, and multimodal approaches) shows how they can support clinical decision-making, expand access through digital resources, and improve diagnostic precision. Nonetheless, challenges related to accessibility, algorithmic bias, data security, and cultural diversity remain. To safeguard patient trust and clinical efficacy, researchers and practitioners should co-design AI tools, prioritize explainability, and conduct post-deployment evaluation. For implementation, it is crucial to provide appropriate data sets and to integrate AI outputs into existing clinical procedures. To ensure responsible implementation, policymakers and healthcare providers should mandate data linkage records, confidentiality-preserving mechanisms (such as federated learning), and fairness audits. Sustained funding for small-scale, culturally sensitive AI solutions is also required. With careful oversight and collaboration, AI has the potential to become a reliable adjunct to personalized mental health services.