Abstract

Introduction

Endometriosis, a chronic inflammatory condition characterized by the presence of endometrial-like tissue outside the uterine cavity, contributes to pelvic pain, infertility, and reduced quality of life.1–5 Globally, it affects approximately 10% of women of reproductive age, equating to around 190 million individuals, with similar prevalence rates in China, where the age-standardized incidence has increased by 3.51% since 1990. Despite this substantial burden, diagnosis is often delayed by 7–10 years because of nonspecific symptoms and limited awareness, which compounds the physical and psychological impact.6,7

In recent years, social media has emerged as a pivotal channel for health information dissemination, particularly for conditions like endometriosis, where patients seek peer support and educational resources.8 Platforms such as TikTok (Douyin in China) and Bilibili, with over 600 million and 300 million active users respectively, facilitate rapid sharing of short videos, enabling accessible health education. The two platforms represent distinct ecosystems in the Chinese social media landscape, and their characteristics provide necessary context for interpreting engagement metrics. TikTok (Douyin) is characterized by short-form videos driven by algorithmic recommendations for a broad user base,9,10 whereas Bilibili focuses on medium-to-long-form content with a unique “danmu” (bullet screen) commenting system, attracting a predominantly younger demographic.11,12 These structural differences likely influence how health information is disseminated and consumed on each platform. However, the absence of formal medical editorial oversight or pre-publication verification on these platforms raises concerns about content accuracy and reliability, potentially leading to misinformation that influences patient decisions.13,14

Prior studies have examined health video quality on these platforms for conditions such as hypertension, thyroid eye disease, and cataract,15–17 consistently finding moderate to low quality, with professional sources outperforming others and engagement not correlating with reliability. Nevertheless, endometriosis-specific analyses on Chinese platforms remain scarce. This study addresses this gap by assessing video adherence to clinical health information standards and engagement on TikTok and Bilibili, aiming to inform strategies for improving digital health communication.

To address the research gap on the quality of endometriosis information delivered via short-video platforms, this study conducts a cross-sectional content analysis of videos on TikTok and Bilibili. We evaluate video quality and reliability using validated instruments—the Global Quality Score (GQS), modified DISCERN (mDISCERN), Journal of the American Medical Association (JAMA) benchmarks, and the Video Information and Quality Index (VIQI). By systematically appraising platform content, we aim to identify shortcomings in current health communication practices. We advance three hypotheses. First, content quality differs between platforms because of differences in user demographics and recommendation algorithms. Second, videos produced by healthcare professionals and institutions achieve higher quality scores than videos from nonprofessional sources. Third, user engagement metrics such as likes and shares do not correlate with objective quality scores.

Method

Video selection and data extraction

Videos were searched using the simplified Chinese keyword “子宫内膜异位症” (endometriosis) on both TikTok and Bilibili. Searches were conducted in incognito mode without user login to prevent algorithmic personalization and ensure reproducibility. The top 100 videos from each platform were retrieved based on the platforms’ default sorting algorithms, which prioritize relevance and popularity. Inclusion criteria required videos to be publicly accessible, in Chinese, and to directly address aspects of endometriosis such as etiology, symptoms, diagnosis, treatment options, prevention strategies, or patient experiences. Videos were excluded if they were advertisements, promotional content, duplicates, or irrelevant to endometriosis. Five videos were excluded, leaving 195 videos for analysis (99 from TikTok and 96 from Bilibili), as depicted in the flowchart (Figure 1). For each included video, data collection was conducted in strict accordance with platform guidelines: videos were not downloaded, no personal data (such as usernames or identifiable information) were stored, and collection was limited to publicly available metrics. The following variables were manually extracted: platform (TikTok or Bilibili); upload date; video duration in seconds; number of likes; number of collections (favorites or bookmarks); number of comments; number of shares; uploader type, classified as professional individuals (licensed physicians, nurses, or health experts with verifiable credentials), nonprofessional individuals (patients, laypersons, or influencers without medical qualifications), or professional institutions (hospitals, clinics, or medical organizations); and content type, categorized as disease knowledge, treatment, Traditional Chinese Medicine, or other.

Study selection flow for short videos on endometriosis. Initial retrieval identified 200 records using the Chinese keyword for endometriosis, comprising 100 TikTok and 100 Bilibili videos.

Quality and reliability assessment

Video quality and reliability were evaluated using four validated instruments. The Global Quality Score (GQS)18,19 is a 5-point Likert scale assessing overall educational value: 1 indicates poor quality and poor flow with completely useless information, 2 represents generally poor quality and poor flow with limited usefulness, 3 denotes moderate quality and suboptimal flow with somewhat useful information, 4 signifies good quality and generally good flow with useful content, and 5 reflects excellent quality and excellent flow with highly useful information. The modified DISCERN (mDISCERN)20,21 is a 5-item binary tool (1 point for yes, 0 for no) that evaluates reliability by asking whether aims are clear and achieved, reliable sources of information are used, the information is balanced and unbiased, additional sources are listed for reference, and areas of uncertainty are mentioned, yielding a total score from 0 to 5. The JAMA benchmarks22 assess credibility on a 4-point scale, with 1 point awarded each for authorship (provision of authors’ names, affiliations, and credentials), attribution (clear listing of references and sources with copyright information), disclosure (prominent declaration of ownership, sponsorship, funding, or conflicts of interest), and currency (indication of content posting and update dates), resulting in scores from 0 to 4. The Video Information and Quality Index (VIQI)23 is a 20-point scale comprising four domains, each rated from 1 (poor) to 5 (excellent): flow of the video (smoothness and logical progression), clarity of information (accuracy and comprehensibility), quality of production (sound and image resolution), and precision (alignment between title, description, and content). The total score is the sum of the domain ratings. Two board-certified gynecologists with more than 5 years of experience independently scored all videos while blinded to each other's assessments to reduce bias. Reviewers watched each video in full at normal speed, pausing as needed to take notes, and applied the scoring tools according to a predefined rubric.
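The scoring rules described above can be illustrated with a short sketch. This is not the authors' rubric or code (the study reports using R); it is a minimal Python illustration that assumes hypothetical item names such as `clear_aims` and a hypothetical example video, and only encodes the point ranges stated in the text (mDISCERN 0 to 5, JAMA 0 to 4, VIQI as the sum of four domains rated 1 to 5).

```python
# Hypothetical item keys; the labels paraphrase the criteria listed in the text.
MDISCERN_ITEMS = ("clear_aims", "reliable_sources", "balanced",
                  "additional_sources", "uncertainty_mentioned")
JAMA_ITEMS = ("authorship", "attribution", "disclosure", "currency")
VIQI_DOMAINS = ("flow", "clarity", "production", "precision")


def binary_total(answers, items):
    """Sum yes/no items, awarding 1 point per 'yes' and 0 per 'no'."""
    missing = [item for item in items if item not in answers]
    if missing:
        raise ValueError(f"missing items: {missing}")
    return sum(1 for item in items if answers[item])


def viqi_total(ratings):
    """Sum the four VIQI domain ratings, each of which must lie in 1..5."""
    for domain in VIQI_DOMAINS:
        if not 1 <= ratings[domain] <= 5:
            raise ValueError(f"{domain} rating must be between 1 and 5")
    return sum(ratings[domain] for domain in VIQI_DOMAINS)


# Hypothetical ratings for one video, invented purely for illustration.
example_mdiscern = {"clear_aims": True, "reliable_sources": True,
                    "balanced": True, "additional_sources": False,
                    "uncertainty_mentioned": False}
example_jama = {"authorship": True, "attribution": False,
                "disclosure": False, "currency": True}
example_viqi = {"flow": 4, "clarity": 3, "production": 4, "precision": 3}

mdiscern_score = binary_total(example_mdiscern, MDISCERN_ITEMS)
jama_score = binary_total(example_jama, JAMA_ITEMS)
viqi_score = viqi_total(example_viqi)
print(mdiscern_score, jama_score, viqi_score)
```

Keeping the item lists as explicit tuples makes an incomplete rating fail loudly instead of silently lowering a total, which matters when two raters score independently.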

Statistical analysis

Data were analyzed using R (version 4.2.3) with the packages stats for basic statistical tests, irr for inter-rater reliability calculations, and dplyr for data manipulation. Normality of continuous variables was assessed using the Shapiro–Wilk test (shapiro.test). Continuous variables are presented as mean ± standard deviation (SD) for normally distributed data or median [interquartile range (IQR)] for non-normal distributions, and categorical variables as frequencies and percentages. Group comparisons for continuous variables used the Wilcoxon rank-sum test (wilcox.test) for two groups and the Kruskal–Wallis test (kruskal.test) for more than two groups, followed by Dunn's post hoc test if significant. Associations between variables were examined using Spearman's rank correlation coefficient. All tests were two-sided, with statistical significance set at
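To make the descriptive summary and the two-group comparison concrete, the following is a minimal Python sketch using only the standard library. It is not the authors' R code. The like counts are hypothetical values invented for illustration, and the function names `median_iqr` and `rank_sum_u` are assumptions. The sketch computes a median with quartiles and the rank-sum U statistic only; it does not compute a p-value.

```python
from statistics import quantiles


def median_iqr(values):
    """Return (median, Q1, Q3) using linear interpolation between order
    statistics (the 'inclusive' method of statistics.quantiles)."""
    q1, q2, q3 = quantiles(values, n=4, method="inclusive")
    return q2, q1, q3


def rank_sum_u(x, y):
    """U statistic for sample x: the number of (xi, yj) pairs with xi > yj,
    counting each tie as one half."""
    u = 0.0
    for xi in x:
        for yj in y:
            if xi > yj:
                u += 1.0
            elif xi == yj:
                u += 0.5
    return u


# Hypothetical per-video like counts for two groups (illustration only).
likes_group_a = [10, 20, 30, 40, 50]
likes_group_b = [5, 15, 25]

print(median_iqr(likes_group_a))
print(rank_sum_u(likes_group_a, likes_group_b))
```

The pairwise-count form of U is quadratic in sample size but easy to check by hand, and the two U statistics of a comparison always sum to the product of the two sample sizes.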

Ethical considerations

This study was conducted in accordance with the Declaration of Helsinki and ethical guidelines for internet-mediated research. The study protocol was reviewed by the Ethics Review Committee of Huzhou Maternity and Child Health Care Hospital, which granted a formal exemption from full review (Waiver No. 2026-J-S-002), as the study involved the analysis of publicly available secondary data. All data were ethically sourced, and all videos analyzed were publicly accessible at the time of data collection. Individual informed consent was not required as no direct interaction with human participants occurred, and no personally identifiable information was published. Data collection and analysis were conducted in full compliance with the Terms of Service of both TikTok (Douyin) and Bilibili.

Result

Engagement and sources

Across 195 videos, platform-level engagement patterns differed markedly. Compared with TikTok, Bilibili videos accrued fewer likes, collections, comments, and shares on a per-video median basis yet were substantially longer in duration (Table 1). Median likes were 18.5 on Bilibili versus 355 on TikTok, median collections were 30.5 versus 118, median comments were 1 versus 57, and median shares were 0 versus 101. All between-platform differences in these four metrics were significant with

Platform-level engagement and video duration for endometriosis-related short videos on Bilibili and TikTok.

Wilcoxon rank-sum test. Likes, collections, comments, and shares denote per-video engagement counts. Duration is measured in seconds.

Uploader composition was dominated by professional individuals. As shown in Figure 2A, professional individuals accounted for the vast majority of uploads (163 of 195), followed by nonprofessional individuals (23) and professional institutions (9). The distribution of uploader types differed by platform (Figure 2B). On Bilibili, professional individuals represented 76% of uploaders, nonprofessional individuals 18.8%, and professional institutions 5.2%. On TikTok, professional individuals constituted 90.9%, nonprofessional individuals 5.1%, and professional institutions 4%. The between-platform distribution was significantly different by Fisher's exact test with
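As a quick arithmetic check on the overall uploader composition, the counts reported above (163, 23, and 9 of 195) can be converted to percentages. The sketch below is illustrative Python, not part of the study's analysis, and the variable names are assumptions.

```python
# Overall uploader counts as reported in the text.
uploader_counts = {
    "professional individuals": 163,
    "nonprofessional individuals": 23,
    "professional institutions": 9,
}

total_videos = sum(uploader_counts.values())

# Percentage share of each uploader type, rounded to one decimal place.
uploader_shares = {
    name: round(100 * count / total_videos, 1)
    for name, count in uploader_counts.items()
}

print(total_videos)
for name, share in uploader_shares.items():
    print(f"{name}: {share}%")
```

The counts sum to the 195 included videos, and the share for professional individuals matches the 83.6% cited in the Discussion.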

Distribution of uploader types overall and by platform. (A) shows the overall composition of uploader categories among 195 videos, with professional individuals constituting the majority, followed by nonprofessional individuals and professional institutions. (B) presents the proportional distribution of uploader types within each platform Bilibili and TikTok. The between-platform distribution differed significantly by Fisher's exact test

Engagement metrics varied by uploader type (Table 2). Relative to nonprofessional individuals, content from professional individuals attracted higher median likes (85 versus 9), higher collections (69 versus 18), and higher shares (13 versus 0), with Kruskal–Wallis

Engagement and duration by uploader type across all videos.

Kruskal–Wallis test. Uploader types comprise nonprofessional individuals, professional individuals, and professional institutions. Duration is measured in seconds.

Together, the platform contrasts and uploader effects indicate that audience interaction is shaped both by the structural characteristics of short-video ecosystems and by the credibility signaled by uploader identity. The lack of a significant difference in comments across uploader types, despite pronounced differences in likes and shares, may reflect divergent user behaviors across platforms and content styles rather than uniformly higher participatory discourse.

Content themes and engagement

Video content was dominated by disease knowledge. As illustrated in Figure 3A, nearly two thirds of videos focused on disease knowledge (125 of 195), followed by treatment (47), Traditional Chinese Medicine (17), and other topics (6). The composition of content differed significantly by platform (Figure 3B). On Bilibili, disease knowledge accounted for 81.2%, whereas on TikTok it represented 47.5%, with a corresponding enrichment of treatment content on TikTok (42.4%). Fisher's exact testing indicated a significant between-platform difference with

Distribution of video content categories overall and by platform. (A) Overall composition of content among 195 videos, categorized as disease knowledge, treatment, Traditional Chinese Medicine, and other. (B) Proportional distribution of content categories within Bilibili and TikTok. The composition differed significantly between platforms by Fisher's exact test

Engagement varied substantially across content categories (Table 3). Treatment videos received the highest audience response, with markedly elevated medians for likes (445), collections (134), comments (79), and shares (99), all significantly greater than other categories by Kruskal–Wallis testing

Engagement and duration by video content category across all videos.

Kruskal–Wallis test. Content categories include disease knowledge, Traditional Chinese Medicine, treatment, and other. Duration is measured in seconds.

Video duration also differed by content type, with

Quality assessment overview

Platform comparisons indicated modest but meaningful differences in informational quality (Table 4). Bilibili videos achieved higher median scores on GQS and mDISCERN than TikTok with

Quality metrics by platform for endometriosis-related short videos on Bilibili and TikTok.

Wilcoxon rank-sum test. Quality instruments were the Global Quality Score (GQS), modified DISCERN (mDISCERN), Journal of the American Medical Association (JAMA) benchmark, and Video Information and Quality Index (VIQI).

Uploader type was strongly associated with quality metrics (Table 5 and Figure 4). Videos from professional individuals and professional institutions scored higher than those from nonprofessional individuals across all instruments. Median GQS was 3 among professional creators versus 2 among nonprofessionals with Kruskal–Wallis

Quality metrics by uploader type. (A) Global Quality Score (GQS) by uploader category. (B) Modified DISCERN (mDISCERN) by uploader category. (C) Journal of the American Medical Association (JAMA) benchmark by uploader category. (D) Video Information and Quality Index (VIQI) by uploader category. Boxplots display distributions with individual data points overlaid; annotations indicate overall ANOVA results and multiple-comparison markers.

Quality metrics by uploader type across all videos.

Kruskal–Wallis test. Uploader types comprise nonprofessional individuals, professional individuals, and professional institutions. Quality instruments were the Global Quality Score (GQS), modified DISCERN (mDISCERN), Journal of the American Medical Association (JAMA) benchmark, and Video Information and Quality Index (VIQI).

The dispersion of scores in Figure 4 underscores these contrasts. Nonprofessional content clustered at lower values with narrow spread for GQS, mDISCERN, and JAMA, consistent with uniformly limited reference to evidence and source information. Professional individuals displayed consistently higher central tendencies and tighter distributions for GQS and mDISCERN, suggesting more reliable and balanced information delivery. Professional institutions exhibited comparable or slightly higher medians than professional individuals for VIQI and GQS, albeit with greater variability, which may reflect heterogeneous production standards across institutional accounts.

Overall, the convergence of platform-level and uploader-level analyses indicates that both ecosystem context and source identity shape the quality of short-video health information. The absence of a platform difference in VIQI alongside significant differences in GQS, mDISCERN, and JAMA implies that surface production features do not guarantee substantive informational quality. These patterns reinforce the study hypothesis that professional provenance is associated with higher objective quality while also highlighting opportunities for platforms to incentivize evidence citation and source disclosure.

Correlations among metrics

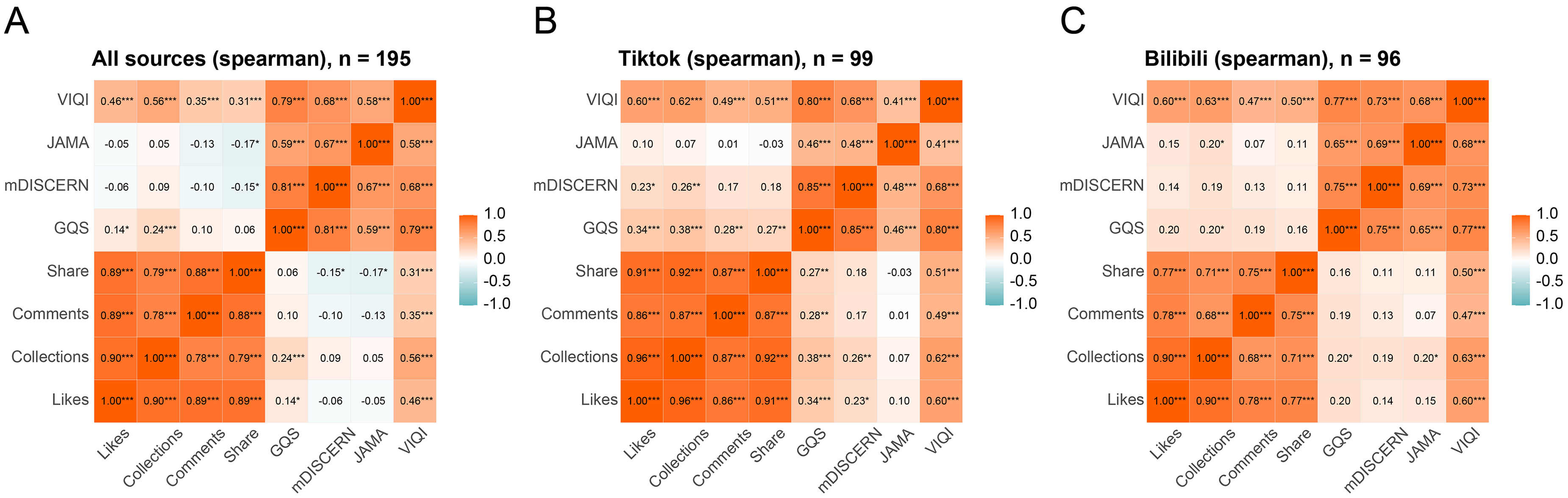

Across all videos, engagement indicators were strongly intercorrelated, while their relationships with objective quality were weak or inconsistent (Figure 5A). Likes, collections, comments, and shares showed high positive correlations with one another, with Spearman rho approximately 0.78–1.00, all

Spearman correlation matrices for engagement and quality metrics. (A) All videos combined

Platform-stratified analyses revealed similar patterns with some divergences (Figure 5B and Figure 5C). On TikTok, mDISCERN, GQS, JAMA, and VIQI were positively interrelated (rho approximately 0.41–1.00), and each showed only weak associations with engagement metrics. Shares on TikTok were essentially uncorrelated with JAMA, reinforcing the decoupling between disclosure standards and virality. On Bilibili, quality instruments were again moderately to strongly correlated with one another, and their associations with engagement were minimal. The magnitude of engagement–engagement correlations was slightly lower than on TikTok yet remained substantial. Taken together, these matrices corroborate the hypothesis that user engagement is a poor proxy for objective informational quality, while production quality aligns only partially with evidentiary rigor and transparency.
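For readers unfamiliar with how such correlation matrices are built, Spearman's rho is the Pearson correlation of the rank-transformed values, with tied observations sharing their average rank. The sketch below is a minimal standard-library Python illustration, not the authors' R code. The function names and the five-video data set are hypothetical and were invented for illustration; they are not study data.

```python
def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the block while the next sorted value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1
        for pos in range(start, end + 1):
            ranks[order[pos]] = shared_rank
        start = end + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sum((a - mean_x) ** 2 for a in x) ** 0.5
    sd_y = sum((b - mean_y) ** 2 for b in y) ** 0.5
    if sd_x == 0 or sd_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (sd_x * sd_y)


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical values for five videos (illustration only).
likes = [5, 40, 300, 1200, 90]
shares = [0, 3, 80, 400, 10]
gqs = [3, 2, 3, 2, 4]

print(spearman_rho(likes, shares))  # engagement vs engagement
print(spearman_rho(likes, gqs))     # engagement vs quality
```

Because rho depends only on ranks, heavily skewed engagement counts do not dominate the coefficient, which is one reason rank correlation suits like and share counts.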

Discussion

This cross-sectional analysis demonstrates that endometriosis-related videos on TikTok and Bilibili are characterized by moderate overall quality, with significant variations attributable to platform architecture and uploader credentials. Bilibili videos exhibited superior scores on reliability (mDISCERN), transparency (JAMA), and global quality (GQS) compared to TikTok, consistent with prior evaluations of health content on these platforms. This disparity may stem from Bilibili's emphasis on longer-form content, which allows for more comprehensive explanations and source citations, whereas TikTok's short-video model favors succinct, engaging narratives that may sacrifice depth for accessibility. Conversely, TikTok's higher engagement metrics—likes, shares, comments, and collections—align with its algorithmic promotion of viral, emotionally resonant material, a feature that has been noted in studies of other health topics such as irritable bowel syndrome and radiotherapy.24–27 It is important to acknowledge a potential framework-content mismatch when applying tools like mDISCERN and JAMA—originally designed for formal medical information—to user-generated content. These platforms are often valued by patients for lived experience, emotional support, and peer validation, dimensions that clinical assessment tools may systematically penalize. Consequently, a “low quality” score in this study reflects a divergence from established clinical information standards rather than a lack of value for patient community building. However, it is crucial to distinguish between content that scores low due to its narrative nature and content that propagates misinformation. The primary risk arises not from the narrative format itself, but when such content contains unverified medical claims that could be interpreted as a substitute for professional medical advice.

The predominance of professional individuals as uploaders (83.6%) and their association with elevated quality scores across all metrics reinforce the value of expert involvement in digital health dissemination. Nonprofessional content, while comprising a smaller proportion, consistently scored lower, particularly in JAMA benchmarks for authorship and attribution, echoing findings from analyses of endometriosis information on platforms like Instagram and Facebook.28,29 This gradient suggests that credentialed sources are more likely to adhere to evidence-based standards, yet the persistence of nonprofessional videos highlights a potential vulnerability in user-generated ecosystems, where misinformation can proliferate amid limited oversight. Platform-specific uploader distributions, with TikTok favoring professionals and Bilibili hosting more nonprofessionals, may reflect differing community norms and algorithmic incentives, as observed in comparative studies of gastric cancer and gastrointestinal bleeding content.29,30

Content themes further illuminate user priorities, with disease knowledge dominating overall but treatment-focused videos eliciting the strongest engagement. This preference for actionable information mirrors patient journeys documented in social media analyses, where individuals with endometriosis seek practical guidance amid diagnostic delays and chronic symptoms. However, the shorter duration and lower quality of treatment videos raise concerns about incomplete or anecdotal advice, potentially exacerbating misinformation in a condition already plagued by diagnostic challenges. The inverse relationship between video length and engagement underscores a broader tension in short-video platforms: brevity enhances virality but may compromise informational completeness.

Critically, the weak correlations between engagement metrics and quality scores indicate that popularity is not a reliable indicator of accuracy or reliability. This decoupling, evident across both platforms, aligns with systematic reviews of health videos on social media, where viral content often prioritizes sensationalism over evidence. Recommendation algorithms on short-video platforms are designed to maximize watch time and interaction, often prioritizing visually stimulating or emotionally charged content over medically accurate but drier educational material. This algorithmic bias likely contributes significantly to the observed lack of correlation between information quality and user engagement. Such patterns pose risks for vulnerable audiences, as endometriosis patients frequently turn to social media for support and information amid gaps in traditional healthcare. The lack of correlation between engagement and quality scores likely reflects the dichotomy between user needs and clinical standards: users engage with content that offers emotional resonance and narrative support, which naturally differs from the rigid, evidence-based structures required by medical assessment frameworks. However, this disconnect remains critical to document, as patients may inadvertently treat highly engaging but anecdotal content as actionable medical advice.

These results have important implications for digital health strategies. Platforms like TikTok and Bilibili could enhance content moderation by promoting verified professional accounts and requiring source disclosures, thereby aligning engagement with quality. Clinicians should educate patients on evaluating online resources, perhaps integrating social media literacy into consultations. Moreover, collaborations between health organizations and influencers could amplify evidence-based messaging.

Limitations include the cross-sectional design, which captures a snapshot and may not reflect temporal changes in content. The focus on Chinese platforms limits generalizability of our findings to other linguistic and cultural contexts. The unique user demographics and algorithmic architectures of TikTok (Douyin) and Bilibili create a specific digital ecosystem that may not perfectly mirror Western platforms like YouTube or Instagram, although similar trends regarding misinformation appear in global studies. Subjective elements in scoring tools, despite high inter-rater reliability, introduce potential bias. Future research could employ longitudinal tracking, user surveys to assess impact on health behaviors, or interventions to improve content quality. Furthermore, the methodological framework applied here—utilizing GQS, mDISCERN, and VIQI—demonstrates high adaptability and can be effectively employed to evaluate health information quality across various other chronic conditions and emerging digital platforms.

Conclusion

This study provides a comprehensive evaluation of endometriosis-related videos on Bilibili and TikTok, revealing that platform architecture and uploader identity significantly influence information quality and engagement. Bilibili's ecosystem, characterized by longer video durations, was associated with superior reliability, transparency, and global quality scores compared to TikTok, which favored briefer, high-engagement content that often lacked evidentiary depth. A critical finding was the dominance of professional individuals and institutions in producing high-quality material, consistently outperforming nonprofessional creators across all objective benchmarks; however, this superior quality did not correlate with user engagement metrics like likes or shares. Instead, audience attention was disproportionately directed toward treatment-oriented and emotionally resonant content regardless of medical accuracy, highlighting a concerning decoupling where popularity serves as a poor proxy for clinical validity. Consequently, while short-video platforms offer accessible health education, the prevalence of general disease knowledge themes, contrasted with the high user demand for specific treatment advice, creates a vulnerability for patients seeking actionable guidance.

Footnotes

Funding

This work was supported by the Huzhou Science and Technology Bureau [Project number: 2023GYB21].

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Contributorship

ZL: conceptualization; methodology; data curation; supervision; writing—review and editing; critical revision for intellectual content. YM: data curation; investigation; formal analysis; visualization; writing—original draft. YL: resources; validation; data curation; project administration; writing—review and editing. All authors contributed to manuscript writing and editing and approved the final version for submission.