Abstract

Introduction

The rise of misinformation on social platforms is a serious challenge. About a fifth of Americans now obtain their news primarily from social platforms (Mitchell et al., 2020); however, because anyone can post anything, a user can easily encounter misinformation (Vosoughi et al., 2018; Grinberg et al., 2019). Platforms have recently committed to countering the spread of harmful misinformation, via methods such as informing news consumers that an item may be false or misleading, algorithmically demoting it, or removing it entirely (Facebook, 2020).

The ground truth of whether a news article merits one of these enforcement actions is not simple to define. Typical news articles contain multiple factual claims, some of them more prominent than others, some of them more factually accurate than others, and some of them more harmful than others in their impacts on readers who may be misled. Moreover, an article may mislead readers without making a factual claim, such as by asking a factual question in a way that invites an incorrect inference about the correct answer. Thus, assessments of misinformation often go beyond a binary notion of true and false to use multi-point scales and to include aspects such as misleadingness, harm, neutrality, completeness, accuracy, trustworthiness, and objectivity (Soprano et al., 2021; Barbera et al., 2020; Allen et al., 2021).

Crowdsourcing, or using pools of laypeople, has been shown to be effective for many labeling tasks (e.g., Mitra and Gilbert, 2015; Chung et al., 2019). Social media platforms have begun to explore ways to use crowd workers and platform users as part of their misinformation assessment processes. For example, Facebook implemented a process it called “Community Review” where crowd workers identified misinformation to route for final evaluation by fact-checking organizations (Silverman, 2019). Twitter’s Birdwatch program created a community of Twitter users who identify and label tweets that propagate misinformation (Coleman, 2021). Any process that involves broad participation, however, raises several concerns.

One concern is that lay raters will make judgments based on “gut reactions” to surface features, rather than determining the accuracy of factual claims. After all, misinformation circulates widely on social media platforms because users upvote and share it, often without considering it deeply or even reading it (Pennycook and Rand, 2019b), a consequence of social media designs that encourage engagement more than accuracy (Jahanbakhsh et al., 2021). There is reason, however, to be optimistic that this can be overcome. When people were primed to focus on accuracy, their intentions to share other articles became more correlated with the accuracy of those articles (Pennycook et al., 2019). Thus, when asked to rate whether an article contains misinformation, people may assess it differently than they do when they choose whether to click on or share an item.

Even when going beyond gut reactions, lay raters may lack the expertise to assess information in the way journalists and professional fact-checkers do. Wineburg and McGrew (2019) found that journalists assessing an article tend to search for external corroborating or contradictory information, including what the authors call “lateral reading” about the author or source as well as the contents. College students and even history professors, by contrast, tended to focus more on signals within an article itself, to the detriment of their assessments. Here, too, however, there is reason for optimism. In another study, the same research group found that after two 75-minute training sessions on searching for and interpreting external signals, students’ search practices and reasoning processes improved (McGrew et al., 2019).

A third concern is that lay raters may be ideologically motivated or biased. There are partisan divides in beliefs about some factual claims that have become politicized, such as the size of crowds at President Trump’s inauguration or whether wearing face masks significantly reduces the spread of COVID-19. Results of past research on the effects of partisanship on rater assessments of misinformation are mixed. At the level of sources rather than individual articles, Pennycook and Rand (2019a) found that Democratic and Republican crowd workers largely agree when distinguishing mainstream news sites from hyper-partisan and fake news sites, but Michael and Breaux (2021) found that political affiliation influenced people’s beliefs about which news sources are “fake.” In other studies, partisanship also affected assessments of whether individual articles were misleading (Faragó et al., 2019; Barbera et al., 2020).

Two recent studies elicited misinformation judgments from lay raters about specific articles (Allen et al., 2021; Godel et al., 2021). Perhaps surprisingly, they came to quite different conclusions. One found that the average of the ratings of a group of lay raters could identify misinformation fairly well, even when raters saw only the headlines and ledes of articles rather than the entire articles (Allen et al., 2021). The other found that crowds performed worse than a journalist (Godel et al., 2021). Here we report on a larger study, using articles from both of the other studies. Thus, one contribution of this study is to resolve the apparent contradiction in findings, which we address in detail in the discussion section.

More importantly, we study the effects of varying the elicitation process. In a control condition, raters assessed whether articles were false or misleading after opening the articles but without doing any additional research. In one treatment condition, which we call the individual research condition, each rater also searched for corroborating evidence. This was intended to elicit “informed” judgments rather than gut reactions. A version of this approach has been used in Facebook’s Community Review (Silverman, 2019) and in academic studies (Barbera et al., 2020; Soprano et al., 2021), but no study has examined whether searching for corroborative evidence improved rater performance. In the other treatment condition, which we call collective research, raters consumed the results of others’ searches. This was intended to reduce ideological polarization—we deliberately included corroborating evidence links discovered by both liberal and conservative raters in an effort to broaden the search horizon for any given rater. Both of the treatment conditions thus enforced some form of lateral reading.

We assessed performance in three ways. First is inter-rater agreement, a measure of internal consistency. Second is partisan disagreement, measured through the correlation, across articles, between the mean rating of liberal raters and the mean rating of conservative raters for each item; a higher correlation indicates less disagreement.

The third and final performance measure for an MTurk panel was agreement with a journalist’s ratings of the same articles. While the assessment of misinformation cannot be reduced to a purely objective binary distinction between truth and falsehood, as argued above, there is good reason to treat journalists’ assessments as the best available indicator of an underlying “ground truth,” what some refer to as an “alloyed gold standard” (Spiegelman et al., 1997). Fact-checkers have well-codified processes and criteria focusing on specific factual claims. Fact-checkers, and journalists more generally, have a strong ethos of trying to be unbiased, separating their personal opinions from their professional judgments (Mena, 2019; Rodríguez-Pérez et al., 2021). Moreover, judgments on some content may require domain expertise or an accumulated knowledge of current disinformation actors and the larger narratives they are promoting (McClure Haughey et al., 2020), expertise that journalists accumulate by spending more time than the general public following the news.

As a comparison point, we also benchmarked the performance of MTurk panels against simulated subsets of one, two, or three journalists. To enable an apples-to-apples comparison, all rater panels, whether lay raters from MTurk or journalists, were scored by correlating their mean ratings with those of a single held-out journalist, as illustrated in Figure 1.

Figure 1: Conceptual diagram of the performance benchmarking process. (a) Rating a single item: compute the mean rating of a Mechanical Turk panel and the mean rating of a source journalist panel, and collect a single target journalist’s rating. (b) Both turker and journalist panels are scored against the same held-out journalist, by computing the correlation over many items.

Comparison to the performance of simulated panels of journalists is especially interesting because platforms may face the practical question of whether and when to rely on crowd judgments to extend the reach of journalists’ or fact checkers’ judgments, which are available for only a limited set of items. If a platform would be willing to rely on the judgment of a single journalist, then arguably it should be willing to rely on a crowd that performs better than a single journalist at predicting what another journalist would say. If a platform would be willing to rely on the majority rating of three journalists, then arguably it should be willing to rely on a crowd that performs better than a panel of three journalists at predicting what another journalist would say.

Experiment design

We conducted a between-subjects study, where each lay rater only experienced one condition. Each rater could rate as many articles as they wanted. Participants were recruited and completed the labeling tasks through MTurk.

The study was approved by the University of Michigan IRB under HUM00171025.

The rating apparatus

The second author conducted iterative usability studies on the survey software itself and the qualification tasks over the course of several months, with pilot participants thinking aloud while using the labeling software. Insights into question wording, presentation and order, and interface controls led to many modifications before data collection began.

Figure 6 in the Appendix shows the labeling interface in the no research condition. The rater assessed how misleading the news item was, on a seven-point scale. Other research has explored the use of coarser or finer scales (Barbera et al., 2020) and the use of multiple scales to cover distinct aspects such as neutrality, precision, and completeness (Soprano et al., 2021). In pilot testing, we found that focusing the question on misleadingness rather than truthfulness helped people think about the effect of the article as a whole and that labeling the extreme points as “not misleading at all” and “false or extremely misleading” was clear enough that workers were able to make judgments most of the time. The Facebook Community Review process took an alternative approach, in which raters first described a single main factual claim in a news item and then assessed its factuality (Silverman, 2019).

Each rater also assessed how much harm there would be if people were misinformed about the topic of the news items, again on a seven-point scale. This encouraged raters to report an item as misleading even if it was about a trivial topic. We were concerned that without this separate question, some but not all raters would factor the importance of the topic into their misleadingness assessments, reducing the consistency of ratings.

After completing the assessment questions, each rater was asked to complete questions about their action preferences for the item: whether they thought platforms should remove the item, reduce its distribution, and/or inform readers by adding warning labels. The distribution of these action preferences and their correspondence with raters’ assessments is the topic of another paper.

Finally, each rater was asked to predict other raters’ action preferences. Questions of this type that encourage reflection about what other raters are likely to say have been shown to increase inter-rater consistency (Shaw et al., 2011; Hube et al., 2019). Analysis of whether the prediction question unintentionally influenced raters’ reports of their own action preferences and how much information the predictions provide about others’ actual preferences is the topic of another paper, also in preparation.

Figure 7 in the Appendix shows the additional request made of raters in the “individual research” condition. Prior to answering any of the assessment, preference, and prediction questions, they had to search for evidence and paste the search terms used and a link to the best evidence found.

Figure 8 in the Appendix shows the interface in the third condition, where participants were asked to click on links found by participants in the second condition and select the one they thought provided the best evidence.

News articles

Raters labeled two collections of English language news articles, taken from two other studies that were conducted independently in parallel with this one, by teams at other universities (Allen et al., 2021; Godel et al., 2021). The first collection was selected from among 796 articles provided by Facebook that were flagged by their internal algorithms as potentially benefitting from fact-checking. Among those, the authors of that paper manually selected 207 articles where the headline or lede included a factual claim. That study’s focus was how little information needs to be presented to raters in order for them to make good judgments, and participants were presented with only the headline and lede rather than viewing the entire article (Allen et al., 2021). Facebook also provided a topical classification of the articles based on an internal algorithm that they did not share; of the 207 articles, 109 articles were classified by Facebook as “political.”

The second collection, containing 165 articles, comes from a study that focused on whether raters could judge items soon after they were published (Godel et al., 2021). It consisted of the most popular article each day from each of five categories defined by the authors of that study: liberal mainstream news; conservative mainstream news; liberal low-quality news; conservative low-quality news; and low-quality news sites with no clear political orientation. Five articles per day were selected on 31 days between November 13, 2019 and February 6, 2020.

Our rating process occurred later, from March 1 to March 14, 2020. Four articles from the first collection and two articles from the second collection were removed because the URLs were no longer reachable when our journalists rated them in May and June, leaving a total of 368 articles between the two collections.

Crowds of lay raters

We recruited a large panel of MTurk workers by posting a paid qualification task. The task was available only to workers located in the U.S. Upon starting the qualification task, each worker was randomly assigned to one of the three experiment conditions. They used the interface corresponding to their assigned experiment condition to label a sample item, after which they were given two attempts to correctly answer a set of multiple-choice questions quizzing them on their understanding of the instructions. They then completed an online consent form, a four-question multiple-choice political knowledge quiz, and a questionnaire about demographics and ideology. Participants who did not pass the quiz about the instructions, or who did not answer at least two questions correctly on the political knowledge quiz, were excluded.

51% of raters self-reported as female, 48% self-reported as male, and less than 1% each identified as non-binary or preferred not to say. By self-reported age, 25% were 18–29, 37% 30–39, 21% 40–49, 11% 50–59, and 6% over 60. For self-reported education levels, 9% had a high school degree or equivalent (e.g., GED), 21% some college, 11% an Associate’s degree, 42% a Bachelor’s degree, and 17% a graduate degree. Thus, the participants were somewhat younger and more highly educated than the U.S. population as a whole.

Recruitment funnel.

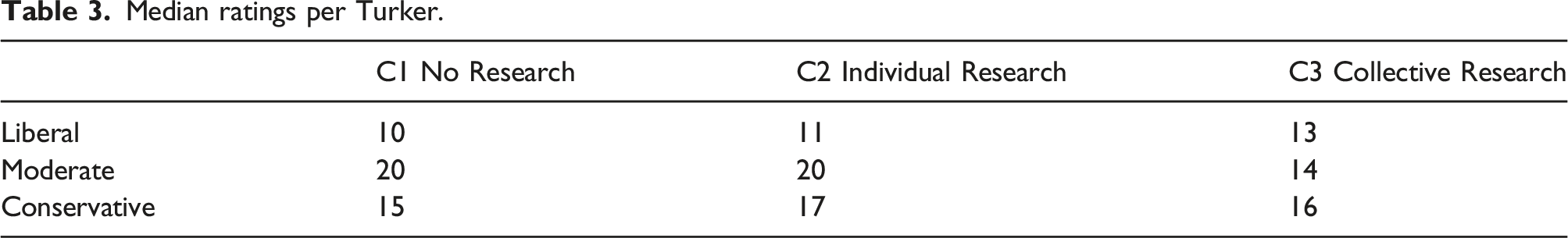

At the completion of the qualification task, workers were assigned to one of nine groups based on their randomly assigned condition and their ideology. We asked about both party affiliation and ideology, each on a seven-point scale, using two standard questions (VCF0301 and VCF0803) that have been part of the American National Election Studies (ANES) since the 1950s (ANES, 2022). Raters who both leaned liberal and leaned toward the Democratic Party were classified as

The news items were posted at various times over the span of 14 days, from March 1 to March 14, 2020. For each condition by ideology group, the first eighteen workers to claim the task rated the item. The median time from posting to receiving the last rating was: 17 hours, 29 minutes in the no-research condition; 18 hours, 22 minutes in the individual research condition; 19 hours, 23 minutes in the collective research condition.

Raters who submitted at least one rating, by condition and ideology.

Median ratings per Turker.

Summary of results by experimental condition.

Journalist raters

Four journalists rated all items from both collections. All four had just completed a prestigious, selective fellowship for mid-career journalists and were recruited based on our contacts with the organizers of the program. Three were U.S.-based and the fourth had covered U.S. politics for many years. One had been through the American Press Institute’s fact-checking bootcamp. One journalist did not rate one item. Another journalist did not rate two items and reported that they did not have enough information to make a judgment on 54 others. Such missing ratings were excluded when computing averages and correlations.

The journalists used the labeling interface for Condition 2, which required them to do individual research. They provided answers to the first two questions, about whether the article was false/misleading and how harmful it would be if people were misinformed on the topic of the article. They were not asked to provide personal opinions about what actions, if any, platforms should take on the article, and were not asked to predict other raters’ opinions about actions. Only their answers to the first question, about how misleading the article was, were used in assessing the performance of panels of lay raters.

As a robustness check, we also assessed performance against a set of three fact-checker assessments for the 207 articles in the first collection, which were collected by Allen et al. (2021). Their fact-checkers answered Likert-scale questions on seven dimensions, including whether the article was accurate, trustworthy, and unbiased. The seven answers from each fact-checker were averaged to form a composite assessment of the article by that fact-checker. Table 10 in the Appendix shows the results of this robustness check, which are qualitatively similar to the results for the same items using the journalists recruited for this study.

Measures and results

We report three evaluation metrics. First is inter-rater agreement, a measure of internal consistency. Second is ideological polarization, measured by correlation between liberal and conservative ratings. Third is correlation with journalist ratings, which we compare to benchmarks of journalist to journalist correlation.

Inter-rater agreement

Figure 2 provides a heatmap of the frequency of the 1–7 ratings for each item in the three conditions. Each item is a row and rows are sorted based on the mean ratings for the item across all three conditions. The color coding makes it evident that in the two research conditions there were many more items with a consensus of 1 (not misleading at all) or 7 (false or extremely misleading), and fewer intermediate ratings.

Figure 2: Frequency of the 1–7 ratings for each item in the three conditions. The two research conditions have more items with strong agreement about extreme ratings (1 and 7).

As a summary measure of agreement, we report the intraclass correlation of ratings (ICC).

The

As shown in Table 4, the ICC was 0.422 (CI = [0.388, 0.460]) in the no-research condition (C1), 0.458 (CI = [0.423, 0.496]) in the individual research condition (C2), and 0.510 (CI = [0.473, 0.548]) in the collective research condition (C3). The ICC for C1 falls outside the confidence intervals of the two research conditions. The ICC for C2 falls outside the confidence interval for C3 but just inside the confidence interval for C1.
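The text does not state which form of the ICC was computed. As a minimal sketch, assuming a balanced design in which every item receives the same number k of ratings from raters who differ across items (so that a one-way random-effects ICC(1) applies), the statistic can be computed from an items × k array of ratings. The function name `icc1` and the toy ratings are hypothetical, for illustration only.

```python
import numpy as np

def icc1(ratings: np.ndarray) -> float:
    """One-way random-effects ICC(1) for an (n_items, k) array of ratings.

    Assumes every item has exactly k ratings and that raters are not
    matched across items (a one-way design).
    """
    n, k = ratings.shape
    grand_mean = ratings.mean()
    item_means = ratings.mean(axis=1)
    # Between-item mean square: spread of item means around the grand mean.
    ms_between = k * np.sum((item_means - grand_mean) ** 2) / (n - 1)
    # Within-item mean square: spread of ratings around their own item's mean.
    ms_within = np.sum((ratings - item_means[:, None]) ** 2) / (n * (k - 1))
    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)

# Toy example: 4 items, 3 ratings each on the 1-7 scale (illustrative data only).
toy = np.array([[1, 1, 2], [7, 6, 7], [4, 3, 5], [2, 1, 1]], dtype=float)
print(round(icc1(toy), 3))  # → 0.927
```

The one-way form treats all variation among ratings of the same item as rater noise, which matches a setting where each item is rated by a different, self-selected set of workers.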

Agreement between partisan groups

We also assess whether there is systematic disagreement between raters with different political ideologies. For each item, we compute the mean rating among the eighteen liberal raters and the mean rating among the eighteen conservative raters. We then compute the Pearson correlation coefficient, across items, of these mean ratings.

We use the percentile bootstrap procedure (Diciccio and Romano, 1988) to estimate confidence intervals for these correlations. In each of 500 bootstrap samples, we randomly select, with replacement, from the complete set of items, an item set of equal size. For each bootstrap set of items, we follow the procedure above, computing the liberal-conservative correlation coefficient, across items, of the mean ratings. We then compute an interval that covers the middle 95% of correlation scores that were computed.
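A minimal sketch of the correlation and its percentile bootstrap interval, assuming each group's ratings are stored as an items × raters array with no missing values. The helper names `partisan_correlation` and `bootstrap_ci` and the simulated data are hypothetical; the study's own code is not described.

```python
import numpy as np

def partisan_correlation(lib: np.ndarray, con: np.ndarray) -> float:
    """Pearson correlation, across items, of mean liberal and mean conservative ratings.

    lib, con: arrays of shape (n_items, n_raters_per_group).
    """
    return float(np.corrcoef(lib.mean(axis=1), con.mean(axis=1))[0, 1])

def bootstrap_ci(lib, con, n_boot=500, level=0.95, seed=0):
    """Percentile bootstrap CI, resampling items (not raters) with replacement."""
    rng = np.random.default_rng(seed)
    n_items = lib.shape[0]
    stats = []
    for _ in range(n_boot):
        idx = rng.integers(0, n_items, size=n_items)  # item sample of equal size
        stats.append(partisan_correlation(lib[idx], con[idx]))
    alpha = (1 - level) / 2
    lo, hi = np.quantile(stats, [alpha, 1 - alpha])  # middle 95% of the statistics
    return float(lo), float(hi)

# Usage with simulated data (illustrative only, not the study's ratings).
rng = np.random.default_rng(1)
truth = rng.normal(size=200)
lib = truth[:, None] + rng.normal(size=(200, 18))
con = truth[:, None] + rng.normal(size=(200, 18))
print(partisan_correlation(lib, con), bootstrap_ci(lib, con))
```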

The liberal-conservative correlation was 0.819 (CI = [0.782, 0.851]) in the no-research condition, 0.876 (CI = [0.846, 0.899]) in the individual research condition, and 0.904 (CI = [0.878, 0.926]) in the collective research condition (Table 4). The liberal-conservative correlation in the collective research condition was higher than in the no-research condition for all bootstrap item samples and was higher than in the individual research condition for 99.0% of the item samples. The correlation was higher in the individual research condition than in the no-research condition on 99.8% of the item samples.
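The text does not spell out how the between-condition comparisons were organized. One plausible reading, assumed in this sketch, is a paired comparison: each bootstrap item sample is drawn once and applied to both conditions, and we count how often one condition's liberal-conservative correlation exceeds the other's. Per-item liberal and conservative means are assumed to be precomputed, and `fraction_higher` is a hypothetical name.

```python
import numpy as np

def lib_con_corr(lib_means: np.ndarray, con_means: np.ndarray) -> float:
    """Correlation, across items, of per-item liberal and conservative mean ratings."""
    return float(np.corrcoef(lib_means, con_means)[0, 1])

def fraction_higher(cond_a, cond_b, n_boot=500, seed=0):
    """Share of bootstrap item samples on which condition A's liberal-conservative
    correlation strictly exceeds condition B's.

    Each condition is a (lib_means, con_means) pair of 1-D arrays over the same
    items in the same order, so one resampled index set serves both conditions.
    """
    rng = np.random.default_rng(seed)
    n_items = len(cond_a[0])
    wins = 0
    for _ in range(n_boot):
        idx = rng.integers(0, n_items, size=n_items)  # shared item sample
        r_a = lib_con_corr(cond_a[0][idx], cond_a[1][idx])
        r_b = lib_con_corr(cond_b[0][idx], cond_b[1][idx])
        wins += int(r_a > r_b)
    return wins / n_boot
```

Sharing the item sample across conditions removes variation due to which items happen to be drawn, so the reported percentage reflects the condition difference rather than item sampling noise.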

The effects, however, were not uniform for all kinds of items. On just the political items from the first collection, the liberal-conservative correlations in the three conditions were 0.69, 0.81, and 0.79. On just the non-political items from that collection, the correlations were 0.90, 0.93, and 0.98. On the items from the second collection, the liberal-conservative agreement hardly varied between conditions: 0.83, 0.83, and 0.85. See the Appendix for details about results on subsets of the items.

Agreement with journalists

Our external validity metric compares the performance of panels of lay raters to the performance of panels of journalists. We always score panels, whether MTurk panels or panels of journalists, against a single held-out journalist. To reduce dependence on any one journalist’s ratings, each of the four journalists is used as the held-out journalist and final scores are averaged.

If, by contrast, we scored panels against the mean of several journalists rather than against a single journalist, the correlation scores would be higher. For example, considering all four possible simulated panels of three journalists, the average correlation with the remaining journalist was 0.73. For simulated panels of two journalists, the average correlation with one of the other two held-out journalists was 0.70. For single journalists, the average correlation with another journalist was 0.65. It is the relative scores of different panels that are of interest, however. Scoring against a larger target panel of journalists would raise the correlation scores for

The advantage of scoring all panels against just a single journalist is that we leave three of the four journalists available for inclusion in simulated benchmark panels. Thus, we are able to compare the performance of large MTurk panels to the performance of groups of up to three journalists.
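A minimal sketch of the journalist benchmark, assuming journalist ratings are stored as an items × journalists array with NaN marking missing ratings, and assuming correlations are averaged directly (the text does not say whether, for example, a Fisher z-transform was applied first). The function name is hypothetical. For each held-out journalist, every panel of the requested size drawn from the remaining journalists is scored against that journalist.

```python
from itertools import combinations

import numpy as np

def journalist_panel_benchmark(j: np.ndarray, panel_size: int) -> float:
    """Average correlation between the mean of a journalist panel and one held-out journalist.

    j: array of shape (n_items, n_journalists); NaN marks a missing rating.
    """
    n_j = j.shape[1]
    scores = []
    for target in range(n_j):
        others = [c for c in range(n_j) if c != target]
        for panel in combinations(others, panel_size):
            panel_mean = np.nanmean(j[:, list(panel)], axis=1)  # skip missing ratings
            y = j[:, target]
            ok = ~np.isnan(panel_mean) & ~np.isnan(y)  # drop items missing on either side
            scores.append(np.corrcoef(panel_mean[ok], y[ok])[0, 1])
    return float(np.mean(scores))
```

With four journalists, `panel_size=3` averages over the four (panel, held-out) pairs described above, and `panel_size=2` averages over all twelve.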

We construct simulated MTurk panels of various sizes, from one to fifty-four, by taking subsets of the 54 MTurk ratings available for each item. This produces a “power curve” (Resnick et al., 2021) as shown in Figure 3. For each point on the power curve, the x-value is the number of MTurk ratings to randomly select for each item; the y-value is the expected correlation of the mean of that many ratings for each item with the ratings of a randomly selected journalist (in other words, the power of a panel of that size to predict what a journalist will say). The expected correlation is computed by averaging the results over many runs of the process, using each of the four journalists in turn as the ratings to predict, and using 200 MTurk subsets of each size (all subsets of a given size if there are fewer than 200 such subsets).

Figure 3: Power curves for the three conditions.
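A simplified sketch of one point on the power curve, assuming complete rating matrices (no missing journalist ratings). Unlike the procedure described above, it always draws `n_draws` random subsets rather than enumerating all subsets when fewer than 200 exist; the function name and parameters are hypothetical.

```python
import numpy as np

def power_curve_point(turk, journalists, k, n_draws=200, seed=0):
    """Expected correlation between the mean of k randomly chosen lay ratings per item
    and a single target journalist, averaged over target journalists and random draws.

    turk: (n_items, n_ratings) lay ratings; journalists: (n_items, n_journalists).
    """
    rng = np.random.default_rng(seed)
    n_items, n_ratings = turk.shape
    scores = []
    for _ in range(n_draws):
        # Independently pick k distinct ratings for every item (random permutation, keep first k).
        picks = np.argsort(rng.random((n_items, n_ratings)), axis=1)[:, :k]
        panel_mean = np.take_along_axis(turk, picks, axis=1).mean(axis=1)
        for t in range(journalists.shape[1]):
            scores.append(np.corrcoef(panel_mean, journalists[:, t])[0, 1])
    return float(np.mean(scores))
```

A full curve is then a list such as `[power_curve_point(turk, journalists, k) for k in range(1, 55)]`.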

We estimate confidence intervals for the points on the power curve, again using the percentile bootstrap procedure (Diciccio and Romano, 1988). In each of 500 bootstrap samples, we randomly select, with replacement, from the complete set of items, an item set of equal size. For each, we compute the power curve points as described above, the expected correlations with a randomly selected journalist for simulated rater panels of different sizes. We then compute a 95% confidence interval for each point on the power curve as the interval that covers the middle 95% of expected correlation scores that were computed.

The intersection points in the graphs in Figure 3 show the number of lay raters required to get the same predictive power as benchmark panels of one or three journalists. For example, in the no-research condition, 7.69 lay raters were sufficient to achieve the same power as one journalist.
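The text does not say how fractional crossing points such as 7.69 were obtained. One plausible approach, assumed in this sketch, is linear interpolation between the two adjacent panel sizes whose power values bracket the journalist benchmark. The function name and the curve values in the usage line are hypothetical.

```python
import numpy as np

def raters_to_match(sizes, powers, benchmark):
    """Smallest (fractional) panel size at which a power curve reaches a benchmark,
    using linear interpolation between adjacent computed panel sizes.
    Returns None if the curve never reaches the benchmark."""
    sizes = np.asarray(sizes, dtype=float)
    powers = np.asarray(powers, dtype=float)
    if powers[0] >= benchmark:
        return float(sizes[0])
    for i in range(1, len(sizes)):
        if powers[i] >= benchmark:
            # powers[i-1] < benchmark <= powers[i], so the denominator is positive.
            frac = (benchmark - powers[i - 1]) / (powers[i] - powers[i - 1])
            return float(sizes[i - 1] + frac * (sizes[i] - sizes[i - 1]))
    return None

# Hypothetical curve crossing a benchmark of 0.65 between panel sizes 2 and 3.
print(round(raters_to_match([1, 2, 3, 4], [0.50, 0.60, 0.66, 0.70], 0.65), 2))  # → 2.83
```

Returning None corresponds to cases such as the no-research condition, where even 54 raters did not reach the power of three journalists.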

Comparing across the three conditions, we can see that lay raters in the two research conditions correlate with a journalist better than do raters in the no research condition and that the individual research condition has greater power than the collective research condition for large groups of lay raters. In the individual research condition, C2, 15.22 lay raters were equivalent to three journalists; even 54 raters were not sufficient to achieve the same power as three journalists in the other two conditions, and in the no research condition, C1, 54 raters were also insufficient to achieve the same power as two journalists (Table 4).

To assess reliability, we considered the results separately for each bootstrap sample of items (see Table 6 in the Appendix). For all group sizes, a group of lay raters in the individual research condition had higher power than a group of the same size in the no-research condition in all 500 bootstrap samples. With just a single rater, the collective research condition had higher power than the individual research condition on 71.5% of the item samples. With groups of five raters, however, the order flipped: the individual research condition performed better than the collective research condition on 98.4% of item samples.

In condition C2, with individual research, eighteen lay raters outperformed two journalists on all of the bootstrap item samples and outperformed three journalists on 65.7% of samples (see Table 8 in the Appendix). 54 lay raters outperformed three journalists on 97.4% of bootstrap item samples.

Discussion

How to elicit better judgments

As noted in the introduction, prior work found that with two 75-minute training sessions, students adopted practices of external search for supporting and challenging information, and lateral reading about the author and source (McGrew et al., 2019). In our study, none of the raters received explicit training. Both of the treatment conditions, however, required raters to seek out or consider external evidence. This led to improved misinformation judgments, as measured in a variety of ways: judgments in the two research conditions were more internally consistent between raters, showed less partisan divide, and were better correlated with expert journalist judgments.

The results are mixed, however, about the best way to ask raters to consider external evidence. Condition 2, where each person searched individually, had lower consistency among raters and more partisanship than Condition 3, where raters examined evidence links that were provided to them. However, when averaging ratings from several raters, the correlation with a journalist was higher in Condition 2, with the difference becoming more and more reliable when averaging across larger rater panels. Each person doing their own search seems to yield noisier individual assessments. Wisdom of crowds models posit that when averaging several judgments it is better for those judgments to be independent (Surowiecki, 2005). Our results are consistent with that; in Condition 3, examining a common set of links to potential corroborating or challenging evidence may have yielded correlated errors in judgment. We suspect that the best procedure for eliciting judgments from raters will be some hybrid that encourages both individual search and considering links that have been discovered by others, especially links discovered by people with different ideologies.
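A toy simulation can illustrate the correlated-errors explanation. It assumes, hypothetically, that each rating is the item's true value plus an error shared by all raters of that item (as might arise from examining the same evidence links) plus independent rater noise. The parameter values are arbitrary and are not fitted to the study's data; the function name is ours.

```python
import numpy as np

def corr_with_truth(k, shared_sd, indiv_sd, n_items=2000, seed=0):
    """Correlation between the mean of k simulated raters and the true item value.

    Each rating = truth + shared error (identical for all raters of an item)
    + independent rater noise.
    """
    rng = np.random.default_rng(seed)
    truth = rng.normal(size=n_items)
    shared = rng.normal(scale=shared_sd, size=n_items)      # common to all raters of an item
    indiv = rng.normal(scale=indiv_sd, size=(n_items, k))   # independent per rater
    ratings = truth[:, None] + shared[:, None] + indiv
    return float(np.corrcoef(ratings.mean(axis=1), truth)[0, 1])

for k in (1, 5, 50):
    print(k,
          round(corr_with_truth(k, shared_sd=0.0, indiv_sd=1.5), 2),  # independent errors only
          round(corr_with_truth(k, shared_sd=0.6, indiv_sd=1.2), 2))  # partly shared errors
```

With these settings, single raters whose errors are partly shared have slightly less total noise, but averaging cannot remove the shared component, so large panels of independent raters track the truth better. This mirrors, in stylized form, the crossover between Conditions 2 and 3.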

A model of crowdsourced judgments

Consider a stylized hierarchical model of individual raters’ judgments when those raters are drawn from some defined pool (e.g., journalists, or liberal workers on Mechanical Turk).

In this model, there is a Journalist Consensus for each item, consisting of the Truth plus possibly a GroupOffset. Each journalist’s rating is then a draw from a distribution centered on the Journalist Consensus for that item. Similarly, in each condition, for each item there is a Liberal Lay Rater Consensus, a Conservative Lay Rater Consensus, and an overall Lay Rater Consensus.
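One way to write this stylized model in symbols is shown below; the notation is ours. For item i and a rater r drawn from pool g (journalists, liberal lay raters, and so on), assuming RaterNoise has mean zero:

```latex
\begin{aligned}
\mathrm{Consensus}_{g,i} &= \mathrm{Truth}_i + \mathrm{GroupOffset}_{g,i} \\
\mathrm{Rating}_{r,i} &= \mathrm{Consensus}_{g,i} + \mathrm{RaterNoise}_{r,i},
  \qquad \mathbb{E}\left[\mathrm{RaterNoise}_{r,i}\right] = 0 \\
\frac{1}{n}\sum_{r=1}^{n} \mathrm{Rating}_{r,i} &= \mathrm{Consensus}_{g,i}
  + \frac{1}{n}\sum_{r=1}^{n} \mathrm{RaterNoise}_{r,i}
\end{aligned}
```

If, as a further assumption, the RaterNoise terms for different raters are independent with variance \(\sigma_g^2\), the averaged noise in the last line has variance \(\sigma_g^2 / n\), while the GroupOffset is untouched by averaging.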

The GroupOffset can be further decomposed into two components. One is due to

While none of the underlying components, Truth, GroupOffset, and RaterNoise, can be directly observed, this model provides a framework for interpreting many of the results of our study. Most importantly, the relatively high absolute correlations among judgments from random pairs of raters in all conditions suggest that the Truth component of judgments was fairly large; perhaps the notion of truth is not completely broken beyond repair.

The noise in individual ratings was much higher for lay raters than for journalists. This can be inferred from the correlations between random pairs of raters from each group. The average correlation between a pair of random lay raters in the individual research condition was 0.47. For a random pair of journalists, it was 0.65.

The intuition behind the so-called wisdom of crowds is that the mean of a large sample of independent draws from a distribution has much lower variance than a single draw, with the variance shrinking in inverse proportion to the sample size (Surowiecki, 2005). In our model, taking the mean of many raters should reduce the RaterNoise. We find just that: the correlation between the means of random collections of eighteen raters in the individual research condition was 0.94, up from 0.47 for pairs of individual raters.
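As a rough consistency check (our addition, not an analysis reported in the study), the Spearman-Brown formula predicts the correlation between the means of two independent panels of k raters from the average correlation r between single raters, assuming raters are exchangeable and their noise is independent: k·r / (1 + (k − 1)·r). Applied to the figures above, r = 0.47 and k = 18 give about 0.94, in line with the observed value.

```python
def spearman_brown(r: float, k: int) -> float:
    """Predicted correlation between the means of two independent k-rater panels,
    given the average correlation r between single raters (assumes exchangeable
    raters with independent noise)."""
    return k * r / (1 + (k - 1) * r)

print(round(spearman_brown(0.47, 18), 2))  # → 0.94
```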

In our study, the reduction in noise was sufficient to overcome the presumed smaller GroupOffset for journalists because of their expertise and professionalism. To see this, note that the correlation of a journalist with the mean of a group of eighteen lay raters in the individual research condition (C2) was 0.74, much better than the 0.65 correlation between a journalist and another journalist.

Liberal and conservative lay raters had systematically different GroupOffsets. This follows from the fact that groups of liberal and conservative lay raters correlated with each other less than groups of random lay raters. Even in the individual research condition (C2), where this effect was reduced, the correlation between the means of eighteen liberal and eighteen conservative lay raters was 0.88, short of the 0.94 correlation between the means of random collections of eighteen lay raters. This could reflect ideological bias in judgments by one or both groups, or differences in expertise between the groups. We cannot distinguish between ideological bias and expertise differences, nor can we determine which group’s Consensus tended to be closer to the hypothetical Truth.

Consequently, we also cannot determine definitively whether the journalists had any bias in their judgments. Liberal lay raters correlated better with journalists than conservative lay raters did, meaning that the Journalist Consensus tended to be closer to the Liberal Lay Rater Consensus than it was to the Conservative Lay Rater Consensus. Thus, to the extent that liberal lay raters had ideological bias contributing to their GroupOffsets, the GroupOffsets for journalists must reflect similar ideological bias. On the other hand, if the GroupOffsets for liberals reflected only limited expertise rather than ideological bias, then a better correlation with journalists might not imply any ideological bias on the part of the journalists.

Changes in the design of the rating task can reduce both RaterNoise and the GroupOffset. The two research conditions showed higher inter-rater agreement (ICC). We interpret this as an indication that RaterNoise is reduced by seeking corroborating information (C2) and by considering corroborating information (C3). The research conditions also showed higher agreement between liberals and conservatives, and between each group and the journalists. This indicates that seeking or consuming corroborating information brings the GroupOffsets closer to each other. One plausible interpretation is that the research conditions reduced the GroupOffsets for both liberals and conservatives, yielding ratings closer to the underlying Truth.

To the extent that GroupOffsets reflect underlying differences of ideology, values, or lived experiences, the design goal may not always be to eliminate GroupOffsets. Instead, it may be desirable to design elicitation processes that simply illuminate systematic differences in assessments. In other content moderation settings besides misinformation, researchers have begun to explore approaches that explicitly try to elicit or predict differences in the ratings of people from different demographic groups (Goyal et al., 2022; Gordon et al., 2022). To the extent that society comes to view judgments about what constitutes harmful misinformation as partly subjective rather than purely objective, similar approaches may be useful.

Use cases for crowdsourced misinformation assessments

How might assessments from lay raters be incorporated into misinformation enforcement practices? Platforms articulate misinformation policies and the enforcement actions that go with them (Meta, 2022; Twitter, 2022; Krishnan et al., 2021). The policies vary, and their details are often not made public. At a high level, however, they generally prescribe some kind of enforcement action against posts that are harmfully misleading. One common enforcement action is to alert users that a particular piece of content may contain misinformation, through a label, color coding, or text. Twitter refers to this as a warning (Twitter, 2021b). Facebook refers to it as an inform action (Lyons, 2018). Another possible action is to downrank a content item so that it appears later in search results or news feeds, and thus fewer people encounter it. Many other strategies are also available besides filtering or removing the content (Lo, 2020).

Fact-checks of specific claims, made by external organizations (third-party fact-checkers, as Facebook describes them), serve as an important resource in determining whether particular claims are harmfully misleading (Facebook, 2020). The external organizations do not, however, make judgments about whether individual news articles or social media posts are promoting any particular claims. The external organizations also investigate only some of the claims that circulate. For moderating particular articles and posts, platforms rely on a combination of user flagging and automated algorithms to surface some content as potentially problematic. Sometimes moderation actions are taken automatically; in other cases, paid human moderators review the posts. Current practices at platforms constrain the human moderators to apply very detailed policy guidelines that identify particular classes of claims as constituting harmful misinformation. For example, Twitter’s COVID-19 misinformation guidelines specified a policy against tweets that “misrepresent the protective effect of vaccines” (Twitter, 2021a).

One potential role for lay assessments of misinformation, then, is in that final step, where human moderators review individual posts. Moderators could be given more leeway to identify any harmful misinformation. This could include topics that have not yet been codified into platform-specific taboo claims, and content for which external organizations have not (yet) published fact-checks. For this use case, it is important to understand both the quality of the ratings produced and their cost. The results of this study, together with those of Allen et al. (2021), demonstrate a quality-cost tradeoff. Raters can just look at headlines, which costs little; scan the entire news article, which costs more; or search for corroborating evidence, which costs the most. As they move along that sequence, they produce higher-quality ratings, with more inter-ideology agreement and more agreement with expert raters.

We offered raters $0.50 for each article rated in the no research condition and $1 for each article rated in the two research conditions, to compensate them for the extra time required to do research. Suppose that, in practice, one’s goal were to obtain a rating of similar quality to one from a single journalist. This could have been accomplished for $5.50 per article using eleven raters in the no research condition, or for $4 per article using four raters in either of the research conditions. We note that costs in this range are probably not sustainable if even a tiny fraction of all posts were sent to human moderators. If, on the other hand, only news URLs were evaluated, rather than every post that linked to one, this process could potentially scale to that level of use.
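The cost figures are simple products of panel size and per-article pay. The sketch below reproduces them. It works in integer cents to avoid floating-point rounding. The 10,000-article volume is a hypothetical number chosen only to show how the cost scales, not a figure from the study.

```python
# Per-article pay reported for this study, in cents (integers avoid float rounding).
PAY_PER_ARTICLE_CENTS = {"no_research": 50, "research": 100}


def panel_cost_cents(condition: str, panel_size: int) -> int:
    """Cost, in cents, of having `panel_size` raters rate one article."""
    return PAY_PER_ARTICLE_CENTS[condition] * panel_size


if __name__ == "__main__":
    # Panel sizes reported as matching the quality of a single journalist.
    for condition, size in (("no_research", 11), ("research", 4)):
        per_article = panel_cost_cents(condition, size)
        # 10,000 articles is a hypothetical volume used only to illustrate scaling.
        print(f"{condition}: ${per_article / 100:.2f} per article, "
              f"${per_article * 10_000 / 100:,.0f} per 10,000 articles")
```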

Facebook’s Community Review project described using paid crowd workers in a different way. In that project, their role was to prioritize content for routing to the external, third-party fact-checkers (Silverman, 2019). For that use case, it is probably not necessary to approach or exceed the performance of a journalist, since external fact-checking organizations will review any content flagged as problematic before action is taken. Thus, lower-cost processes, such as only examining headlines and using smaller numbers of raters, may be sufficient. The crowd judgments might, however, be used to determine enforcement actions in the interim, before external fact-checking organizations rendered final judgments. In that case, a higher-cost process that required raters to search for corroborating information would be more appropriate.

An alternate use case, which we favor, conceives of citizen juries as a governance mechanism rather than as a way to speed up decision making (Zittrain, 2019; Fan and Zhang, 2020). In that vision, crowd-based misinformation judgments could be used as part of appeals processes, as ground truth for transparency reports about platform performance, and as training data for human and automated processes. For that, the juries would need to operate at a medium rather than large scale. The higher cost of requiring raters to search for corroborating evidence, or even to deliberate extensively with each other, would then be justified. For this use case, it might be particularly interesting to explore the role that platform users themselves might play in populating the juries. The Twitter Birdwatch system offers an interesting first attempt (Coleman, 2021). It may, however, suffer from letting people self-select which content to review, which makes it prone to strategic manipulation. Slashdot’s system of meta-moderation, in which users were assigned items to assess rather than choosing them, may help to counter manipulation (Lampe and Resnick, 2004).

Limitations

One limitation of our study is that Turkers passed the qualification process at a lower rate in Condition 2. Thus, it is possible that the better performance in that condition was due, in part, to a pool of raters who were more diligent or skilled, rather than the requirement that they search for a corroborating source. It would be interesting in a future study to tease apart the selection effect from the task effect; if the selection effect is sufficient to yield the rater performance found in the second condition, without requiring raters to actually perform independent research on each item, the costs of rater labeling could be further reduced.

Another limitation is that articles were rated weeks to months after they were first posted. Soon after posting, less corroborating coverage may exist. Searching for corroborating evidence might then be less impactful, and so less effective at driving raters to provide better misinformation judgments. The study that generated our second collection of articles (Godel et al., 2021) was explicitly designed to compare judgments made by lay raters and journalists within a few days of an article’s publication. It would be interesting to analyze how well those ratings correlate with our journalist and lay ratings, which were collected several months later.

More training for journalists, more time spent evaluating each article, or incentives for agreeing with each other could lead to higher inter-rater agreement among journalists. That would set a higher benchmark for lay panels to compete against, as noted by Godel et al. (2021).

Finally, we should not assume that asking lay raters to search for corroborating evidence will remain as effective in the future as it was in this study. If platforms begin to rely on crowd ratings, following any of the use cases described above, disinformation actors might devise new strategies. They already enact different personas to strategically manipulate left-leaning and right-leaning audiences (Arif et al., 2018). In the same way, they might start strategically planting “corroborating evidence” URLs and linking to them in order to boost them in search results.

Reconciling results with previous studies

It is worth revisiting the two previous studies (Godel et al., 2021; Allen et al., 2021) to try to assess possible reasons for discrepant results. Because we reused most of the same items, we can rule out some possible explanations, while others will require further research to tease apart.

First, we note that the three studies constructed the journalist benchmark slightly differently. All three compared a simulated panel of lay raters to a benchmark simulated panel of one or more journalists. We ensured apples-to-apples comparisons by scoring both lay panels and journalist panels on their ability to predict a single held-out journalist’s ratings. By contrast, Godel et al. (2021) scored both lay panels and a single journalist against the modal answer of a panel of journalists. To score the single journalist, however, they had to exclude that journalist, so they scored the journalist against the majority vote of a smaller panel. The majority vote of a smaller panel will most likely have higher variance. That would make their single-journalist benchmark score lower than it would be in a fair comparison. However, they also used a tie-breaking rule: a 2–2 vote of four journalists was treated as “not misinformation”. Because of this rule, comparing to a smaller panel may instead have produced a score that was higher than it would be in a fair comparison.
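The held-out comparison can be sketched as follows. Each journalist in turn serves as the target. A random lay panel is scored by the correlation of its mean rating with the target, and a benchmark panel drawn from the remaining journalists is scored the same way. This is a simplified illustration under our own assumptions, not the study's code. It uses Pearson correlation as the score, draws one panel per held-out journalist, and stores ratings as one list per rater with one entry per article. The function names are hypothetical, and the ratings in the demo are synthetic.

```python
import random
import statistics
from typing import List, Sequence, Tuple


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def panel_mean(ratings: List[List[float]]) -> List[float]:
    """Per-article mean over a panel; ratings[r][i] is rater r's score on article i."""
    return [statistics.fmean(column) for column in zip(*ratings)]


def held_out_scores(lay: List[List[float]], journalists: List[List[float]],
                    lay_panel_size: int, journalist_panel_size: int,
                    rng: random.Random) -> Tuple[float, float]:
    """Hold out each journalist in turn as the target. Score a random lay panel
    and a random panel of the *remaining* journalists by correlation with the
    target, then average each score over the held-out journalists."""
    if journalist_panel_size > len(journalists) - 1:
        raise ValueError("the benchmark panel must exclude the held-out journalist")
    lay_scores, benchmark_scores = [], []
    for h, target in enumerate(journalists):
        others = journalists[:h] + journalists[h + 1:]
        lay_panel = rng.sample(lay, lay_panel_size)
        benchmark = rng.sample(others, journalist_panel_size)
        lay_scores.append(pearson(panel_mean(lay_panel), target))
        benchmark_scores.append(pearson(panel_mean(benchmark), target))
    return statistics.fmean(lay_scores), statistics.fmean(benchmark_scores)


if __name__ == "__main__":
    # Synthetic ratings for illustration only: 200 articles, 4 journalists, 54 lay raters.
    rng = random.Random(0)
    truth = [rng.gauss(0, 1) for _ in range(200)]
    journalists = [[t + rng.gauss(0, 0.7) for t in truth] for _ in range(4)]
    lay = [[t + rng.gauss(0, 1.2) for t in truth] for _ in range(54)]
    lay_score, journalist_score = held_out_scores(lay, journalists, 7, 1, rng)
    print(f"lay panel of 7: {lay_score:.2f}  single journalist: {journalist_score:.2f}")
```

Both scores use the same target, so neither side benefits from being compared against a less noisy reference. Drawing many random panels per held-out journalist, rather than one, would reduce simulation noise.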

The main analysis in Allen et al. (2021) used metrics that differed even more between the lay rater panels and the benchmark single journalist. Lay rater panels were scored against the mean of three fact-checkers, whereas a single fact-checker was scored against another single fact-checker. With that comparison, they found that the lay rater panels scored better than a single fact-checker. However, in an appendix (Figure S9 of Allen et al., 2021), they provide data for lay panels scored against single fact-checkers. As expected, the correlation is lower, since a single fact-checker’s ratings are noisier than the mean of three. In an apples-to-apples comparison, with both scored against a single fact-checker, the lay panel correlated less well with a single fact-checker than another single fact-checker did. Thus, there may be less discrepancy between the results of the two prior studies than first meets the eye.

Another possible explanation for the discrepant results is uncertainty due to sampling error. Godel et al. (2021) reported one binary outcome for each of 135 articles: whether the panel’s prediction matched the majority vote of the journalists. The reported accuracy was 62% for the majority vote of a random crowd of 25 people and 69% for the single-journalist benchmark. But a difference of this size could easily occur by chance even if the two had the same underlying accuracy. Suppose, for example, that panels of 25 people and the benchmark journalist both had 65% prediction accuracy. Under a normal approximation, the 95% interval for the observed accuracy over 135 independent draws from a Bernoulli distribution with p = 0.65 runs from roughly 57% to 73%. Both 62% and 69% fall inside that interval.
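The sampling-error argument can be checked with a few lines of arithmetic. The sketch below computes a normal-approximation 95% interval for the observed accuracy of a predictor with a true accuracy of 65%, scored on 135 items. It treats the items as independent and uses the normal approximation rather than an exact binomial interval. Both are simplifying assumptions on our part.

```python
import math
from typing import Tuple


def normal_approx_ci(p: float, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Approximate 95% interval for the observed accuracy of a predictor whose
    true per-item accuracy is p, when it is scored on n independent items."""
    se = math.sqrt(p * (1 - p) / n)  # standard error of a sample proportion
    return p - z * se, p + z * se


if __name__ == "__main__":
    low, high = normal_approx_ci(0.65, 135)
    print(f"95% interval: {low:.3f} to {high:.3f}")
    for observed in (0.62, 0.69):  # accuracies reported by Godel et al. (2021)
        print(f"{observed:.2f} inside interval: {low <= observed <= high}")
```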

In our study, we have a larger pool of items. We quantify the uncertainty of our results through bootstrap sampling of 500 simulated article sets. In our individual research condition, panels of seven or more lay raters outperformed a benchmark of a single journalist on all 500 simulated article sets and the full panel of 54 lay raters outperformed a benchmark of three journalists on 98% of the simulated article sets.
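The bootstrap summary can be sketched as follows. Each simulated article set resamples article indices with replacement, and we count how often the lay panel's score strictly beats the benchmark's on the same resampled set. This section does not spell out the exact resampling scheme. Resampling articles with replacement is our assumption, and the scoring functions and synthetic data are illustrative placeholders. In this setting, each scorer would measure a panel's agreement with held-out journalist ratings on the resampled articles.

```python
import random
import statistics
from typing import Callable, Sequence

Scorer = Callable[[Sequence[int]], float]


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def bootstrap_win_rate(n_articles: int, score_lay: Scorer, score_benchmark: Scorer,
                       n_boot: int = 500, seed: int = 0) -> float:
    """Share of bootstrap article sets on which the lay panel strictly beats the benchmark.

    Each simulated set draws n_articles indices with replacement, and both
    scorers are evaluated on the same resampled indices."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(n_boot):
        idx = [rng.randrange(n_articles) for _ in range(n_articles)]
        if score_lay(idx) > score_benchmark(idx):
            wins += 1
    return wins / n_boot


if __name__ == "__main__":
    # Synthetic ratings, not study data.
    rng = random.Random(1)
    truth = [rng.gauss(0, 1) for _ in range(300)]
    target = [t + rng.gauss(0, 0.7) for t in truth]     # held-out journalist
    benchmark = [t + rng.gauss(0, 0.7) for t in truth]  # single benchmark journalist
    lay_mean = [t + rng.gauss(0, 0.4) for t in truth]   # mean of a lay panel

    def agreement(series):
        """Scorer: correlation of `series` with the target on resampled articles."""
        return lambda idx: pearson([series[i] for i in idx], [target[i] for i in idx])

    print(bootstrap_win_rate(len(truth), agreement(lay_mean), agreement(benchmark)))
```

A win rate of 1.0 corresponds to the "all 500 simulated article sets" result, and 0.98 to the "98%" result.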

An even bigger source of uncertainty is the individual journalists. We employed just four journalists, and the other studies employed three and six. Even one or two journalists who were outliers from their peers could have reduced the average ability of benchmark journalist panels to predict what other, held-out journalists would say. Because our study included the same items as Allen et al. (2021), both teams were able to perform robustness checks using the other team’s journalist ratings. Tables 9 and 10 in the Appendix show qualitatively similar results in our study whether we use ratings from our four journalists or from their three journalists. The journalist ratings from Godel et al. (2021) are not available, so we have not been able to perform a similar robustness check on the items from the second article collection.

A third possible reason for the discrepant results could be differences between the types of items in the two sets. The first article collection was selected to include only articles that made a factual claim in the headline. These might be easier for lay raters to judge. To assess this, we ran our analyses separately on the two article collections, and the results were qualitatively similar for both. Godel et al. (2021) analyzed the 98 articles from the second collection that came from fringe rather than mainstream sources. On that subset, lay raters correlated less well with a journalist, but journalists also correlated less well with each other; panels of nineteen or more lay raters outperformed a benchmark of three journalists (see Tables 11 and 12 in the Appendix). We did, however, find that lay rater performance may have been worse on the subset of 109 political items from the first collection. There, panels of 15.26 raters matched the performance of two journalists, but even the full panel of 54 lay raters failed to match the performance of three journalists (see Table 13 and Figure 5 in the Appendix). Because of the smaller sample size, however, confidence intervals are wider, so it is uncertain whether performance on these kinds of items is truly different.

Godel et al. (2021) suggest that the timing of ratings may be critical to the capabilities of lay crowds. In particular, they collected ratings within three days after articles first appeared. For some news items, follow-up articles or fact-checks might appear later and help to disseminate correct information; before that, lay raters may be poor judges of information quality. Both our study and Allen et al. (2021) had lay raters and journalists assess articles weeks to months after they first appeared. Lay crowds may be especially poor, relative to a benchmark of journalists, at assessing articles soon after they are posted. If so, that would limit their use for real-time decision-making. As mentioned previously, the ability to make real-time judgments may matter less if lay panels are conceived of as citizen juries, as part of platform governance and transparency procedures.

Finally, we note significant differences in the rating procedures between the studies. Our finding that more informed raters make better judgments may explain some of the differences in results. The study from which we drew the first collection of articles asked raters to examine only the headline and lede, and in one condition the source, without clicking through to see the whole article (Allen et al., 2021). As noted, they found that in an apples-to-apples comparison, the average of fifty lay raters performed slightly worse than one fact-checker. Our first condition provided a little more information to raters by asking them to examine the full article, and panels of eight or more lay raters outperformed a single journalist. Our second and third conditions, involving individual research or reviewing the results of collective research, provided our raters with even more information and led to still better judgments.

The selection process, the user interface, and the instructions may also make a big difference to the performance of lay crowds. Studies of crowdsourced labeling in other domains have found that quality control measures and small amounts of training are helpful (Mitra et al., 2015). For this study, we developed a custom web interface embedded as an iframe within MTurk pages, and went through multiple rounds of UX testing over several months. We excluded raters who did not pass a simple test of whether they understood the interface and instructions. We also excluded raters who did not correctly answer at least two of four knowledge questions. Finally, many MTurk workers are very conscientious, especially if they fear work rejections that would harm their ability to earn money in the future. In this study, in addition to the misinformation judgments, workers were asked for a subjective opinion about what enforcement action they thought platforms should take. They were also asked to predict other raters’ subjective opinions. They were told that their judgments and opinions would not be evaluated, but that they would be disqualified if their predictions were too far off. This may have encouraged workers to be conscientious. All of these factors may have led the lay crowds in our study to perform better than they might have otherwise.

Conclusion

Overall, the results show that juries composed of lay raters could be a valuable resource in assessing misinformation. Panels of lay raters can exceed the performance of a panel of three journalists rating independently, as measured by agreement with a held-out journalist’s evaluations.

Requiring raters to become more informed before rendering judgments about misinformation reduces partisanship and improves the quality of their ratings. When raters examined only the headline and lede of an article, a lay panel approached but did not match the performance of a single journalist (Allen et al., 2021). When raters examined the entire article (our condition C1), a lay panel could exceed the performance of a single journalist, but not that of a two-journalist panel. When raters examined evidence links (our condition C3), a lay panel could exceed the performance of a two-journalist panel, but not that of a three-journalist panel. And when raters searched for their own evidence links (our condition C2), a lay panel could exceed the performance of a three-journalist panel.

Much work remains, however, to refine the processes by which rater pools are selected and the research tasks they are asked to perform. Would even more research, or research done in a different way, further reduce ideological disagreement between liberals and conservatives and further increase alignment with journalist ratings? Moreover, the present work treats all disagreements in judgments as equally important. In reality, however, disagreements about certain topics (e.g., election fraud or the dangers of COVID-19) have outsized potential to harm society. More work is needed to understand these factors systematically and to learn how to address them.

Supplemental Material


Supplemental Material for Searching for or reviewing evidence improves crowdworkers’ misinformation judgments and reduces partisan bias (Revised title above. original title was: Informed crowds can effectively identify misinformation) by Paul Resnick, Aljohara Alfayez, Jane Im, and Eric Gilbert in Collective Intelligence

