Abstract

1 Introduction

In recent years, many countries have launched brain‐related research projects aiming to explore the mechanisms of the brain, model its structure, and simulate its functions. These projects create an urgent need for brain‐like artificial intelligence technologies and corresponding devices, as well as for research on embodiment intelligence.

Various living creatures exhibit embodiment intelligence, which arises from the collaborative interaction of the brain, body, and environment. The actual behavior of embodiment intelligence is generated by a continuous and dynamic interaction between a subject and the environment through information perception and physical manipulation. Embodiment intelligence can be developed jointly with disembodied intelligence, which emphasizes logic, reasoning, and problem‐solving. The two complement each other, and each offers an alternative route to breakthroughs in intelligence. Research on embodiment intelligence originated in psychology and has recently received attention in many fields, such as cognitive science, artificial intelligence, and robotics. Relevant research results span biological development and evolutionary robotics, ubiquitous computing and interface technology, multi‐agent systems, and artificial life. Rolf Pfeifer, a well‐known researcher at the University of Zurich, Switzerland, clearly described embodiment intelligence by analyzing how a body affects intelligence. He clarified that the body has a profound impact on our understanding of the essence of intelligence and on the study of artificial intelligence systems.

People have long dreamed of robots with human‐like, brain–body collaborative embodiment intelligence that could free humans from trivial work and from harsh or dangerous environments. The human ability to accomplish highly complex tasks in dynamic and uncertain environments depends on the interaction between the brain–body collaborative system and the environment, as well as on lifelong learning ability. Using the brain–body collaborative cognitive mechanism to realize embodied perception and learning has become an important research topic for intelligent robots and an inevitable trend in developing a new generation of intelligent robots.

The physical interaction between a robot and the environment is the basis for realizing embodied perception and learning. In a physical interaction process, tactile information plays a critical role in ensuring safety, stability, and compliance, because it provides unique information that is difficult to capture with other perception modalities. However, robotic tactile research lags significantly behind research on other modalities, such as vision and hearing, which seriously restricts the development of robotic embodiment intelligence.

This paper presents the current challenges faced in robotic tactile embodiment intelligence and reviews the theory and methods of robotic embodied tactile intelligence. Tactile perception and learning methods for embodiment intelligence can be designed based on the development of new large‐scale tactile array sensing devices, aiming to make breakthroughs in neuromorphic computing technology and tactile intelligence.

The prerequisite for realizing robotic embodied tactile intelligence is obtaining tactile signals during physical interaction. Tactile perception and learning based on these signals form the core of embodied tactile intelligence, and computing technology is the foundation for realizing embodied intelligence. Therefore, robotic embodied tactile intelligence involves several important issues: sensing, perception, learning, and computing. In recent years, researchers in several countries have studied this topic from different perspectives. The following sections present a detailed analysis of the related research, progress, and trends in each of these aspects.

2 Embodied tactile sensing

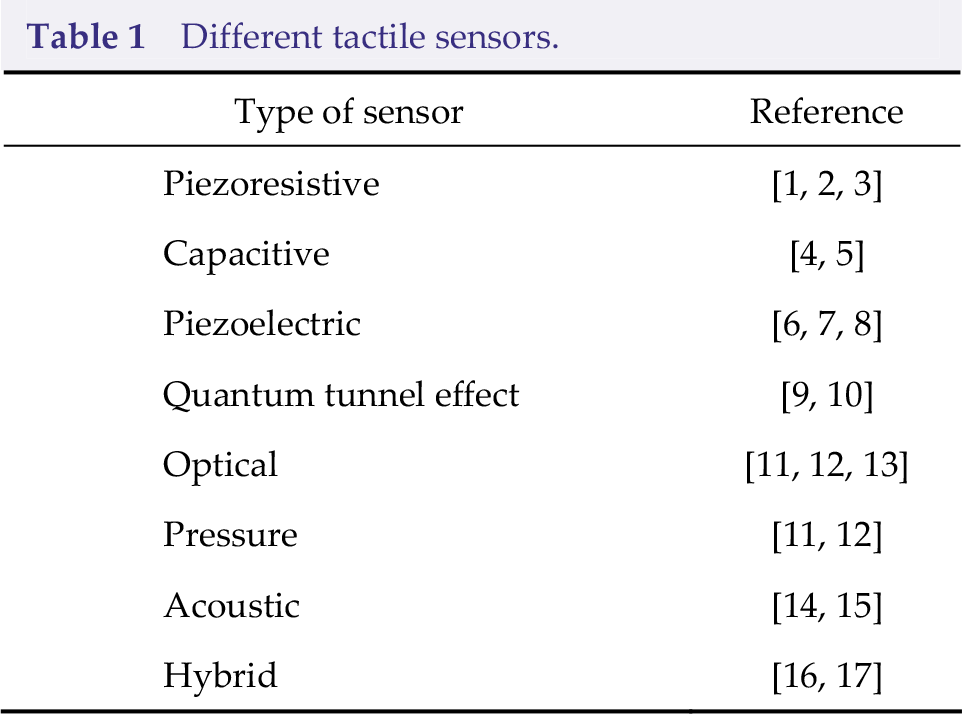

Tactile sensing is the prerequisite for physical interaction and embodied learning among a robot, the environment, and humans. It plays a significant role in many tasks, such as environment detection, dexterous manipulation, and human–robot interaction, and is studied in fields such as electronic engineering, instrumentation and measurement, and materials science. Tactile sensors are categorized into various types according to their physical principles (Table 1).

Different tactile sensors.

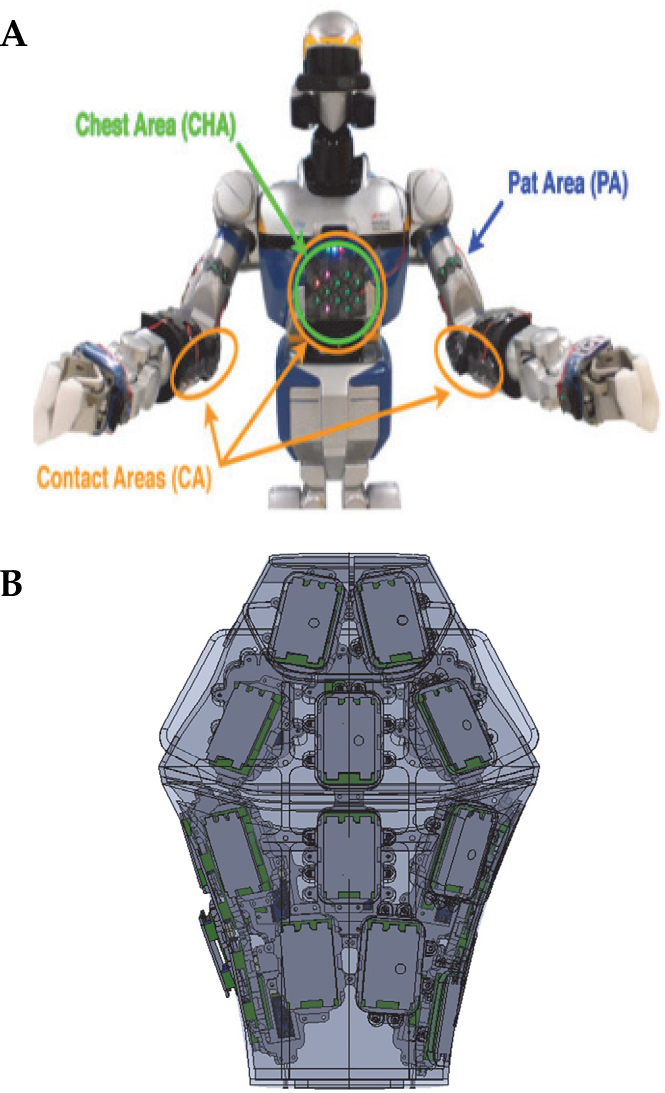

Robot tactile sensing principles and tactile object recognition have been investigated previously [18, 19]; however, the application of tactile sensors to robotic embodied learning tasks has not been discussed. Tactile sensors have been widely deployed on different robot parts (e.g., fingertips, fingers, palms, arms, torsos, legs, and feet; Fig. 1) to study the perception, cognition, learning, and interaction capabilities of robots. This research has laid a good foundation for studying robotic embodied tactile intelligence. Robotic tactile sensing on different body parts is described in the following subsections.

Representative parts for embodied tactile applications.

2.1 Finger tactile sensing

Tactile sensors are widely used in robotic fingers, where the sensing sites can be divided into the fingertip and other parts of the finger. A tactile sensor integrated at the tip of a robot finger (i.e., the end of the finger) can recognize an object's attributes (e.g., texture, hardness, and shape) through different exploratory operations, including sliding, squeezing, pushing, and tapping. Fig. 2 shows some representative work. Winstone et al. [20] integrated a TACTIP tactile sensor, which mimics the structure of a human finger, into the ELU‐2 Elumation robotic hand to analyze the texture characteristics of objects. SynTouch Inc. developed a multi‐modal fingertip tactile sensor, called BioTac, which has been integrated into robotic hands (e.g., Shadow, Barrett, and Allegro) for tasks such as material classification, object recognition, and slip detection [21]. Schmitz et al. [22] designed a flexible printed circuit board integrated with a capacitive tactile sensing array and developed a fingertip tactile sensor for the iCub robot. Koiva et al. [23] designed a rigid three‐dimensional (3D) resistive tactile sensor array using laser technology and mounted it on the fingertips of the Shadow robotic hand. Meanwhile, Jara et al. [24] described a PPS RoboTouch (Pressure Profile Systems, Inc.) capacitive tactile array installed on the four fingertips of the Allegro robotic hand to collect grasping forces for dexterous manipulation. A fingertip optical tactile sensor, called GelSight, can measure pressure, torque, shear force, and slip, and has been used to estimate object hardness and recognize object materials [25]. The leading author's group recently installed optical tactile sensors on fingertips to realize object material recognition and slip estimation [13].

Besides fingertips, tactile sensors are also widely used in other parts of the finger. Fig. 3 shows some representative work. Schmidt et al. [26] integrated a PPS capacitive tactile array into the gripper of the PR2 robot to explore the surface characteristics of objects for human–robot interaction. To mimic the function of human fingers, Jamali and Sammut [27] used randomly distributed strain gauges and polyvinylidene fluoride (PVDF) films to develop mechanical fingers that identify different surface textures. Yousef et al. [28] integrated an optical tactile sensor array with 41 contact points into a two‐finger robotic hand to collect tactile information. Meanwhile, Teshigawara et al. [29] integrated highly sensitive tactile sensors into a lightweight multifinger robotic hand for slip detection. Heyneman et al. [30] designed a capacitive tactile sensing array and mounted it on Robotiq grippers to identify different types of slip. Drimus et al. [31] designed an 8 × 8 tactile array based on piezoresistive conductive rubber and mounted it on a three‐finger Schunk SDH robotic hand to classify deformable objects. In addition, Odhner et al. [32] integrated a barometer‐based tactile sensor array into a finger of the iRobot–Harvard–Yale robotic hand. Suárez‐Ruiz et al. [33] developed a CoRo Lab tactile sensor with a 4 × 7 resolution and used it to detect the pressure, contact position, and vibration on Robotiq's fingers. Tenzer et al. [34] integrated microelectromechanical system (MEMS) barometers into the fingers of a RightHand Robotics ReFlex TakkTile hand to sense the force distribution. Moreover, Jamone et al. [35] proposed a magnetic tactile sensor and installed it on a robotic hand for obstacle avoidance and object recognition. Also based on magnetic technology, Paulino et al. [36] proposed a three‐axis tactile sensor and installed it on the fingers of the Vizzy robot to detect normal and shear forces. Funabashi et al. [37] mounted uSkin tactile sensors on the fingers of the Allegro hand for object recognition. Wilson et al. [38] also indicated that integrating multiple GelSight optical tactile sensors into the fingers of a robotic hand can improve grasping efficiency.

Robotic finger tactile sensing is used in many scenarios. Many tactile sensors have been mounted on commercial robotic hands (e.g., Shadow, Barrett, Schunk SDH, and Robotiq) and robot platforms (e.g., UR, PR2, and iCub) for practical applications such as environment detection and object attribute recognition.

2.2 Palm tactile sensing

Palm tactile sensing plays an important role in applications such as dexterous manipulation (e.g., grasping and kneading), power grasp, and human–robot interaction (e.g., handshake and flicking). Fig. 4 shows some representative work. PPS capacitive tactile sensing arrays have been installed in the palms of some commercial robotic hands. For example, a capacitive tactile sensor array with 96 contact points, 24 of which were distributed on the palm, was mounted on a Barrett Hand [39]. The Robonaut robot uses data gloves to detect the force distribution at 19 contact points. Tomo et al. [40] integrated a magnetic silicone tactile detection unit into the palm of a robotic hand. Wang et al. [41] placed four contact points on the palm of their service robot. Pastor et al. [42] integrated a high‐resolution tactile array containing 1400 tactile sensing units into the palm of a robotic hand, where it worked collaboratively with the under‐actuated fingers. Because the palm makes only limited contact with the object during manipulation, its sensing is strongly affected by the hand's configuration. A palm therefore usually needs to work together with the fingers to perform tasks such as object material classification.

2.3 Arm tactile sensing

During robotic manipulation, the robotic hand and arm work together to expand the workspace and increase the dexterity of the operation. Many researchers currently focus on hand tactile sensing and overlook arm tactile sensing, even though it plays an important role in human–robot interaction, safe operation, and environment detection. Fig. 5 shows some representative work.

The Institute of Cognitive Systems at the Technical University of Munich designed a hexagonal tactile module that detects temperature, vibration, and light touch, simulating the multi‐modal tactile perception of human skin. The modules were assembled into a patch and installed on a manipulator from KUKA Robotics Corp to improve its control performance [46]. Bhattacharjee et al. [43] used a robotic arm covered with capacitive tactile sensing arrays to identify a cluttered environment. Albini et al. [44] tiled capacitive tactile sensors on a robotic arm to distinguish various human–robot interactions, such as movement and rotation. Vergara et al. [45] integrated an electronic skin containing 373 tactile sensing units into a UR manipulator to ensure safety when the workspace was shared with people. Meanwhile, Leboutet et al. [47] used tactile sensing information on an arm to implement compliant control. A research team from the Harbin Institute of Technology recently studied deploying tactile sensors on the arms of nursing robots to achieve safe operation [48]. Research on arm tactile sensing is still at an early stage. Many researchers have adopted a "sleeve"‐style installation for integration into existing robotic arms, which adapts poorly to the large extension movements of a robotic arm.

2.4 Torso tactile sensing

In robots, the torso refers to body parts such as the chest, abdomen, and back. Besides the fingers, palms, and arms, the torso also plays an important role in humanoid robots and human–robot interaction. Fig. 6 shows some representative work.

Torso tactile sensing techniques are likely also applicable to the hands, arms, legs, and other parts of the body. Argall et al. [51] summarized the applications of robotic torso tactile sensing in early human–robot interaction systems. In recent years, torso tactile sensing has attracted much attention with the continuous development of large‐scale electronic skin technology. Metta et al. [52] covered the torso of an iCub robot with capacitive tactile sensing units for cognitive ability research. Harada et al. [53] equipped a robot's body with a fingerprint‐like three‐axis tactile force sensor for tactile and slip detection. Mittendorfer et al. [49] imitated human skin and developed a multi‐modal tactile sensor array, called CellulARSkin, to detect proximity, vibration, temperature, and pressure; tactile demonstration was then used to guide whole‐body movement for grasping unknown objects. Kaboli et al. [54] further applied CellulARSkin to the torso of the small humanoid robot NAO. Büscher et al. [55] designed a flexible, stretchable tactile sensor that can seamlessly cover a robot's body for daily human–robot interaction. Ku et al. [50] equipped a humanoid robot with a distributed tactile sensing array to identify 18 social touch patterns (e.g., hit, tap, and pat). Our research team recently installed a tactile array on the back of a service robot to recognize tactile emotion during human–robot interaction [56].

Distributed sensing in large‐scale, high‐resolution electronic skin and high‐speed signal interconnection are presently the main bottlenecks for equipping robotic torsos with tactile sensors [57].

2.5 Leg–foot tactile sensing

Research on leg–foot tactile sensing currently focuses on the feet, because they are the areas with the richest contact with the ground. Foot tactile sensing helps a robot sense ground characteristics, adjust its gait, improve walking efficiency, and ensure walking stability. Fig. 7 shows some representative work. Shill et al. [58] and Bednarek et al. [59] studied terrain classification for biped and multi‐legged robots, respectively, using tactile sensors integrated into the feet to measure physical interaction information. Wu et al. [60] used a flexible capacitive sensor array on robotic feet to measure the tactile force distribution at the ground contact and adaptively adjust the gait. Guadarrama et al. [61] studied tactile feedback control for a biped robot adapting to unknown terrain. A research team at the Massachusetts Institute of Technology (MIT) realized autonomous high‐speed bounding of the Cheetah robot on complex terrain using tactile intelligence [62]. Meanwhile, the research team from the Harbin Institute of Technology recently studied a terrain classification method using tactile sensors mounted on the bottom of a wheeled robot [63]. A research team at the Beijing Nano‐Institute, Chinese Academy of Sciences, designed a smart insole with a novel air‐pressure‐driven structure to accurately monitor and distinguish various gait patterns [64].

2.6 Advanced tactile sensing for joints

Besides the fingers, palms, arms, torsos, legs, and feet, tactile sensing can also be deployed on tentacles and shoulders in real robotic systems. Current research mainly targets specific parts of the robot, and although significant progress has been made in robotic tactile sensing technology, the resolution remains limited. A large‐scale distributed tactile sensing system forms the basis for robots to realize embodied perception and learning, yet a large gap still exists between the characteristics of existing array‐type tactile sensors and the sensing ability of human skin. Although breakthroughs have recently been made in the structure and materials of electronic skin, achieving high flexibility and elasticity is still challenging. Large‐area tactile sensing arrays generally have poor scalability and are not easy to cut and splice. Manufacturing high‐sensitivity tactile sensing arrays is complicated and expensive, so such arrays are difficult to mass‐produce. Because of these challenges, large‐area tactile sensors have not yet been deployed on arbitrary robotic surfaces, which restricts the development of robotic embodied intelligence.

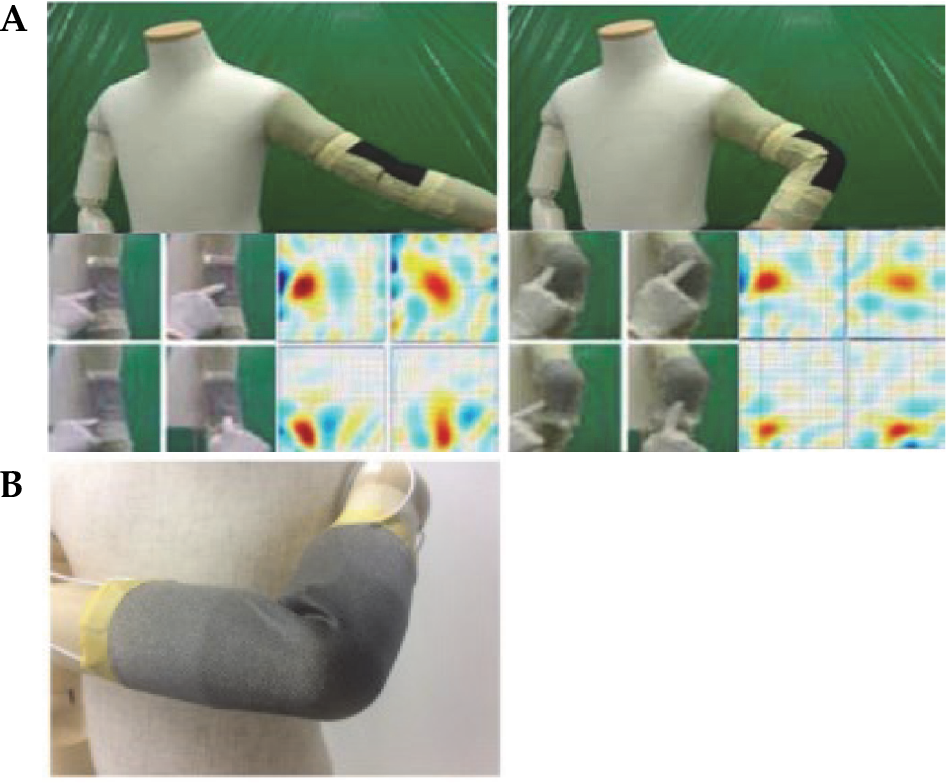

Because of robot kinematics, the deformation and stretching of tactile sensors must be considered. Traditional tactile sensors are difficult to deploy under these conditions because of wiring constraints, and some tactile sensors based on optical measurement are limited by imaging distance and field of view. Recently, tomography‐based tactile sensors have been developed [65–67]; in principle, they can overcome wiring limitations, and they have received great attention in the field of flexible, stretchable electronic skin. Electrical impedance tomography (EIT) is an imaging technique that uses electrodes to measure voltage or current signals on the boundary of an electrical conductor and reconstructs the internal conductivity distribution. It can be used for robotic tactile sensing if the electrodes are made of flexible, stretchable material and attached to the robot surface. Tomography‐based tactile sensors are expected to provide a new solution for robotic embodied tactile sensing.
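To make the reconstruction step concrete, the following is a minimal sketch of one-step linearized difference imaging with Tikhonov regularization, a common textbook approach to EIT; it is not the method of any cited work. The sensitivity matrix `J` would normally come from a finite-element model of the skin; here it is a random stand-in, and the function name `tikhonov_reconstruct`, the 16-electrode/8 × 8 layout, and the regularization weight are illustrative assumptions.

```python
import numpy as np


def tikhonov_reconstruct(J, dv, lam=1e-3):
    """One-step linearized difference reconstruction for EIT (sketch).

    Solves min_x ||J x - dv||^2 + lam * ||x||^2, i.e.
    x = (J^T J + lam I)^(-1) J^T dv.

    J   : (n_meas, n_pix) sensitivity matrix d(voltage)/d(conductivity)
    dv  : (n_meas,) boundary-voltage change relative to a no-contact reference
    lam : Tikhonov regularization weight (larger = smoother but more biased)
    """
    n_pix = J.shape[1]
    A = J.T @ J + lam * np.eye(n_pix)
    return np.linalg.solve(A, J.T @ dv)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Assumed layout: 16 electrodes with adjacent drive give 16 x 13 = 208 readings;
    # the image is an 8 x 8 pixel grid (64 unknowns).
    n_meas, n_pix = 208, 64
    J = rng.normal(size=(n_meas, n_pix))   # random stand-in for an FEM-derived Jacobian
    true_change = np.zeros(n_pix)
    true_change[27] = 0.5                  # simulated contact at pixel 27 (hypothetical)
    dv = J @ true_change                   # noise-free synthetic measurements
    est = tikhonov_reconstruct(J, dv, lam=1e-6)
    row, col = divmod(int(np.argmax(np.abs(est))), 8)
    print("strongest conductivity change at pixel", (row, col))
```

In a real skin the problem is underdetermined and ill-posed, so `lam` has a much larger effect than in this well-conditioned toy; learned reconstructions such as the neural-network approaches mentioned below replace this fixed linear solve.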

In Refs. [68, 69], rubber materials were used as electrical conductors, and EIT was implemented to reconstruct the resistivity distribution. Refs. [70, 71] designed a thin, flexible, and stretchable electronic skin with 19 electrodes that can adapt to the elbow of a robot and can be applied to the forearm and upper arm to obtain information such as contact position, contact duration, and contact intensity. Furthermore, Pugach et al. [72] and Park et al. [73] used neural networks to implement image reconstruction. Fig. 8 shows some representative work on tactile sensing based on EIT.

Compared with traditional tactile sensors, EIT offers low cost, low power consumption, no internal wiring, flexibility, scalability, and repeatability. It has become an important tool for electronic skin because it performs well in deformable, soft, flexible, and stretchable multipoint tactile sensors and in detecting contact on irregular surfaces and in narrow areas. However, EIT has shortcomings. Besides its higher requirements on the electrical conductor, its temporal and spatial resolutions are low, and its current applications on robots are mainly limited to pose detection, safety protection, and human–robot interaction. EIT‐based tactile sensors are generally suited to spatial resolutions of 10–40 mm and temporal resolutions of up to 45 Hz, which is insufficient for robotic tasks such as object recognition and robotic grasping [74].

Electrical capacitance tomography (ECT) is another imaging method; it visualizes the permittivity distribution inside a dielectric object by measuring the capacitance at the boundary, and it has been widely used in industrial applications. Muhlbacher‐Karrer et al. [75] described an application of ECT to conductor detection. Although the two soft‐field imaging modalities (ECT and EIT) have some similarities, ECT can provide quantitative measurements, whereas EIT can provide only qualitative ones. For electronic skin, ECT can realize non‐contact perception and internal imaging of objects. Non‐contact perception can provide predictive information for safe operation, while internal imaging benefits tactile perception during complex movements, such as power grasp, embracing, and chest/back contact. In addition, a capacitor array on a robot enables imaging‐based diagnosis of key robot parts, which is useful for maintaining the robot itself. However, there have been few reports on applying ECT to robotic tactile sensing and electronic skin.
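As an illustration of how an ECT image is formed from boundary capacitances, here is a minimal sketch of linear back-projection (LBP), a simple and widely used ECT reconstruction, together with the usual low/high-permittivity calibration. It is not the method of [75]; the function names, the 8-electrode layout, and the random sensitivity matrix are hypothetical stand-ins.

```python
import numpy as np


def normalize_capacitance(c, c_low, c_high):
    """Map raw capacitances to [0, 1] using two calibration sets:
    c_low (sensor filled with low-permittivity material) and
    c_high (sensor filled with high-permittivity material)."""
    return (c - c_low) / (c_high - c_low)


def linear_back_projection(S, lam):
    """Linear back-projection (LBP) image for ECT.

    S   : (n_meas, n_pix) nonnegative sensitivity matrix
    lam : (n_meas,) normalized capacitances
    Each pixel is the sensitivity-weighted average of the normalized
    measurements: g = S^T lam / (S^T 1).
    """
    return (S.T @ lam) / (S.T @ np.ones(S.shape[0]))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Assumed layout: 8 electrodes -> 8 * 7 / 2 = 28 electrode pairs; 8 x 8 image.
    S = rng.uniform(size=(28, 64))        # random stand-in for a computed sensitivity map
    c_low = np.full(28, 1.0)              # hypothetical calibration values (arbitrary units)
    c_high = np.full(28, 2.0)
    c = c_low + rng.uniform(size=28) * (c_high - c_low)
    image = linear_back_projection(S, normalize_capacitance(c, c_low, c_high))
    print(image.reshape(8, 8).round(2))
```

LBP is fast but blurry; iterative schemes such as Landweber iteration are often used when sharper images are needed.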

3 Embodied tactile perception

Tactile perception processes acquired tactile signals to understand objects and the environment. Essentially, tactile perception can be framed as a tactile pattern recognition problem, for which machine learning has become the mainstream approach. This section briefly reviews progress in tactile feature learning and classification and then analyzes active tactile perception as the core technology of robotic embodied tactile perception. Because the tactile modality usually needs to be combined with other modalities in actual robot systems, progress in multi‐modal fusion perception centered on the tactile modality is also reviewed.

3.1 Tactile feature learning and classification

The pattern recognition methods of robotic tactile perception have been described in detail in [18, 19]. Many features have been proposed for tactile perception, including time‐domain, frequency‐domain, geometric, and statistical features. Some previous studies converted tactile signals into low‐resolution images and then extracted image features, such as image moments and the scale‐invariant feature transform (SIFT). Many classifiers, including nearest neighbors, naive Bayes, decision trees, support vector machines, and artificial neural networks (ANNs) [76], have been applied to object recognition, slip detection, and grasp quality evaluation. However, most of these approaches rely on off‐the‐shelf feature extractors to process tactile signals; they neither fully exploit the inherent characteristics of tactile signals nor consider the practical requirements of tactile perception.
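The "tactile signal as low-resolution image" idea can be illustrated with image moments. The sketch below treats one frame of a taxel array as a 2-D pressure image and computes the total pressure (zeroth moment), the contact centroid, and second-order central moments normalized by total pressure; the function name and feature choice are illustrative assumptions, and the resulting vector could be fed to any of the classifiers listed above.

```python
import numpy as np


def tactile_moments(frame):
    """Image-moment features of one tactile pressure frame (sketch).

    frame : 2-D array of nonnegative taxel readings.
    Returns [m00, row_centroid, col_centroid, mu20, mu02, mu11], where the
    second-order central moments are normalized by the total pressure m00.
    """
    frame = np.asarray(frame, dtype=float)
    rows, cols = np.indices(frame.shape)
    m00 = frame.sum()
    if m00 == 0:
        raise ValueError("frame contains no contact")
    r_c = (rows * frame).sum() / m00           # contact centroid (row)
    c_c = (cols * frame).sum() / m00           # contact centroid (column)
    mu20 = (((rows - r_c) ** 2) * frame).sum() / m00          # spread along rows
    mu02 = (((cols - c_c) ** 2) * frame).sum() / m00          # spread along columns
    mu11 = (((rows - r_c) * (cols - c_c)) * frame).sum() / m00  # orientation term
    return np.array([m00, r_c, c_c, mu20, mu02, mu11])


if __name__ == "__main__":
    frame = np.zeros((8, 8))
    frame[2:4, 3:6] = 1.0    # hypothetical rectangular contact patch on an 8 x 8 array
    print(tactile_moments(frame))
```

Such moments are cheap to compute and partly invariant to where the contact lands, which is why they were attractive before learned features became common.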

Inspired by sparse coding, dictionary‐based representation learning has recently been used to learn mid‐level features from low‐level tactile features. A classifier designed on the basis of structured sparse coding [77] can effectively describe the dynamic characteristics of tactile signals and is suitable when only a few tactile samples are available. Feature coding and the classifier can be adjusted to the specific perception task. The method has been applied to tasks such as heterogeneous finger information fusion, tactile adjective attribute identification, and tactile object classification, laying a foundation for the joint design of features and classifiers.
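The general idea of classifying by sparse coding over a dictionary of labeled samples can be sketched with plain sparse-representation classification (SRC): code a test feature vector with orthogonal matching pursuit (OMP) over a dictionary whose columns are training samples, then pick the class whose atoms alone reconstruct it best. This is a generic, swapped-in technique, not the structured sparse coding of [77]; the function names and the random dictionary are hypothetical.

```python
import numpy as np


def omp(D, y, n_nonzero, tol=1e-10):
    """Orthogonal matching pursuit: greedy sparse code x with D @ x ~= y.

    D : (n_features, n_atoms) dictionary with unit-norm columns
    y : (n_features,) feature vector of the test sample
    """
    residual = y.astype(float).copy()
    support = []
    coef = np.zeros(0)
    for _ in range(n_nonzero):
        k = int(np.argmax(np.abs(D.T @ residual)))  # atom most correlated with residual
        if k in support:
            break                                   # no new atom helps; stop early
        support.append(k)
        coef, *_ = np.linalg.lstsq(D[:, support], y, rcond=None)  # refit on the support
        residual = y - D[:, support] @ coef
        if np.linalg.norm(residual) < tol:
            break
    x = np.zeros(D.shape[1])
    x[support] = coef
    return x


def src_classify(D, labels, y, n_nonzero=5):
    """Assign y to the class whose atoms alone reconstruct it with the smallest residual."""
    x = omp(D, y, n_nonzero)
    classes = np.unique(labels)
    residuals = [np.linalg.norm(y - D @ np.where(labels == c, x, 0.0)) for c in classes]
    return classes[int(np.argmin(residuals))]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Hypothetical dictionary: 5 training feature vectors (30-D) for each of 4 objects.
    D = rng.normal(size=(30, 20))
    D /= np.linalg.norm(D, axis=0)
    labels = np.repeat(np.arange(4), 5)
    # Test sample mixing two training samples of object 2, plus small noise.
    y = 0.7 * D[:, 10] + 0.3 * D[:, 12] + 0.01 * rng.normal(size=30)
    print("predicted object:", src_classify(D, labels, y))
```

Because the dictionary is just the training samples, this kind of classifier needs no separate training phase, which fits the small-sample setting described above.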

With the development of deep learning and its successful applications to images, video, and speech, some researchers have begun applying deep neural networks to tactile information processing. Madry et al. [78] used unsupervised learning to learn the spatio‐temporal features of tactile sequences and obtained the best results on multiple tactile object recognition datasets. Deep learning methods then quickly attracted researchers in the field of tactile perception. In one material recognition study, a tactile sensor with a 4 × 4 resolution collected 2‐second sequences sampled at 750 Hz, giving 1500 time steps per sample; the resulting 1500 × 4 × 4 spatio‐temporal signal was converted into a 1500 × 16 two‐dimensional signal and classified using a convolutional neural network (CNN) [79]. Ji et al. [80] used the weights of a trained sparse autoencoder to initialize a CNN for tactile signals, improving performance and convergence. Zheng et al. [81] further studied fully convolutional network learning. A hybrid deep learning structure combining a CNN and a recurrent neural network was designed to process sequential tactile information online and solve the tactile emotion recognition problem in human–robot interaction [82]. Sohn et al. [83] applied deep learning to large‐scale electronic skin tactile perception. In another study, tactile spatio‐temporal sequences obtained under various contact forces were integrated, and a 3D CNN was designed for object recognition [42]. Because CNNs are mostly designed for images, good results can be expected for tactile sensors based on optical measurement. Yuan et al. [84] used a CNN to represent the tactile images obtained by the GelSight sensor and a recurrent neural network to model object deformation over time, yielding a shape‐independent hardness estimation method based on deep learning. Polic et al. [85] developed a convolutional autoencoder for feature extraction in optical tactile sensors. To address the lack of labeled samples, Erickson et al. [86] proposed a semi‐supervised object recognition method based on generative adversarial networks, which enables a robot to estimate the texture of everyday objects with approximately 90% accuracy when 92% of the training data are unlabeled. A zero‐shot learning method was recently proposed for robotic tactile fabric recognition [87]. Researchers at the University of Science and Technology of China used optical equipment to obtain high‐resolution tactile information and a 50‐layer residual network (ResNet) to process the diffraction patterns, improving sensor performance [88].
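The data-layout step in the material recognition example is simple arithmetic: 2 s at 750 Hz gives 1500 time steps, and flattening the 4 × 4 taxel grid gives 16 channels. A minimal NumPy sketch of that reshaping, plus the forward pass of one temporal convolution layer with ReLU (random weights), shows what the first layer of such a CNN computes; the filter count, kernel width, and function names are assumptions, not the architecture of [79].

```python
import numpy as np

FS_HZ = 750                     # sampling rate stated in the text
DURATION_S = 2                  # sequence length stated in the text
N_T = FS_HZ * DURATION_S        # 1500 time steps


def to_time_by_taxel(seq):
    """Flatten a (T, 4, 4) taxel sequence into a (T, 16) two-dimensional signal."""
    seq = np.asarray(seq)
    return seq.reshape(seq.shape[0], -1)


def conv1d_valid(x, kernels, bias):
    """Forward pass of one temporal convolution layer with ReLU (sketch).

    x       : (T, C) input, C = 16 taxel channels
    kernels : (F, K, C) filters of temporal width K
    bias    : (F,)
    Returns (T - K + 1, F) feature maps ("valid" convolution, no padding).
    """
    K = kernels.shape[1]
    windows = np.lib.stride_tricks.sliding_window_view(x, K, axis=0)  # (T-K+1, C, K)
    out = np.einsum("tck,fkc->tf", windows, kernels) + bias
    return np.maximum(out, 0.0)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    seq = rng.normal(size=(N_T, 4, 4))          # synthetic stand-in for a tactile recording
    x = to_time_by_taxel(seq)                   # shape (1500, 16)
    kernels = rng.normal(size=(8, 5, 16))       # hypothetical: 8 filters of width 5
    feats = conv1d_valid(x, kernels, np.zeros(8))
    print(x.shape, feats.shape)
```

Treating the 16 taxels as channels lets the convolution slide only along time, so the model learns temporal patterns such as vibration during sliding while mixing all taxels at each step.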

Tactile data are more difficult to collect and label than visual and auditory data; thus, the performance gains of current tactile deep learning are limited. At present, most deep neural networks for tactile information processing are borrowed from the vision field and cannot specifically capture the characteristics of tactile information. The long training times and high energy consumption of deep learning also restrict its further application in robotic tactile perception. In addition, because deep learning approaches rely on manually labeled samples for offline learning, they can hardly be applied to robotic tactile tasks involving online motion, exploration, and contact.

3.2 Active tactile perception

Although progress has been made in tactile feature learning and classification in recent years, most of the studies still collect data based on predefined rules and use pattern recognition methods to process them. Tactile perception can only provide local information on environment characteristics; thus, efficiently achieving a comprehensive perception of the environment is difficult. One of the important characteristics of robotic embodied perception is that it is an active process.

Active tactile perception has attracted attention since the 1980s [89] because it enables robots to control the position and movement of tactile sensing systems through sensory exploration. By optimizing the sensory information obtained from the environment and reducing the number of explorations, it enhances a robot's autonomous perception ability. Seminara et al. [90] reviewed the progress of active tactile perception from the perspectives of autonomous learning and safe interaction. Current research on robotic active tactile perception focuses on two main aspects: shape reconstruction, and recognition and classification.

For shape reconstruction, Strub et al. [91] designed an active exploration strategy that simultaneously accounts for errors in the object shape representation and in tactile feature localization, automatically building a shape representation of the object. Abraham et al. [92] verified that low‐resolution binary contact sensors can achieve shape estimation through effective active exploration. Jamali et al. [93] used Gaussian process classification to sample an object's surface effectively and proposed an active learning strategy for its tactile exploration. Sommer et al. [94] designed an active exploration strategy for multifinger robots that adjusts the number of contact points in real time, so that the robot can autonomously adapt to the object shape. Driess et al. [95] improved the traditional discrete point query of the Gaussian process approach through smooth path optimization, avoiding inefficient touch‐and‐retract motions. A method for fast shape estimation of unknown objects was proposed by combining tactile uncertainty estimation with exploration time constraints [96]. Ottenhaus et al. [97] maximized the estimated information gain to minimize the expected cost of exploration actions, actively reconstructing object shapes from sparse tactile data obtained by the robot's fingers. However, limited by the accuracy of tactile sensors and exploration actions, high‐precision and fast shape reconstruction of complex unknown objects through efficient active tactile exploration remains unsolved.
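Many of the Gaussian-process strategies above share one core step: after each touch, choose the next contact where the model is most uncertain. A minimal sketch of that step is shown below, using the predictive variance of GP regression with a squared-exponential kernel over candidate contact locations. It is a simplification (no surface values, no path or time costs), not the algorithm of any single cited work, and the kernel parameters and function names are assumptions.

```python
import numpy as np


def rbf(a, b, length=0.2, var=1.0):
    """Squared-exponential kernel between point sets a (n, d) and b (m, d)."""
    d2 = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return var * np.exp(-0.5 * d2 / length**2)


def posterior_variance(X_obs, X_cand, length=0.2, var=1.0, noise=1e-4):
    """GP predictive variance at candidate points given already-touched points.

    For GP regression the predictive variance depends only on where the robot
    touched, not on the measured values, so the measurements are not needed here.
    """
    K = rbf(X_obs, X_obs, length, var) + noise * np.eye(len(X_obs))
    Ks = rbf(X_obs, X_cand, length, var)          # (n_obs, n_cand)
    v = np.linalg.solve(K, Ks)                    # K^-1 Ks
    return var - np.sum(Ks * v, axis=0)           # prior variance minus explained part


def next_touch(X_obs, X_cand, **kernel_args):
    """Index of the candidate contact location with maximal predictive variance."""
    return int(np.argmax(posterior_variance(X_obs, X_cand, **kernel_args)))


if __name__ == "__main__":
    # Hypothetical 1-D toy: candidate contact positions along an object edge in [0, 1].
    X_cand = np.linspace(0.0, 1.0, 11)[:, None]
    X_obs = np.array([[0.0], [0.2]])
    for step in range(3):
        k = next_touch(X_obs, X_cand)
        print(f"step {step}: touch at x = {X_cand[k, 0]:.1f}")
        X_obs = np.vstack([X_obs, X_cand[k]])
```

Pure variance maximization tends to send the sensor to distant unexplored regions, which is exactly the inefficient touch-and-retract behavior that path-aware and cost-aware methods such as [95, 97] try to avoid.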

For recognition and classification, Saal et al. [98] used information theory to estimate the uncertainty in the dynamic parameters of bottles filled with different liquids and then chose the next best action, so that active perception could quickly learn the objects' dynamic parameters. Zhang et al. [99] used Monte Carlo tree search to optimize a robot's grasping motion and realize active object shape recognition. Lepora et al. [100] established an active Bayesian tactile perception method that analyzes the estimation uncertainty at each step and optimizes the robot's next action to obtain more information. This method was applied to tactile sensors integrated into fingertips and tentacles [101]. Martinez‐Hernandez et al. [102, 103] further used multifinger coordination to realize active tactile object shape recognition. Tanaka et al. [104] transformed the active tactile object recognition problem into an optimal control problem, achieving active tactile exploration by optimizing both the informativeness of the exploration and the compliance of the action, and combined it with a Gaussian process for object shape recognition. With a multivariate Bayesian classifier, Sun et al. [105] proposed a recognition method that fuses tactile information, such as friction and surface roughness, with local geometric features obtained through active exploration. Xu et al. [106] fused surface hardness and geometry information using active exploration with a tactile stylus. Kaboli et al. [107] recently designed an active tactile sensing method that models an uncertain workspace, so that a robot can actively explore an unknown workspace.
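The Bayesian methods above repeatedly update a belief over object classes after each contact and stop once the belief is confident enough. The sketch below covers only that evidence-accumulation and decision-threshold part of a sequential Bayesian perception loop; the action-selection step that makes such methods active is omitted, and the one-dimensional Gaussian likelihood model, the threshold, and all names are assumptions for illustration.

```python
import numpy as np


def sequential_bayes(observations, means, sigma, threshold=0.99):
    """Accumulate tactile evidence until one class posterior crosses a threshold.

    observations : iterable of scalar tactile readings (one per contact)
    means        : per-class mean reading (hypothetical Gaussian likelihood model)
    sigma        : shared standard deviation of the likelihood
    Returns (decided_class, n_contacts_used, posterior).
    """
    means = np.asarray(means, dtype=float)
    log_post = np.full(len(means), -np.log(len(means)))   # uniform prior
    post = np.exp(log_post)
    n = 0
    for n, z in enumerate(observations, start=1):
        log_post += -0.5 * ((z - means) / sigma) ** 2      # Gaussian log-likelihood (up to a constant)
        log_post -= np.logaddexp.reduce(log_post)          # renormalize in log space
        post = np.exp(log_post)
        if post.max() >= threshold:                        # confident enough: stop exploring
            break
    return int(np.argmax(post)), n, post


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    means = [0.0, 1.0, 2.0]                        # e.g. mean stiffness reading per object (made up)
    readings = 2.0 + 0.5 * rng.normal(size=50)     # contacts on an object of class 2
    cls, n_used, post = sequential_bayes(readings, means, sigma=0.5)
    print(f"decided class {cls} after {n_used} contacts, posterior {post.round(3)}")
```

The threshold trades speed for reliability: a higher threshold requires more contacts before a decision, which is the quantity active strategies try to reduce by choosing more informative actions.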

These results show that most current application scenarios of active tactile perception are relatively simple, and the tactile exploration process is too long for open environments. In addition, most tactile exploration strategies are based on uncertainty measures of state estimation and are not closely integrated with the perception task. Moreover, most current active exploration is limited to robotic hands and arms; exploration using other parts of the robot is rare.

3.3 Multimodal fusion perception

Although tactile signals play an important role in robotic embodied perception, they have limitations. Tactile perception is usually restricted to the local contact area, and the perception process is constrained by the contact process. Many real environments and object properties cannot be measured adequately by the tactile modality alone. In addition, frequent contact with the environment shortens the service life of tactile sensors. Real robotic systems are usually equipped with other sensors, such as visual and auditory sensors, and the visual, auditory, and tactile modalities complement each other in both perceptual range and characteristics. Therefore, multimodal sensors must be jointly leveraged to achieve fusion perception for robotic embodied perception and learning.

Visual and tactile modalities differ considerably: their information formats, sampling frequencies, and sensing ranges vary greatly. The visual modality is generally better suited to features such as color and shape, whereas the tactile modality is better suited to temperature, hardness, and similar properties. Both modalities can be used to perceive surface material; the former generally handles coarser textures, and the latter finer ones. However, the tactile modality usually obtains information about an object only through contact with the robot and requires a long exploration process, whereas the visual modality can obtain considerable information about all objects in the field of view. The asynchrony of the two modalities and the difference in their sensing ranges pose great challenges to information fusion. Early studies on visual–tactile fusion mainly focused on 3D object reconstruction [43, 108], and visual–tactile object recognition has attracted attention more recently. Kroemer et al. [109] studied visually assisted tactile feature extraction and texture classification, and indicated that a key problem in visual–tactile fusion is the difficulty of pairing visual images with the corresponding tactile data. To solve this problem, a visual–tactile object recognition method was established using weakly paired sparse coding and dictionary learning techniques [110, 111].
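Sparse coding, the basis of the weakly paired methods in [110, 111], represents a feature vector as a sparse combination of dictionary atoms. The sketch below covers only that encoding step for a single, already-learned dictionary, solved with the iterative shrinkage-thresholding algorithm (ISTA). The weakly paired joint dictionary learning of the cited works is not reproduced. The function names and the λ value are assumptions.

```python
import numpy as np

def soft_threshold(v, t):
    """Proximal operator of the L1 norm (shrinks each entry toward zero by t)."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)

def sparse_code(x, D, lam=0.1, n_iter=500):
    """Solve min_a 0.5 * ||x - D a||^2 + lam * ||a||_1 with ISTA.

    x: feature vector (n_features,); D: dictionary (n_features, n_atoms),
    ideally with unit-norm columns.
    """
    step = 1.0 / np.linalg.norm(D, 2) ** 2     # 1 / Lipschitz constant of the gradient
    a = np.zeros(D.shape[1])
    for _ in range(n_iter):
        grad = D.T @ (D @ a - x)               # gradient of the quadratic term
        a = soft_threshold(a - step * grad, step * lam)
    return a


# Usage: encode a feature that was built from two atoms of a random dictionary.
rng = np.random.default_rng(0)
D = rng.normal(size=(20, 50))
D /= np.linalg.norm(D, axis=0)                 # unit-norm atoms
x = 2.0 * D[:, 3] - 1.5 * D[:, 17]
a = sparse_code(x, D, lam=0.05)                # mostly zeros, large entries near atoms 3 and 17
```

In a visual–tactile setting, each modality would have its own dictionary, and the sparse codes (rather than raw features) would be compared or fused.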

Recently, deep learning has been applied to visual–tactile fusion. For example, Gao et al. [112] used CNNs to jointly learn from visual images and tactile data, and Zheng et al. [81] improved the network structure. Kerzel et al. [113] proposed a multichannel deep neural network, performed a spectral analysis of the vibration and texture data collected during exploration, and classified 32 everyday materials. Yuan et al. [114] investigated fusing optical images with tactile images collected by a GelSight sensor. Deep adversarial learning has also been used to address the weak pairing between the visual and tactile modalities [115, 116].
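The spectral analysis of vibration data mentioned for [113] can be illustrated with a short numpy sketch that turns a vibration recording into normalized band energies, a compact input for a classifier. The sampling rate, number of bands, and function name are assumptions, not the features used in [113].

```python
import numpy as np

def spectral_features(signal, fs, n_bands=8, f_max=None):
    """Summarize a vibration signal by its energy in equal-width frequency bands.

    Returns a length-n_bands vector normalized to sum to 1.
    """
    signal = np.asarray(signal, dtype=float)
    signal = signal - signal.mean()                    # remove the DC offset
    window = np.hanning(len(signal))                   # reduce spectral leakage
    power = np.abs(np.fft.rfft(signal * window)) ** 2  # one-sided power spectrum
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fs)
    f_max = fs / 2 if f_max is None else f_max
    edges = np.linspace(0.0, f_max, n_bands + 1)
    energy = np.array([power[(freqs >= lo) & (freqs < hi)].sum()
                       for lo, hi in zip(edges[:-1], edges[1:])])
    return energy / energy.sum()


# Usage: a 120 Hz vibration sampled at 1 kHz for 1 s.
fs = 1000
t = np.arange(fs) / fs
feat = spectral_features(np.sin(2 * np.pi * 120 * t), fs)
```

With 8 bands over 0–500 Hz, each band spans 62.5 Hz, so the 120 Hz component falls in the second band (index 1). Vectors like `feat` from several exploration channels could then be concatenated as classifier input.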

When a robot interacts with the environment, sound signals can easily be obtained in addition to tactile signals. Sound signals can be superior to visual or tactile signals for identifying certain internal states or materials. As in visual–tactile fusion, visual–sound–tactile information is weakly paired, and even more markedly so. Strese et al. [117] built a multimodal dataset containing more than 100 types of surface materials. Visual–sound–tactile heterogeneous fusion for material recognition was further addressed using the sparse coding framework [118, 119].

Recently, researchers have focused on multimodal robotic active perception using visual, sound, and tactile information. Yang et al. [120] combined passive vision with active touch, using vision to provide priors for tactile perception. Yuan et al. [121] proposed using vision to find the most suitable grasping point so that the robot obtains high-quality tactile signals. However, in these processes, the order in which sensors are used is manually preset, and scheduling sensors of different modalities according to task requirements to achieve efficient sensing remains challenging. Ferreira et al. [122] used a hierarchical Bayesian framework to analyze the emergence of robotic multimodal active perception. Within this framework, Taniguchi et al. [123] introduced information gain to quantify the uncertainty of different modalities and used it to automatically schedule the visual, auditory, and tactile modalities. However, this study was restricted to very limited environments. More recently, an active visual–sound–tactile multimodal scheduling method was established using adversarial reinforcement learning, achieving efficient material perception with seven types of modalities [124].
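The information-gain scheduling idea of [123] can be sketched as a greedy rule: given the current belief over materials and a likelihood model for each modality, query the unused modality whose expected entropy reduction per unit cost is largest. The modality names, likelihood tables, and costs below are hypothetical. The cost weighting is an added assumption, not part of the cited framework.

```python
import numpy as np

def entropy(p):
    """Shannon entropy in bits."""
    p = p[p > 0]
    return float(-(p * np.log2(p)).sum())

def expected_info_gain(belief, lik):
    """Expected entropy reduction of the belief after one observation.

    lik[c, o] = P(observation o | class c) for one modality (assumed known).
    """
    p_obs = belief @ lik                       # predictive distribution of observations
    h_after = 0.0
    for o, p_o in enumerate(p_obs):
        if p_o > 0:
            post = belief * lik[:, o]
            h_after += p_o * entropy(post / post.sum())
    return entropy(belief) - h_after

def schedule_modality(belief, models, costs, used=()):
    """Greedily choose the unused modality with the largest information gain per unit cost."""
    scores = {m: expected_info_gain(belief, lik) / costs[m]
              for m, lik in models.items() if m not in used}
    return max(scores, key=scores.get), scores


belief = np.full(3, 1 / 3)                     # three candidate materials, no evidence yet
models = {
    "vision": np.full((3, 2), 0.5),            # cannot separate these materials
    "audio": np.array([[0.9, 0.1], [0.1, 0.9], [0.1, 0.9]]),
    "touch": np.eye(3),                        # fully discriminative but expensive
}
costs = {"vision": 1.0, "audio": 1.0, "touch": 5.0}
first, scores = schedule_modality(belief, models, costs)
# For brevity the belief is not updated between the two choices here.
second, _ = schedule_modality(belief, models, costs, used=(first,))
```

Here audio is chosen first (about 0.48 bits per unit cost versus about 0.32 for touch), and touch second. In a full loop, the belief would be updated with each actual observation before the next choice.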

4 Embodied tactile learning

The tactile perception methods above, such as sparse coding and deep learning, are common machine learning methods for tactile learning, but all of them require labeled tactile data, and tactile samples are more difficult to collect and label than visual and auditory data. An important ability in robotic embodied learning is to exploit the associations between different modalities for mutual learning and enhancement. At the same time, the robot should acquire data samples during operation to accumulate knowledge autonomously and continuously.

Although cross-modal perception is a basic human ability, it remains challenging in robotics. Kroemer et al. [109] proposed a cross-modal learning method based on joint canonical correlation analysis (CCA) dimensionality reduction to learn a low-dimensional representation of tactile data and improve the classification performance of dynamic tactile perception. The method uses visual information about object surfaces together with tactile information during training, and tactile information alone to classify object materials during inference. However, the visual–tactile weak-pairing problem in cross-modal learning still has to be overcome. To solve this problem, cross-modal visual–tactile information matching was designed using a joint dictionary learning method [125], and its application to cognitive robots was then explored. More recently, the idea of zero-shot learning was introduced: when many samples cannot be obtained, a joint dictionary is used to transfer visual features to the tactile modality, thereby achieving effective tactile learning [126].
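A minimal linear CCA, the technique underlying [109], can be written with numpy. After each modality is whitened, the singular value decomposition of the whitened cross-covariance gives paired projections with maximal correlation. Both tactile and visual samples are needed for training, but only the tactile projection would be applied at inference. The synthetic data, regularization constant, and function names below are assumptions, not the setup of [109].

```python
import numpy as np

def _inv_sqrt(C):
    """Inverse matrix square root of a symmetric positive-definite matrix."""
    w, V = np.linalg.eigh(C)
    return V @ np.diag(1.0 / np.sqrt(w)) @ V.T

def cca(X, Y, k=2, reg=1e-6):
    """Linear CCA between paired samples X (n, dx) and Y (n, dy).

    Returns projection matrices Wx (dx, k), Wy (dy, k) and the canonical correlations.
    """
    X = X - X.mean(axis=0)
    Y = Y - Y.mean(axis=0)
    n = X.shape[0]
    Cxx = X.T @ X / (n - 1) + reg * np.eye(X.shape[1])   # small ridge for stability
    Cyy = Y.T @ Y / (n - 1) + reg * np.eye(Y.shape[1])
    Cxy = X.T @ Y / (n - 1)
    Kx, Ky = _inv_sqrt(Cxx), _inv_sqrt(Cyy)
    U, s, Vt = np.linalg.svd(Kx @ Cxy @ Ky)              # whitened cross-covariance
    return Kx @ U[:, :k], Ky @ Vt.T[:, :k], s[:k]


# Synthetic paired data sharing one latent factor (e.g. surface roughness).
rng = np.random.default_rng(0)
z = rng.normal(size=(500, 1))
tactile = np.hstack([z + 0.05 * rng.normal(size=(500, 1)), rng.normal(size=(500, 2))])
visual = np.hstack([rng.normal(size=(500, 1)), -z + 0.05 * rng.normal(size=(500, 1))])
Wt, Wv, corr = cca(tactile, visual, k=1)
tactile_low_dim = (tactile - tactile.mean(axis=0)) @ Wt   # what inference would use
```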

Using deep neural networks for visual–tactile cross-modal learning has also attracted attention. Yuan et al. [114] used deep convolutional networks to associate visual and tactile properties for fabric recognition. By comparing embedding vectors, a robot can effectively predict the tactile characteristics of a fabric from an image of it. Luo et al. [127] used deep neural networks to extract features from visual images and tactile data, and paired the learned features with maximum covariance analysis to learn a shared cross-modal visual–tactile representation. Takahashi and Tan [128] and Gandarias et al. [129] recently studied how to obtain tactile features from optical images using autoencoder networks and CNNs, and how to use these features to enhance tactile perception. Falco et al. [130] established an active exploration framework for cross-modal visual–tactile object recognition. These results verified the possibility of using visual information to enhance tactile perception. However, existing methods place many restrictions on the objects and can handle only simple ones.
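The pairing step attributed to [127] can be sketched with maximum covariance analysis (MCA). The singular vectors of the cross-covariance matrix between paired visual and tactile features give projections whose outputs covary as strongly as possible. This yields a shared space in which embeddings from the two modalities can be compared, similar in spirit to the embedding comparison described for [114]. The features here are synthetic stand-ins for deep-network outputs, and the function names and dimensions are assumptions.

```python
import numpy as np

def mca(F_vis, F_tac, k=2):
    """Maximum covariance analysis between paired visual and tactile features.

    F_vis: (n, dv), F_tac: (n, dt); row i of each describes the same object.
    Returns Wv (dv, k), Wt (dt, k) and the covariances of the paired projections.
    """
    Fv = F_vis - F_vis.mean(axis=0)
    Ft = F_tac - F_tac.mean(axis=0)
    C = Fv.T @ Ft / (Fv.shape[0] - 1)          # cross-covariance matrix (dv, dt)
    U, s, Vt = np.linalg.svd(C, full_matrices=False)
    return U[:, :k], Vt.T[:, :k], s[:k]

def retrieve(query, gallery):
    """Index of the gallery embedding with the highest cosine similarity to the query."""
    q = query / np.linalg.norm(query)
    G = gallery / np.linalg.norm(gallery, axis=1, keepdims=True)
    return int(np.argmax(G @ q))


rng = np.random.default_rng(1)
z = rng.normal(size=(200, 2))                  # latent object properties
F_vis = z @ rng.normal(size=(2, 6)) + 0.1 * rng.normal(size=(200, 6))
F_tac = z @ rng.normal(size=(2, 4)) + 0.1 * rng.normal(size=(200, 4))
Wv, Wt, s = mca(F_vis, F_tac, k=2)
emb_vis = (F_vis - F_vis.mean(axis=0)) @ Wv
emb_tac = (F_tac - F_tac.mean(axis=0)) @ Wt
best = retrieve(emb_vis[0], emb_tac)           # tactile sample predicted for image 0
```

Unlike CCA, MCA does not whiten each modality first, so it favors high-variance directions. This is simpler but more sensitive to feature scaling.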

While current studies mainly use the visual modality to enhance tactile learning, tactile data may contain even more information in many cases. Pinto et al. [131] used tactile data generated by the physical interaction of objects and the environment to generate supervisory information automatically, thereby enhancing visual learning. Xu et al. [132] further generalized this approach to multistep dynamic interactions.
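The self-supervision idea of [131] can be sketched as follows: tactile readings recorded during each interaction are converted into labels automatically, and those labels are then paired with the camera images of the same interactions to train a visual model. The force threshold, duration rule, and function name below are hypothetical. The cited work does not necessarily use this rule.

```python
import numpy as np

def tactile_self_labels(force_traces, threshold=0.5, min_samples=10):
    """Label each grasp attempt from its tactile force trace, without human annotation.

    A grasp counts as successful (label 1) if the contact force stays above
    `threshold` for at least `min_samples` consecutive samples (assumed rule).
    """
    labels = []
    for trace in force_traces:
        longest = run = 0
        for above in np.asarray(trace) > threshold:
            run = run + 1 if above else 0      # length of the current contact streak
            longest = max(longest, run)
        labels.append(int(longest >= min_samples))
    return np.array(labels)


# Two simulated grasps: a stable hold and an object that slipped out early.
traces = [np.full(20, 0.8), np.r_[np.full(5, 0.8), np.full(15, 0.1)]]
labels = tactile_self_labels(traces)
# These labels would then supervise a visual model trained on images of the same grasps.
```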

Although cross-modal transfer learning addresses knowledge transfer between different modalities and considers robot self-supervised learning, it does not implement knowledge accumulation and updating during real long-term robotic operation. Whenever a new task is encountered, a robot has to be retrained from scratch and cannot leverage previous knowledge when learning an unknown scene. An autonomous robot in a dynamic environment must be able to learn a series of tasks incrementally and continuously acquire and update its knowledge base during the learning process. The lifelong learning ability to continuously learn new tasks without forgetting previous ones has received attention from the machine learning community in recent years. Kirkpatrick et al. [133] highlighted the main difficulty facing lifelong learning, the so-called "catastrophic forgetting" problem. A research team from the Institute of Automation, Chinese Academy of Sciences, recently used the idea of orthogonal weight modification to protect previously acquired information when deep networks are optimized by gradient descent. The team demonstrated good lifelong learning ability in applications such as handwriting and face recognition [134]. However, most current studies are still limited to a single perception modality. Both online dictionary learning and deep learning methods have recently been studied for lifelong cross-modal learning in robot material perception [135, 136].
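The orthogonal-projection idea attributed to [134] can be illustrated for a single linear layer y = W x. Gradients for a new task are multiplied by a projector P that approximately annihilates the inputs of earlier tasks, so the layer's outputs for those inputs barely change. The sketch below updates P recursively in the standard recursive-least-squares form. The class name, α value, and toy data are assumptions, and this is not the cited team's code.

```python
import numpy as np

class OrthogonalProjector:
    """Tracks the input subspace of earlier tasks (OWM-style recursive update)."""

    def __init__(self, dim, alpha=1e-3):
        self.P = np.eye(dim)       # starts as identity: nothing protected yet
        self.alpha = alpha         # small regularizer; smaller means stricter protection

    def update(self, x):
        """Add one input vector x from a finished task to the protected subspace."""
        Px = self.P @ x
        k = Px / (self.alpha + x @ Px)
        self.P -= np.outer(k, Px)  # P stays symmetric, so x^T P equals (P x)^T

    def project(self, grad_W):
        """Project a gradient for W (out, in), where y = W @ x, away from old inputs."""
        return grad_W @ self.P


rng = np.random.default_rng(0)
X_old = rng.normal(size=(2, 5))            # inputs seen during an earlier task
proj = OrthogonalProjector(dim=5, alpha=1e-5)
for x in X_old:
    proj.update(x)
W = rng.normal(size=(3, 5))
grad = rng.normal(size=(3, 5))             # placeholder gradient from the new task
W_new = W - 0.1 * proj.project(grad)
```

After the update, `W_new @ x` is almost identical to `W @ x` for the old inputs, while `W` still changes along directions the old task never used.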

5 Tactile neuromorphic computing

Tactile computing is the foundation of tactile embodied intelligence. As mentioned, most existing robotic tactile sensors have a single contact point, and signal processing becomes very challenging when contacts are densely distributed in an array. Popular deep learning methods also have shortcomings in tactile perception and learning, such as insufficient data and high time and energy consumption, which limit their in-depth application in robotic tactile perception and learning. These issues have motivated the academic community to investigate neuromorphic computing inspired by the cognitive mechanisms of the human brain.

Neuromorphic computing is based on the results of neuroscience theories and biological experiments. It integrates cognitive science and information science, draws on biological neural network models and architectures, and uses existing complementary metal oxide semiconductor (CMOS) devices/circuits or new neuromorphic devices to simulate how biological neurons and synapses process information. On this basis, intelligent computing platforms with information perception, processing, and learning functions are built by modeling the neural networks of the human brain. Such platforms have significant advantages in power, energy, and hardware requirements and are expected to become a revolutionary paradigm for handling massive real-time data in the era of big data and artificial intelligence. At present, most dedicated neuromorphic chips are based on spiking neural networks (SNNs), which simulate how neurons operate. Unlike deep learning, which mainly imitates the hierarchical learning mechanism of the brain, SNNs focus on simulating brain-like spatiotemporal dynamics. Their asynchronous, event-driven operation significantly reduces energy consumption. Therefore, SNNs can play an important role in real-time dynamic applications, such as robotic embodied tactile perception and learning.
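The leaky integrate-and-fire (LIF) neuron is one of the simplest common spiking models, and it illustrates the event-driven behavior described above. The neuron emits an output event only when its membrane potential crosses a threshold, so weak or absent input produces no events at all. The Python sketch below uses illustrative, dimensionless parameters (an assumption). It is not the neuron model of any particular chip discussed in this section.

```python
import numpy as np

def lif_spike_times(current, dt=1e-3, tau=20e-3, v_thresh=1.0, v_reset=0.0, r=1.0):
    """Leaky integrate-and-fire neuron driven by an input current sequence.

    Integrates tau * dv/dt = -v + r * I(t) with forward Euler (resting potential 0)
    and returns the indices of the time steps at which the neuron spiked.
    """
    v = 0.0
    spikes = []
    for t, i_t in enumerate(current):
        v += (dt / tau) * (-v + r * i_t)
        if v >= v_thresh:          # threshold crossing emits an event
            spikes.append(t)
            v = v_reset            # membrane potential is reset after the spike
    return np.array(spikes, dtype=int)


silent = lif_spike_times(np.full(1000, 0.5))   # sub-threshold input: no events
weak = lif_spike_times(np.full(1000, 1.5))
strong = lif_spike_times(np.full(1000, 3.0))
```

Stronger input yields more frequent spikes (rate coding), and a sub-threshold input yields none, which is where the energy savings of event-driven hardware come from.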

Since 2004, governments and research institutions worldwide have invested heavily in promoting "brain-like computing". A series of "brain projects" has advanced research on neuromorphic chips based on traditional CMOS technology. Representative work includes TrueNorth, the Spiking Neural Network Architecture (SpiNNaker), BrainScaleS, Loihi, Tianjic, and Darwin.

The University of California, Irvine, used the TrueNorth chip to implement an integrated test of autonomous navigation, decision-making, and autonomous vehicle control. Based on the hybrid heterogeneous brain-like chip "Tianjic", Tsinghua University developed hardware circuits that combine an ANN based on precise multi-bit value processing with a neuromorphic computing circuit based on binary spike sequences. A neural-state-machine algorithm was used to integrate multimodal neural computation of vision, speech, and inertial measurement unit (IMU) data, achieving self-balancing, dynamic sensing, object detection, tracking, obstacle avoidance, speech understanding, and decision-making for a self-driving bicycle. In addition, novel neuromorphic devices, such as memristors, phase-change memories, ferroelectric devices, and magnetic tunnel junctions, take a bionic perspective and emulate neurons and synapses at the device level. They are expected to have significant advantages in power consumption and hardware cost but are still at the exploratory stage.

In 2006, the University of Manchester, in collaboration with the universities of Cambridge, Sheffield, and Southampton, and supported by three companies (i.e., ARM, Silistix, and Thales), launched the SpiNNaker project, which aimed to simulate up to 1% of the human brain's neural network in real time [137]. The system integrated 57,600 custom digital packages, each of which incorporated a chip with 18 ARM cores and a 128 MB shared local memory chip. A single package could simulate up to 16,000 neurons and 8 million plastic synapses while consuming only 1 W of power. Compared with analog neuromorphic computing systems, digital neuromorphic computing systems are flexible and can meet the diverse needs of neuromorphic applications. The software development and platform maintenance of this project are supported by the EU-funded Human Brain Project (HBP), and SpiNNaker, together with the Heidelberg BrainScaleS analog neuromorphic system, forms the neuromorphic computing platform of the HBP.
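As a rough consistency check of these figures, assuming the commonly cited estimate of about 86 billion neurons in the human brain, the per-package numbers scale as follows:

```latex
\begin{aligned}
\text{neurons:}  &\quad 57\,600 \times 16\,000 \approx 9.2\times10^{8} \approx 1\%\ \text{of}\ 8.6\times10^{10},\\
\text{cores:}    &\quad 57\,600 \times 18 = 1\,036\,800 \approx 10^{6},\\
\text{synapses:} &\quad 57\,600 \times 8\times10^{6} \approx 4.6\times10^{11}.
\end{aligned}
```

The neuron count thus matches the stated 1% target, and the core count explains why SpiNNaker is often described as a million-core machine.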

Recently, SpiNNaker-related research has attracted wide attention in several countries, including the United Kingdom, France, Germany, the United States, and Japan. On SpiNNaker, structural plasticity has been used to implement a reward-based synaptic sampling algorithm, providing an effective tool for brain-inspired algorithms [138]. Serrano-Gotarredona et al. [139] optimized the storage design of SpiNNaker by exploiting the weight-sharing property of CNNs, implementing a five-layer CNN for symbol recognition. Mendat et al. [140] developed a Markov chain Monte Carlo inference algorithm for graphical models on SpiNNaker. A hierarchical temporal memory model, which can be used for anomaly detection in sequence data, was also implemented on SpiNNaker [141].

Applications of SpiNNaker continue to grow. Tapiador-Morales et al. [142] used a spiking convolution processor to extract image features from the Poker-DVS dataset and achieved visual classification of four different symbols on a SpiNNaker platform. Dominguez-Morales et al. [143] realized audio sample classification using SpiNNaker, and Gutierrez-Galan et al. [144] used SpiNNaker to classify audio information and generate gaits for a hexapod robot. Kawasetsu et al. [145] used SpiNNaker to simulate neural activity in the retina and visual cortex. Stromatias et al. [146] used SpiNNaker to implement a deep belief network for handwritten digit recognition, and Orchard et al. [147] used it to implement the hierarchical model and X (HMAX) for event-driven object recognition. Haessig et al. [148] used SpiNNaker to recover optical flow from dynamic visual information and perform stereo estimation. Simultaneous localization and mapping for a mobile robot was realized by using SpiNNaker to simulate the navigation and localization mechanisms of the rat hippocampus [149]. SpiNNaker has also been deployed on a mobile platform with integrated visual processing capabilities, establishing a universal event-driven neuromorphic robotic computing platform [150]. More recently, Chen et al. [151] and Rast et al. [152] used SpiNNaker on a snake-shaped robot and the iCub humanoid platform, respectively, for perception processing.

Although neuromorphic processing of visual and auditory information has been studied extensively, few studies have addressed the tactile modality. An SNN implemented on a field-programmable gate array (FPGA) has been used to process vibrotactile signals and to simulate the fingers of mice for active exploration [153]. Bologna et al. [154] studied the neural coding mechanisms of tactile sensing. They designed a method that uses spiking neurons to convert force-sensor signals into spike signals and, combined with an active robotic exploration task, investigated the neural coding mechanism of fine tactile perception. Spigler et al. [155] and Lee et al. [156] used the Izhikevich spiking neuron model to characterize tactile coding and decoding mechanisms in different tasks, such as perceiving surface properties and tactile stimuli.
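The Izhikevich model mentioned for [155, 156] combines a quadratic membrane equation with a recovery variable, reproducing many biological firing patterns at low computational cost. The Python sketch below encodes a sampled force signal into spikes with one regular-spiking neuron. The linear force-to-current gain, the 0.5 ms step, and the function name are assumptions, not the encoding schemes of the cited works.

```python
import numpy as np

def izhikevich_encode(force, gain=10.0, dt=0.5, a=0.02, b=0.2, c=-65.0, d=8.0):
    """Encode a sampled force signal as spike indices with one Izhikevich neuron.

    Model (time in ms): v' = 0.04 v^2 + 5 v + 140 - u + I,  u' = a (b v - u),
    with reset v <- c, u <- u + d whenever v reaches 30 mV. The default
    (a, b, c, d) are the standard 'regular spiking' values; I = gain * force
    is an assumed linear force-to-current mapping. One force sample per dt.
    """
    v, u = c, b * c
    spikes = []
    for t, f in enumerate(force):
        current = gain * f
        v += dt * (0.04 * v * v + 5.0 * v + 140.0 - u + current)
        u += dt * a * (b * v - u)
        if v >= 30.0:                      # spike peak: record the event and reset
            spikes.append(t)
            v, u = c, u + d
    return np.array(spikes, dtype=int)


# 1 s of constant pressing at two force levels (2000 samples at 0.5 ms).
no_touch = izhikevich_encode(np.zeros(2000))
light = izhikevich_encode(np.full(2000, 1.0))
firm = izhikevich_encode(np.full(2000, 2.0))
```

Without contact, the neuron settles at its resting potential and stays silent, while firmer pressing produces a higher spike rate. Spike timing, not just rate, is what later feature-extraction stages can exploit.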

Building on this tactile encoding research, some researchers have attempted to combine the encoded information, especially from the Izhikevich spiking neuron model, with machine learning for practical tactile perception tasks. Rongala et al. [157] realized tactile encoding during texture perception and extracted spike-signal features to achieve texture classification. This study indicated the importance of spike timing for tactile recognition. However, training could only be performed offline because of the computationally complex classification. Friedl et al. [158] used a heterogeneous population of neurons to convert tactile signals into spike patterns, together with band-pass filtering and hidden layers, to extract nonlinear frequency-domain features, and used a neuromorphic support vector machine for classification. Yi et al. [159] used nearest-neighbor classification based on tactile spike encoding to recognize surface roughness. Although such algorithms could in principle be implemented on a neuromorphic chip such as SpiNNaker, these studies remain at the software-simulation stage. The proposed structures depend strongly on specific tasks and are difficult to generalize. From the hardware perspective, Rasouli et al. [160] showed that distributed tactile signal processing can significantly reduce the amount of transmitted information and help achieve portable processing. They designed a neural network processor chip with 128 hidden-layer nodes to extract time-domain features from spike sequences for texture classification. However, the critical inversion operation is still computed on a conventional computer. The feasibility of combining the extracted spike features with unsupervised clustering [161] and a sparse coding classifier [162] was further explored.

These studies realized the neuromorphic construction and integration of tactile sensors, encoding interfaces, and feature extraction, and took an important step toward online tactile neuromorphic processing. Bologna et al. [154] used a closed-loop neurobotic system with an active perception strategy to recognize Braille characters. The system used a finger with 24 capacitive sensors, mounted on a robotic arm, to identify the object surface texture, and was designed to optimize the finger scanning speed and compensate for motion execution errors. Both Friedl et al. [158] and Rasouli et al. [160] pointed out the importance of active tactile perception, yet research in this area remains seriously insufficient.
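The nearest-neighbor classification of spike encodings mentioned for [159] can be sketched in a few lines: summarize each spike train by simple statistics and assign the label of the closest training example. The three features (firing rate, mean inter-spike interval, and its coefficient of variation), the toy class templates, and the function names are assumptions, not the features of the cited works.

```python
import numpy as np

def spike_features(spike_idx, n_samples, dt=1e-3):
    """Firing rate (Hz), mean inter-spike interval (s) and ISI coefficient of variation."""
    spike_idx = np.asarray(spike_idx)
    rate = len(spike_idx) / (n_samples * dt)
    if len(spike_idx) < 2:                       # ISI statistics undefined
        return np.array([rate, n_samples * dt, 0.0])
    isi = np.diff(spike_idx)                     # intervals in samples
    return np.array([rate, isi.mean() * dt, isi.std() / isi.mean()])

def nearest_neighbor(train_feats, train_labels, query):
    """1-nearest-neighbor label after per-feature standardization."""
    sd = train_feats.std(axis=0)
    sd = np.where(sd > 1e-12, sd, 1.0)           # constant features: leave unscaled
    dists = np.linalg.norm((train_feats - query) / sd, axis=1)
    return train_labels[int(np.argmin(dists))]


n = 1000                                         # 1 s of spikes at 1 ms resolution
train = np.array([spike_features(np.arange(0, n, 50), n),   # toy "smooth" template
                  spike_features(np.arange(0, n, 10), n)])  # toy "rough" template
labels = np.array(["smooth", "rough"])
pred = nearest_neighbor(train, labels, spike_features(np.arange(0, n, 48), n))
```

Such a classifier needs no training beyond storing examples, which makes it easy to map onto neuromorphic hardware. However, its accuracy depends entirely on how informative the chosen spike features are.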

A series of new brain-like computing chips, represented by SpiNNaker, has been preliminarily verified for processing robotic tactile signals. However, because of various restrictions, the low power consumption and efficient processing of brain-like chips have not yet been fully realized. In addition, existing studies mainly focus on feature extraction, and most classifiers are conventional ones. The spatiotemporal characteristics of tactile signals are neither fully leveraged nor combined with robotic manipulation.

6 Conclusions

In this paper, robotic tactile technology was analyzed from four perspectives: (1) robotic tactile sensing (sensing), (2) tactile perception (perception), (3) tactile learning (learning), and (4) tactile neuromorphic computing (computing). The following conclusions were drawn.

(1)

(2)

(3)

(4)