Abstract

Keywords

1. The rise of transfer learning in robotics

Transferring prior knowledge to novel unknown tasks is one of the abilities that led humans to become the most innovative species on the planet (Reader et al., 2016). In particular, humans’ capability to transfer cognitive (Barnett and Ceci, 2002; Perkins and Salomon, 1992) and motor skills (Schmidt and Young, 1987) from one context to another makes the acquisition of new skills and the resolution of problems possible to a large extent. For instance, the difficulty of learning a new language is significantly influenced by factors such as language distance, native language proficiency, and language attitude (Walqui, 2000) as humans can

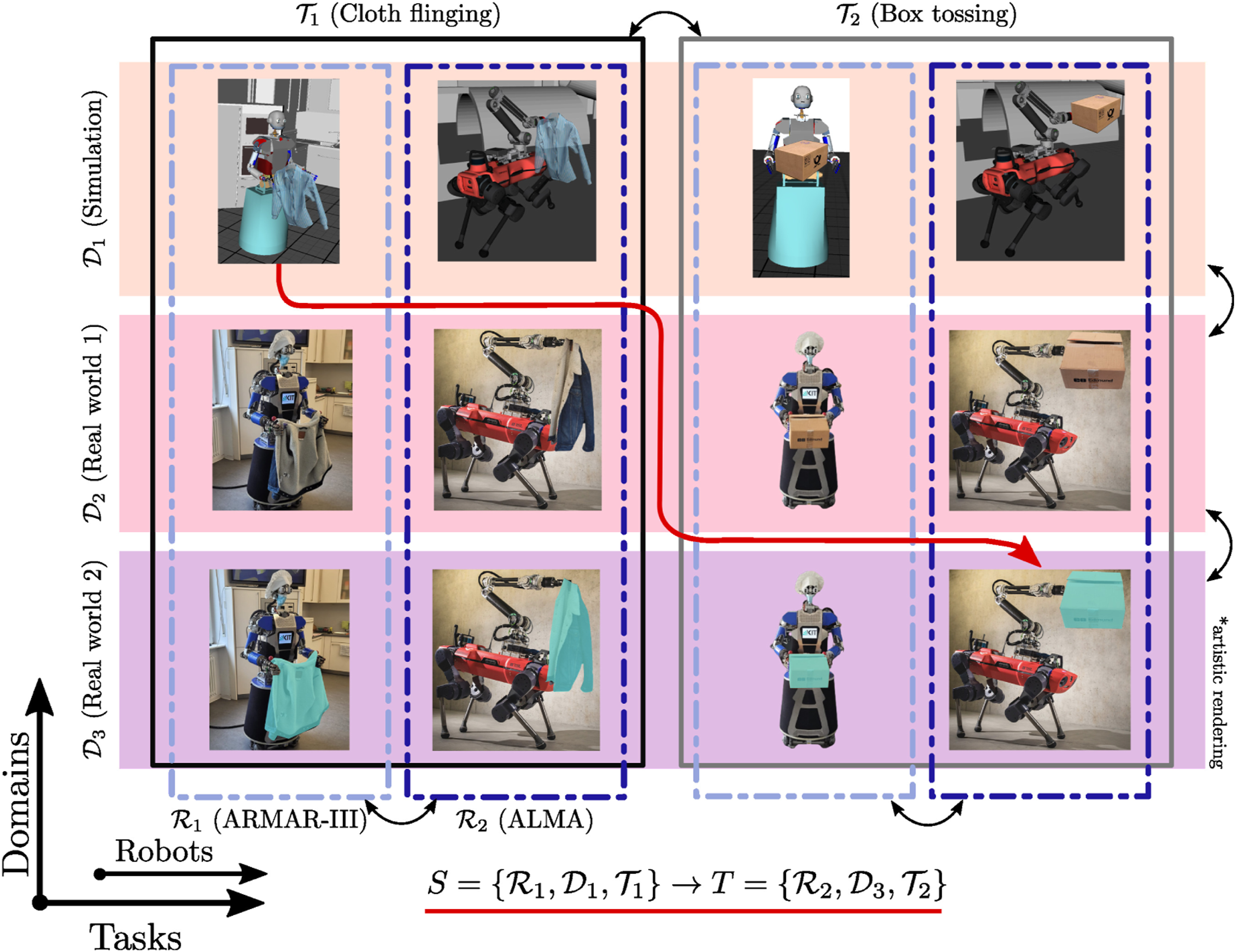

Concept of transfer learning in robotics. The experience of a robot performing a specific task in a specific environment is leveraged to improve the learning of a related task by another robot in a related context. Transfer can occur across embodiments (yellow arrows), across tasks (purple arrows), and/or across environments (blue arrows).

To evolve seamlessly in the real world, robots must feature outstanding cognitive abilities allowing them to perceive their environment, act and react to achieve various goals, and learn continuously from observation and experience, while coping with changes and uncertainty in the world. The transfer learning paradigm for robotics is a promising avenue to avoid learning from scratch by reusing previously acquired experience in new situations, similar to humans. The core idea of transfer learning in robotics, illustrated in Figure 1, is simple: the experience of a robot performing one task in an environment is leveraged to improve the learning process of a (related) task in a different context, that is, in a different environment or executed by a different robot. It is important to note that successful transfer requires commonalities between the source and target robots, tasks, and environments. For instance, a humanoid robot learning to kick a ball will most likely not benefit from the experience of a dual-arm manipulator system manipulating a box, and vice versa.

To identify when transfer learning is needed and/or warranted, one needs to identify the similarities and differences between the two situations. Following the concepts proposed in imitation learning (Dautenhahn and Nehaniv, 2002), we distinguish between three aspects that can be deemed similar or different: the task, the environment, and the robot.

In the conceptual example of Figure 1, the experience of the two fixed-base manipulators placing a box on a conveyor belt (left) can be transferred to a humanoid robot executing the same task (middle-top). Transfer learning is made possible and easier as the two situations have many aspects in common:

(1) Tasks: Both tasks are similar as both robots are engaged in moving an object with two arms. The notion of similarity with respect to the object may be relative to the robot’s own size and payload. For instance, a pair of industrial arms may be able to support objects up to 20 kg whereas a humanoid robot may carry only a quarter of this. Yet, the task and the strategy to solve it, namely ensuring coordinated movement of both arms and the trajectory to place an object on a conveyor belt, remain the same.

(2) Environments: The two environments are similar, as in both cases, the object is to be placed on a conveyor belt. We can also safely assume that, in both cases, the object is subjected to the same external forces (gravity, friction).

(3) Robots: In this case, the two robots differ. Yet, their differences can be broken into two distinct parts. Both robots are endowed with two arms of similar structure. Only the second robot is on legs and hence faces the additional challenge of controlling its balance when placing the object on the conveyor belt. This aspect may be resolved without actual learning, for instance, through constraint-based optimization, controlling for additional constraints at the center of mass (Bouyarmane et al., 2018). Alternatively, reinforcement learning or adaptive control may be used to fine-tune gains (Khadivar et al., 2023).

Hence, in this case, transfer learning is mostly conducted across the different robots, while taking advantage of their similar bimanual structure. In other cases, when the two robots and/or environments differ substantially, experience may be transferred across tasks. For instance, the bimanual manipulation strategy used when placing a box on a conveyor belt (Figure 1, middle-top) may be transferred to a handover task (middle). Such transfer may also be achieved across different tasks and different embodiments: the bimanual strategy of the two fixed-base manipulators (left) may directly be transferred to a different robot executing a related task, for example, to the humanoid robot handing over the box (middle). Experience may also be transferred across environments. For example, the bimanual manipulation strategies used by the humanoid robot to place a box on the conveyor belt or to hand over the box (Figure 1, middle-top and middle) may be reused by a humanoid robot manipulating a box in space (right). In this example, the physical rules of the two environments strongly differ due to the influence of gravity on Earth.

Finally, in some cases, transferring knowledge may not be beneficial or may even impede the robot's performance. For instance, consider transferring experience from the fixed-base bimanual setup placing a box on a conveyor belt to a humanoid robot kicking a ball. Here, the large differences between the robots, tasks, and environments mean that transfer is unlikely to help and might even impede the methods employed to fulfill the ball-kicking task. It is important to highlight that, even if some particular actions can be transferred, conflicting goals may lead to negative transfer. This showcases the crucial importance of identifying the commonalities and differences between two situations when applying transfer learning in robotics. Moreover, it highlights the importance of transfer learning metrics that measure not only the transfer quality, but also the transfer gap between robots, tasks, and environments.

While the terms falling under the umbrella of transfer learning in robotics are not exactly agreed upon, the robotics community has intensified its effort to transfer various forms of knowledge across different contexts. Figure 2 (top) shows the recent rise in the proportion of papers published at the two biggest robotics conferences, IROS and ICRA, that invoke keywords that we consider as falling under the umbrella of transfer learning. This recent interest shows that the community strives for embodied transfer learning, which may be a necessary prerequisite for truly intelligent systems (Kremelberg, 2019). It is interesting that different keywords related to transfer learning in robotics display different growth rates (see Figure 2, bottom). For instance, terms related to imitation learning, behavioral cloning, and learning from demonstrations display an early rise and a high popularity nowadays, highlighting the effort of the robotics community to tackle transfer between embodiments (human-to-robot or robot-to-robot) from early on (Dautenhahn and Nehaniv, 2002). In contrast, terms related to transfer across environments (i.e., sim-to-real and domain adaptation) only recently gained interest, while terms related to transfer across tasks remain less documented, suggesting that transfer learning across environments and tasks is still in its infancy.

Percentage of papers including words falling under the transfer learning in robotics umbrella over the years for the two biggest robotics conferences. The results are based on a systematic search through the content of 44,067 papers, excluding their references.

In this position paper, we contend that transfer learning in robotics has the potential to revolutionize the robot learning paradigm by enabling robots to leverage past experience in novel contexts. However, key challenges remain to be addressed to fulfill this potential. In particular, the fundamental question of

2. Transfer learning taxonomies: From machine learning to robotics

While the machine learning community has devoted substantial efforts to defining and systematizing transfer learning and categorizing its different instances, transfer learning in robotics is found under various terminologies. This section aims at providing a unified view of transfer learning in robotics. To do so, we take inspiration from machine learning taxonomies and define a taxonomy of transfer learning settings that occur in robotics.

2.1. Taxonomy of transfer learning in machine learning

In this section, we introduce the definitions that are commonly adopted in the transfer learning community (see, for example, Pan and Yang, 2010; Yang et al., 2020; Zhuang et al., 2020). Transfer learning builds on the two fundamental concepts of domain and task. A

Example of transfer learning on the Office 31 dataset (Saenko et al., 2010). Transfer can occur

Transfer learning approaches are commonly categorized into inductive, transductive, and unsupervised transfer learning (Pan and Yang, 2010; Yang et al., 2020; Zhuang et al., 2020). This categorization focuses on the availability of labels independently of the relationships between source and target spaces. Namely, in the inductive setting, labels are available in both source and target spaces, while they are only available in the source space in the transductive setting, and are not available in any space in the unsupervised setting. For a more encompassing categorization that generalizes to robotics, we instead propose to focus on the fundamental concepts of domain and task and on their relationship in the source and target spaces.

Transfer Learning in Machine Learning. Let

We observe that Definition 2.1 implies the following hierarchical taxonomy of transfer learning settings, illustrated in Figure 4. Note that the source space may correspond to a union of multiple sub-source tasks and/or domains.

(1) Task transfer learning:

(2) Domain transfer learning: The difference in marginal distribution is often tackled by learning a mapping between overlapping instances (also known as “support”) between the source and target domains.

(3) Dual-mode transfer learning: Approaches in the dual-mode are currently mostly related to unsupervised transfer learning scenarios. This remains an underexplored area due to the difficulty of capturing the similarities, or the transferable information (instance, feature, parameter, etc.), between the source and target spaces.

Figure 3 illustrates the aforementioned transfer learning settings using the Office 31 dataset (Saenko et al., 2010), a classical benchmark for transfer learning. In this case, the domains correspond to the source used to obtain the images (i.e., Amazon and a webcam in Figure 3) and the tasks correspond to the labels of the objects represented in the images. To extend these concepts to robotics, we must consider the robot as an additional mode. In the next section, we discuss its implications for transfer learning in robotics.

Hierarchical taxonomy of transfer learning in the context of machine learning. Domain transfer occurs when the source and target domain differ and is characterized by a difference in the feature space and/or in the marginal distribution. Task transfer occurs when the source and target task differ and is characterized by a difference in the label space and/or in the predictive function. Dual-mode transfer occurs when both the source and target domain and task differ.
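The settings summarized in this caption can be written compactly in the notation commonly used in the transfer learning literature (e.g., Pan and Yang, 2010). The symbols below are assumptions introduced for illustration; they may differ from the notation of Definition 2.1, and the equality conditions for the single-mode settings are inferred from the hierarchy rather than stated in the text.

```latex
\begin{align*}
  \text{Domain:}\quad & \mathcal{D} = \{\mathcal{X},\, P(X)\}
      && \text{(feature space, marginal distribution)} \\
  \text{Task:}\quad & \mathcal{T} = \{\mathcal{Y},\, f\}
      && \text{(label space, predictive function)} \\
  \text{Domain transfer:}\quad & \mathcal{D}_S \neq \mathcal{D}_T,\ \mathcal{T}_S = \mathcal{T}_T \\
  \text{Task transfer:}\quad & \mathcal{D}_S = \mathcal{D}_T,\ \mathcal{T}_S \neq \mathcal{T}_T \\
  \text{Dual-mode transfer:}\quad & \mathcal{D}_S \neq \mathcal{D}_T,\ \mathcal{T}_S \neq \mathcal{T}_T
\end{align*}
```

Here the subscripts S and T denote the source and target spaces. A domain differs as soon as its feature space or its marginal distribution differs, and a task differs as soon as its label space or its predictive function differs.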

2.2. Taxonomy of transfer learning in robotics

Transfer learning in robotics builds on the three fundamental concepts of robot, environment, and task. A

Transfer Learning in Robotics.

It is important to emphasize that, unlike transfer learning in machine learning which only involves disembodied agents, the agent’s

Inspired by the hierarchical taxonomy defined for transfer learning in the machine learning community, we propose a hierarchical taxonomy for transfer learning in robotics based on the relationship between the source and target robots, environments, and tasks, as shown in Figure 5. Specific illustrative examples of its categories are depicted in Figure 6. Our taxonomy considers the following settings:

(1) Robot transfer learning:

(2) Environment transfer learning:

(3) Task transfer learning:

(4) Dual-mode transfer learning:

(5) Triple-mode transfer learning:

Notice that, depending on the relationship between the source and target spaces, our Definition 2.2 intrinsically refers to related fields, some of which received significant attention over the years. In Figure 2 (bottom), we notably observe that imitation learning is the most mentioned transfer learning field, followed by sim-to-real and domain adaptation. In this sense, we view transfer learning in robotics as an umbrella term that encompasses “imitation learning,” “learning from demonstrations,” “sim-to-real,” “domain adaptation,” “meta-learning,” “knowledge transfer,” “skill transfer,” “motion retargeting,” “embodiment transfer,” “morphology transfer,” and “kinematic transfer,” among others.

Hierarchical taxonomy of transfer learning in the context of robotics. Robot, environment, and task transfer occur when the source and target robot, environment, and task differ, respectively. Dual-mode and triple-mode transfer occur when two of these modalities and the three of them differ, respectively.
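As a minimal sketch of how this taxonomy could be applied, the snippet below names the transfer setting from the modes that differ between a source and a target context. The `Context` class, its string fields, and the function name are hypothetical. Comparing labels for equality is a crude stand-in for the graded similarity that real robots, environments, and tasks exhibit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Context:
    """Hypothetical description of a learning situation (illustrative only)."""
    robot: str
    environment: str
    task: str


def transfer_setting(source: Context, target: Context) -> str:
    """Name the transfer setting from the modes that differ between source and target."""
    differing = [
        mode for mode in ("robot", "environment", "task")
        if getattr(source, mode) != getattr(target, mode)
    ]
    if not differing:
        return "no transfer needed"
    if len(differing) == 1:
        return f"{differing[0]} transfer"
    if len(differing) == 2:
        return "dual-mode transfer (" + " + ".join(differing) + ")"
    return "triple-mode transfer"


# Example loosely following Figure 1: same task and environment, different robot.
source = Context("fixed-base dual-arm", "factory on Earth", "place box on conveyor")
target = Context("humanoid", "factory on Earth", "place box on conveyor")
print(transfer_setting(source, target))
```

In practice, deciding whether two robots, environments, or tasks "differ" is itself part of the open problem discussed in this paper, so the equality test would have to be replaced by a suitable similarity measure.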

Illustration of the categories of the hierarchical taxonomy for transfer learning in robotics. The humanoid dual-arm robot ARMAR-III

3. Successes of transfer learning in robotics

Change of environment or domain as in

3.1. Environment transfer

Changes in environmental conditions (Kramberger et al., 2016) or in the complexity of the environment where the task is being executed (Vosylius and Johns, 2023) are examples of generalization to a new environment. However, the environment (domain) can differ in other aspects that go beyond the setting: for example, contact conditions or other physical conditions might not be the same (Muratore et al., 2022). One such example is transfer from simulation to reality, or sim-to-real.

Perhaps unjustly, transfer learning in robotics is often equated with sim-to-real, where experience is typically obtained in one domain (the simulation) and exploited to accelerate learning in the target domain (the real world). Several reviews cover sim-to-real transfer learning in robotics, for example (Muratore et al., 2022; Zhao et al., 2020), attesting to the large body of work in this field. The gist of sim-to-real lies in the notion that collecting the data for modern (deep) learning and other AI algorithms in the real world is too expensive in terms of time and resources to scale up (Muratore et al., 2022). Therefore, the data is collected in simulation, despite the difference between the real and simulated domains. This difference, referred to as the “reality gap” (Collins et al., 2019), needs to be overcome for real-world execution, which is done using transfer learning. Since collecting data in the real world is so time-consuming and expensive, researchers might instead change the target domain to a different simulation, ending up with sim-to-sim methodologies. These are applied to demonstrate the behavior of transfer learning methodologies.

Different practices have been proposed for sim-to-real transfer learning, starting with realistic modeling (Muratore et al., 2022). No matter how accurate, modeling will never fully cover all the aspects of the real world (Muratore et al., 2022), thus other approaches have emerged. Domain randomization, such as randomization of image backgrounds, of physical parameters of objects and robot actions, or of controller parameters (Höfer et al., 2021), is a common approach. By randomizing over, for example, physical parameters, the approach tries to cover the entire spectrum of these parameters in the hope that this includes the parameters that describe the real world. Even so, one-shot transfer learning is seldom successful (Zhao et al., 2020), and additional learning is required, for example using reinforcement learning (Ada et al., 2022), back-propagation (Chen et al., 2018), or both (Lončarević et al., 2022). If there are significantly fewer learning iterations in the target domain, the process is called few-shot transfer learning (Ghadirzadeh et al., 2021). Few-shot transfer learning has notably been applied to sim-to-real transfer in robotics (Bharadhwaj et al., 2019; Shukla et al., 2023). Given that more information can be available in the simulation, the notion of privileged learning was introduced, where the privileged information is used to train a high-performance policy, which in turn trains a proprioceptive-only student policy (Lee et al., 2020a). The idea was very successfully demonstrated in quadrupedal locomotion by more than one group (Kumar et al., 2022; Lee et al., 2020a), and is general enough to be applied to very different tasks, such as excavator walking (Egli and Hutter, 2022) and even robotized handling of textiles (Longhini et al., 2022).
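The domain randomization idea described above can be sketched as follows: before each simulated episode, the physical parameters are re-sampled from broad ranges so that the collected experience spans many plausible worlds. The parameter names, the ranges, and the placeholder `run_episode` function are assumptions made for illustration. They do not correspond to any particular simulator or to the cited works.

```python
import random

# Hypothetical parameter ranges; real ranges depend on the robot and simulator.
PARAM_RANGES = {
    "friction": (0.4, 1.2),
    "object_mass_kg": (0.1, 2.0),
    "motor_gain_scale": (0.8, 1.2),
}


def sample_sim_params(rng: random.Random) -> dict:
    """Draw one set of physical parameters uniformly from the ranges above."""
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in PARAM_RANGES.items()}


def run_episode(params: dict) -> float:
    """Placeholder for a simulator rollout; returns a dummy score here."""
    return -abs(params["friction"] - 0.8)


def collect_randomized_rollouts(num_episodes: int, seed: int = 0) -> list:
    """Collect (params, score) pairs, re-sampling the parameters every episode."""
    rng = random.Random(seed)
    rollouts = []
    for _ in range(num_episodes):
        params = sample_sim_params(rng)
        rollouts.append((params, run_episode(params)))
    return rollouts


rollouts = collect_randomized_rollouts(num_episodes=5)
print(len(rollouts), sorted(rollouts[0][0]))
```

A policy trained on such rollouts sees a distribution of dynamics rather than a single model. How wide the ranges should be is a design choice that trades robustness against task performance.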

3.2. Task transfer

Transferring robot walking from one domain to another can be considered more than just domain transfer, as walking itself can be different for different environments. For instance, a pacing gait learned to walk on smooth ground might not be stable enough for walking on mountainous terrains. Thus, walking on mountainous terrains can be seen as a novel task, which may benefit from transferring previously learned gaits adapted to other terrains. Moreover, walking is not an isolated instance: if the robot can learn to throw accurately at one target, a modulation of the throwing task to aim at a different target can be considered at least a different instance of the same task, if not a different task overall.

Such transfers from one (or several) task instances to a new one have been utilized in robotics before, and were often referred to as generalization. For example, fast learning from a small set of demonstrations was applied with nonlinear autonomous dynamical systems (DS), which have the ability to generalize motions to unseen contexts (Khansari-Zadeh and Billard, 2011). Similarly, a set of dynamical systems in the form of dynamic movement primitives was used to transfer knowledge from known to unknown situations in positions (Ude et al., 2010) and in torques (Deniša et al., 2016). Probabilistic movement primitives (ProMPs) encode complete families of motions (Paraschos et al., 2013), TP-GMMs adapt to changes of predefined local frames (Calinon, 2018), and Mixture Density Networks adapt a learned motion primitive to new targets specified in a different space (Zhou et al., 2020). Generalization was even termed inter-task transfer learning (Fernández et al., 2010).
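To illustrate how a movement primitive can generalize a demonstrated motion to a new target, the sketch below fits a simplified one-dimensional dynamic movement primitive to a minimum-jerk demonstration and then replays it toward a different goal. It uses a common textbook formulation; the gains, the number of basis functions, and the demonstration are assumptions, and it is not the implementation used in the cited works.

```python
import math

ALPHA_Z, BETA_Z, ALPHA_X = 25.0, 6.25, 4.0  # assumed, commonly used gains


def min_jerk(s):
    """Minimum-jerk motion from 0 to 1 for s in [0, 1]: position, velocity, acceleration."""
    y = 10 * s**3 - 15 * s**4 + 6 * s**5
    yd = 30 * s**2 - 60 * s**3 + 30 * s**4
    ydd = 60 * s - 180 * s**2 + 120 * s**3
    return y, yd, ydd


def basis(x, centers, widths):
    """Gaussian basis activations at phase x."""
    return [math.exp(-h * (x - c) ** 2) for c, h in zip(centers, widths)]


def fit_dmp(n_basis=10, dt=0.01):
    """Fit forcing-term weights to a 1 s demonstration from y0 = 0 to g = 1."""
    centers = [math.exp(-ALPHA_X * i / (n_basis - 1)) for i in range(n_basis)]
    widths = [1.0 / (centers[i + 1] - centers[i]) ** 2 for i in range(n_basis - 1)]
    widths.append(widths[-1])
    num, den = [0.0] * n_basis, [1e-10] * n_basis
    y0, g = 0.0, 1.0
    for k in range(int(1.0 / dt) + 1):
        t = k * dt
        y, yd, ydd = min_jerk(t)
        x = math.exp(-ALPHA_X * t)                      # phase variable
        f_target = ydd - ALPHA_Z * (BETA_Z * (g - y) - yd)
        s = x * (g - y0)                                # scaling of the forcing term
        for i, psi in enumerate(basis(x, centers, widths)):
            num[i] += psi * s * f_target                # locally weighted regression
            den[i] += psi * s * s
    return centers, widths, [n / d for n, d in zip(num, den)]


def rollout(model, y0, goal, duration=2.0, dt=0.01):
    """Integrate the primitive with explicit Euler steps toward an arbitrary goal."""
    centers, widths, weights = model
    y, z, x = y0, 0.0, 1.0
    path = [y]
    for _ in range(int(duration / dt)):
        psi = basis(x, centers, widths)
        f = sum(p * w for p, w in zip(psi, weights)) / (sum(psi) + 1e-10)
        f *= x * (goal - y0)
        z += dt * (ALPHA_Z * (BETA_Z * (goal - y) - z) + f)
        y += dt * z
        x += dt * (-ALPHA_X * x)
        path.append(y)
    return path


model = fit_dmp()
new_path = rollout(model, y0=0.0, goal=2.0)  # goal never seen in the demonstration
print(round(new_path[-1], 3))
```

Because the forcing term vanishes with the phase variable, the motion converges to the new goal while keeping the overall shape of the demonstration, which is the kind of generalization the paragraph above refers to.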

Task transfer has also been tackled via meta-learning. In the meta-learning setting, a model is trained on a variety of tasks so that new tasks are solved by using none (zero-shot) or only a limited amount (few-shot) of additional training data (Finn et al., 2017; Nichol et al., 2018). For instance, MAML (Finn et al., 2017) was shown to generalize to new goal velocities and directions in the half cheetah and ant locomotion tasks of the Gymnasium benchmark (Towers et al., 2023) faster than conventional approaches. Other meta-learning approaches tackle the transfer problem from a different perspective by learning loss functions (Bechtle et al., 2021, 2022). The meta-learned loss functions generalize to different tasks, thus alleviating the need to design task-specific losses.
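The following toy example sketches the meta-learning idea of training an initialization over many tasks so that a few gradient steps suffice on a new one. It uses a first-order, Reptile-style outer update rather than MAML, and the task family (scalar regressions `y = slope * x` with slopes drawn from [1, 3]) is an assumption chosen to keep the code short.

```python
import random

XS = [-1.0 + 2.0 * i / 19 for i in range(20)]  # fixed inputs shared by all tasks


def loss_grad(w, slope):
    """Gradient of the mean squared error of y = w * x against y = slope * x."""
    return sum(2.0 * (w * x - slope * x) * x for x in XS) / len(XS)


def adapt(w, slope, steps=5, lr=0.1):
    """Inner loop: a few gradient steps on a single task."""
    for _ in range(steps):
        w -= lr * loss_grad(w, slope)
    return w


def reptile(meta_iters=500, meta_lr=0.1, seed=0):
    """Outer loop: move the initialization toward each task's adapted weight."""
    rng = random.Random(seed)
    w = 0.0
    for _ in range(meta_iters):
        slope = rng.uniform(1.0, 3.0)      # sample a training task
        w_adapted = adapt(w, slope)
        w += meta_lr * (w_adapted - w)     # first-order meta-update
    return w


w_meta = reptile()
new_slope = 2.5                            # unseen task
error_meta = abs(adapt(w_meta, new_slope) - new_slope)
error_scratch = abs(adapt(0.0, new_slope) - new_slope)
print(error_meta < error_scratch)
```

After meta-training, the initialization sits near the middle of the task family, so the same five adaptation steps end closer to the new task than when starting from zero.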

Thus, in a broad sense of Definition 2.2, such approaches already propose solutions for

3.3. Robot transfer

The above-mentioned approaches use knowledge from several instances of a task. However, learning even one instance of a task can pose a challenge. Imitation learning, where human skill knowledge is transferred to a robot, has been thoroughly researched as a means of learning task models and executing them on a robot (Billard et al., 2008; Ravichandar et al., 2020). Imitation learning (IL), also known as programming by demonstration (PbD), is in a strict sense an example where the task and the environment remain the same, but the agent is different,

4. Challenges and promising research directions

The aforementioned examples show that knowledge can be transferred across several robots, tasks, and environments, thus highlighting the potential of transfer learning for robotics. However, to realize the full potential of transfer learning in robotics, several key questions remain to be answered, in particular how to identify the similarities and differences across tasks, environments, and robots in order to single out what should be transferred and when. In this section, we describe the key challenges that currently constitute roadblocks on the way to the future of transfer learning in robotics.

4.1. Abstraction levels in robotics

Humans and some animals, such as great apes, acquire cognitive skills via the concept of social learning (Whiten and Ham, 1992), whose main component is to copy (transfer) behavior from one individual to another. Social learning takes place at different levels depending on the goal and context. In biology, the lowest level of transfer corresponds to

These cognitive skill levels can also be roughly identified in robotics, where they intrinsically correspond to different

Cognitive skill levels in biology, corresponding abstraction levels in robotics, and associated robotic capability stack. A higher level of abstraction eases the transfer to different agents, environments, and tasks, but requires increasingly complex robot capabilities. Namely, transfer at a given abstraction level requires the robot to be endowed with abilities ranging from the bottom to the corresponding level of the capability stack.

To elaborate on the different levels of “abstraction,” consider the task of transferring a grasp performed by a

It is important to notice that the abstraction level has a direct influence on the capabilities that are required for successful transfer across robots, environments, and tasks. In particular, transfer at a given abstraction level requires abilities ranging from the bottom of the robot capability stack onto the abilities of the current level (see Figure 7-

In this sense, we contend that investigating transfer learning methods across the entire robot capability stack is of utmost importance. In particular, we believe that bridging the gap between high-level semantic task transfer (Driess et al., 2023) and low-level execution of various tasks with different robots in the real world is a crucial challenge for transfer learning in robotics. These require

4.2. Robotics transformers

As previously mentioned, the use of large pre-trained foundation models (Bommasani et al., 2021) to learn to transfer is enticing. Several large transformer-based models have been adapted for use in robotics, resulting in so-called

The subsequent RT-2 (Brohan et al., 2023a) is a vision-language-action model based on vision-language models (Chen et al., 2023b; Driess et al., 2023) trained on web-scale data and tuned with robotic actions. The largest RT-2 consists of 55 billion parameters. The increased performance of RT-2 compared to RT-1 and other adjusted baseline models (such as VC-1 (Majumdar et al., 2023), R3M (Nair et al., 2022), and MOO (Stone et al., 2023)) is attributed to the vision-language backbone combined with co-fine-tuning of the pre-trained model on robotics and web data, so that the model considers more abstract visual concepts as well as robot actions. Interestingly, the largest RT-2 model displays encouraging emergent capabilities: it is able to use high-level concepts acquired from the web-scale data, such as relative relations between objects, to complete tasks that were not present in that form in the robotic dataset. However, these emergent capabilities only appeared in the largest models, which require a complex cloud infrastructure to be deployed. Therefore, they are currently unsuitable for deployment on robotic platforms and self-sufficient autonomous systems. Moreover, the model is not able to produce motions that are not covered by the large robotics dataset. Furthermore, the size of the model (55 billion parameters) can slow inference down to 1 Hz.

Overall, robotics transformers incorporate high-level semantic reasoning capabilities directly into the robotic actions. This is equivalent to fusing the capability stack of Figure 7 into a single monolithic model. This approach comes at the price of reduced low-level performance and limited capabilities compared to traditional methods that output continuous actions, or direct force control for compliant tasks, among others. In addition, fusing the capability stack also results in big and cumbersome models, which are difficult to deploy. In this sense, decomposing the capability stack may yield scalable models with higher performance in robotics tasks.

Importantly, robotics transformers still offer potential beyond their usage as an end-to-end controller. Such models can potentially be used in a similar fashion as large pre-trained models have been used in machine learning to obtain compact or universal representations. In this context, it is worth highlighting RT-X (Padalkar et al., 2023), the latest iteration of the robotics transformers, which aggregated 60 robotic datasets with 22 different manipulator embodiments and made this data suitable for the robotics transformer architecture. Such open-source tools and datasets are crucial to bootstrap research in transfer learning for robotics.

4.3. Universal representations

Pre-trained representations are widely popular both in machine learning and in robotics (Pari et al., 2022). For instance, ImageNet (Deng et al., 2009) was often used to acquire low-dimensional image representations for picking with suction and parallel grippers (Yen-Chen et al., 2020), contact-rich high-dimensional dexterous manipulation tasks (Shah and Kumar, 2021), and household tasks such as scooping involving tools (Liu et al., 2018). The main advantage of such representations is that they can be leveraged for many different downstream tasks with little adaptation. Although these representations are promising, they still require fine-tuning to be transferred to different settings. In this sense, robotics would benefit from truly universal representations that would be intrinsically transferable between robots, environments, and complex tasks without additional training.

In this context, adapting methods such as

Alternatively, universal representations may be constructed by tasking models with so-called

Moreover, the advent of large language models and visual language models brought a new breed of representation models that have been rapidly applied in all areas of robotics, for example, in planning (Huang et al., 2022; Shah et al., 2023), manipulation (Jiang et al., 2022; Khandelwal et al., 2022; Ren et al., 2023), and navigation (Gadre et al., 2022; Lin et al., 2022; Parisi et al., 2022). Such models are excellent candidates to harvest novel universal representations for robotics.

Some large visual language models jointly account for different modalities by encoding them in a shared latent space. For instance, CLIP (Radford et al., 2021) is pre-trained on a large dataset of images and associated textual descriptions. The model maps both modalities into a shared latent space using a contrastive loss function. The idea of combining representations from different modalities into a shared latent space was also explored in robotics. For instance, Tatiya et al. (2020, 2023) learned a common latent space from haptic feature space of multiple robots. Knowledge from source robots was then transferred through the latent space to facilitate object recognition by a target robot. Lee et al. (2020b) considered specific encoders for RGB, depth, force-torque, and proprioception modalities, which were aggregated into a multimodal representation with a multimodal fusion model. This shared representation was shown to improve the sample efficiency of the manipulation policy for a peg insertion task. The case of missing modalities during inference time was considered in Silva et al. (2020). A perceptual model of the world was trained by assuming that some modalities may not be available at all times. The approach can therefore compensate for missing or corrupted modalities during execution. Such joint representations are crucial to design universal representations for transfer.
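The contrastive objective used to align two modalities in a shared latent space can be sketched as a symmetric cross-entropy over pairwise similarities, in the spirit of CLIP-style training. The snippet below is a minimal pure-Python illustration on hand-written embeddings; the function names and the temperature value are assumptions, and no encoder is trained here.

```python
import math


def normalize(v):
    """Scale a vector to unit Euclidean norm."""
    n = math.sqrt(sum(a * a for a in v))
    return [a / n for a in v]


def diag_cross_entropy(logits):
    """Mean cross-entropy where the correct class of row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)                                   # stabilise the log-sum-exp
        log_z = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_z - row[i]
    return total / len(logits)


def contrastive_loss(emb_a, emb_b, temperature=0.07):
    """Symmetric contrastive loss between paired embeddings of two modalities."""
    a = [normalize(v) for v in emb_a]
    b = [normalize(v) for v in emb_b]
    # Cosine similarity of every a-b pair, sharpened by the temperature.
    logits = [[sum(p * q for p, q in zip(u, w)) / temperature for w in b] for u in a]
    logits_t = [list(col) for col in zip(*logits)]
    return 0.5 * (diag_cross_entropy(logits) + diag_cross_entropy(logits_t))


images = [[1.0, 0.0], [0.0, 1.0]]
matching_text = [[2.0, 0.0], [0.0, 3.0]]      # same directions: aligned pairs
swapped_text = [[0.0, 3.0], [2.0, 0.0]]       # pairs deliberately mismatched
print(contrastive_loss(images, matching_text) < contrastive_loss(images, swapped_text))
```

The loss is small when each embedding is most similar to its own partner and large when partners are confused, which is what pulls matching inputs from different modalities together in the shared space.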

To be successfully leveraged in various robotic scenarios, universal representations should be expressive, while remaining simple enough to facilitate downstream applications. This is usually achieved via a dimensionality reduction process by extracting low-dimensional latent representations from data. While this latent space was usually assumed to be Euclidean, that is, flat, recent works have shown the superiority of curved spaces (manifolds such as hyperspheres, hyperbolic spaces, symmetric spaces, and products thereof) to learn representations of data exhibiting hierarchical or cyclic structures (Gu et al., 2019; López et al., 2021; Nickel and Kiela, 2017). For instance, the compositionality of visual scenes can be preserved via hyperbolic latent representations, thus improving downstream performance in point cloud analysis (Montanaro et al., 2022) and unsupervised visual representation learning (Ge et al., 2023). This suggests that rethinking inductive bias in the form of the geometry of universal representations may also be relevant for robotics applications and for transfer learning in robotics. For example, data associated with robotics taxonomies are better represented in hyperbolic spaces (Jaquier et al., 2024), and manipulation tasks encoded as graphs in the context of visual action planning (Lippi et al., 2023) may benefit from non-Euclidean representations.
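To make the difference between flat and curved latent spaces concrete, the sketch below computes the geodesic distance in the Poincaré ball, a standard model of hyperbolic space. The function name is illustrative, and the points are arbitrary examples rather than learned representations.

```python
import math


def poincare_distance(u, v):
    """Geodesic distance between two points strictly inside the unit ball."""
    def sq_norm(w):
        return sum(a * a for a in w)

    diff = sq_norm([a - b for a, b in zip(u, v)])
    denom = (1.0 - sq_norm(u)) * (1.0 - sq_norm(v))
    return math.acosh(1.0 + 2.0 * diff / denom)


origin = [0.0, 0.0]
near = [0.5, 0.0]
left, right = [-0.9, 0.0], [0.9, 0.0]

print(poincare_distance(origin, near))   # equals ln(3) for a point at radius 0.5
print(poincare_distance(left, right))    # far larger than the Euclidean distance 1.8
```

Distances grow rapidly toward the boundary of the ball, which leaves exponentially more room for the leaves of a hierarchy than a Euclidean space of the same dimension. This is one reason such geometries suit tree-like data.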

4.4. Interpretability

Interpretability and explainability of learning-based approaches are key to safely deploying robots in the real world. In particular, black-box approaches lacking human-level interpretability can severely hinder natural and safe interactions with robots. In this context, transferable universal representations should also be interpretable and explainable. To do so, approaches in the field of visual action planning (Lippi et al., 2023; Wang et al., 2019) proposed to decode the underlying representations into a human-readable format, that is, images. Alternatively, representations can be readily encoded into a human-readable format that is additionally interpretable by many other methods or software architectures. For instance, the universal scene description (USD) (Studio, 2023) was designed to interchange 3D graphics information. This format was recently enhanced by Nvidia to facilitate large, complex digital twins, that is, reflections of the real world that can be coupled to physical robots and synchronized in real time (Nvidia, 2023). USD is made from sets of data structures and APIs, which are then used to represent and modify virtual environments on supported frameworks such as Omniverse (Mittal et al., 2023), Maya (Autodesk, INC, 2019), and Houdini (SideFX, 2022). Such a framework has significant potential to be used for robotics transferability. For instance, it could be leveraged to build joint representations of the world shared across multiple robots, to share knowledge, and even to infer digital twins from sensory readings.

4.5. Benchmarking and simulation

Benchmarks and relevant metrics are key to evaluating and comparing methods, and thus have the potential to boost the development of innovative approaches. For instance, the rapid improvement of deep-learning models benefited from easily accessible benchmarks that are widely accepted by the community (Cordts et al., 2016; Deng et al., 2009; Krizhevsky, 2009; Lin et al., 2014). The robotics community also benefited from impressive strides towards unified benchmarks with efforts such as the YCB (Calli et al., 2015) and KIT (Kasper et al., 2012) object datasets, and with regularly organized benchmark competitions such as RoboCup (Kitano et al., 1997), ANA Avatar XPRIZE 4, and DARPA challenges 5. However, they all face challenges that are unique to robotics. First, as robots are real systems evolving in the real world, the deployment of any method can be highly time-consuming. Second, as previously mentioned, transferring methods to robots with different embodiments is non-trivial, which intrinsically hinders benchmarking across different research groups. Last but not least, handcrafted, highly tuned solutions usually outperform more general methods on any specific or standardized task as defined in classical benchmarks. This is especially notable for robotic manipulation, where accepted benchmarks remain scarce.

The Robothon 2023 task board challenge (So et al., 2022) is an example of a recent robotic manipulation benchmark. This board is an assembly of various relevant robotics tasks, including inserting a key into a keyhole and turning it, plugging and unplugging an ethernet connector, and pushing switches, among others, which allows the evaluation of different approaches. As required for a benchmark, the task board is standardized and its specifications are given. However, in such settings, handcrafted, or even prerecorded, motions can lead to surprisingly high scores. Randomly orienting the board before every trial was later included to discourage such solutions. Within machine learning benchmarks, handcrafted solutions are prevented by dividing the available data into training and test sets, which consist of different samples drawn from a single distribution. Analogously, the Robothon 2023 challenge would require a large number of task boards consisting of the same high-level tasks but differing in their specific geometric realization. A promising avenue to overcome the impracticability of producing numerous physical task boards would be to leverage simulators.

Modern robotic simulators, such as MuJoCo (Todorov et al., 2012), Bullet (Coumans and Bai, 2016–2021), and PhysX 6, have shown impressive improvements in various areas, including robotic assembly (Narang et al., 2022). Such simulators have the potential to generate various parametrizations of simulated boards, and thus to create training and test sets similar to those of machine learning benchmarks. In particular, such sets would be of high relevance for transferability in robotics, as they have the potential to evaluate transferability across (1) robots, (2) environments, that is, different parametrizations, and (3) tasks performed on the same board. The ultimate challenge is to overcome the sim-to-real gap when deploying the developed methods on a real, previously unknown task board using a new robot during a live competition. Such benchmarks would boost research in transferability in robotics, as well as provide valuable information on the difficulty and challenges of each transfer setting. It is worth highlighting that a wide range of methods have been developed in the field of machine learning in recent years and subsequently compiled into transfer learning libraries (Jiang et al., 2020). Such a collection of methods offers huge potential for robotics contexts. Importantly, relevant metrics must be defined to compare different approaches in different transfer settings.
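The idea of drawing training and test boards from a single distribution of parametrizations can be sketched as follows. The `BoardParams` fields, their ranges, and the split ratio are hypothetical placeholders chosen for illustration; they reflect neither the Robothon specification nor the API of any simulator.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class BoardParams:
    """Hypothetical geometric parametrization of one simulated task board."""
    yaw_deg: float            # orientation of the board on the table
    keyhole_x_mm: float       # position of the keyhole along the board
    switch_spacing_mm: float  # distance between neighboring switches


def sample_boards(n, seed=0):
    """Draw n board parametrizations from one fixed (assumed uniform) distribution."""
    rng = random.Random(seed)
    return [
        BoardParams(
            yaw_deg=rng.uniform(0.0, 360.0),
            keyhole_x_mm=rng.uniform(20.0, 80.0),
            switch_spacing_mm=rng.uniform(15.0, 40.0),
        )
        for _ in range(n)
    ]


def split_boards(boards, test_fraction=0.2, seed=0):
    """Shuffle the boards and hold out a fraction of them as unseen test boards."""
    shuffled = list(boards)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(round(test_fraction * len(shuffled))))
    return shuffled[n_test:], shuffled[:n_test]


boards = sample_boards(50)
train_boards, test_boards = split_boards(boards)
```

A method would be developed on `train_boards` only and scored on `test_boards`, so that a motion handcrafted or prerecorded for one particular board geometry no longer suffices to obtain a high score.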

4.6. Metrics for transfer learning in robotics

Two types of metrics are relevant for transfer learning in robotics, namely transfer quality metrics and transfer gap metrics.

Transfer quality metrics are crucial to evaluate and compare the performance of transfer learning algorithms. As such, they are key to the development of novel transfer learning methods. Transfer quality in machine learning is commonly measured by comparing the performance achieved on the target task with and without transfer learning. In this case, the quality metric includes not only the final performance (asymptotic performance), but also the initial benefit of the transfer (jumpstart performance), the time to reach a predefined performance threshold (time to threshold), as well as the sensitivity to different hyperparameter settings (Taylor et al., 2007; Taylor and Stone, 2009). In addition to measuring the transfer quality, further analysis of the transfer process can be conducted by comparing the number of required sub-source tasks, the number of demonstrations (Barreto et al., 2018; Zhu et al., 2020b), or the required quality (e.g., suboptimal, expert, oracle) of the source space (Zhu et al., 2020a). Recently, Chen et al. (2023a) highlighted the importance of robust unsupervised evaluation metrics for domain adaptation. Such metrics should be independent of the training method, consistent across hyperparameters and models, and robust to adversarial attacks. Most of the aforementioned transfer quality metrics can be, and in fact already are, used for transfer learning in robotics (Zhu et al., 2023).
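As a minimal sketch, the jumpstart, asymptotic, and time-to-threshold metrics can be computed from two learning curves on the target task, one obtained with transfer and one without. The function names, the averaging window, and the curves below are made-up illustrations, not results or definitions from the cited works.

```python
def jumpstart(with_transfer, without_transfer):
    """Initial performance gain obtained from transfer."""
    return with_transfer[0] - without_transfer[0]


def tail_mean(curve, window=3):
    """Average of the last `window` evaluations, used as asymptotic performance."""
    tail = curve[-window:]
    return sum(tail) / len(tail)


def asymptotic_gain(with_transfer, without_transfer, window=3):
    """Difference in final performance with and without transfer."""
    return tail_mean(with_transfer, window) - tail_mean(without_transfer, window)


def time_to_threshold(curve, threshold):
    """Index of the first evaluation reaching the threshold, or None if never reached."""
    for step, value in enumerate(curve):
        if value >= threshold:
            return step
    return None


# Made-up learning curves, e.g., success rate at successive evaluations.
scratch = [0.0, 0.1, 0.3, 0.5, 0.7, 0.8, 0.8]
transfer = [0.3, 0.5, 0.7, 0.8, 0.9, 0.9, 0.9]

js = jumpstart(transfer, scratch)
gain = asymptotic_gain(transfer, scratch)
steps_scratch = time_to_threshold(scratch, 0.8)
steps_transfer = time_to_threshold(transfer, 0.8)
```

With these illustrative curves, transfer yields a positive jumpstart and asymptotic gain and reaches the threshold earlier. A negative value of either gain would indicate that transfer hurt performance on the target task.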

Transfer gap metrics provide a measure of the discrepancy between the source and target spaces. Note that such metrics may also be used to measure performance in certain circumstances. Robotics adds a further challenge to the problem of defining suitable transfer gap metrics: transfer learning in robotics can be seen as a three-part transfer problem consisting of transfer across robots, tasks, and environments. Several metrics have been defined and directly optimized to solve each of these sub-problems. In this context, domain adaptation has received considerable attention from the machine learning community in recent years. When the distribution of the source and target domains can be reliably estimated, simple divergences, for example, the Kullback-Leibler (KL) divergence (Kullback and Leibler, 1951), the Maximum Mean Discrepancy (MMD) (Gretton et al., 2012) for labeled data, or the

4.7. Negative transfer

Importantly, transfer learning is not necessarily beneficial in all settings. Transfer learning algorithms build on systematic similarities between source and target spaces. However, if the algorithm exploits similarities that do not actually exist, the transfer can have a negative impact on the performance in the target space (Wang, 2021). This phenomenon is denoted negative transfer.

In robotics, negative transfer may occur at different levels of the robot capability stack. For instance, at the low-level control layer, transferring an inverse dynamics model learned for a source quadrotor to a target quadrotor with significantly different physical properties has been shown to lead to worse performance than using a baseline controller that disregards the inverse dynamics (Sorocky et al., 2020, 2021). Interestingly, the lower levels of the capability stack may be more susceptible to negative transfer, as low-level information, for example, inverse dynamics models, may only be transferred across closely related source and target spaces. In contrast, experience at the higher levels is more general and may be transferred across a larger range of source and target spaces.

We believe that negative transfer remains an under-investigated direction in robotics, even though it may be particularly harmful in this field. First, negative transfer may lead to potentially damaging behaviors of the target robot, while safety is a crucial aspect when deploying robots in the real world. Second, negative transfer may cause transfer learning to require a longer training time than directly learning the desired behavior in the target space, while low training time is crucial for real robots acting in the real world. Therefore, the effects and causes of negative transfer remain to be thoroughly studied, as they may be key to developing successful and reliable transfer learning algorithms tailored to robotics.

5. Conclusion: The future of transfer learning in robotics

The rise of transfer learning reflects its potential to enable robots to leverage available knowledge to learn and master novel situations efficiently. In this paper, we aimed at unifying the concept of transfer learning in robotics via a novel taxonomy acting as a bedrock for future developments in the field. Building on the successes of transfer learning in robotics, we outlined relevant challenges that have to be solved to realize its full potential. It is important to highlight that these challenges intrinsically relate to determining what can and cannot be transferred. As illustrated in this paper, transfer learning in machine learning largely relies on identifying whether the distributions of data across two domains remain similar. Identifying similarities and differences across robotic tasks amounts to more than comparing distributions. We have emphasized the need to delineate

We hope that this position paper paves the way towards successful transfer learning between robots, tasks, and environments, as well as their compositions. Reusing knowledge holds the promise of closing the performance gap between humans and robots in overcoming novel challenges and acquiring new skills and concepts.