Introduction

In public health, effective program evaluation is an essential organizational practice that supports planning and program improvement and is critical for demonstrating the results of resource investments (Brownson et al., 2017; Centers for Disease Control and Prevention, 1999). Evaluation often consists of developing strategies and metrics to improve the implementation and effectiveness of interventions and programs. In addition, evaluation identifies best practices and provides useful tools to determine whether project goals are achieved. Historically, evaluation in public health has concentrated on highly focused interventions, usually undertaken by a single investigator (Stokols et al., 2008). However, in the last few decades, there has been a growing focus on evaluating large research initiatives that are conducted by collaborative teams of scientists and are designed to impact complex, multifaceted health issues (Milojević, 2014; Trochim et al., 2008). Evaluating large initiatives is difficult because it requires examining and assessing various interconnected, dynamic elements (Croyle, 2008; Medical Research Council, 2000).

This move to large initiatives, such as transdisciplinary collaborative centers (TCCs), has called for collaborative scientific teams instead of individual scientist projects to address systemic, multifaceted public health issues (Trochim et al., 2008; Wuchty et al., 2007). Such teams bring together scientists from different professional backgrounds and often focus on more than one research project (Transdisciplinary Collaborative Centers for Health Disparities Research Program [TCC], n.d.). Table 1 provides a comparison of individual scientist projects versus large transdisciplinary centers (Trochim et al., 2008). Solving complex social, environmental, and public health problems requires perspectives and expertise from scholars from a wide range of academic disciplines (Abrams, 2006; Smedley & Syme, 2000). To solve these problems, greater collaboration is needed among scientists who are trained in different fields and who partner with community organizations (Butterfoss & Francisco, 2004).

Table 1. Characteristics of Individual Scientist Projects Compared to Large Research Initiatives.

This focus on multidisciplinary initiatives is not without critics. Some critics within the scientific community question the viability and cost-effectiveness of these endeavors and argue that these large initiatives divert valuable resources from important discipline-specific research. Critics are concerned about the value added by these programs and whether these projects are worth the extensive monetary investment (Klein, 2008, p. 20; Rimer & Abrams, 2012; Stokols et al., 2008). It remains unclear whether large research collaboratives are more productive than research projects developed by one or a few investigators, which has led to a growing body of work on the science of community–academic partnerships (CAPs). This work sheds light on the conditions under which transdisciplinary collaborative initiatives are effective (Perez et al., 2013).

Despite these concerns, government agencies provide a significant amount of funding for complex research initiatives. Specifically, the National Institute on Minority Health and Health Disparities (NIMHD) has developed TCCs designed to support partnerships and collaborations among a broad range of stakeholders to create transformative and novel infrastructure for collaborative research, implementation, and dissemination activities (TCC, n.d.). Given the considerable investment in these initiatives, it is essential to evaluate the outcomes and impacts of large, collaborative, transdisciplinary research initiatives. Through evaluation, large research partnerships can demonstrate the value of transdisciplinary research and the extent to which CAPs and their work are worth the investment (Drahota et al., 2016).

Furthermore, comprehensive evaluation models can be used to define key terms, processes, and outcomes of large transdisciplinary initiatives and to determine whether large Centers are more efficient, cost-effective, and impactful than the single-investigator model used in traditional public health research (Rutter et al., 2017). The evidence gained from comprehensive evaluations of large transdisciplinary Centers can be used to assess the effectiveness of Centers’ interventions and their social and community impacts, cost-utility, and scientific benefits.

Existing Frameworks for Large-Scale Collaborative Centers

Given that the Flint Center for Health Equity Solutions (FCHES) is a TCC, it was important to examine literature that could inform the Center’s evaluation, which moved beyond simply evaluating its projects to broadly assessing its operations, partnerships, research, and community products and impacts. To do this, we examined research on large initiatives, organizational theory, and community coalitions. Since one of the key goals of the FCHES evaluation was to measure the organization’s overall success in meeting its stated goals and objectives, the team needed to consult literature focused on ways to assess organizational performance. Martz (2013) examined five extant models used to evaluate organizational performance: the goal model, systems model, process model, strategic constituencies model, and competing values framework. From these models, the author developed an Organizational Effectiveness Checklist that incorporates each model’s strengths and serves as an interdisciplinary approach to evaluating organizational performance, grounded in the organizational, management, and evaluation fields. We considered elements of this checklist to inform our thinking about how to evaluate FCHES, specifically the presuppositions, strengths, and limitations of each model. Further, the checklist offered an interdisciplinary approach to evaluating both the processes and outcomes of the organization.

In addition to examining organizational theory, it was important to review organizational collaboration theory to understand how to evaluate complex collaborations (Gajda, 2004; Gajda & Koliba, 2008) and determine their effectiveness (Marek et al., 2015). Woodland and Hutton (2012) provide a theoretically grounded framework, the Collaborative Evaluation and Improvement Framework, that can inform the evaluation of the process and outcomes of collaboration and the selection of qualitative and quantitative data collection strategies and measurement tools for different evaluation contexts. This framework describes five phases that serve as entry points for evaluating organizational collaboration: operationalizing the construct of collaboration, identifying and mapping communities of practice, monitoring development stages, assessing integration levels, and assessing cycles of inquiry. Given that a key aspect of the success of FCHES as an organization rested on effective collaboration, this model helped identify how we could characterize collaboration and the specific attributes of collaboration that would shape our work (i.e., collaboration in the development and refinement of the roles and responsibilities of the Core and project teams), in addition to identifying the procedures and metrics that would be used to monitor and assess collaboration.

In a review of evaluation methods used to assess the effectiveness of community coalitions in public health, Kegler et al. (2020) found that 70% of the evaluations were guided by a formal theory or by a conceptual or logic model. Although quantitative community-randomized trials were identified as the “gold standard” of evaluation, only two studies used this design (10%). In contrast, a case study design was the most commonly used (37%), and data collection strategies were spread across quantitative, qualitative, and mixed methods. From their analysis, the authors suggest that developing logic models and conceptual models of change, using qualitative methods, and selecting evidence-based strategies adapted to local contexts can strengthen the understanding of the role of community coalitions in creating and supporting community change.

DuBow and Litzer (2019) advocate the iterative development and use of a theory of change to inform the evaluation of a complex national initiative. This theory of change went beyond the assumptions and logic model developed in the early stages of the initiative. It focused on providing a blueprint that articulated the connection between the organization’s activities and outcomes. The theory was developed using an iterative process with program stakeholders. It was used to prioritize the organization’s activities, provided organizing principles for reports and proposals, and shifted the evaluation to be more in line with the theory of change. These approaches informed the interactive process used to develop and refine the FCHES Center Evaluation and how this framework was used to inform Center activities.

Only a few studies have examined the evaluation of large transdisciplinary initiatives similar to FCHES, and these studies informed the development of the proposed framework for a health equity–focused TCC. Trochim and colleagues (2008) have provided suggestions for evaluating large research initiatives, including developing comprehensive conceptual models. The authors argue that these models should use participatory and collaborative evaluation approaches, incorporate integrative mixed methods, and adapt to the various stages of the initiatives’ development (Trochim et al., 2008). Further, they concluded that there is a need to improve funding and organizational capacity for evaluation to ensure that evaluation is sufficiently integrated into all aspects of planning and implementing large collaborative initiatives. Klein (2008) also examined transdisciplinary research and identified seven generic principles to attend to when evaluating this type of work. These principles include the variability of goals; leveraging of integration; management, leadership, and coaching; and effectiveness and impact (Klein, 2008).

Informed by the emerging literature on evaluating large transdisciplinary collaborative initiatives, including the Centers for Disease Control and Prevention framework for program evaluation to evaluate a TCC (Centers for Disease Control and Prevention, 1999), FCHES developed an evaluation framework. The authors and community partners designed this theoretically based evaluation framework. The evaluation framework provides a comprehensive and systematic approach to understand, monitor, and evaluate the Center’s progress toward achieving its aims and goals. The framework includes components of a logic model and draws on concepts proposed by theories on research–community partnerships, organizational theory, economic evaluation, dissemination, and implementation science. Moreover, the Center evaluation framework focuses on four primary areas: (1) Center operations; (2) CAP and collaboration; (3) allocation and utilization of human and financial resources, including a cost-benefit analysis; and (4) dissemination of community and research products.

This article presents a model for evaluating FCHES, a large TCC conducting community-based participatory research on health disparities and chronic diseases. FCHES is an assembly of stakeholders from various specialties, including public health researchers, policy makers, health officials, community organizations, and faith-based partners, who work together to mount evidence-based and promising approaches to prevent chronic disease and reduce health inequities. This report begins with a discussion of FCHES and the development of the conceptual framework used to frame the evaluation. Next, information on the data collection and analysis is provided. The report ends with an overview of the Year-2 pilot evaluation findings and lessons learned that could be used to inform the review of other transdisciplinary research Centers.

FCHES Overview and Evaluation Framework

FCHES is a NIMHD-funded TCC for health disparities research on chronic disease prevention within the Department of Health and Human Services defined Region 5 (

The overall aim of FCHES is to serve as a regional focal point that creates, nurtures, and sustains productive transdisciplinary partnerships that provide health equity solutions for the elimination of health disparities. The long-range goal of FCHES is to eliminate disparities in physical and behavioral health through developing, implementing, and disseminating community-based multilevel interventions and creating sustainable health equity solutions in partnership with a broad section of multisectoral stakeholders. To evaluate the extent to which these aims and goals were being realized, it was essential to develop a comprehensive conceptual framework to guide the Center evaluation.

Development of the FCHES evaluation conceptual framework

As a part of the NIMHD proposal, FCHES architects created a comprehensive evaluation plan designed to monitor the progress of the proposed activities, facilitate ongoing project management, and ensure the successful completion of stated aims. The evaluation plan detailed in the proposal focused on evaluating administrative functioning, scientific accomplishments in translational activities, scope and impact of research, innovation, collaboration and communication, integration and synergy, and funds management. Also, key milestones, benchmarks, and metrics were identified for each Core and project. Although these elements were clearly articulated in the proposal, the evaluation team developed an evaluation framework that served as a conceptual lens through which evaluation efforts were focused.

The architects of the Center found it important to have a Center evaluator (first author), whose role was to monitor the conduct and track the progress of the Center’s research, implementation, and dissemination activities. The Center evaluator, who was external to the Center, worked closely with the Administration Core, Center principal investigator (PI), and the Center Steering Committee to provide ongoing recommendations and feedback that improved the overall efficacy of the Center’s progress toward its aims and goals. Furthermore, the Center evaluator provided a yearly comprehensive evaluation used to improve Center productivity, work productivity, and collaborative synergy. The Center evaluator was also responsible for directing the development of the evaluation framework, protocols, and metrics used to inform the Center’s work and provide a systemic approach to evaluating FCHES’s reach and impact. Although the Center evaluator was central to the work, an evaluation team was created to develop the framework, identify metrics for evaluation, and collect and analyze evaluation data.

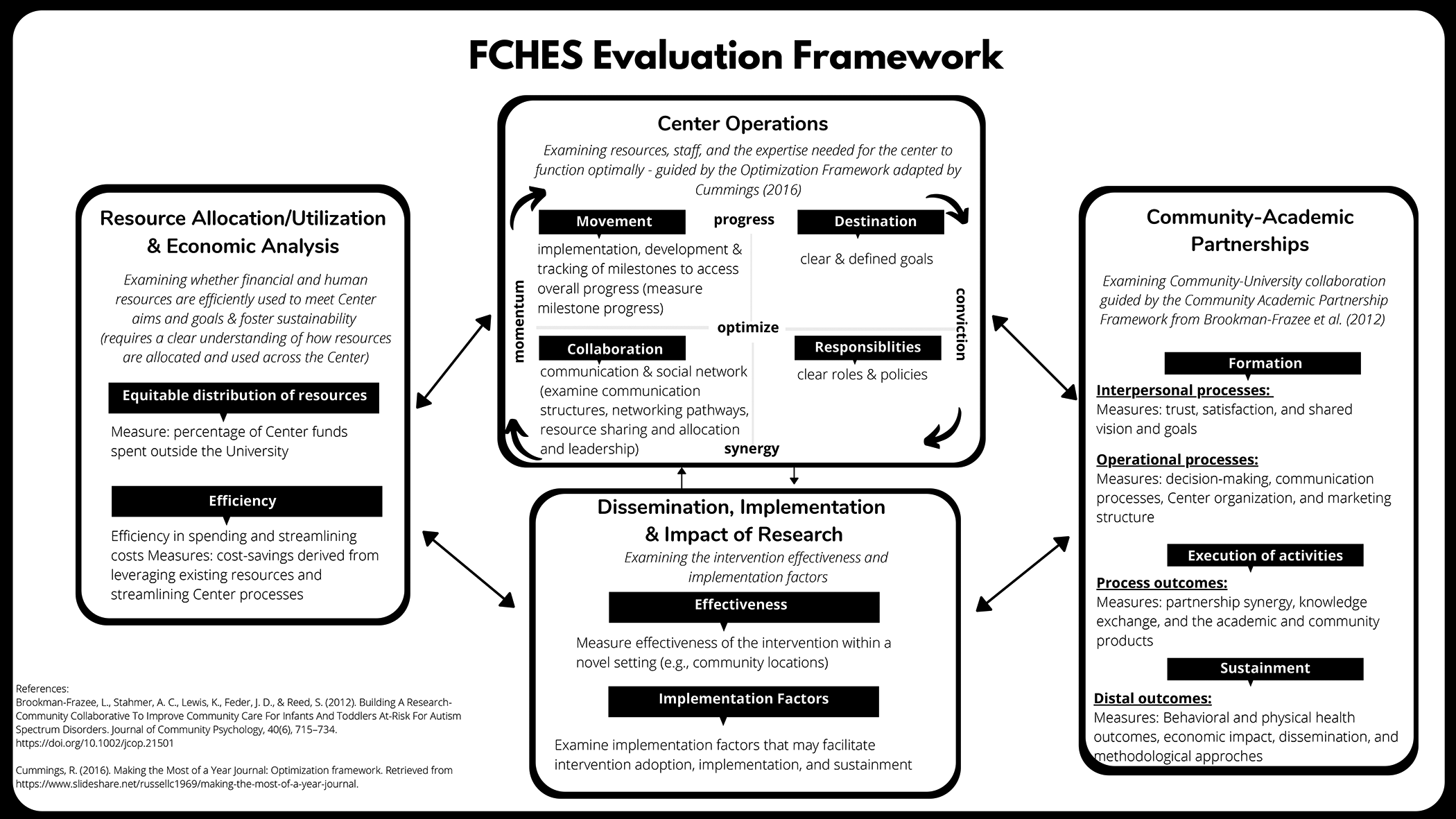

In the sections that follow, we provide an overview of the overarching FCHES evaluation framework and describe the various theoretical models that comprise this framework. The conceptual framework shaping the evaluation moved beyond merely assessing the progress and impact of the interventions, which is the focus of most evaluations of large initiatives. The framework was used to assess the Center’s functional operations, how resources are allocated and utilized, the active and consistent collaboration between academic and community partners, and the dissemination and impact of the Center’s research products and economic impacts. To that end, the evaluation team, which included an external evaluator, a research assistant, a community partner, an academic partner, and an economist, developed a comprehensive overarching framework. Drawing on concepts and methods from multiple disciplines, this framework was used to guide the evaluation of a TCC (FCHES). The four integrated theoretical models underlying the comprehensive evaluation focus on organizational optimization (Cummings, 2016), economic evaluation and cost-effectiveness, research–community partnership (Israel et al., 2005), and dissemination and implementation science (Robinson et al., 2005). Figure 1 illustrates the linkages between the four theoretical models informing the evaluation, reflecting how the elements interact with and influence one another. The framework was refined through a collaborative process with Center leadership and community partners to ensure that it reflected the elements important to the various FCHES stakeholders. Later in the paper, we discuss the evaluation questions, data collection, analysis methods, and results from a pilot study utilizing this conceptual framework.

Figure 1. Flint Center for Health Equity Solutions evaluation.

Center operations

For FCHES to meet its goals, it had to operate as a cohesive organization, and each Center component had to function optimally. A critical part of an evaluation framework must draw from organizational theory, which explains the behavior of groups who interact with each other to perform the activities intended to accomplish a common goal (Jones, 2013). Further, it was essential to understand the kinds of behaviors that would support the Center in efficiently meeting its objectives. Hence,

The organizational optimization framework used four major components: destination, responsibilities, collaboration, and movement. The

Organizational optimization began by informing stakeholders of the FCHES overall aims, goals, and specific objectives. After examining and refining these goals, each Core and project developed clearly defined milestones, which were reviewed and approved by the Administration Core and programmed into a milestone tracking system discussed in detail later in the report. While these milestones were being developed, roles and responsibilities were defined, and various training and professional development activities were implemented so that all members of FCHES were empowered in their roles and understood their duties and responsibilities. We also examined how various projects and tasks were managed and executed to accomplish these activities. Understanding the nature of collaboration within the Center was essential to evaluating Center operations and overall Center effectiveness. Hence, we examined Center communication structures, networking pathways, resource sharing and allocation, and leadership to assess collaboration. Movement was evaluated by analyzing data from the milestone tracking system implemented across all the Center Cores and projects. In addition, a list of potential barriers to progress was created to understand the factors that impeded Center progress, and a feedback system was implemented to address these barriers as they arose (see Appendix 1).

CAP

Critical to the design of FCHES and its primary aim was to harness the collective energy, expertise, and commitments of community stakeholders to conceptualize, create, build, and sustain health equity solutions for the Flint community and the region. As a result, the Center was community-partnered from its conception. All aspects of the Center’s operations and success rest on the extent to which the community is involved with the Center’s overall leadership, structure and governance, program implementation, and research dissemination. It was a priority for the Center to use the community-based participatory research model (Israel et al., 2005). With this focus on community-engaged research, one of the critical components of the evaluation model addressed the

This model proposes that member engagement facilitates the achievement of positive outcomes because committed and invested partners participate more actively to achieve the goals of the partnership. It is through this investment and commitment that collaborative synergy is achieved between the Center and its partner organizations (the consortium partnership), which promotes the achievement of the goals and aims of the Center (Figure 2). This model was used to understand and evaluate the nature of the collaboration between the research team and the community partners during each of the three stages of Center development (formation, execution of activities, and sustainment). The formation years of the grant, Years 1 and 2, were the focus of the pilot evaluation.

Figure 2. Community–academic partnership framework (Brookman-Frazee et al., 2012; Drahota et al., 2016).

Figure 2 represents the CAP framework that was used to examine and assess community collaboration. The leading agency, Michigan State University (MSU), consulted with community organizations from the inception of FCHES. The consortium partner organizations represented many different facets of the community and were engaged in all aspects of Center operations and leadership. For Years 1 and 2, this model was used to understand the collaborative process that included interpersonal and operational processes. To examine the interpersonal processes, we focused on understanding the facilitating and hindering factors that impacted the quality of relationships and communication among partners. Such factors included trust, satisfaction, shared vision, and Center goals. The operational processes were also examined, including decision-making and communication processes, Center organization, and meeting structure. During Years 3 and 4, the execution of the activities phase, the evaluation will be focused on process outcomes, which include partnership synergy, knowledge exchange, and the academic and community products produced by FCHES. For Years 5 and beyond, various data will be collected to understand the partnership’s distal outcomes.

Resource allocation and utilization and economic analysis

One of the critical concerns raised in the literature on transdisciplinary research Centers is whether the resources used in these Centers would be better spent using the individual scientist projects. The

A critical component of the evaluation framework is the economic evaluation. The economic evaluation considers the overall economic impact of the Center and includes cost and cost-effectiveness analyses of the two research projects. The overall FCHES economic impact evaluation relies on using IMPLAN, an input/output (I/O) model to represent the interrelationships among economic sectors (Anusha & Mehmood, 2018; Brigid & Hawkins, 2019). For context, I/O data show the flow of commodities to industries from producers and institutional consumers for any given region (in this case, Genesee County, MI). These trade flows built into the I/O model allow the estimation of the impacts of one sector on other sectors. Due to the nature of the NIMHD investment in research, we selected a single industry component—scientific research and development services—to estimate these economic impacts. The model relies on the region- and county-specific multipliers to estimate the total effect of a project in an area.
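To make the multiplier logic concrete, the following minimal sketch shows how an I/O model can turn direct spending in one sector into a total output effect through a Leontief inverse. It is not IMPLAN and uses no IMPLAN data: the two-sector coefficient matrix, the "all other industries" sector, and the spending figure are hypothetical values chosen only for illustration, and the sketch covers direct and indirect effects only.

```python
# Illustrative two-sector input-output sketch.
# All numbers are hypothetical; this is not IMPLAN output or IMPLAN data.

def leontief_inverse_2x2(a):
    """Return (I - A)^-1 for a 2x2 technical-coefficient matrix A."""
    m00, m01 = 1.0 - a[0][0], -a[0][1]
    m10, m11 = -a[1][0], 1.0 - a[1][1]
    det = m00 * m11 - m01 * m10
    if det == 0:
        raise ValueError("I - A is singular; no finite multipliers exist")
    return [[m11 / det, -m01 / det],
            [-m10 / det, m00 / det]]

# A[i][j]: dollars of input from sector i required per dollar of output of sector j.
# Sector 0 = scientific research and development services (from the text).
# Sector 1 = all other industries in the region (assumed for illustration).
A = [[0.10, 0.05],
     [0.30, 0.20]]

L = leontief_inverse_2x2(A)

# The output multiplier for a sector is the column sum of the Leontief inverse:
# total regional output generated per dollar of final demand in that sector.
research_multiplier = L[0][0] + L[1][0]

direct_spending = 1_000_000.0  # hypothetical research spending in the region
total_output_effect = direct_spending * research_multiplier
indirect_effect = total_output_effect - direct_spending

print(f"Output multiplier: {research_multiplier:.3f}")
print(f"Total output effect: ${total_output_effect:,.0f}")
print(f"Indirect effect: ${indirect_effect:,.0f}")
```

The column sum is used because spending enters as final demand in a single sector (here, research), and each entry in that column captures how much output every sector must produce, directly and through inter-industry purchases, to satisfy one dollar of that demand.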

In addition, the economic analyses of the two interventions (SFF and CC) include cost, cost-benefit, and cost-effectiveness analyses. Most economic analyses are conducted and reported retrospectively, after the interventions are completed. We are currently conducting prospective cost analyses of the SFF and CC interventions to capture and report the costs and immediate consequences of intervention activities, to inform day-to-day aspects of the interventions in real time, and to support early planning for potential scale-up. Cost analyses will be followed by cost-benefit and cost-effectiveness analyses upon completion of the CC and SFF interventions. Cost-effectiveness analyses of the CC multilevel intervention will use the primary study outcome, the average change in systolic blood pressure between the baseline and 6-month assessments. Cost-benefit analyses compare the intervention costs to monetized benefits derived from improvements in primary or secondary outcomes. Cost-effectiveness analyses are also planned for the SFF interventions, focusing on the primary (family resilience) and secondary (behavioral health) SFF study outcomes and on the implementation and adoption of evidence-based substance-use programs among local behavioral health providers.
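As a rough illustration of the two kinds of calculation described above, the sketch below computes an incremental cost-effectiveness ratio (cost per additional unit of effect) and simple cost-benefit summaries. The comparator arm, the per-participant costs, the effect sizes, and the monetized benefit are all hypothetical assumptions, not FCHES results, and the function names are illustrative only.

```python
# Hypothetical cost-effectiveness and cost-benefit calculations.
# Every number below is illustrative and is not drawn from the SFF or CC studies.

def icer(cost_intervention, cost_comparator, effect_intervention, effect_comparator):
    """Incremental cost-effectiveness ratio: extra cost per extra unit of effect."""
    delta_effect = effect_intervention - effect_comparator
    if delta_effect == 0:
        raise ValueError("No difference in effect; the ICER is undefined")
    return (cost_intervention - cost_comparator) / delta_effect

def net_benefit(monetized_benefits, costs):
    """Monetized benefits minus costs."""
    return monetized_benefits - costs

def benefit_cost_ratio(monetized_benefits, costs):
    """Monetized benefits per dollar of cost."""
    if costs <= 0:
        raise ValueError("Costs must be positive")
    return monetized_benefits / costs

# Effect measure: mean reduction in systolic blood pressure (mm Hg)
# between baseline and the 6-month assessment (assumed values).
cost_per_participant_intervention = 450.0
cost_per_participant_comparator = 150.0   # assumed comparison condition
sbp_reduction_intervention = 8.0
sbp_reduction_comparator = 2.0

cost_per_mmhg = icer(
    cost_per_participant_intervention,
    cost_per_participant_comparator,
    sbp_reduction_intervention,
    sbp_reduction_comparator,
)

monetized_benefit_per_participant = 900.0  # assumed monetized outcome improvement
bcr = benefit_cost_ratio(monetized_benefit_per_participant,
                         cost_per_participant_intervention)
nb = net_benefit(monetized_benefit_per_participant,
                 cost_per_participant_intervention)

print(f"Incremental cost per mm Hg reduced: ${cost_per_mmhg:.2f}")
print(f"Benefit-cost ratio: {bcr:.2f}; net benefit: ${nb:.2f}")
```

The cost-effectiveness ratio keeps the outcome in its natural unit (mm Hg), whereas the cost-benefit summaries require the outcome to be converted to dollars first, which is why the two analyses answer different questions.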

Academic and community dissemination of research and impact

Dissemination is defined as “an active approach of spreading evidence-based interventions to the target audience via determined channels using planned strategies” (Rabin & Brownson, 2012, p. 26), whereas implementation is defined as “the process of putting to use or integrating evidence-based interventions within a setting” (Rabin & Brownson, 2012, p. 26). Central to the work of the Center was the study of the two collaborative intervention projects (SFF and CC). Each project had research questions related to the clinical effectiveness of the intervention being delivered and factors influencing the intervention’s implementation within community-based settings. As a result, each project PI worked with the DISC PI to transform the studies into effectiveness-implementation hybrid trials (Curran et al., 2012). Implementation-specific research questions were added to test both the intervention’s effectiveness within a novel setting (e.g., community locations) and evaluate implementation factors that may facilitate intervention adoption, implementation, and sustainment. This integration of clinical effectiveness outcomes with implementation outcomes led the evaluation team to realize that not only were the projects themselves interventions, but the Center itself could be viewed as an intervention in the Flint and surrounding communities.

With a major goal of disseminating its research products to various academic and community stakeholders, it was essential to evaluate dissemination processes and products during every phase of Center development (Butterfoss & Kegler, 2009). This became a central goal to allow researchers and the broader community to share value and increase the effectiveness of the research conducted by the large Center initiative. The Center emphasized conducting research that was responsive to the priorities and needs of the community as a primary goal of dissemination and implementation science research (Gopichandran et al., 2016). Hence, it was critical to understand the policies, procedures, and systems that shaped the dissemination of research to both academic and community outlets. Guided by the Linking System Framework (Robinson et al., 2005), the evaluation team worked with the Administrative Core and the Dissemination and Implementation Science Core (DISC) to develop a process and set of procedures to guide the development and dissemination of products produced by FCHES (Figure 3). Furthermore, this framework will guide the DISC’s dissemination of research and community products and provide critical evaluation constructs.

Figure 3. Linking system approach to dissemination (Robinson et al., 2005).

Evaluation approach, questions, and data sources

The evaluation team developed the evaluation questions in partnership with the Administration Core, including the Center PIs. Once these questions were established, they were vetted by Core and project leaders and community partners through an ongoing and iterative process. These questions reflected the various components of the Center evaluation framework, including Center operations and effectiveness, community collaboration and partnerships, and overall Center impact. For each of the evaluation questions, data were collected and analyzed to create a Year-2 evaluation report that was shared with the Center staff and partners and informed Year-3 goals, activities, and initiatives. The evaluation report documented FCHES’s progress, informed project leadership, and refined Center processes to ensure timely and cost-effective achievement of FCHES goals and aims as well as those of the various Cores and projects. The data sources used in the evaluation were selected based on the conceptual framework (Table 2).

Table 2. Evaluation Questions and Data Sources.

Note. FCHES = Flint Center for Health Equity Solutions.

Quantitative Data

In collaboration with the DISC, a Center survey was developed to evaluate the collaboration among FCHES Cores and projects as well as overall FCHES partnership synergy. Specifically, FCHES personnel, including community stakeholders belonging to the Consortium Core, were asked whether their primary Core collaborated with other Cores and in what way (i.e., obtained consultation, provided consultation).
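One way responses to such a collaboration item could be tabulated is sketched below. The response records are invented for illustration and do not reflect actual survey data; the tuple layout is an assumption about how responses might be stored, not the format the Center used.

```python
from collections import Counter

# Hypothetical responses: (respondent's primary Core, other Core, type of collaboration).
# These records are invented for illustration only.
responses = [
    ("Administrative Core", "DISC", "obtained consultation"),
    ("DISC", "Administrative Core", "provided consultation"),
    ("Consortium Core", "DISC", "obtained consultation"),
    ("DISC", "Consortium Core", "provided consultation"),
    ("Consortium Core", "Administrative Core", "obtained consultation"),
]

# How often each directed pair of Cores reported collaborating.
pair_counts = Counter((primary, other) for primary, other, _ in responses)

# How often each type of collaboration was reported overall.
type_counts = Counter(kind for _, _, kind in responses)

# Which other Cores each primary Core reported working with.
partners = {}
for primary, other, _ in responses:
    partners.setdefault(primary, set()).add(other)

for (primary, other), n in sorted(pair_counts.items()):
    print(f"{primary} -> {other}: {n}")
for kind, n in sorted(type_counts.items()):
    print(f"{kind}: {n}")
```

Keeping the pairs directed (primary Core to other Core) preserves who reported the collaboration, which matters when one Core reports a tie that the other does not.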

Quantitative Data Analysis

Center survey data were analyzed to provide information about the types and nature of collaborations among FCHES personnel and partners. FCHES partnership synergy was also measured using adapted surveys from Schulz et al. (2003): one adapted for use with FCHES personnel and another adapted for collaborative partners. The FCHES partnership synergy survey measured six domains: general satisfaction, impact, trust, collaborative decision making, organization and structure of meetings, and communication. Each question was rated on a 1 (

Qualitative Data

FCHES Year 1 and 2 progress reports

Per NIMHD guidelines, FCHES is required to submit a progress report, which describes the grant’s scientific progress, identifies significant changes, reports on personnel, and describes plans for the subsequent budget period (U.S. Department of Health and Human Services, National Institutes of Health, n.d.). The evaluation team reviewed and analyzed these reports to understand Center operations, progress, and impact.

Center meeting minutes and attendance

Each month, FCHES holds Center leadership meetings that are attended by Center leadership, key personnel, and representatives from consortium partners. The agendas, minutes, and attendance for these meetings were a primary source of data for the evaluation team and were used to help evaluate the alignment of Center activities to Center goals, collaboration with community partners, operations, and Core and project progress.

Core and project one-on-one meetings with the evaluator

During Years 1 and 2 of the project, the Center evaluator held meetings with the Center PIs to review project milestones and discuss barriers to progress. The Center evaluator then shared commonly reported barriers with the Administrative Core to develop action plans to support the Cores and projects.

PDPP development and implementation

The Product Dissemination Policies and Procedures (PDPP) was created with input from each Core and was used to promote the scientific and technical accuracy of FCHES publications and ensure that fair credit was given to the authors and other contributing individuals. Also, a Product Tracker in SharePoint (list feature) housed necessary information about each product, including the author, title, type of product, and the intended audience.

Informal feedback from people using the PDPP

As the use of the PDPP grew, staff from the DISC and Administrative Core elicited feedback from FCHES collaborators to continue to refine and improve the product submission process. These informal communications resulted in the addition of a frequently asked questions sheet to clarify common questions and enhanced infographics to provide a visual representation of the submission steps. The Product Tracker was an effective method of encouraging communication between Cores because of its accessibility and comprehensive inclusion of publications and products to both academic and community audiences.

FCHES website

The Center website provided an overview of the Cores and projects by listing their goals and responsibilities. The website was designed to be a “living site” in which pages were updated as progress was made to keep the community aware and engaged. One of the goals for the website was to be community-friendly so that non-researchers could easily understand what FCHES was and what it aimed to do in the community. The Center evaluator used the website to keep track of the overall aim of FCHES and ensure milestones were set to meet the aim. Reports from Google Analytics were used to understand how many users interacted with the website and how many were new or returning users.

Observations of center activities

To understand Center operations and community collaborations, the Center evaluator observed various Center operations and activities, including monthly Center meetings, Core and project leader meetings, and the yearly Center retreat. During these activities, the Center evaluator took detailed notes that were analyzed using qualitative coding. These notes were used to document how Center operations were being implemented to ensure the destination was clearly defined to all stakeholders, including roles and responsibilities. The nature of collaboration between community partners and Center staff and how the Center moved toward its intended aims and goals were also documented.

Core and project milestones and reports

Critical to the evaluation of overall Center progress was the development and implementation of a systematic milestone tracking system. Milestones are defined as critical benchmarks that are needed for the completion of an aim or goal. Each Core and project worked with the Center evaluator to establish monthly milestones that were based on its specific aims and reflected the work of the individual Core or project. Hence, these milestones were not uniform but were dynamic to match each Core and project’s goals as the work evolved. Examples of the kind of milestones that were tracked included the following: “conduct partner survey to determine preferred methods for sharing and receiving information,” “conduct systematic review activities, including article selection, review, and coding,” and “get input on data and measures index from every Core.” The milestone tracking process consisted of identifying key metrics, collecting monthly data using an electronic data collection survey, developing milestone reports that were reviewed by the evaluation team and Administrative Core, and providing feedback and support based on milestone tracking. In addition to tracking the progress toward milestone completion, Core and project leaders also recorded barriers to progress. The evaluation team created a list of barriers to progress in consultation with the DISC (Appendix 1) to record and address any barriers that could impede not only the progress of the Cores and projects but also overall Center progress.

Integrated Qualitative Data Analysis

After each data source was collected, carefully reviewed, and analyzed, an inductive qualitative analysis process was used to develop themes that emerged across the various data. This process began with coding the qualitative data using a start list of codes developed by the Center evaluator. The start codes were created using key concepts from the various theoretical models that composed the evaluation framework. After the data were coded using these codes, a second level of codes (pattern codes) was developed and used to identify preliminary themes and assertions that answered the evaluation questions. In addition to employing these coding strategies, the evaluator used memos to organize, summarize, and synthesize the data and emergent themes, which centered on six focus areas. The areas of focus for the Year-2 evaluation were (1) activity alignment and milestone tracking, (2) interproject/Core collaboration, (3) community–academic partnership and collaboration, (4) facilitators and barriers to progress, (5) personnel and resource allocation and management, and (6) overall impact of FCHES.

Summary of Year-2 Results: Pilot

During Year 2, the FCHES evaluation framework was finalized and used to shape the Center’s evaluation. Hence, the evaluation team used this emerging framework to conduct a pilot evaluation of four domains (Center operations, resource allocation and utilization, community–academic partnerships, and dissemination of research) that were essential to understanding to what extent FCHES was meeting its goals, specifically during the formation stage. The results presented in this section represent the major findings of the Year-2 evaluation. They are presented in a way that reflects the themes that emerged from the data analysis.

Overall center progress

During the initiation phase (Years 1 and 2), FCHES moved steadily toward achieving its aims and goals. Year 1 was spent building the Center’s infrastructure, hiring and onboarding project personnel, conducting meetings, and creating strategic university and community partnerships. Since Year 1 was truncated, many of these infrastructure-building activities were continued in Year 2. Year 2 was characterized by securing personnel and community partnerships, building the Core and project teams’ momentum, and engaging in activities aligned with their aims and goals. The two multilevel intervention projects completed institutional review board approvals and needs assessments and are now concluding the stakeholder and participant recruitment essential to the projects’ full implementation in Year 3. This was done despite significant contextual changes that impacted the SFF project. The Center’s activities became more streamlined to align more closely with its overall aims and goals, with a focus on equitably allocating the Center’s human and financial resources, refining milestones and project plans for Cores and projects, sustaining and empowering community partnerships, increasing visibility and research dissemination, and providing training and development opportunities to Center staff and community partners.

Milestone tracking system

During Year 2, FCHES refined and launched its electronic milestone tracking system in Research Electronic Data Capture to support Cores and projects in tracking their progress toward their major goals and objectives. Developing an electronic milestone tracking system was central to helping the projects and Cores strategically align their work to their goals and delineate milestones from tasks and activities. This clarity has been essential to advancing the Center’s progress and empowering the staff to maximize their efforts and resources.

Interproject collaboration and community partnership building

Many of the activities in Year 2 were centered on developing procedures for cross-Center and community collaborations that would support the effective implementation of the two multilevel interventions. To that end, community leaders and university researchers collaborated to expose the community to the intervention projects, develop strategic relationships with community members and leaders, and engage these community partners in all phases of the work of the Center. Specifically, having a community coinvestigator for each project and Core was vital to the collaborative model driving the Center’s work. The Consortium Core created structures including subcommittees and information distribution processes that facilitated community partner feedback.

Center outreach and dissemination

During Year 2, the Center engaged in many activities designed to increase public exposure, community engagement, participant recruitment, and research dissemination. Data revealed that over the past 18 months, the Center expanded its exposure through its website, consortium partnership meetings, Center kickoff event, and other community activities. Community members were engaged through frequent meetings with Consortium partners and other stakeholders. The DISC was instrumental in ensuring that both projects were effective hybrid Type II implementation studies. The project leadership team engaged in many strategic meetings that raised awareness of the Center and increased its impact on local public health initiatives. Both intervention projects had started recruiting participants and were on target to reach their participant goals within the following 6 months.

Impact of FCHES on Flint and region

Data from Year 2 suggest that FCHES has had a tremendous impact on workforce development, public health research infrastructure, and public health advocacy. To date, the Center has roughly 68 people engaged in Center-related activities, of which have received some form of training and compensation from the Center. Many of those employed by FCHES have had little to no formal training in public health research. Hence, one of the major impacts of the Center has been providing workforce training and building research capacity in Flint. The FCHES coalition has been visible in the local community, and its members have leveraged their individual and collective knowledge and expertise to shape policies and influence various community and government stakeholders.

Economic evaluation

Year-2 activities focused on developing plans and procedures to support the envisioned economic impact, cost-benefit, and cost-effectiveness analyses. Specifically, the Center and project leaders worked on refining the interventions’ aims and the primary and secondary outcomes in alignment with the overall Center goals. The leaders and the health economist collaborated on developing overall Center and project-specific economic analyses supported by the overall Center and project goals and the assessed costs and outcomes. The activities of Year 2 were vital for progressing toward the final economic evaluation of the overall Center and the SFF and CC projects.

Research and community products dissemination

During Year 2, the DISC helped cocreate a tool to review nonacademic and community-focused products. This was an important step in further communicating FCHES’ commitment to providing understandable results to various audiences. The tool was designed to be used by Consortium Core members to provide feedback on the appropriateness and suitability of products for community comprehension and acceptability. The creation of this tool highlighted the unified partnerships within the Center, where equal emphasis is placed on all audiences at all levels.

Data from Year 2 suggest that the number of publications and products increases as the Center and its associated interventions develop and collect data. Future data will include the number of products that were reviewed and the resulting number of academic presentations, academic peer-reviewed publication submissions, community-based presentations, and community-based products distributed to community stakeholders. Additional data will be collected related to any facilitators or barriers related to the PDPP and submission process.

Discussion

The evaluation report for Years 1 and 2 indicated progress made, challenges, and recommendations for improvement. In this section, we discuss the results and lessons learned from our pilot implementation of the evaluation and future directions for FCHES evaluation, particularly implementing the evaluation framework for Year 3 and beyond. Further, we provide recommendations as to how other transdisciplinary Centers can adopt this framework to evaluate their work.

Center Evaluation Distinct From Program Evaluation

As stated earlier, evaluating collaborative Centers is distinct from evaluating programs (Trochim et al., 2008). Hence, the Center evaluation must consider all components involved (projects, personnel, resources, collaboration, etc.) instead of evaluating a single program and its outcomes. A Center has many moving parts that are essential to its overall success. Previous evaluation of large research Centers has shown that both scientific and administrative management are important for Center success (Nass et al., 2003). This was also the case at FCHES, where the Administrative Core was responsible for the overall leadership and worked closely with other members (i.e., Center Steering Committee and Data Safety and Monitoring Board) to ensure the projects were moving toward the end goal. The Administrative Core recognized a need for Center evaluation and hired a Center evaluator. The evaluator joined Center meetings to gain insight into the Center’s overall aim and work and reviewed monthly milestone reports that brought attention to any barriers to progress. Based on this experience, the evaluator created a framework that could be used to evaluate Centers.

The framework is innovative in that it accounts for all major components of a Center (demonstrated in the four domains of the framework mentioned earlier). This framework can be applied to other Centers and be used as a guide for evaluators examining work beyond a project or program. As transdisciplinary science and collaborative research Centers grow, evaluation is needed to continue refining Center goals and impact. Future research should seek evaluation models that fit large research initiatives such as Centers and organizations. The evaluation team is currently using this framework to evaluate the overall Center goals, aims, and outcomes. Once this framework is tested on FCHES, the results will be disseminated and shared with the larger research community, along with recommendations based on how the framework was improved or refined as a result.

Evaluation Through the Phases

As other scholars have suggested, the evaluation of large initiatives evolves as the Center moves from one phase of development to another (Gajda & Koliba, 2008). Transdisciplinary Centers go through various development stages, and when adopting a framework for evaluating a Center, the framework must be adaptable and useful for assessing progress at multiple stages. As a team, we are creating metrics and outcomes that can be used to determine progress at various phases of Center development (formation, execution of activities, and sustainment). The Year-2 evaluation reflected outcomes that were critical to the initial formation of FCHES. However, in Year 3 and beyond, one goal is to use this framework to target specific data sources, metrics, and outcomes that will be used to measure progress during the Center’s execution-of-activities and sustainment phases.

Lessons Learned

In the process of developing the Center evaluation framework, there were several lessons learned. First, the evaluator’s role was meant to be external to the Center and to evaluate whether overall aims and goals were met. However, the evaluator became very involved in the Center by attending meetings and participating in the Center Steering Committee, which reviewed quarterly progress and had discussions around Core and project barriers to progress. The milestone tracking component was led by the evaluator and was used to track the overall Center effectiveness. Reviewing milestone progress was a time-consuming project that led to more involvement of the evaluator as the programming process had to be adjusted based on newly identified needs. Second, given the scope of the project, it was important to expand the evaluation team beyond the Center evaluator. Hence, the evaluator worked collaboratively with a team that consisted of a health economist, members of the DISC, Center administrators, a graduate student, and a full-time research assistant. The members of this team supported the evaluator with developing and refining the evaluation framework, developing evaluation metrics, collecting and analyzing data, and crafting the yearly evaluation report. Much of the data used in the evaluation was being collected for multiple purposes; hence, the collection of evaluation data was not isolated but integrated in ways that were not disruptive or burdensome to the evaluator and the team. The evaluator also worked cooperatively with the Administrative Core and was given resources and support to conduct the evaluation. It was important to draw on the expertise of Center leadership and others to conceptualize and implement the evaluation work; hence, we encourage other Centers that are conducting similar work to draw on the expertise of key Center stakeholders to evaluate their TCCs and to collect, on an ongoing basis, data that can be used for multiple purposes.

Expanding the Use of the FCHES Center Survey

When the Center survey was initiated in Year 1, this instrument’s primary goal was to understand the nature of collaboration between Center staff and its community partners. Only select personnel completed the survey, and survey completion was voluntary. We learned that this approach did not optimize the use of this instrument and that it needed to be refined so that it could be used to gather other important data. We also needed one survey for FCHES personnel and a separate survey for our community partners. Therefore, the Center survey was revised, and Center staff and community partners were required to participate in the survey for Year 3. The survey is administered every 6 months and used to understand Center operations, community collaborations, and overall Center impact and to inform the overall direction.

The Evolution of a Center Evaluator Role

The TCC solicitation did not require a Center evaluator role; however, FCHES architects created this role to ensure the successful completion of the stated aims. Although the evaluator’s role was articulated in the proposal and was primarily viewed as an external function, this role evolved and became an integral part of overall Center operations. This evolution was driven by the Center’s needs and the valuable perspective provided by the evaluator, particularly in defining and refining project and Core objectives, linking activities to goals and outcomes, developing and refining milestones and the milestone tracking system, and supporting the annual reporting process. Through our experience, we learned the usefulness of having an evaluator and how ongoing evaluation is critical to supporting the Center in assessing the effectiveness of the projects and the overall Center. Specifically, the evaluator provided ongoing substantive feedback to Center PIs and Core and project leaders, which helped the leadership maintain a big-picture view of the Center and streamline its activities. It was through this work that the need for an evaluation framework was articulated and eventually developed to fill the gap in the literature that explores how large TCCs can be assessed. From our experience, we learned that other similar Centers should consider creating this role and a team to support the evaluation while allowing the role to evolve and expand based on the needs of the Center, its leadership, and its stakeholders.

Adapting FCHES Framework to Other Transdisciplinary Centers

When the Center evaluator was given the charge to evaluate the overall Center, there were very few models that could be used to evaluate FCHES. As cited earlier, there was scant literature on how to think about transdisciplinary Centers and the various components essential for the success of a large multidisciplinary initiative. Much of the evaluation literature focused on evaluating initiatives or programs, not overall Center effectiveness. The few studies that did provide a framework for transdisciplinary Center evaluation were not appropriate or comprehensive enough to be used to evaluate a collaborative Center. Given the aims and goals of FCHES, the evaluation needed to not only assess the progress of the two multilevel interventions but also be able to help understand how the overall Center was optimizing its personnel and resources to achieve its aims and goals. Simply tracking the progress of how many people participated in our programs and the individual outcomes of participants was insufficient to understand how Center operations, partnerships, and research were contributing to the elimination of health disparities in Flint and Region 5. Hence, this FCHES evaluation framework can be used by other Centers and be adapted to assess similar Centers with far-reaching and comprehensive aims and goals.

Conclusion

This report offers an evaluation framework used to measure FCHES’ progress on its overall mission and goals. The framework provides a model for the evaluation of other transdisciplinary community-based research efforts. The framework also takes into consideration the phases of establishing a new transdisciplinary collaborative research initiative. Ultimately, the framework will serve as a mechanism to evaluate Center progress and establish the value of implementing community-engaged research in a Center setting. The framework was piloted in Years 1 and 2 of FCHES and helped Center leadership identify areas requiring improvement and highlight effective policies and activities. Future work will require refinement of the measures and indicators driving the framework as it is fully implemented.