Abstract

Keywords

Introduction

The rapid technological growth of IoHT, which includes thousands of sensors in various medical devices and applications, generates and collects a large volume of data. This massive amount of data is produced by various applications, sensor networks, and small- to large-scale IoHT deployments.1,2 Big data refers to any complex, diverse, and massive body of data that traditional data processing systems are unable to handle. Initially, big data was described by three properties: volume, variety, and velocity. Later, extra attributes, including authenticity, venue, validity, lexicon, vagueness, and value, were added to provide equivalent descriptions of large data sets. However, these features frequently cause simultaneous difficulties in complex IoHT applications when storing, analyzing, and retrieving the desired findings. The act of examining a vast volume of complex data to find correlations and uncover hidden patterns is known as big data analytics.3–5

Hadoop is a free, open-source framework that allows huge volumes of data to be processed and stored in a distributed, scalable, and reliable computing environment.6,7 Hadoop is widely used in public cloud services and massive clusters because it is cost-effective, quick to process, fault-tolerant, and adaptable. The Hadoop Distributed File System (HDFS) and MapReduce 8 are the two major components of Hadoop. MapReduce allows massive troves of structured or unstructured data to be processed concurrently. To store massive amounts of data, HDFS spreads a single logical file system across many hard drives. Hadoop’s processing power is far superior to that of traditional data processing systems, but it has security issues. Because Hadoop was not built with security in mind, it does not by default protect data while it is being stored or transferred. Many firms utilise big data for research and marketing, but security considerations may be overlooked.9,10 A data breach would harm the company’s brand and have legal ramifications.

Hadoop big data deployments manage financial data, personal data, business data, and sensitive information such as customer, client, and employee records. Furthermore, organisations store and analyse a large quantity of data, which must be protected in HDFS storage via encryption, threat detection, and logging measures. These strategies help frameworks to spot threats quickly and to gather client data. Big data is also characterised by its diversity: text, photos, music, and video are all examples of big data. A range of approaches have been developed in recent years to protect data during its generation and storage phases. Data falsification methods and access restrictions increase data privacy during the generation phase. Encryption techniques are mostly used to ensure data security and privacy during the storage phase. 11

The process of converting plaintext to ciphertext is known as encryption. By converting intelligible data into an unintelligible form, it secures data privacy: users with sufficient authentication are permitted access and all others are barred. The encryption keys are shared, and the decryption operation uses the same keys. Encryption remains the standard means of data protection and confidentiality. Strictly speaking, there are two types of encryption algorithms: symmetric key algorithms and public key algorithms. Existing research uses various encryption algorithms to secure massive data, including Blowfish, AES, RSA, DES, RC4, RC6, and ABE.12–14 However, when dealing with a significant volume of data in a dynamic environment, the performance of these standard encryption mechanisms degrades. As a result, developing an effective data encryption technique for securing important data becomes critical. The following are the significant contributions of this research work.
• A hybrid data encryption algorithm based on ABE and Blowfish is proposed.
• The proposed encryption method is compared with other hybrid encryption techniques, namely CP-ABE + HE, HE + BF, and CP-ABE + AES.
• The effectiveness of the proposed ABE + BF is measured in terms of encryption time, decryption time, throughput, and file size.
• The results show that ABE + BF reduces the total time taken for encryption and decryption.

The rest of the paper is organized as follows: Section 2 briefly reviews related work; Section 3 presents the proposed method and materials; Section 4 contains the results and discussion; and Section 5 contains the conclusion.

Related works

This section briefly reviews the literature on big data security and encryption techniques. Using various encryption algorithms, researchers are working to improve the security and privacy features of big data applications.15–17 In the homomorphic cryptography and secure network protocol design introduced in 18, a privacy-preserving auction process is used to improve data confidentiality and trust between the client and the third-party service provider. Homomorphic encryption is used to ensure the security of data transfers between users and third-party service providers. For increased data security, the approach has been extended to include signature-based verification. However, as the number of users and the file sizes grow, the system’s performance degrades.

The fully homomorphic encryption scheme suggested in 19 overcomes internal and external information security gaps and threats in the big data environment. The sophisticated cryptosystem examines the tasks and divides the processing and data into two parts. This procedure greatly increases the system’s data processing capability while ensuring high accuracy. Reference 20 describes a comprehensive data encryption and compute acceleration process that can handle various circumstances. For big data encryption, it provides a heterogeneous crypto-acceleration secure data storage framework that aggressively optimises the operating modes used for big data files. When compared to existing software and hardware accelerators, the study’s findings offer a superior tradeoff.

The data analysis methodology proposed in 21 uses encryption to handle the privacy issues connected with transmitting health information. A patient-centric data access control model addresses privacy concerns in health information as well as the need for data encryption. The suggested RSA-based scheme encrypts the patient file and distributes it over many domains. Though the encryption paradigm efficiently addresses security and privacy concerns, data transmission requires numerous domains, which raises the system cost compared with alternative data transmission technologies.

The novel encryption technique presented in 22 ensures information privacy in massive data streams; its probabilistic trapdoors are designed to withstand attacks and improve data security. The reliability of the received information is determined by the security properties of integrity and privacy. The designers use the novel encryption to improve data flow and decryption performance while maintaining data integrity and confidentiality.

The problems of traditional cryptographic techniques were explored in the context of attribute-based encryption. 22 In a big data context, fine-grained access control is critical, and the encryption approach provides this. Flexible policies improve access control on encrypted data and increase efficiency when dealing with huge amounts of data. The results were analyzed using 10 distinct attributes, and cryptographic acceleration was used to improve performance. This encryption model’s main advantages are its low memory and processing requirements. Reference 23 describes a hybrid Attribute-Based Encryption (ABE) model that addresses the drawbacks of traditional ABE methods. As the techniques of traditional models become obsolete over time, the presented hybrid model integrates proxy re-encryption to convert ABE ciphertext into Identity-Based Encryption (IBE) ciphertext. Identity-based encryption and key randomization are used to increase security and prevent data collisions.

Gaytri et al. proposed a hybrid model that secures big data while providing fine-grained and flexible access control for healthcare records. Their encryption strategy uses CP-ABE in conjunction with the Honey Encryption (HE) method to encrypt each sensitive public healthcare document. The proposed approach provides secure data transmission and confidentiality of healthcare data. Extensive analytical and experimental results are presented that reflect the efficiency of the proposed approach. 24

The authors developed a combined approach for securing messages against brute-force attacks, proposing a secure messaging system that uses Honey Encryption, a powerful symmetric key encryption technique, and an Android-based device. A comparison between Honey Encoding with AES and Honey Encoding with Blowfish is also conducted. The study reveals that Blowfish produces the best results with Honey Encryption when compared to AES, since Blowfish requires less processing time. 25

T. Mohanraj proposed a hybrid encryption algorithm to secure big data. The author compared the performance of the technique with that of the traditional techniques available for securing data with respect to encryption time, decryption time, throughput, and efficiency. 26

Recent studies, such as that of Riaz H. et al., have reported notable progress in protecting healthcare information within IoT and IoHT environments. For instance, recent work on robust steganography techniques for safeguarding medical records highlights a growing focus on embedding security features directly into the data, thereby reducing the risk of unauthorized access and manipulation. These developments show the importance of applying layered protection mechanisms that complement but are not limited to conventional encryption.

In addition, the extensive review of federated learning for smart healthcare by Syed Raza Abbas et al. illustrates the importance of privacy-preserving data processing, secure model training, and distributed analytical frameworks in IoT-based systems. As healthcare organizations generate and manage increasingly large datasets, the need for secure computation and decentralized protection measures becomes more evident, especially for maintaining privacy and preventing data leakage.

The study in 27 introduces a secure and robust data sharing approach called Blowfish Hybridised Weighted Attribute-Based Encryption (BH-WABE) for secure data writing and effective access control. Each attribute is assigned a weight based on its importance, and data is encrypted using access control policies. An attribute authority assigns distinct attributes based on their weight and revokes or updates them, while the cloud service provider stores the outsourced data. To reduce the computational cost, the recipient recovers only the data file that matches its weight. In terms of security, reliability, and efficacy, the suggested BH-WABE offers collision resistance, multi-authority security, and fine-grained access control.

Taken together, these findings demonstrate that healthcare IoT systems require security solutions that are integrated, adaptable, and able to scale with data growth. In response to these challenges, the present work introduces a hybrid cryptographic framework aimed at improving confidentiality, integrity, and overall data protection within Big Data-driven IoHT applications. According to the literature, the key management and authentication processes linked with cryptographic systems are especially difficult for advanced data administration. While homomorphic encryption advances information security, the encryption cycle is slow and inefficient. Fully homomorphic encryption, on the other hand, improves data privacy and usability, but it is unsuitable for big data applications because of its slow computation speed and accuracy problems. Although RSA-based encryption is more authentic and secure, it is slow when processing huge volumes of data. For ordinary data, traditional encryption techniques work well; with the vast volume and variety of big data, however, they take longer to compute. The adoption of hybrid encryption models enhances encryption performance, although there is still room for improvement. Previous studies have compared their proposed methods only with traditional techniques and not with other hybrid methods, so their results do not establish whether one hybrid method is better than another. To close this gap, the authors propose a hybrid method and compare its performance with that of previously available hybrid methods. Based on this review, hybrid encryption solutions appear to be a superior option for improving big data security.

In light of these facts, our research recommends a hybrid encryption technique for big data security in a distributed file system context that delivers excellent performance while needing little processing.

Proposed method and materials

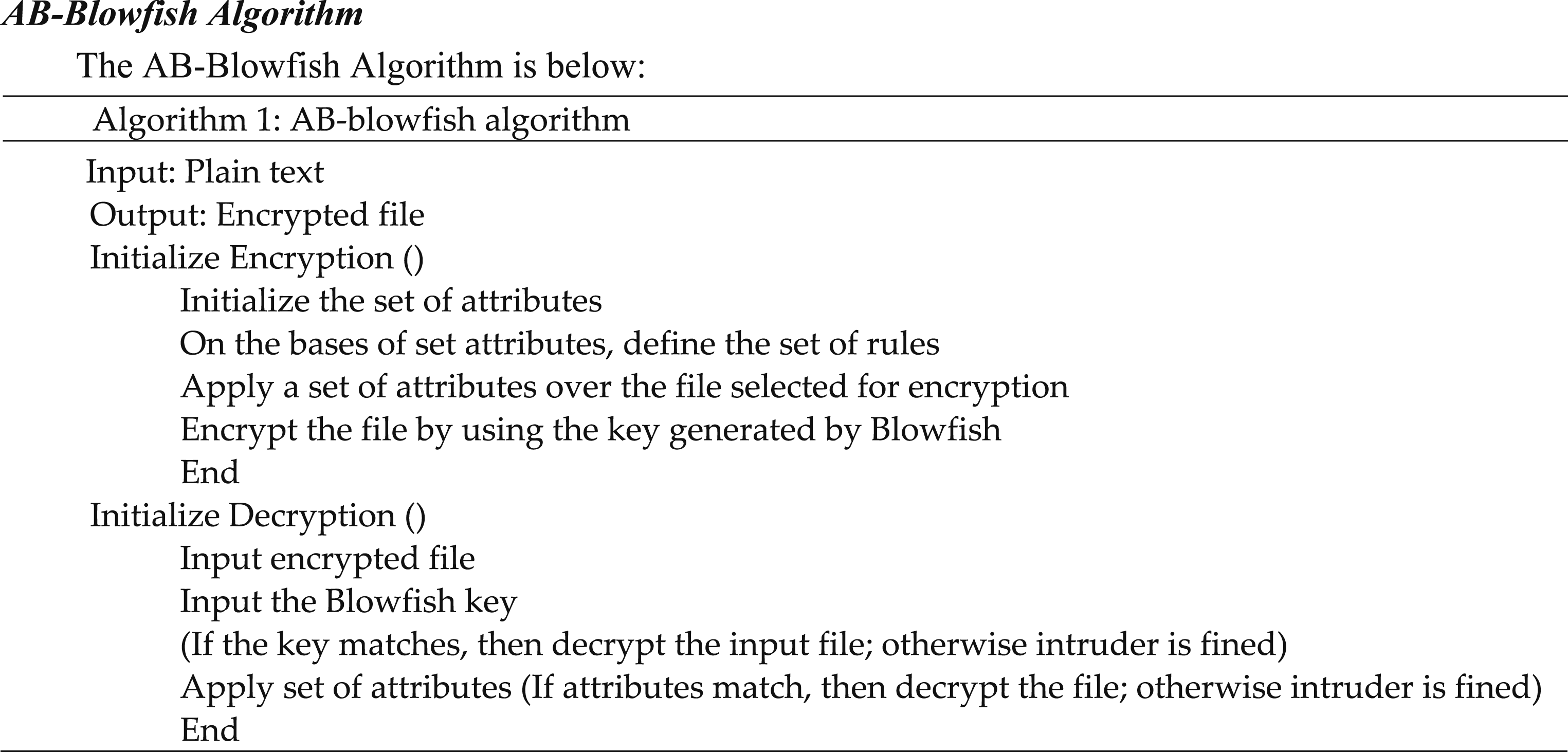

The hybrid encryption approach relies on the distinct strengths of its two components. ABE is employed to enforce fine-grained access control by encrypting and distributing the symmetric key according to predefined user attributes and roles. This ensures that only authorized entities can obtain the key needed for decryption. Blowfish is then used to protect the actual medical data because it offers fast processing, low memory requirements, and stable performance on resource-limited IoT devices. By separating access control from bulk data encryption, the scheme introduces an additional layer of protection that limits unauthorized access, reduces the risk of key misuse, and enhances overall resilience against common threats in healthcare IoT systems. To secure massive data stored in HDFS, the proposed hybrid encryption technique combines the ABE and Blowfish algorithms. The double encryption procedure delivers stronger data protection than typical single-algorithm encryption approaches.
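The separation between access control and bulk encryption described above can be sketched as follows. This is a minimal illustration of the data flow only, and every name in it is hypothetical. The cipher is a toy XOR keystream that stands in for Blowfish, and the key wrapping is a plain attribute check that stands in for ABE; neither stand-in offers real security, and a real deployment would use vetted implementations of both algorithms.

```python
import hashlib
import secrets


def toy_stream_cipher(key: bytes, data: bytes) -> bytes:
    """Stand-in for Blowfish: XOR with a SHA-256 counter keystream.

    Toy code that only illustrates the data flow; it is NOT Blowfish
    and must not be used to protect real data.
    """
    out = bytearray()
    for offset in range(0, len(data), 32):
        pad = hashlib.sha256(key + offset.to_bytes(8, "big")).digest()
        out.extend(b ^ p for b, p in zip(data[offset:offset + 32], pad))
    return bytes(out)


def wrap_key(data_key: bytes, required_attrs: set) -> dict:
    """Stand-in for ABE: bind the data key to a set of required attributes."""
    return {"policy": frozenset(required_attrs), "key": data_key}


def unwrap_key(wrapped: dict, user_attrs: set) -> bytes:
    """Release the data key only if the user holds every required attribute."""
    if not wrapped["policy"] <= set(user_attrs):
        raise PermissionError("attributes do not satisfy the policy")
    return wrapped["key"]


def protect(record: bytes, required_attrs: set):
    """Encrypt the record with a fresh key, then wrap that key."""
    data_key = secrets.token_bytes(16)
    ciphertext = toy_stream_cipher(data_key, record)
    return ciphertext, wrap_key(data_key, required_attrs)


def recover(ciphertext: bytes, wrapped: dict, user_attrs: set) -> bytes:
    """Access-control step first, bulk-data step second."""
    data_key = unwrap_key(wrapped, user_attrs)
    return toy_stream_cipher(data_key, ciphertext)


ciphertext, wrapped = protect(b"heart rate: 72 bpm", {"doctor", "cardiology"})
print(recover(ciphertext, wrapped, {"doctor", "cardiology", "ward-3"}))
```

The point of the structure is that a user who fails the attribute check never obtains the symmetric key, so the bulk ciphertext stays unreadable regardless of how it was obtained.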

ABE has lately gained popularity due to its secure communication capabilities in dynamic contexts and its decentralised access management. In basic ABE, on the other hand, the encryption process cannot be governed by user-specified policies or processes. Ciphertext-policy access control is therefore introduced to give users more control over the encryption process.

Users can configure the encryption and decryption policies in addition to the attributes. Furthermore, these access control methods encrypt data during transmission and storage. ABE effectively manages client requirements and combines private and public keys into a single attribute-based concept. The attribute policies defined by ABE are derived from logical combinations of attributes. In ABE, the attributes and their logical combinations may be constructed based on the client’s needs and the application. The suggested hybrid encryption system is capable of encrypting both static and dynamic data (Figure 1).

Figure 1. Working of the proposed technique.
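To make the idea of a policy built from combinations of attributes concrete, the following sketch evaluates a nested AND/OR policy against a user's attribute set. It is an illustration under assumptions: the tuple-based policy format, the function name `satisfies`, and the attribute strings are all hypothetical, and a real ABE scheme enforces the policy cryptographically rather than with a Boolean check.

```python
def satisfies(policy, attrs):
    """Evaluate a nested policy against a set of attribute strings.

    A policy is either an attribute name (str) or a tuple of the form
    ("AND" | "OR", sub_policy, sub_policy, ...).
    """
    if isinstance(policy, str):
        return policy in attrs
    op, *children = policy
    results = (satisfies(child, attrs) for child in children)
    if op == "AND":
        return all(results)
    if op == "OR":
        return any(results)
    raise ValueError(f"unknown operator: {op!r}")


# Hypothetical policy: a cardiology doctor, or any administrator.
policy = ("OR", ("AND", "role:doctor", "dept:cardiology"), "role:admin")

print(satisfies(policy, {"role:doctor", "dept:cardiology"}))  # True
print(satisfies(policy, {"role:doctor", "dept:oncology"}))    # False
```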

In ABE, only the attributes are encrypted, not the full block. Blowfish is a secret-key (symmetric) cipher that uses a key of 32 to 448 bits to encrypt and decrypt data. In the two-step procedure, the ABE algorithm is applied first, followed by Blowfish. If the algorithms are run independently rather than in a hybrid arrangement, ABE relies on a random private key that must be derived from the user’s attributes. If an intruder knows the attributes, the odds of reproducing the key are high.

When the Blowfish method is used on its own, all the data blocks are encrypted in the same way. This could cause problems if the intruder understands how to decrypt the data. However, Blowfish allows the user to select a key size between 32 and 448 bits. To prevent intrusions and secure the data, the ABE encryption procedure uses a secure key obtained via Blowfish instead of a random private key. The suggested hybrid encryption model’s overall process flow is depicted in Figure 1.
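As a small illustration of the variable key length, the sketch below generates random key material and rejects lengths outside the 32 to 448 bit range. The helper name `generate_blowfish_key` is hypothetical, and the snippet only produces the key bytes; handing them to an actual Blowfish implementation from a cryptographic library is not shown.

```python
import secrets

MIN_KEY_BITS = 32
MAX_KEY_BITS = 448


def generate_blowfish_key(bits: int = 128) -> bytes:
    """Return random key material of a length that Blowfish accepts."""
    if bits % 8 != 0:
        raise ValueError("key length must be a whole number of bytes")
    if not MIN_KEY_BITS <= bits <= MAX_KEY_BITS:
        raise ValueError("Blowfish keys must be 32 to 448 bits long")
    # secrets draws from the operating system's secure random source.
    return secrets.token_bytes(bits // 8)


key = generate_blowfish_key(256)
print(len(key) * 8)  # 256
```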

The technique starts by choosing attributes for the input file. The attributes are chosen based on the client’s preferences as well as logical combinations. Following the selection, a set of rules for the attributes is constructed, and encryption is performed.28,29 For two-tier security, a key created by the Blowfish method safeguards the file after it has been encrypted. During decryption, the encrypted file is used for decoding, while the Blowfish key is used for verification. If the key matches, the decryption process continues; if it does not, the attempt is flagged as an intrusion and the decryption process terminates.30,31 The decrypted file is plaintext, which can be used in the requesting application.
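The verification gate described above can be sketched as a check that runs before any decryption is attempted. The text does not say how the key match is implemented, so this sketch makes an assumption: a SHA-256 fingerprint of the key is stored alongside the file and compared with the fingerprint of the presented key. The function names and the logger name are hypothetical.

```python
import hashlib
import hmac
import logging

log = logging.getLogger("hdfs-guard")  # hypothetical logger name


def key_fingerprint(key: bytes) -> bytes:
    """Digest stored next to the file so the key itself is never stored."""
    return hashlib.sha256(key).digest()


def open_for_decryption(stored_fingerprint: bytes, presented_key: bytes) -> bool:
    """Return True if decryption may proceed; otherwise log an intrusion."""
    candidate = key_fingerprint(presented_key)
    # compare_digest runs in constant time, which avoids timing leaks.
    if hmac.compare_digest(stored_fingerprint, candidate):
        return True
    log.warning("intrusion: key mismatch, decryption aborted")
    return False


stored = key_fingerprint(b"correct-key")
print(open_for_decryption(stored, b"correct-key"))  # True
print(open_for_decryption(stored, b"guessed-key"))  # False
```

Failing closed, with the mismatch recorded, gives the logging and threat detection measures something to act on.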

The following are the steps involved in the encryption process.
Step 1: The HDFS client uses the distributed file system to communicate with the master node.
Step 2: The master node receives the distributed file system request, which contains a request to create a new file.
Step 3: The master node identifies and picks the data node with the greatest available space.
Step 4: The distributed file system transfers the data from the data nodes to the HDFS client.
Step 5: Before encrypting the file, the HDFS client sends the selected attributes.
Step 6: To secure the encrypted file, a Blowfish key is generated and applied to it.
Step 7: Using the distributed file system output data stream, the write operation begins from the client to the specified data node.
Step 8: When the write is complete, the data in the current node is replicated to another node.
Step 9: During replication, the master node keeps track of the current data node and the replication data node.
Step 10: The distributed file system delivers an acknowledgement to the HDFS client after the data has been successfully duplicated in the secondary data node.
Step 11: When the HDFS client receives the acknowledgement, it stops writing.
Step 12: The writing process is complete once the HDFS client’s acknowledgement has been received.
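The node selection, write, replication, and acknowledgement steps above can be mimicked with a small in-memory simulation. This is a toy model under stated assumptions, not the HDFS API: the `DataNode` class, the `pick_nodes` and `write_file` functions, and the replica count of two are all hypothetical, and block placement in a real HDFS cluster is more involved than choosing the emptiest nodes.

```python
from dataclasses import dataclass, field


@dataclass
class DataNode:
    name: str
    free_mb: int
    blocks: dict = field(default_factory=dict)


def pick_nodes(nodes, size_mb, replicas=2):
    """Master-node role: choose the nodes with the most free space."""
    candidates = [n for n in nodes if n.free_mb >= size_mb]
    if len(candidates) < replicas:
        raise RuntimeError("not enough data nodes with free space")
    return sorted(candidates, key=lambda n: n.free_mb, reverse=True)[:replicas]


def write_file(nodes, path, encrypted: bytes, size_mb: int):
    """Write to the primary node, replicate, then acknowledge."""
    primary, *secondaries = pick_nodes(nodes, size_mb)
    for node in (primary, *secondaries):
        node.blocks[path] = encrypted
        node.free_mb -= size_mb
    return {"ack": True, "primary": primary.name,
            "replicas": [n.name for n in secondaries]}


cluster = [DataNode("dn1", 500), DataNode("dn2", 900), DataNode("dn3", 700)]
print(write_file(cluster, "/records/p001.enc", b"<ciphertext>", size_mb=100))
```

In this model the client would encrypt the file before calling `write_file`, so the data nodes only ever hold ciphertext.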

The following are the steps involved in the decryption process.
Step 1: The HDFS client uses the distributed file system to communicate with the master node during decryption.
Step 2: The master node receives the distributed file system request, which comprises a request to read a file.
Step 3: The data node gets information from the master node, such as the location of the encrypted files.
Step 4: The HDFS client starts the process by reading data from the chosen block through the file system data input stream.
Step 5: A key match is performed for verification, and if it succeeds, the client supplies the attributes to decrypt the data.
Step 6: If the matching process fails, the access is reported as unauthorised or intrusive.
Step 7: When the acknowledgement is received, the HDFS client stops reading.
Step 8: The reading operation is complete once the HDFS client’s acknowledgement has been obtained.

As shown in the preceding steps, the proposed model’s encryption and decryption approach provides enhanced security for vast amounts of data during HDFS write and read operations. The algorithm for the proposed healthcare big data security paradigm is as follows.

Result and discussion

Simulation parameters.

Encryption time using ABE + BF

The encryption time is the time taken to produce the ciphertext. According to Figure 2 and Table 2, the suggested model takes the least time to process all of the files. As file size increases, so does encryption time. For the maximum file size of 1 GB, the encryption time is around 6.8 min, whereas CP-ABE + HE, HE + BF, and CP-ABE + AES need 17.7 min, 13.6 min, and 11.0 min, respectively, to encrypt a file of the same size. The proposed hybrid encryption approach encrypts data in an average of 7.1 min, which is 14 min quicker than the Blowfish algorithm, 16 min faster than the 3DES algorithm, and 8.5 min faster than the DES algorithm.

Figure 2. Encryption time analysis.

Table 2. Encryption time (in minutes).

Decryption time using ABE + BF

The decryption time is the time taken to transform ciphertext into plaintext, as shown in Figure 3. According to the analysis, the proposed model’s decryption time is much lower than that of the other encryption algorithms. Decryption time is almost identical to encryption time, taking about 5.7 min to decrypt 1 GB of data. The CP-ABE + HE, HE + BF, and CP-ABE + AES algorithms take 18.8, 14.7, and 9 min, respectively, which is significantly longer than the proposed model. The suggested solution achieves an average decryption time of 5.7 min, which is 13.1 min faster than CP-ABE + HE, 9 min faster than HE + BF, and 3.3 min faster than the CP-ABE + AES hybrid algorithm. The time taken by the different algorithms, measured in minutes, is shown in Table 3.

Figure 3. Decryption time (minutes).

Table 3. Decryption time (minutes).

Throughput

For both the encryption and decryption procedures, the throughput is determined as depicted in Figures 4 and 5, respectively. The throughput of encryption is calculated by dividing the size of the text by the time it takes to complete the procedure. Decryption throughput is calculated by dividing the total cipher text by the time it takes to decrypt it. The encryption and decryption throughputs are presented in Table 4 and Table 5 respectively.

Figure 4. Throughput of encryption.

Figure 5. Throughput of decryption.

Table 4. Throughput of encryption.

Table 5. Throughput of decryption.
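The throughput definition is a single division, sketched here as a worked example. The function name is hypothetical, the times are the 1 GB encryption times quoted in the encryption-time discussion, and treating 1 GB as 1024 MB is an assumption. The printed values describe the 1 GB data point only; the throughputs reported in the text are stated as maxima, so they need not coincide with these.

```python
def throughput_mb_per_min(size_mb: float, minutes: float) -> float:
    """Throughput = amount of data processed / time taken."""
    if minutes <= 0:
        raise ValueError("time must be positive")
    return size_mb / minutes


SIZE_MB = 1024  # assumption: 1 GB counted as 1024 MB

# Encryption times (minutes) for the 1 GB file, as quoted in the text.
encryption_minutes = {
    "ABE + BF": 6.8,
    "CP-ABE + HE": 17.7,
    "HE + BF": 13.6,
    "CP-ABE + AES": 11.0,
}

for scheme, minutes in encryption_minutes.items():
    print(f"{scheme}: {throughput_mb_per_min(SIZE_MB, minutes):.1f} MB/min")
```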

The proposed encryption algorithm had the highest throughput of the compared approaches owing to its smaller file size and shorter computation time. For the other methods, in contrast, the text size increased and encryption and decryption took longer. As a result, the throughput and performance of the other hybrid encryption methods were lower than those of the proposed encryption algorithm.

The suggested approach achieves maximum throughputs of 351.23 MB/min and 355.83 MB/min in the encryption and decryption processes, respectively. In the encryption process, the throughputs achieved by the CP-ABE + HE, HE + BF, and CP-ABE + AES algorithms are 95.92 MB/min, 126.6 MB/min, and 166.9 MB/min, respectively. The corresponding decryption throughputs are 90.96 MB/min, 117.23 MB/min, and 208.53 MB/min, respectively.

Efficiency of intrusion detection

The intrusion detection effectiveness of the proposed model and of the other models is evaluated for several different scenarios, and the results are compared in Figure 6 and Table 6. All six cases are produced by altering the system’s encrypted data, that is, by changing values in the encrypted text. In a few cases the alterations are made in the initial region of the encrypted files, while in others they are made in the middle and at the end of the encrypted files.

Figure 6. Intrusion detection accuracy.

Table 6. Intrusion detection accuracy.

The intrusion detection results included in this study are intended to evaluate the system’s ability to identify unauthorized modifications to encrypted data. The artificial intrusion instances were created to test integrity validation within the encryption process rather than to simulate complex attack scenarios. Therefore, the reported efficiency values (90%–96%) reflect detection accuracy for data tampering events.

These cases make it possible to observe how both the proposed and the existing models respond to a variety of intrusions. The results show that detection efficiency is highest for changes in the initial region, where deviations are detected as intrusions by decrypting the text and comparing it to the genuine text. Changes made in the middle and end portions, on the other hand, take longer to detect because decryption is done block by block. As a result, the detection time for these cases is longer, resulting in lower detection efficiency. Because of the hybrid encryption and decryption techniques, the detection efficiency of the proposed model exceeds that of all the other models.
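The effect of block-by-block processing on detection time can be illustrated with a short scan that reports how many blocks must be examined before a change is found. This is a sketch under assumptions: it compares raw bytes instead of decrypting and comparing text, the function name is hypothetical, and the 8-byte block size is chosen to match the 64-bit block size of Blowfish.

```python
BLOCK_SIZE = 8  # bytes; assumption matching Blowfish's 64-bit block


def first_tampered_block(reference: bytes, received: bytes,
                         block_size: int = BLOCK_SIZE):
    """Scan block by block; return (index, blocks_checked) of the first mismatch.

    Returns (None, blocks_checked) when every block matches.
    """
    longest = max(len(reference), len(received))
    total = (longest + block_size - 1) // block_size
    for i in range(total):
        start = i * block_size
        end = start + block_size
        if reference[start:end] != received[start:end]:
            return i, i + 1
    return None, total


genuine = bytes(range(64))                   # 8 blocks of stand-in data
for label, pos in [("start", 0), ("middle", 32), ("end", 63)]:
    altered = bytearray(genuine)
    altered[pos] ^= 0xFF                     # flip one byte
    print(label, first_tampered_block(genuine, bytes(altered)))
```

A change near the start is caught after one block, whereas a change near the end is caught only after every block has been checked, which is consistent with the longer detection times described for the middle and end regions.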

Efficiency comparison.

Compared to previous encryption algorithms, the suggested hybrid encryption model achieves the highest efficiency. The efficiency of the hybrid algorithms is compared in Table 7.

Performance analysis.

Table 8 compares the proposed model with the CP-ABE + HE, HE + BF, and CP-ABE + AES algorithms in terms of overall performance and shows the average values for all of the parameters. The findings reveal that the suggested hybrid encryption algorithm outperforms the compared techniques in all respects, from small to large data sizes. As a result, the security of big data is improved. The proposed encryption framework can be seamlessly deployed across IoHT architectures, including resource-constrained wearable and sensor-based medical devices. Its lightweight design enables secure data handling at both the sender and receiver ends within healthcare service environments, ensuring end-to-end protection of clinical information during acquisition, transmission, and storage.

Conclusion

In this study, a novel hybrid encryption approach is proposed to enhance the security of large-scale data within the Hadoop Distributed File System (HDFS) environment. The technique integrates Attribute-Based Encryption (ABE) with the Blowfish algorithm to provide a dual-layer protection mechanism for files stored in the HDFS data nodes. Specifically, selected attributes of the input files are encrypted using ABE, while the key generated by the symmetric Blowfish algorithm is utilized as an access credential for the encrypted data. This layered method strengthens data confidentiality and enables the detection of unauthorized access attempts during the decryption process.

The performance of the proposed algorithm was evaluated using key metrics such as encryption time, decryption time, throughput, and overall efficiency. When compared with other hybrid algorithms, including CP-ABE + HE, HE + BF, and CP-ABE + AES, the proposed model demonstrated superior performance. While most related studies benchmark their methods against traditional standalone encryption algorithms, our work provides a more rigorous comparison by evaluating against existing hybrid schemes. The results indicate that the proposed technique achieves higher accuracy, improved performance, and enhanced security for Big Data in IoHT environments. Future work will involve deploying the proposed hybrid encryption scheme in multi-node distributed environments. There are also growing opportunities to apply AI-based, robust, and scalable encryption frameworks. The hybrid model introduced in this study can support secure processing, storage, and transmission of medical Big Data in next-generation healthcare systems.