Abstract

Keywords

Introduction

In the last years, the importance of wireless sensors networks (WSNs) has significantly increased as a consequence of the requirements of the

During the course of many years, several research activities have been focused on improving the behavior of intelligent systems using different artificial intelligent strategies. Particularly, decision making plays an important role under this topic since it can be used for different applications such as networks and energy management,1–3 health care and medical decisions,4,5 or autonomous transportation and road traffic6–8 among others. Moreover, nowadays, systems tend to be increasingly more autonomous, and decisions have to be taken by themselves according to the environmental information. Furthermore, many of these systems have to be able to determine their behavior facing with changing environments or even to combine different responses provided by different sensors or agents in order to obtain a more accurate one. The application establishes the requirements; sometimes, reliability will be the most important aspect but performance, consumption, ease of deployment, or even adaptation are also other factors to be taken into account.

Pattern recognition is very related to decision making, and it is very extended in classification systems. One of the main characteristics of these systems is reliability, since classifiers need to be able to generate outputs with a high level of accuracy. Moreover, they allow us to predict the output of a specific event (usually once the system has been trained). There are many classification methods, such as artificial neural networks (ANNs), decision trees, K-nearest neighbors (KNNs), linear regression, or support vector machines (SVMs), which try to obtain a good classification of a training set in order to establish the best criteria for splitting and to label the data properly. In spite of the existing methods, several modifications of the original ones are being studied in order to improve the classification rates. Furthermore, the importance of this dependability requirement involves multiple efforts on developing new strategies to improve the system response.

Ensemble methods can improve the accuracy of a classification.9–11 Usually, an ensemble is composed of two stages. Generally, it contains a primary set of learners, also called base learners, which are in charge of generating estimations for the second stage, which has to combine all of them. The most popular ensemble methods are bagging, boosting, stacked generalization, and ensembles of learners. All of them are based on creating several models using a training set. In case of bagging, models are built independently using different samples with replacement from the training set to obtain them. Once the models are created, they are ready to classify new samples. The same sample is applied as an input to every model, and the output is calculated as a combination of all single outputs (i.e. normally a mean or majority voting). Random forests are a special case of bagging. In this particular case, a set of decision trees are combined to obtain the final classification. Each decision tree–based model is built using a random selection of its attributes. Then, an input is applied to every model, and the final classification is given by the most popular class. In contrast to bagging, in boosting methods, a dependency among the models is defined. The most famous boosting method, called

Kuncheva 16 explains the importance of classifier ensembles and how the combination of several classifiers can improve the behavior of a classifier system. When different classifiers are combined, it is necessary to handle the contribution of each one to the finaldecision.17–19 Therefore, an appropriated management of each classifier has to be previously defined. There are two main strategies to deal with the whole ensemble: (1) fusion and (2) selection. On one hand, a selection strategy is more focused on working with different specialized classifiers, which means that each one knows a specific part of the feature space and therefore is responsible for classifying the classes belonging to this part. On the other hand, classifier fusion approach is more focused on combining the contribution of every classifier in order to obtain another more accurate one. 20

In this work, we use the two-stage classifier ensemble architecture used in our previous work. 21 It is composed of several individual classifiers which have to be combined in order to obtain the final result. The proposed architecture is shown in Figure 1.

Proposed general scheme of the system.

The first stage provides some classifications obtained from partial information about the target, and then the second stage is in charge of combining all of them to obtain a final improved classification. Classifiers of the first stage have been implemented as ANNs (particularly as multi-layer perceptrons (MLPs)) trained with partial information about the target. The proposed methodologies of this work are focused on the second stage, where different methods will be employed to compare the efficiency and adaptability of each one. On one hand, another ANN will be implemented, whereas, on the other hand, different voting algorithms are also tested. This second-stage ANN needs to be trained, and it uses the estimations of the first stage as its inputs in order to generate the output of the ensemble. Related to the voting algorithms, three different methods are tested. The simplest one is plurality majority and the others are two proposals based on weighted majority, which are called

As mentioned before, bagging and booting methods use the same data set and split it using different techniques. In contrast, in this case, there are different data sets to be analyzed as a consequence of a multi-sensor scenario. This fact allows us to use the same algorithm (in this work, ANNs) for all the classifiers located in the first stage, because each one provides different results since they use different data sets. Although the proposal is not exactly an ensemble of learners in terms of heterogeneity, it can be considered as an ensemble method which deals with its own scenario. Three of the proposed algorithms are considered machine learning methods because they have some learning capabilities and take into account the previous system behavior. Therefore, in these cases, the proposal can be considered as a stacked generalization approach. Just in case of majority voting, this assumption is not certain, because it does not imply any kind of learning.

Moreover, not only accuracy has to be taken into account, adaptability is another characteristic to address. Therefore, this article introduces a comparison among adaptive and non-adaptive fusion algorithms in a two-stage classification scheme as an ensemble method mainly focused on the second stage. A classifier can be trained for a specific application and, consequently, it will carry out a great classification. However, if some changes affect the system, the classifier will provide worse results. Therefore, adaptability is a very important factor to consider a high level of reliability, even in classifier ensembles.22,23 Hence, in this work, adaptive capabilities are also analyzed and compared depending on the algorithm used in the second stage.

In this article, we present improved results of the first stage from our previous work. 21 Thus, the results of the second stage are also improved. This allow us to analyze the effect of the first-stage accuracy on the second stage. Besides, we present an additional application example which is more realistic and uses real data collected from sensors (in this case, low-cost radars).

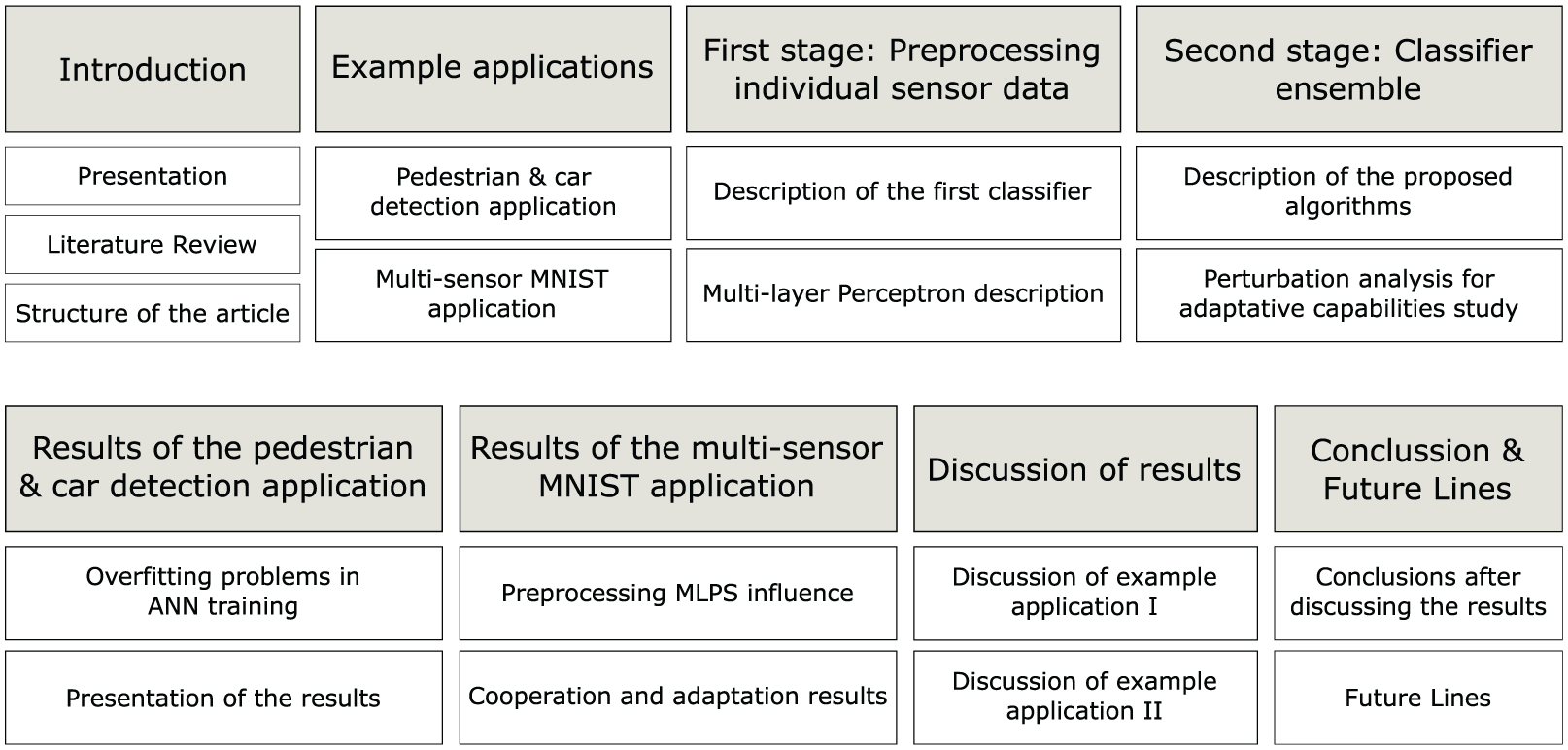

This article is organized as follows. In section

Structure of the article.

Example applications

As mentioned in the above section, this work makes a comparison among different algorithms for combining the outputs of a classifier ensemble. In order to test the proposed algorithms, different example applications have been analyzed. The first one consists of detecting and identifying cars and pedestrians using low-cost radars. 24 This is a realistic application where the position of the radar device is so critical, therefore collaboration among devices can be a solution to play down this problem. For this application, only a small set of samples is available since samples were taken in a complex environment. However, it is possible to analyze the behavior of each algorithm on the overall system testing different situations. In order to make a more detailed study, we have defined another example application. In this case, a huge data set is available, so a statistically relevant analysis of the algorithms can be performed since enough tagged examples are available. This second application is a modification of the well-known MNIST database 25 in order to adapt this database into our multi-sensor scenario. It was also the same application example used in our previous work, 21 in which handwritten numbers had to be classified. Each device analyzes only a small part of the whole image, so the partial information is combined using the proposed algorithms. Although this application is not as realistic as expected, it is a good way to test the efficiency of the collaborative algorithms since we have enough samples to generalize the obtained results.

Pedestrian and car detection

Detecting cars, persons, animals, bikes, or another kinds of objects when they are moving is not a complicated task. The challenge resides in the classification to identify the kind of the moving object. Although if this is complicated enough in itself, this difficulty becomes higher when the sensor device is a low-cost radar and due to the fact that it has no complex and precise functions. In spite of the sensor having some limitations, the obtained results were acceptable. 24 However, the radar position was so critical. In order to minimize this influence, a combination of several radars working together improve the results. 26 Therefore, in this work, a comparison among different algorithms is analyzed to demonstrate the utility of the cooperation.

In this example application, three low-cost radars were used in order to detect and identify the moving object from different angles. A deployment of the system was carried out to obtain a data set with real tagged samples. The three radars were placed in the parking of our school (Figure 3) to take samples of moving objects. Tags were added manually. When each radar signal surpasses a threshold, the software tool saves 512 samples in a second and the time of the event. Then, the first 128 points of the fast Fourier transform (FFT) of the 512 points signals are introduced as inputs to the first-stage classifiers. In this case, instead of using a classification tree,24,26 an ANN is used to provide the results of each radar device for this first stage.

Deployment of a radar for measuring.

A critical aspect of this example application resides in the limitations of the environment. The place was not so friendly for taking the samples, since the environment was not very controlled. Many people were moving around and many times trees were moving, and the radars detected these movements and mixed them with moving people or vehicles. Therefore, taking the samples was not an easy task. These complications caused a lot of problems in obtaining enough samples to make an exhaustive analysis. In order to reduce this problem, different testing situations are studied, and another example application is also tested to be available as an statistically relevant analysis.

After this experiment, we achieved 128 valid tagged signals. Out of them, 92 belonged to pedestrians or group of pedestrians, 23 to cars, and 13 to bikes. As there were not enough examples to train and test, five sets of 52 pedestrian signals were taken randomly from the 92 ones, 13 cars were taken randomly from the 23 ones, and the 13 bikes have been used to train the ANNs. The five sets of the remaining 40 pedestrian signals, 10 cars, and 0 bikes were used as test sets.

Multi-sensor MNIST

In order to test the proposed algorithms with enough examples, the well-known MNIST database 25 has been chosen as an example application. To adapt this database into our multi-sensor scenario, the following modifications and methodologies have been done:

The 28 × 28 pixel images of the MNIST database have been divided into four 14 × 14 sub-images. This emulates four different sensors catching the same event, but each one has only partial information of the entire target. The image has been divided as follows: the top left corner of the image is the quadrant 1, quadrant 2 is the bottom left corner, quadrant 3 is the top right corner, and quadrant 4 is the bottom right one. Figure 4 shows an example.

Each 14 × 14 sub-image is processed by a two-layer MLP trained to classify its quadrant. Thus, four estimations are obtained, each one with different global accuracy and class accuracy, due to the fact that some quadrants will be more sensitive to certain numbers than others.

In order to test the adaptability of each algorithm, different types of perturbations have been added as noise on the image and as communication errors between stages.

28 × 28 image of a handwritten 7 divided into four 14 × 14 images.

The MNIST database is composed of two sets, one for training stage and the other one to test the system. The

First stage: preprocessing individual sensor data

The main focus of this article is on the algorithms which aggregate data from all sensors. Therefore, data from sensors are preprocessed with the same algorithm for all the proposed experiments. A two-layer MLP with an output per class has been chosen to implement the first stage of the proposed scheme (see Figure 1). Nevertheless, whatever other classifier could be chosen for this propose.

Combination of the first-stage classifiers does not literally represent a combination of multiple solutions for the same target. The outputs of the first stage have been obtained by MLPs using different inputs. Each one uses only partial information or a different point of view of the global target. It means that there is a high likelihood to obtain different classifications for the same target at each MLP in the first stage since they have a huge dependency of received input (image of each quadrant or signal from different oriented radar). This implies that every partial solution is not well-balanced, because these results do not only depend on the internal functionality of the MLP but also on the external information partially received from the target (image of each quadrant or signal from different oriented radar).

MLP

The mathematical model of a neuron is shown in equations (1) and (2). This model performs a weighted sum of the inputs and then applies an activation function to the output. The activation function is usually a linear function or a sort of saturation function like sigmoid functions

with

There are different families of ANNs. Each family has different connections, activation functions, and learning algorithms. In this work, MLPs have been implemented, which only allow feedforward connections and are trained with supervised learning. They have as many outputs as classes are needed to be classified. The output is trained to be 1 for the corresponding class and −1 for the other classes. However, the final output will be a set of values in the range [–1,1], representing the confidence for each class.

The learning algorithm most used for training MLPs is backpropagation. It is an approximate steepest descent algorithm which uses the mean square error (MSE) between the ANN output and the desired one as the fitness function, where

where

A variation of the standard backpropagation,

If the new MSE is less than the previous one, the learning rate is increased

Else, if the new MSE is greater than the previous one, but no more than a certain percentage

Else, the new MSE is greater than the previous one plus a certain percentage of it

On the detection with radars application

Each radar signal is, first, applied a FFT and the first 128 points are taken (an example is shown in Figure 5); then it is classified by a 2-layer MLP with 128 inputs, four hidden neurons, and three output neurons. Each output neuron recognizes a class: pedestrian, car, or bike.

Radar parameter extraction: example of person signal.

Due to the lack of tagged examples, the first-stage classification performance is very unstable (as shown in the section

As there is a big unbalance between classes on the achieved tagged examples, the impact on the MSE of each example is corrected proportionally to its class abundance. Particularly, the squared error of each example is multiplied by the total number of examples of the training set (78) and divided by the number of examples of its class on the training set (52 persons, 13 cars, and 13 bikes). Hence, on every epoch (as training is performed in batch mode), the influence of each class is balanced on the MSE, avoiding a tendency to classify every input as the most abundant class.

The parameters for the first-stage training are as follows: 1500 iterations,

On the multi-sensor MNIST application

Each quadrant, into which the input images are divided, is classified by a two-layer MLP with 14 × 14 inputs, 40 hidden neurons, and 10 output neurons. Each output neuron recognizes a digit. This is a relatively small ANN compared to the state-of-the-art ones used to solve MNIST. 25 It has been done by design to fit the MLPs on embedded implementations, which have a limited number of resources. Consequently, these networks cannot be as effective as the MLPs implemented on powerful machines.

In order to perform a fair comparison of algorithms, three sets of classifiers have been trained with 10,000 different examples taken randomly from the

The parameters for the first-stage training are as follows: 5000 iterations,

It should be noticed that these parameters have been modified from the ones used in our previous experiments reported in Villaverde et al. 21 Particularly, the iterations have been augmented from 1500 to 5000, the activation functions have been optimized, and minor changes are performed on the scripts that compute the training. It can model two types of classifiers: a weak one in the previous experiments 21 and a strong one for the new experiments.

Second stage: classifier ensemble

The second stage of the proposed system (see Figure 1 for more details about the general scheme) is based on the main concept of classifier ensembles since it has to combine the outputs coming from different classifiers (MLP in this case). In particular, in this work, different fusion approaches have been implemented to consider the different outputs given by the first classification stage. Another MLP and three different cooperative algorithms are proposed: majority voting-based algorithm, basic weighted voting-based algorithm, and stochastic weighted voting-based algorithm.

Whereas the neural network requires a training stage before the normal operation to adjust the internal weights in order to provide the best results, the proposed cooperative algorithms do not require any kind of previous training. Besides, the weighted voting-based algorithms are always updating the contribution of each classifier to provide a result every time for each input and also adapting itself to the system’s changes (i.e. changes which affect the proper functionality of one or more classifiers). Unlike the proposed neural network and the majority voting algorithms, these ones take into account the past behavior of the system. In this way, they can decide which first-stage classifier is giving the best results as time goes forward and, consequently, give more importance to their contributions.

MLP-based algorithm on second stage

MLP has also been chosen as one of the algorithms to compare. It is more computationally intensive than the other proposed algorithms, but it can achieve better accuracy when it is trained properly.

On the detection with radars application

Seven MLPs of nine inputs (three from each radar), 12 hidden neurons, and three output neurons have been trained with the same training sets used to train the first-stage MLPs. So, the test sets can be used for testing the second stage too.

The training parameters for the second stage are as follows: 700 iterations,

On the multi-sensor MNIST application

Five MLPs of 40 inputs (10 from each quadrant), 15 hidden neurons, and 10 outputs have been trained with each of the remaining signals which belong to the training set but were not used to train the first stage (

The training parameters are as follows: 4000 iterations,

Majority voting-based algorithm

Majority voting can be classified as (1) unanimous, (2) simple, and (3) plurality. Unanimous implies that all classifiers agree. In case of simple majority, at least more than 50% of classifiers agree. Finally, in plurality voting, the solution is given by the most voted category. In this work, plurality voting will be addressed as the majority voting-based algorithm.

This is the simplest and the fastest proposed algorithm. However, it has an important disadvantage since this method produces ties, especially in the case of a an even number of classifiers. When a tie happens, the classifier does not provide a reliable solution. Therefore, it can generate a high level of uncertainty. Another disadvantage is produced when a first-stage classifier starts to provide wrong results (i.e. due to a change in the environment or to a internal failure). If the system does not realize that situation, the hit rate will get worse. This is due to the fact that the solution does not depend on the previous behavior, but this algorithm just analyzes the inputs and provides the result using only this information. Therefore, it is a non-adaptive methodology.

Basic weighted voting-based algorithm

Unlike the majority algorithm, the basic weighted voting weights each input to manage the contribution of each classifier. The functionality is very similar to the majority one. The solution is also the most voted category but, in this case, contributions are weighted. These classifiers which have demonstrated a higher reliability will have more influence over the final decision. The solution of every quadrant is compared to the final solution; if they match, this means that the classifier has contributed positively to the final decision, so its weight will be increased by adding a fixed value

Pseudocode for the basic weighted algorithm.

In this method, the fact that weights have to be increased or decreased using a predefined fixed value is the largest drawback. Although the increase can be a little more higher than the decrease (or vice versa) to avoid standstill, both parameters have to be predefined by the programmer. Moreover, initial weights

Stochastic weighted voting-based algorithm

This algorithm also weights the influence of each classifier. But unlike the basic weighting, in this case, weights are calculated using a stochastic method. Therefore, the programmer does not need to define how weights evolve, because they are updated based on the Monte Carlo method. Thus, this algorithm works similarly to a particle filter since it computes, every time, a large combination of weights (i.e. particles) in order to reach a good solution. In this work, 200 particles have been used. Initially, all particles are randomly distributed and cover all the available space. Then, as the algorithm proceeds, particles are continuously moving around the valid ones. If there is a change in the environment, particles will move to another area of the space in order to accomplish the new requirements and maintain more or less the same level of accuracy. The final weights are not given by a specific particle, but they are calculated according the distribution of all particles (particularly, by the geometric center of all particles).

The available space for particle location is limited, and the coordinates of each one can vary only between 0 and 1. Although, at the first time, particles are randomly distributed in all the space, for next launches, they will be only distributed around the valid ones. In this way, the convergence of the particles is searched to find the best space of particles according to the solutions given by the classifiers. The final weights will be calculated as the geometric center of each coordinate of every particle, where each coordinate represents the weight of each classifier. Every particle is evaluated to determine which ones are valid giving a final solution. This result is compared with the result calculated using the geometric center. If both results match, then that particle is considered a valid combination, otherwise it will be rejected. However, not all the particles are equally valid. Some of them will have a strong contribution and consequently, the final weight combination will be closer to them. In order to find these particles, a deeper analysis of them is required.

Once the weights provided by a particle are applied to calculate the result for that particle, it is possible to know which is the dispersion around the different categories. It means that when the weights given by a particle are applied, the result will be determined by the category which has the highest contribution. However, other categories could also have some contribution. As these contributions are more similar, the result is less reliable since the system cannot distinguish a single solution well. In contrast, if one category obtains much more contributions than the others, it means that the result presents more confidence

Example of the final result calculation for two valid particles.

Pseudocode for the stochastic weighted algorithm: computer version.

In conclusion, this algorithm is also adaptive like the basic weighted voting-based algorithm, but, unlike the previous one, this takes its own decision about evolution of weights by itself. There is no need to predefine a fixed value to reward or penalize the first-stage classifiers. The algorithm decides how to do that by moving the particles over the available space according to the behavior of each one. Therefore rewards and penalties will be different every time.

Perturbations

Different perturbations have been added to the

Initially, the image noise associated to different quadrants was added to simulate the first case (image noise). The first quadrant was corrupted with additive Gaussian noise (adding a normal distribution noise with mean 0 and standard deviation of 64), which is the most frequently used. Then, the analysis was repeated applying the same type of noise on the third quadrant instead of the first one. On the contrary, some noise was also added over the output of the first stage, therefore data received in the second one was partially corrupted. Different situations were analyzed. Gaussian noise with a standard deviation of 1 and 0.5 was applied to study two different cases with different noise levels. Besides, in order to simulate a disconnection, the input of the second stage was replaced by the less worse case possible. For the ANN, it is all outputs fixed to −1, which represent “no-idea,” and a random assignment for the rest of algorithms which have to vote an hypothesis. Like the previous case, the first and the third quadrants were being corrupted separately.

Therefore, four different perturbations have been analyzed. One of them as a image noise and the others as noise in the link between the first and the second stage. For each case, experiments were done when the failures were associated not only to the first quadrant but also to the third one.

Results of the pedestrian and car detection application

The lack of tagged examples causes overfitting problems in the ANN training. Figure 9 shows the evolution of the MSE of the train and test sets during a training of a first-stage MLP. The overfitting problem is when the MSE of the training set improves by learning each training example with precision, whereas the ANN loses generalization and the MSE of the test set decreases. It appears when there are more learning parameters (i.e. weight and bias) than training examples, after some training epochs. As more learning parameters than training examples are present, the sooner (i.e. at earlier epoch) appears the problem. In this case, as there are few training examples, the size and/or the training epochs are limited, and hence the performance of the MLPs. Besides, it also causes the training of the first stage MLPs to be very unstable (getting very different results on each experiment). Therefore, we have chosen five different situations of the first-stage MLPs as examples of how the algorithms may work instead of averaging them.

Example of overfitting on the training of first-stage MLP.

Table 1 shows the results on the test sets of the detection with the low-cost radars. As shown in the firsts three rows of Table 1, there is not a clear tendency for every test, therefore each test is analyzed as a particular test to show how it affects the overall system (see section

Results in % of the detection with low-cost radars.

Results of the multi-sensor MNIST application

Although, in this work, the first stage is not one of the main issues to be dealt with, it plays an important role in understanding the results of the second stage. Therefore, first, an analysis of the MLPs of the first stage is done in order to know the starting point. Then, results for the ensemble classifier are shown to compare the four cooperative algorithms explained above.

Besides accuracy, the other important issue dealt in this work is the adaptability. Adaptive capabilities allow us to maintain the system with a similar level of accuracy although external or internal conditions affect the system or their components. Therefore, in order to demonstrate these capabilities, some perturbations were introduced to analyze the system response.

Preprocessing MLPs

Due to the fact that images have been divided into four quadrants, the accuracy of each one is different. In order to know the behavior of each quadrant, different data subsets of the MNIST database were tested.

Table 2 shows the accuracy for each quadrant on the new experiments, whereas Table 3 shows the accuracy on our previous experiments. In order to avoid outliers, three experiments were developed for each case. Therefore, values shown in these tables were obtained as a median after the three experiments were carried out under the same conditions but using different subsets of samples. Besides, these tables also show not only the global accuracy but also the values obtained for each category. In this way, it is possible to discover where the categories are more difficult to classify (which turns to be the number “5”).

Accuracy in % of the preprocessing classification from the new experiments.

For each class, the median of the three experiments (to avoid outliers) and for the global accuracy, the mean (of the three experiments) are given.

Accuracy in % of the preprocessing classification from the previous experiments. 21

For each class, the median of the three experiments (to avoid outliers) and for the global accuracy, the mean (of the three experiments) are given.

A big improvement can be noticed from the previous experiments to the newly optimized ones. We will take advantage of this fact to compare the second-stage algorithms against this two different situations. On one hand, our previous experiments represent a situation with a low-accurate first stage. 21 Let us call this first stage as weak classifiers. On the other hand, the new experiments have a first stage with higher accuracy. Hence, let us call them as strong classifiers.

Comparing the global accuracy of the

Cooperative algorithms and adaptive capabilities

Once the accuracy of each quadrant is known, data fusion is analyzed. It implies to study how each algorithm works in terms of accuracy and adaptation. As mentioned before, in this work, four algorithms have been presented.

The system evolution when a change affects it denotes the system susceptibility and, consequently, how it can maintain a certain level of accuracy when a perturbation appears. Trained systems tend to be more unstable when facing environmental changes. In contrast, they used to be more accurate since the training stage provides them with some knowledge in advance. As mentioned in subsection

Accuracy and adaptability of the proposed algorithms for the second stage when the first stage has high accuracy (new experiments).

Accuracy and adaptability of the proposed algorithms for the second stage when the first stage has low accuracy (old experiments). 21

Figures 10 and 11 show the influence of noise over the non-perturbation case for each algorithms of both new and previous data, respectively. They represent the difference between the hit rates without any perturbation and the hit rates when different perturbations were applied using, in the first stage, the weak classifier (our previous experiments) and the strong classifier (our new experiments), respectively (applying equation 4). The values of these hit rates are obtained from Tables 3 and 2. These two figures highlight the influence of the accuracy of the first stage over the global result for each algorithm. The ANN shows the most important variation. When a weak classifier is used in the first stage, the ANN of the second stage is more sensitive to noise, as our previous results demonstrate. However, for the new experiments, the ANNs of the first stage are more accurate, therefore the ANN of the second stage suffers less variation when the perturbations are applied. Although these effects are also presented for the other algorithms, the influence is not as significant as the ANN case

Comparison graph for previous experiments (hits analysis).

Comparison graph for new experiments (hits analysis).

In order to know how good the weighted algorithms are, adaptive capabilities have been analyzed taking into account the percentage of failures (miss + ties) of every algorithm when the perturbations are applied. These percentages are shown in Tables 6 and 7 and have been obtained using the formula

where

Percentage of failure increase of the perturbations for the new experiments.

Percentage of failure increase of the perturbations for the old experiments.

Figures 10 and 11 also provide this information; however, we use equation (5) as an additional analysis because when the hit rate is closer to 100%, it becomes more difficult to improve it. Thus, using equation (5), for a low failure rate, a small improvement becomes as relevant as a big improvement for a high failure rate.

In order to obtain the ANN results, the second stage MLP was trained five times per each one of the three

A remarkable issue of these results is that two of the proposed algorithms show some kind of uncertainty due to the appearance of ties. When an algorithm generates not only hits and misses but also ties, it means that it is not robust enough. Consequently, ties introduce an important level of uncertainty in the system since it is not possible to provide a specific solution. These ties would be hits or misses, which depends on the number of ties and on the number of involved categories. It could be an important factor to take into account according to the application requirements.

In order to analyze the influence of the first stage into the second stage, Table 8 shows the improvement of the failure rates (ties + miss) when comparing the new experiments (strong classifiers) against the old ones (weak classifiers). These percentages have been calculated using equation 5, but in this case, we use

Percentage of failure improvement of the new experiments with respect to the previous ones, calculated using equation (5).

Discussion of results

Discussion of example application I: pedestrian and car detection

As we have mentioned before, the samples obtained for the pedestrian and car detection application are limited. Therefore, the results of this application example are not statistically relevant and consist of analyzing different successful test cases.

In general, the second stage is able to obtain better results than each radar individually. It means that the ensemble improves the success rate of the individual classifiers. However, in one case, the ensemble cannot exceed the best individual rate. This effect happened in the Test 1 (in Table 1) where most of the algorithms are not able to improve the best individual radar result (from Radar 3). Only the ANN improves a 3% of that value. On the contrary, in Test 5, ANN shows worse results than the best individual radar rate. However, for this test, the best improvement is given by stochastic weighted voting-based algorithm, which surpasses 8% of the best individual result. In all the other tests, all the algorithms of the second stage are able to exceed the best individual rate, therefore the classifier ensemble can be a good alternative to improve the system behavior.

In general, ANNs present good results when there are enough samples to train. However, there are some tests in which the ANN does not provide better results than the other algorithms. This is due to the fact that the number of samples in this application are very limited and, in consequence, the ANNs are not well-trained. This means that the other algorithms can be preferred than ANNs when the application cannot provide a huge number of tagged samples. Even majority gives better results than ANN for tests number 3, 4, and 5. However, in these cases, the best alternative is the stochastic weighted voting-based algorithm, since it provides the highest hit rate (due to its adaptive capabilities) and also reduces the system uncertainty because it does not present any tie.

It is important to notice that even if these results are not statistically relevant, we have found examples in which the classifier ensemble improves the best individual classifier (those improvements are between 3% and 8% depending on the cases), proving so, the usefulness of a classifier ensemble with the tested algorithms in a real application.

Discussion of example application II: multi-sensor MNIST

All the results provided in this article have been obtained through MATLAB simulations, although the final implementations are intended to be executed in embedded platforms (micro-controllers, digital signal processors (DSPs), or field-programmable gate arrays (FPGAs)). The estimated number of operations needed to generate a decision from the four first-stage outputs are as follows: 800 for the ANN (already trained), 18 for the majority, 26 for the basic weighted, and

First of all, it is important to highlight that some results were obtained previously

21

for this application. The differences between our first results and the new ones are basically found in the first stage. In this work, the ANNs of the first stage (preprocessing MLPs) have been improved and therefore the results for the second stage have also changed. As Tables 2 and 3 show, the accuracy of the new ANNs is better than the previous one for every quadrant for both Train– and Test sample sets. It implies more confidence data to be dealt in the second stage and, consequently, high accuracy is obtained in the new experiments. Improving the first stage, the ANN for the second stage is able to suffer less deterioration (see Figures 10 and 11). Results of the preprocessing MLPs on the new experiments show that the accuracy varies a 9% among all quadrants since the best one has a hit rate of 82%, whereas the worst one is around 73% (see Table 2). According to these results, quadrants 2 and 3 are the best ones, which coincides with the best ones in our previous experiments.

21

Besides, there are some categories more complicated to classify than others. The most significant case is obtained for category 5, since the lowest values belong to that category. It means that when the image represents the number

The first stage classifier has a great influence on the accuracy of the whole system. Using a strong classifier in the first stage implies better general results because when the accuracy of the first stage improves, the accuracy of the second stage also improves. As we have defined before, Table 8 shows the percentage of failure improvement comparing the previous experiments (which use a weak classifier) with the new experiments (which use a strong classifier). According to those values, when there is no perturbation in the system, the ANN can improve a 59.17%, whereas the three majority-based algorithms can improve around 50%. The stochastic weighted is voted the best of the three since it has 51.94% of failure improvement. This demonstrates that the ANN of the second stage provides the highest improvement compared to the other algorithms since it has been trained with more new reliable samples. Therefore, when an algorithm requires a training stage before the commissioning, it can present best result than other non-trained algorithms; however, the second ones are able to work directly, also giving good results. Therefore, depending on the application requirements or limitation, we can choose one algorithm or the other one.

On one hand, regarding to the system hit rate, the ANN gives the best results. Its worst hit rate was around 73% in case of the previous experiments (see “Link Q1 Gaussian 1” and “Link Q1 Gaussian 1” in Table 5) which was improved in the new experiments reaching around 91% for the same kind of noise (see “Link Q1 Gaussian 1” and “Link Q3 Gaussian 1” in Table 4). In contrast, plurality majority presents the worst hit rates in any case; in some cases, this algorithm can only achieves a 55% or 60% of hits rates, especially when the first stage is not good enough (see Table 4). However, when a weighted algorithm is used, those values increase a lot compared with the plurality majority. In particular, for the new experiments, stochastic weighted voting can almost reach 90% of hits when no perturbation is applied (see Table 4), which only differs by 6% when compared with the ANN. Moreover, although the ANN continues getting better hit rates when perturbations are applied, the stochastic weighted voting does not have significant variations in presence of those perturbations. It means that it is more stable than ANNs for changing environments.

Therefore, regarding the system adaptation, we can conclude that the ANN is highly sensitive to any perturbations, especially when the accuracy of the first stage is lower. When a weak classifier (previous experiments)

21

is used in the first stage instead of an strong one (new experiments), the ANN of the second stage suffers a significant deterioration. The error of the ANN when Gaussian noise with standard deviation 1 is applied can reach more than 15%–18% with respect to the non perturbed situation when a weak classifier is used in the first stage (see Figure 10). However, these values can be minimized under 6% when an strong classifier is used (see Figure 11). With respect to other algorithms, they can also minimize the error except when quadrant 3 suffers a link disconnection between both stages. In this case, the ANN is the only algorithm that can minimize the error. In this situation, the ANN algorithm has much more information than the other algorithms because when a disconnection is detected, the ANN can force all the input values for that quadrant to “

According to Table 8, the improvement that the ANN can reach is between 57.84% and 68.75%, whereas the stochastic weighted algorithm can only improve between 42.51% and 52.77%. This is a consequence of using a weak or strong classifier in the first stage, because the ANN (which is a trained method) is the most sensitive algorithm to any change in the first stage. As we have mentioned before, although the ANN has better hit rates in presence of perturbations than the other algorithms, its deterioration when those perturbations are presented shows a steep fall since the percentage of failures grows significantly, as Tables 6 and 7 show. In fact, in presence of some link noise (i.e. link Gaussian noise with standard deviation 1), the increment of the failure rate is even more than three times than the increment of the failure rate without perturbation. For example, when that noise is applied to quadrant 1, the ANN failure rate increases 146.73% in case of the new experiments and a 165.52% in case of the previous experiments (see Tables 6 and 7). In contrast, the failure rate of weighted algorithms does not exceed 38.50% for stochastic weighting and 41.61% for basic weighting for the worst case, as Table 6 shows. That is because ANNs are usually trained for general cases (i.e. without so many perturbations). Thus, when an unexpected perturbation appears, re-training will be necessary to adapt to the system, which involves getting new tagged examples of the perturbations. Furthermore, re-training may be impossible for some kind of applications or for sporadic perturbations. Therefore, in general, weighted algorithms are better in terms of adaptation than the ANN.

The ANN is also computationally very intensive. In this sense, the best algorithm may be the plurality majority, which is the simplest among all. It does improve the best first stage hit rate (except in the rare scenario of the Test 1 of the detection application with radars, in which it at least equals this value) when there are no perturbations. However, it does not obtain a significant improvement, and its accuracy decreases significantly with perturbations, specially for disconnections and intense noise on the link (Gaussian noise with standard deviation 1). The second computational effective algorithm is the basic weighted, which also provides balanced properties regarding accuracy and adaptability.

Conclusion and future lines

Conclusion

This article has compared four different algorithms for getting the final classification in a two-staged classifier ensemble. These algorithms have been applied to two example applications. Through the first application, the applicability of the classifier ensemble has been proven, testing the four proposed algorithms in a real application. On the contrary, through the second application, the proposed algorithms have been tested against different perturbations in order to measure their adaptability to environmental changes. Moreover, the effect of improving the accuracy of the first-stage classifiers has been measured.

After the discussion of the results, it can be concluded that

ANNs show the best accuracy among all the algorithms tested. However, they need to be trained with enough labeled examples, which for some applications will be unavailable or difficult to get. They have high computational intensity, and they are less adaptable than the weighted algorithms.

Majority shows the worst results in accuracy and adaptability (actually, it is not an adaptive method) and produces a lot of ties. It has been compared to show a reference of the minimum achievable improvement of an ensemble with the simplest algorithm. Nevertheless, it does improve the first-stage accuracy while providing the minimum computational intensity, which may be required for some applications.

Basic weighted shows intermediate and balanced results for accuracy, adaptability, and computation intensity. However, its main drawback is the ties generation, which produce a high level of uncertainty. This situation is not suitable for most of the applications since the unknown results can interfere with the system reliability.

Stochastic weighted shows the second best accuracy and the best adaptability. Although the hit rates do not reach the ANN results, they do not require any training before commissioning. Moreover, it shows a high level of adaptation because perturbations do not affect a lot due to its adaptive capabilities. Its main drawback is its high computational intensity. However, authors expect that by improving the particle selection stage, it will be possible to increase the hit rates and even reduce the computational efforts.

Future lines

Future lines will address the improvement of the stochastic weighted algorithm since it demonstrates low uncertainty due to the fact that it does not produce any tie, it also presents a high level of adaptation, and it does not required any type of training. Although it shows these great advantages, the main problem is its hit rate. Therefore, future research efforts could tackle this issue by changing the mode of particles selection or even the way to evolve them. Nevertheless, as Table 4 shows, for some specific cases under certain perturbations, the stochastic weighted algorithm can already exceed the ANN hit rate (i.e. when quadrant 3 is perturbed with Gaussian noise with standard deviation of 1 over the input of the second stage for a low accuracy first stage, old experiments). Therefore, if the stochastic weighted algorithm is improved enough, it will be a better option than ANNs for sensors data fusion in critical applications with changing environments.