Abstract

Introduction

There is growing agreement among scientists and policy makers that data used for research purposes, including those extracted from social media, should be made accessible whenever possible; and that their re-use should be encouraged, provided that it conforms with legal and ethical concerns around privacy and personal data protection, such as championed through the European General Data Protection Regulation (GDPR, 2019). To participants in the Open Data movement, this position is exemplified by the motto ‘as open as possible, as closed as necessary’.

Following years of debate on the values and practices underpinning data sharing and re-use, the FAIR principles have emerged as plausible guides towards the handling of data for research purposes (Mons et al., 2017; Wilkinson et al., 2016). Accordingly, data should be organised and managed to be easily

While applauding these developments, this paper identifies a research area in which the application of FAIR, CARE and TRUST principles is not enough to guarantee that data collection, processing and use are fair to those affected by these processes. This is the use of social media data (SMD) for health-related research. 1 As in other domains, the opportunity to mine social media for information has been hailed as transformative for research on human health, given its power to document human behaviour in real time. Considerations around the fairness and accountabilities relating to using SMD have often been set aside, on the understanding that as long as data were anonymised, no substantive issue would arise. We counter this perception by showing that the use of SMD in health research can yield results that are both scientifically dubious and ethically problematic. Our argument is that the use of SMD, no matter how well-intentioned and/or compliant with FAIR principles, is not necessarily fair to those from whom the data are gathered and those whose lives may be affected by specific uses of the data.

This problem is only indirectly addressed by the CARE and TRUST frameworks, since it does not necessarily concern indigenous data sources (the main focus of CARE) and it is not limited to the set-up of data infrastructures (the main targets of TRUST). Rather, social media capture data from individuals around the world, including highly resourced countries – indeed, the fact that they may disproportionately capture information from specific demographic, social or cultural groups constitutes a key concern around their use for research. Moreover, concerns around the social and ethical implications of reusing such data for health research affect the whole research process, including the design and use of databases as well as models, algorithms and the choice of directions and goals.

To develop this argument, Using social media as research data section of this paper discusses how uncritical uses of SMD in health research risk fostering existing social divides, promoting misrepresentations of society and breaking public trust in research institutions – with damaging effects on research outputs, applications and public perception. What is data fairness within research? section considers what fairness means in the context of SMD use, emphasising its significance towards guaranteeing the reliability of knowledge produced through data interpretation. We propose to focus on

Our contribution to wider debates on data ethics and justice is thus to identify the ethical problems arising from scientists’ uses of SMD, and to suggest that these problems may be addressed by incorporating a commitment to fairness in the methods routinely used in health research. We do not aim to provide a comprehensive review of what data justice and fairness may involve across contexts, nor do we aim to offer an empirical analysis of the inequity and discrimination affecting social media platforms, which has already been provided by many scholars including Srnicek (2017), O’Neill (2017), Zuboff (2019) and Noble (2018). Rather, we build on such scholarship to reflect on what researchers using SMD can concretely do to counter such bias. Our suggestions are thus complementary to calls for increased responsibility and accountability by social media providers (Dijck et al., 2018; Lupton and Michael, 2017; O’Neill, 2017; Van Kleek et al., 2017) and scholarship on the intersections between platform capitalism and social studies of infrastructures (Plantin et al., 2018).

Our analysis draws from relevant literature in data studies, health research and philosophical debates on fairness, as well as our experiences as a UK-based interdisciplinary group engaging in data-intensive, health-related research from the perspective of computer science (HW), public health (RL, LF, BW) and critical data studies (SL). Between 2016 and 2017, we had opportunity to work together within the project ‘Social sensing of health and wellbeing impacts from pollen and air pollution’, resulting in the present exploration of the risks and opportunities involved in SMD analysis. Accordingly, we will accompany our argument with illustrations of how our research was informed and/or constrained by methodological fairness.

Using social media as research data

Advances in data availability and analysis have outpaced the process of ensuring that data-intensive research is equitable and appropriate with respect to the publics affected by its results. Research in social science and the humanities has shown how mining personal data can lead to discrimination and damage to vulnerable communities (boyd and Crawford, 2012; D’Ignazio and Klein 2020; Floridi, 2011; Floridi and Taddeo, 2016). In the medical sciences, there are related concerns around handling sensitive information (Auffray et al., 2016; Dijck and Poell, 2016; Tamar and Lucivero, 2019). The ease of use fostered by the FAIR principles can be misunderstood to encourage disregard for the ethical implications of research. Furthermore, big data analysis can suffer from design and methodological problems, such as inadequate theory development, lack of sampling methods and justification, limited control over and information about data sources and difficulties in assessing the extent to which a given dataset is representative of a phenomenon of interest (Metcalf and Crawford, 2016, Taylor, 2017, Veale and Binns, 2017). These issues can compromise the credibility, reliability, and applicability of findings.

Social media offer the researcher a rich and extensive source of data at a scale not previously achievable (Kavanaugh et al., 2012; Leavey, 2013; Picazo-Vela, et al., 2012). Several research sectors (including business and government) seek to exploit the potential of the vast amounts of information generated through social media. The processes associated with the accessing, transformation, analysis and interpretation of SMD need to be critically assessed. As Halford et al.(2017) argued, ‘far from being “naturally occurring”…social media data are shaped through complex social and technical processes’. These processes have societal implications that pose specific regulatory challenges (Pentzold and Fischer, 2017). When SMD analysis is robust and reliable, the resulting knowledge can enhance understanding of countless topics, including some which have traditionally been difficult to address, and counterbalance other methods of data collection (such as social surveys) that can also over- or under-represent certain groups. Further, the use of SMD offers opportunities to reform relationships between governments and citizens, enhance democratic representation and inform public policy and decision-making (Leavey, 2013; Picazo-Vela et al., 2012) – for instance, by improving service delivery; developing early warning indicators of key societal issues (Hays and Daker-White, 2015; Leavey, 2013) and guiding responses to disaster situations (Kavanaugh et al., 2012).

Critical scholarship on the use of social media has initially focused on privacy and surveillance (Conway, 2014; Mikal et al., 2016), with attention to social fairness emerging more recently (Kennedy et al., 2017; Taylor, 2017). Opaque and unfair social media research practices may covertly embed and exacerbate structural social disparities in power, magnifying social divides, inequalities and injustices; and may promote misrepresentations of societies, communities and individuals (D’Ignazio and Klein, 2020; Floridi, 2011; Munoz et al., 2016). There is also potential for damaging effects to the reputation and public perception of science.

Public concerns regarding SMD extend beyond the protection of privacy to an interest in whether social media and data analytics companies are meeting wider expectations of what is fair (Kennedy et al., 2017). As demonstrated by the Cambridge Analytica scandal around Facebook data misuse, high-profile controversies regarding inappropriate and unethical use of SMD threaten the perceived trustworthiness of institutions such as universities and governmental bodies (O’Neill, 2004; Pentzold and Fischer, 2017). Any backlash against the use of such data thus risks to compromise the valuable insights that these data can provide (Halford et al., 2017).

The question of what is appropriate when using SMD is ‘urgent as data and research agendas move well beyond those typical of the computational and natural sciences, to more directly address sensitive aspects of human behavior, interaction, and health’ (Zook et al 2017). This urgency was underlined by Taylor and Pagliari’s (2017) finding that only one of the UK research councils had ethical guidance for social media research (with two others pointing to guidelines by other bodies); and only 50 out of 156 studies mentioned ethics (including statements that no approval was required) – a figure likely to be lower if restricting the sample to computer science, where ethical considerations are not always considered and related oversight is often lacking. This is despite many recent efforts to identify procedural and ethical frameworks for the gathering, transformation, analysis and dissemination of SMD (Dove et al., 2016; Gelinas et al., 2017; Golder et al., 2017; Lee, 2017; Mittelstadt and Floridi, 2016; Townsend and Wallace, 2016; Weller and Kinder-Kurlanda, 2016). Solutions to embedding ethical principles in social media research are lagging behind due to the pace of development and change in the technologies available, the widening of disciplinary interests as the potential of the data becomes clear and the contextual variability of the notion of fairness in relation to social media research use. Whilst there is public support for the fair use of SMD for health research, this appears dependent on the methods used and adequate protection of privacy (Mikal et al., 2016).

Perceived motivations of and trust in institutions have also emerged as salient factors in public perceptions of fair use (Williams et al., 2017). Practices which would be unacceptable for commercial goals may be considered differently in case of demonstrable public gain. Identifying the expectations of social media users regarding what is fair is complicated by the complexity of SMD and what they represent, and by low levels of public understanding regarding who owns and has access to the data, and how they can be used (boyd and Crawford, 2012; Kennedy et al., 2017; Nissenbaum, 2009). Many social media users do not know who is accountable for the secondary use of their data (boyd and Crawford, 2012).

There are questions as to whether the protections put in place through legal frameworks such as the GDPR are flexible and responsive enough to ensure fairness, especially in the face of rapidly developing methodologies and applications of SMD (Halford and Savage, 2017). The contextual dependency of fairness, difficulties with identifying what is generally acceptable, variability around what is appropriate between contexts and the often conflicting interests of different groups or of different goals, all present challenges to Institutional Review Board or Research Ethics Committee assessments of SMD use (Hunter et al., 2018; Zook et al., 2017). By exercising data fairness at each stage of the research process section, we argue that careful planning and management of research can help overcome these challenges. Before delving into practical considerations, however, we need to add conceptual clarity to the notion of data fairness by introducing the idea of ‘

What is data fairness within research?

In the Oxford English Dictionary ‘fairness’ is defined as ‘the quality of treating people equally or in a way that is right or reasonable’. It is clear from this definition that identifying what constitutes fairness is complicated by the context dependency of what is right or reasonable (Bicchieri and Chavez, 2010; Wolff, 2010), which in turn relates to what one is trying to achieve (Ryan, 2006). It is thus no surprise that notions of trust (O’Neill, 2004), accountability (boyd and Crawford, 2012), transparency (Information Commissioners Office, 2017) and justice (Taylor, 2017), all come into play.

Many existing analyses of fairness focus on the extent to which goods or opportunities are available to different individuals or groups, and whether existing disparities in the allocation of resources can be alleviated. This

This same literature on distributive fairness has acknowledged that it is hard to determine equal distributions of data or equal stewardship of data, since what constitutes equal – and who is taken into account among data subjects and analysts – depends on the situation of inquiry. For example, our interdisciplinary project aimed to analyse SMD to monitor the prevalence and location of hayfever symptoms across the UK. We used Twitter data seeking mention of hayfever symptoms to see if this would provide early warning of the effects of increased pollen levels. We successfully obtained ethics approval for this research, however none of the people who sent these tweets had been informed or consented to the use of their tweets for this purpose, including the people whose actual geographic location was identifiable at the time of sending the tweets. This was a relatively benign use of ‘anonymised’ data but was it a fair use? What would the equal distribution of data mean in this context? How does the lack of contextual information about the users other than their location (such as their age, gender and socio-economic status) affect research outputs? And if Twitter users were aware of such data reuse, would they change the ways in which they discuss hayfever on the platform, and what implications would this have for our research?

Questions of this kind emerge whenever SMD are used for knowledge production, and become particularly sensitive in relation to health research, with social media users expressing worries about potential discrimination when asked about the rights and wrongs of using their data for research (Kennedy et al., 2017). To address such issues, we need an understanding of fairness that places greater emphasis on data usage and its relation to individual and collective agency within society. Consideration of fairness needs to actively foster the use of data to produce knowledge that supports social agency and empowers vulnerable groups, thus explicitly targeting concerns around visibility and representation. This is where the concept of

In what follows, we aim to build on such literature and our own insights as data practitioners to answer a critical question for knowledge production activities grounded on the analysis of SMD: how can such research treat data fairly in ways that enable positive action? In other words, can specific practices and methods of SMD analysis foster distributive fairness and data justice? We propose to think broadly about the role of data within epistemic processes (i.e. processes relating to the conditions for knowledge creation) and to consider the forms of injustice tied to the use of data within research, thus linking

In this respect, the work of philosopher Miranda Fricker, though not specifically devised to address data management strategies, provides a helpful starting point. Fricker (2009) argues that evaluations of what is fair and just within society involve examining the forms of prejudice built into our ways of understanding the world (which can include tools, methods, knowledge claims and of course, data). She considers the extent to which our ways of knowing enable us to treat people in ways that are right or reasonable, and proposes to distinguish between two forms of epistemic injustice: (1)

In the world of data practices, examples of testimonial injustice appear in the choice of social media platforms as preferred data source, due to those platforms being the easiest and least expensive to access. A clear example is Twitter, which combines a relaxed approach to data re-use with the opportunity to download and re-use for free a sample of the data available on the platform on any given day. For publicly funded projects such as ours, the absence of upfront costs in accessing the data is a big carrot, which explains the predominance of Twitter as a data source for health-related research based on social media (Sinnenberg et al., 2017). This predominance makes it difficult to question the use of Twitter as a data source in the first place, despite its shortcomings; and to argue for alternative data sources requiring additional investment. This has created a prejudice in favor of Twitter data use within health research, with methods, software and training tools emerging that targeted specifically this data source and made it even easier to deploy, especially for newcomers to the field (Sinnenberg et al., 2017).

Hermeneutical injustice takes the form of privileging specific socio-demographic, cultural or political stances within data sources, as in the charged cases of migration or obesity figures. Consider studies that use Twitter data for health research without taking account of the types of people who do and do not use this platform, and thus of the bias built into their data source. Hermeneutical injustice happens whenever a published study mentions the fact that Twitter users are largely young, in professional occupations, and based in cities rather than rural areas, without however taking those biases into account in the rest of the analysis. Some studies may argue that such biases are not relevant in their case, e.g. when investigating availability and access to health care resources across a region. Attention to hermeneutical injustice however demands that assumptions made around whether or not a given dataset is representative, and of what, be examined empirically and in detail. What evidence is there that data collected on young urban dwellers represent the views and experiences of older people living in rural areas? And what are the implications of this set-up for the studies at hand?

The concepts of hermeneutical and testimonial injustice expose the significance of focusing on research methodology as a key locus for decisions around what constitutes data, for which purposes and audiences, and with which social and ethical implications. Perhaps surprisingly, researchers working in data science and related domains are not necessarily thinking of fairness as a factor underpinning their everyday technical choices and methods. Data fairness is often viewed as a legal and regulatory issue, whose resolution is in the hands of judiciary and policy bodies. This understanding of data fairness focuses primarily on consent and protection from harm, though legislation around equality of opportunity and unlawful discrimination is also relevant to how researchers use personal data (Clifford and Ausloos, 2018). Researchers are required to comply with this legal understanding of data fairness, which provides broad guidance on what to avoid when handling SMD (such as the publication of easily identifiable, sensitive information without the consent of the individuals concerned). However, legal frameworks do not provide concrete guidance on all aspects of research practice, especially since what legal precepts mean for everyday technical decisions unavoidably depends on the context of application.

A crucial complement to the legal approach is a social understanding of data fairness as a way to counter discrimination and decrease social divides (Fiesler et al., 2020; Veale and Binns, 2017; Zook et al., 2017). This involves considering the intention and approach of data practices, the extent to which different publics and stakeholders are engaged, the power relations at play and the role played by trust in facilitating or disrupting data access and mining. Though familiar to researchers who use big data, these considerations are still viewed as largely extraneous to research itself (Golder et al., 2017). Indeed, making data fair is often delegated to governance mechanisms (such as ethics committees, review boards and public engagement groups) that encourage awareness of potentially discriminatory mechanisms among researchers and wider society. These mechanisms, however, may fall short of providing guidance to researchers when it comes to technical data practices ranging from data cleaning to storage and modelling.

We propose that what is missing is an understanding of data fairness as part and parcel of the research process.

Implementing methodological data fairness means considering how legal and social concerns can be addressed within each stage of research, thus helping to ensure that potential gains are realised. This is critical to avoid unethical data uses as well as methodological problems in data analysis, especially since legal and institutional governance mechanisms struggle to keep up with rapidly developing methodologies and research findings (Halford and Savage, 2017), including potential harms arising from integrating different data sources (Leonelli, 2016). This does not guarantee the ethical use of data after a research cycle is complete, as researchers have limited control on how their work will be interpreted and re-used by others. However, attention to methodological data fairness can affect the infrastructures, expectations and commitments that accompany the data as they journey on, thereby helping to prevent immediate concerns and contributing towards longer term fairness. In this sense, methodological data fairness is an important component of scientific inquiry; as we show in the next section, asking what makes data use fair within everyday research practice is often equivalent to asking what makes data reliable, trustworthy and accurate.

Methodological data fairness at each stage of the research process

Planning and design of research

The first stage in achieving fair social media research relates to the fundamentals of the research; what is being asked, why, in what ways and for what purposes. Clarity in research goals informs robust design and data choices. In turn, the choice of data can affect and sometimes shift the goals of research (boyd and Crawford, 2012), a possibility that needs to be taken into account in the design and subsequent implementation of research – not least as part of Data Management Plans (DMPs).

Of further concern is evaluating the conceptual assumptions being made. It has been suggested that the apparent uncritical and a-theoretical nature of much big data and social media research, as well as a lack of self-reflexivity by researchers, has limited the development of the field and the utility of research outcomes (Tinati et al., 2014). Whether or not this is the case, we wish to problematise the widespread tendency among researchers to confuse the lack of well-established theories with the lack of conceptual assumptions. The lack of theory is understandable in a domain that is often data-driven and viewed as a precursor to the development of full-blown theories, but it does not mean that researchers do not make significant conceptual assumptions when developing their empirical research – such as the study of rural populations being irrelevant to the understanding of certain diseases, Twitter data being useful to identify the emergence of symptoms in a population, or social media posts being reliable. Those assumptions need to be identified and evaluated when designing and carrying out a study.

Although new sources of data and methodologies are continually being developed and used, established scientific practices must continue to apply (boyd and Crawford, 2012). The intentions of the study, the assumptions being made about the phenomenon in question, and who may be affected or involved, should be explicitly considered throughout the research and reported in the final stages (Floridi, 2011). These questions may not always be clearly answerable, but posing them can help researchers anticipate the potential for harm or dis-benefit (Zook et al., 2017). This reflexivity should extend to the scientific context of researchers. The epistemic culture and commitments already in place in the research environment may need to be challenged to ensure that practices are lawful and fair.

Working with stakeholder and reference groups and, where appropriate, with diverse publics, can help refine research plans and surface unknown unknowns. For instance, it may be useful to poll a group of Twitter users to determine whether they are likely to make accurate tweets, or whether they tend to post ‘fake facts’ as a joke or for other purposes. Such a consultation may inform the extent to which researchers rely on Twitter content, how they structure data mining, and whether they use other data sources to triangulate findings. This also applies to the secondary use of data and to the application of any tools developed. Indeed, few tweets have geo-referenced data and yet in our past research, we have extrapolated from those few to all tweets in order to link them with environmental data, without reflecting on how representative the people with geo-referenced data may be of all Twitter users. This turned out to limit the re-usability and reliability of our results in the longer term.

In academic and some institutional settings, the use of SMD for research presents challenges to the established ethical review systems. There is much variation as to whether or not ethical review is sought; and even where established practices exist, approaches and standards are contested (Metcalf and Crawford, 2016; Vitak et al., 2016). Challenges include the implications of who or what is considered to be the research ‘participant’ and the ‘distance’ of researchers from data providers; a broadening of what is understood to be data; and the balancing of potential gains against uncertain harms (Neuhaus and Webmoor, 2012).

The highly dynamic nature of the social media industry poses further challenges to maintaining institutional oversight of fairness. Platforms change their Application Programming Interface (API) with little or no notice, and what was once an acceptable and widely used data source can suddenly become prohibited. Changes are often not communicated widely to data users, except in the instrumental and technical aspects of how (e.g.) computer programs used to access the data must be altered to continue functioning; changes in the ‘back end’ functioning of the API that do not affect the direct interface may go unnoticed. Social media corporations protect commercially valuable information (such as algorithms used to control access and presentation of data, as well as complete raw datasets). Ethical oversight frequently operates in the absence of full information about the data and how they have arisen.

This has led to calls for an ‘agile’ ethics (Neuhaus and Webmoor, 2012) and questions as to whether researchers should rely solely on committees for their ‘ethical compass’ (Henderson et al., 2013). Promising novel avenues for oversight focus on ‘ethics ecosystems’, the sharing of information between ethics boards, regular reviews of the research process (Leonelli, 2016; Samuel et al., 2018), and more explicit consideration of fairness (Taylor and Pagliari, 2017). Ethics and data governance within research institutions need to capitalise on wider expertise to support methodological data fairness.

Data choices, sampling and acquisition

Key considerations in undertaking fair social media research relate to data acquisition. We already stressed that Facebook, Instagram, WhatsApp, SnapChat and other major platforms are not available for research due to privacy constraints and lack of access (Fielsler et al., 2020). This creates a major distortion to what – and who – can be studied using SMD, which needs to be taken into account in research design.

The fact that some forms of SMD are available does not mean that they are

We have surprisingly little information on the demographics of social media use at a population level, a situation compounded by the rapid evolution of usage patterns. Demographics may change by topic, geography and time of day within the same platform; for example, English-language posts on Twitter may come from US, UK or Australian citizens depending on when people are awake. Concerns have been raised about the trustworthiness of information sources (Floridi, 2011), with important implications for the research findings thereby derived. The authenticity of social media users – especially given the abundance of fake, pseudonymous and automated ‘bot’ accounts – is difficult to verify even with specialised identification tools (Hunter et al., 2018). Depending on the analysis performed and the intended purpose of research, these accounts may be seen as extraneous noise or as an accepted part of the social media milieu (Hunter et al., 2018). Attention to hermeneutical injustice demands that efforts are made to understand the population from which SMD are sampled, especially when the analysis aims to understand the beliefs, actions and intentions of socio-demographic groups and the results are generalised and used to guide decision-making.

The motivations of social media users cannot be verified without in-depth qualitative research. Except for specific citizen science initiatives (Tempini, 2017), few users post information intended to inform research. Some researchers take user consent to the Terms of Service (ToS) of the relevant platforms as a substitute for consent to data use, and exercise great care in staying within the boundaries determined by such a ToS. For instance, during the social sensing project, we stopped using Instagram data, despite their potential usefulness, since ToS stated that data should not be harvested at scale using automated methods. Although we could have gotten the data using a program that simulated many manual searches, we concluded that this might contravene the reasonable expectations of the user.

This is not a universal position regarding the ethics of restrictive ToS, with some researchers questioning the underlying power dynamics between user, platform and research (Fiesler et al., 2020). Moreover, both social media users and researchers are uncertain about what norms should apply to the use of their data (Nissenbaum, 2009; Zimmer, 2010). It cannot be assumed that social media users have read, understood or acted upon a ToS (Vitak et al., 2018); and consent from the original poster may not cover information acquired on their social networks due to the inherently linked nature of social media material (Hunter et al., 2018). Similar issues have emerged in relation to bioethics (Anderson, 2015).

Different attitudes to SMD mining emerge from evaluations of whether practices were fair or not, whether or not the data are perceived to be ‘private’ or ‘public’, and in relation to transparency of the research process (Kennedy, 2016; Kennedy et al., 2017). The very retrieval of data via an API is ‘shaped by the methods that researchers use to access data, the economics and practicalities for the companies in sharing data, with whom and on what basis, both shaped by legal and sometimes even ethical considerations’ (Halford et al., 2017). Opacity in the storage, display and data provision/distribution protocols of social media companies limits understanding of what SMD represent. This lack of clarity is likely to introduce hidden biases (González-Bailón et al., 2014; Morstatter et al., 2013). For example, when geolocating data extracted from applications such as Twitter, it is unclear how the location is set and measured; the Twitter platform allows precise location information from smartphone GPS to be attached to individual tweets, but in the absence of GPS may utilise more general location information from the user profile. This can generate inequities in sampling and representation, depending on the scale and level of resolution of the location categories used (e.g. neighbourhood vs. street vs. household). As long as little information is provided on how these algorithms work for specific social media, it is difficult to evaluate what assumptions are being made and how they may affect the research undertaken.

Data processing and analysis

Recognition of the potential for data processing to result in discriminatory outcomes has prompted greater consideration of the need to ensure data fairness (Berendt and Preibusch, 2017). Social media produces enormous quantities of data, much of which will be irrelevant to the research underway; this extraneous material must be filtered, or ‘cleaned’ (Kavanaugh et al., 2012). Computational methodologies, such as machine learning algorithms, can discriminate data according to predictive characteristics. However, machine learning is subject to similar sources of bias as other forms of data processing (Horvitz and Mulligan, 2015; Veale and Binns, 2017).

Supervised ML begins with a human-curated training dataset, from which the machine learns to detect patterns of interest. Thus, it can amplify or distort any biases used by human curators to produce the training data. Unsupervised ML finds statistical patterns in the data without much human involvement – but its outputs are still as good as the data sample to which it is applied. Relatedly, many ML algorithms are inherently difficult to explain even if code is available for study, since decisions are decentralised and there is no clear IF-THEN logic (Munoz et al, 2016); and while in academic work code-sharing is common, in commercial uses of machine learning, the code is often proprietary.

The extrapolation and identification of individual characteristics (e.g. political affiliations) through the modelling of SMD are becoming increasingly accurate (Kosinski et al., 2013), with the linking of data from separate social media sources further enhancing these predictions (Leavey, 2013) and related infringement of privacy laws (Horvitz and Mulligan, 2015). Machine learning can also reveal information the social media users had not consciously shared. Codes of good practice regarding data processing and use, including from burgeoning research fields such as Discrimination-aware Data Mining (DADM) and Fairness, Accountability and Transparency in Machine Learning (FAT ML), are being developed (Berendt and Preibusch, 2017) but remain challenging to apply given ‘poorly designed matching system, decision-making systems that assume correlation necessarily implies causation, and data sets that lack information’ (Munoz et al., 2016). As noted, the processing of SMD should avoid stripping data of crucial contextual information.

Data storage, stewardship and management

The creators of the FAIR principles emphasised that ‘good data management is not a goal in itself, but rather is the key conduit leading to knowledge discovery and innovation’ (Wilkinson et al., 2016). Good data stewardship is crucial in safeguarding public trust and maintaining fairness in social media research, and yet what constitutes good practice in the management of new forms of big data, including social media, is not well defined or acted upon (Auffray et al., 2016; Kinder-Kurlanda et al., 2017). Ostensibly, SMD should be treated as other potentially sensitive datasets with the interests of the ‘participant’ protected, including friends or family mentioned or implicated. The data and associated meta-data should be securely stored in line with predesigned DMPs. As we noted however, SMD raise specific challenges with respect to basic tenets of ethical data management such as consent and confidentiality.

To mitigate these challenges, bespoke DMPs and systems architecture must be designed towards secure data storage and retrieval. Kinder-Kurlanda et al. (2017), for example, developed robust methodologies to archive geotagged Twitter data, while Collmann et al. (2016) designed DMP guides which prompt researchers to reflect on the implications of data re-use – including how to manage the deletion of data in relation to the ‘right to be forgotten’ of GDPR. A related challenge is posed by the restrictions constraining the sharing of SMD beyond the original posting. Twitter for instance allows researchers to download and store tweets via their API, but not to pass the tweets to anyone else. A common research practice is to share lists of tweet ID numbers and then query the Twitter API to retrieve the full tweet. This gives Twitter the ability to refuse to provide the tweet associated with an ID, which – while complying with GDPR regulations - makes it difficult for studies based on these data to be scrutinized and replicated.

Reporting and publishing

This brings us to the issue of reporting. Although there are as yet no systematic studies of the reproducibility of social media research, assessments of similar fields concluded that it is ‘difficult if not impossible’ to independently reproduce studies from published papers (Olorisade et al., 2017). This likely applies to research using SMD which is published with ‘remarkably little methodological consideration of the data used’ (Halford et al., 2017). Lack of detail and transparency hampers researchers’ ability to interrogate the suitability of research components and veracity of the findings, hence limiting the fruitfulness of social media research and assessments of data fairness (Zimmer, 2010). The fields using SMD tend to perpetuate existing disciplinary habits for ethics and data governance. Researchers in public health or epidemiology are more sensitive to the demands of data fairness, while computer science has little history of engagement in this area. Nevertheless, much of the current social media research happens in computer science departments, with researchers doing social science research on public health despite not having research training in that area.

To improve methodological data fairness, researchers need to admit and report the limits of their understanding of the data at the point of publication. As noted, how data are created, curated, sampled and provided through APIs is often unclear, nor is there a good understanding of systemic biases in social media use. Institutions and venues in which social media research is undertaken should encourage their staff to recognise and investigate these issues. Another concern is the lack of recognised standards for assessing the quality of social media research (Cai and Zhu, 2015). The sheer variety of ‘methods, materials, goals, techniques used… as well as the diverse ways in which data can be evaluated depending on the goals of the investigation at hand’ present a significant challenge to performing peer review (Leonelli, 2017). Finding appropriate ways to share SMD may help in this respect, while also lowering the digital divides in data access (Kinder-Kurlanda et al., 2017; Weller and Kinder-Kurlanda 2016). Making software (and related parameters) open source also improves the scrutiny of the research (Jiménez et al., 2017; Vasilevsky et al., 2017).

Conclusion: Data fairness fosters good research practice

There is no doubt about the critical importance of the FAIR principles to effective data management. We have highlighted the equally critical importance of monitoring what happens once FAIR data mined from social media are being used to generate new knowledge; and particularly what the notion of fairness means in SMD mining. We argued that implementing methodological data fairness contributes to better and more responsible uses of SMD for health research, in ways that complement the FAIR framework. Methodological data fairness has a broader remit than the CARE principles, which focus strongly on ethics but restrict their scope to the datafication of indigenous knowledge. It also embraces data that are not necessarily filtered by large data repositories such as those targeted by the TRUST principles, though arguably all social media platforms could be viewed as large databases and should be administered accordingly. The fact that health research based on social media does not necessarily fall under the remit of the CARE and TRUST principles is one of many reasons why ethical concerns in this area are often overlooked by practitioners, especially within computer science and data science departments. Our focus on methodological data fairness aims to help remedy this situation, by stressing the central role of ethics towards achieving reliable and innovative scientific findings.

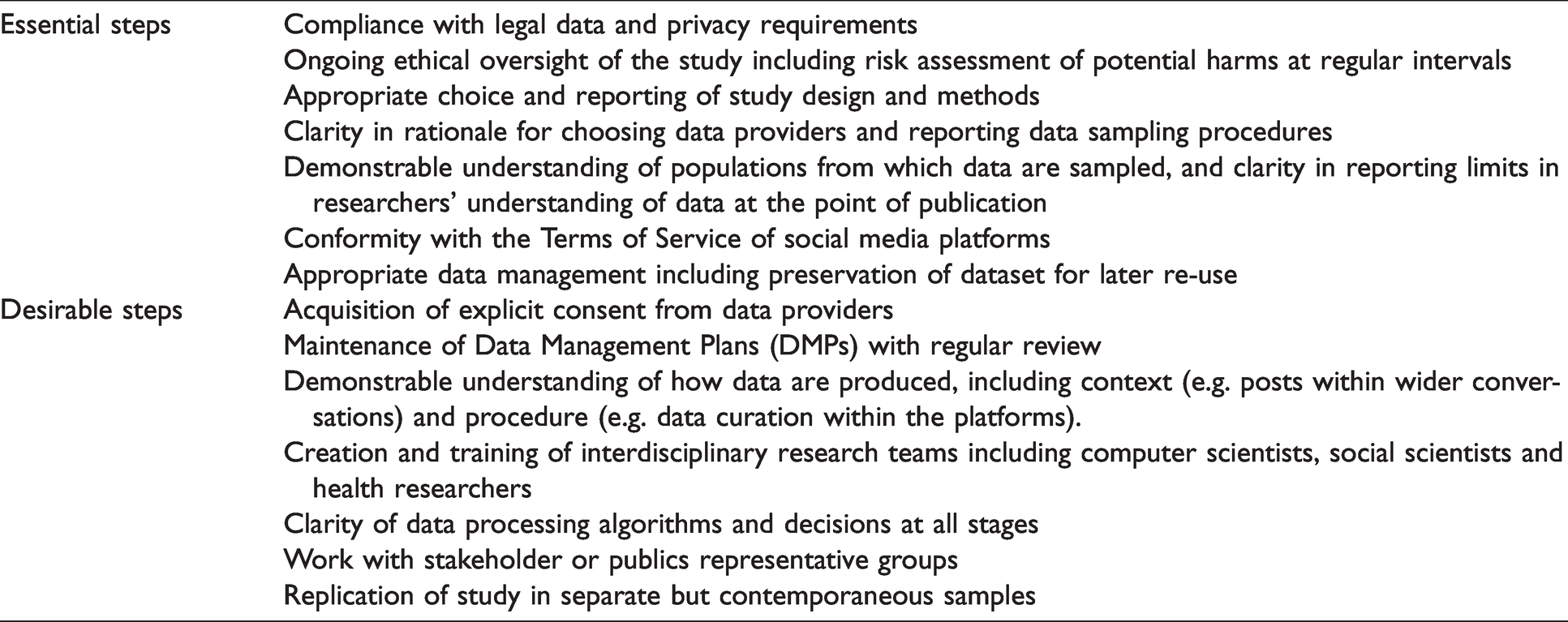

At the start of our analysis, we highlighted two types of epistemic injustice that can affect data analysis and compromise results: testimonial and hermeneutical. Our discussion has shown that efforts to implement methodological data fairness at each stage of the research process, summarised in Table 1, help countering these forms of injustice. Testimonial injustice can be addressed by data practices that critically engage existing norms around what counts as appropriate, relevant or adequate evidence. Steps towards countering testimonial injustice include: considering whether platforms such as Twitter are a sufficient source of evidence for understanding population beliefs, preferences and behaviors; and adopting ‘agonistic’ machine learning, where ‘companies or governments that base decisions on machine learning must explore and enable alternative ways of datafying and modelling the same event, person or action’ (Hildebrandt, 2019). Hermeneutical injustice can be addressed by data practices that leverage diverse sources of knowledge to counter existing prejudice – for example, data collection and mining techniques that seek to mitigate existing digital divides and the privileging of specific socio-demographic, cultural or political stances within data sources.

Steps towards achieving methodological data fairness in social media research.

We noted that data fairness needs to be promoted at a systems level by targeting a variety of stakeholders – in the case of social media research, the owners of online platforms through to users of research findings. The opacity of social media systems, from the implications of user interactions with the platforms to the nature of data gathered through APIs, is a serious challenge. Dialogue with social media users can help clarify expectations and suitable processes for gaining consent. For researchers it is also essential to provide incentives like suitable funding calls, and publishing guidelines demanding rigorous ethical review. This creates a research culture where taking the time to consider data fairness becomes normal practice.

This is particularly relevant given the pressure that the COVID pandemic has placed on social media researchers working at the interface of public health. It has been suggested that researchers should relax their vigilance on data fairness, in the interest of speeding up the production of results that may help to respond to the emergency. The special circumstances linked to disasters have long been noted by data ethics analysts, with many suggesting that those undertaking big data research should know when to break rules (Zook et al., 2017: 7–8). We are uncomfortable with this suggestion. While the urgency of addressing a pandemic is all too obvious, the hasty production of unreliable results is not a useful scientific response (Leonelli 2021; O’Brian 2020). We argued that research outputs are more reliable, reproducible and easier to build upon when researchers pay attention to issues such as sampling, accurate reporting of the scope and limits of projects, and proper handling of consent – in other words, when researchers implement methodological data fairness. These efforts result in better science and more actionable evidence for decision-making.

We conclude that making research data fair as well as FAIR is inextricably linked to concerns around the adequacy of data practices. ‘Making data fair’ means critically identifying and regularly assessing the extent to which data practices are likely to participate in (or sometimes create) social stratification, clustering and surveillance of individuals or communities, and with what possible implications. This requires the implementation of processes of accountability, integrity, and justice as integral to the whole research process – not as an add-on, an institutional hoop to be jumped through or as a discrete stage in preparation or follow-up. Viewing fairness as part of the technical concerns underpinning data analysis is still far from common, as the exclusion of fairness from the FAIR principles exemplifies. Methodological data fairness helps to guarantee not only ethical safeguards, but also – and more fundamentally – the scientific soundness of research practices and outcomes. It is imperative that data scientists working with SMD engage actively in countering pernicious forms of data injustice that can severely damage the credibility and veracity of their knowledge claims.