Abstract

Keywords

Critical thinking (CT) stands as a fundamental cognitive capacity essential for successful integration into contemporary knowledge societies of the 21st century (Alsaleh, 2020; Kocak et al., 2021; Nussbaum et al., 2021; Wechsler et al., 2018). It entails developing robust decision-making and problem-solving skills applicable in diverse practical contexts within an increasingly complex environment, while also addressing significant issues within specific disciplinary domains (Butler et al., 2012; Dwyer et al., 2014; Niu et al., 2013). Recent studies highlight that good critical thinkers demonstrate better decision-making capabilities, even under pressure (Ellerton, 2022; Gambrill, 2006; Nussbaum et al., 2021); exhibit fewer cognitive biases (Facione & Facione, 2001; Hong & Choi, 2015; Georgiadou et al., 2018); engage more actively as well-informed citizens (Shutaleva, 2021); and frequently possess enhanced employability prospects (Dwyer et al., 2014). The multifaceted attention that CT has garnered from diverse scholars and educators interested in the development of thinking skills has left it a competence with little conceptual and methodological consensus in its measurement instruments (Bernard et al., 2008; Niu et al., 2013). Consequently, multiple conceptualizations of CT persist, contingent upon the field or disciplinary context under study (Butler et al., 2012; Ossa-Cornejo et al., 2017; Valenzuela & Nieto, 2008a).

Existing theoretical frameworks characterize CT as a purposeful, reasoned, and goal-directed thinking process, comprising a set of fundamental cognitive skills (An Le & Hockey, 2022; Black, 2012; Dwyer et al., 2014; Nieto & Saiz, 2008; Valenzuela & Nieto, 2008a). These competences enable individuals to discern and interpret information (Valenzuela & Nieto, 2008a), scrutinize its validity, assess its reliability, interrogate its origins (Halpern, 2014; Shutaleva, 2021), and construct coherent explanations and conclusions (Nussbaum et al., 2021; Schroyens, 2005).

While the cognitive aspect predominates (Ossa-Cornejo et al., 2017), CT cannot be solely delineated by its constituent skills, as proficiency in these skills does not guarantee adept critical thinking (Nieto & Saiz, 2008; Saiz et al., 2015; Wechsler et al., 2018). Moreover, individuals must discern when it is appropriate to use these skills and be willing and motivated to do so when necessary (Dwyer et al., 2014; Ku, 2009; Valenzuela & Nieto, 2008a). Thus, the behavioral component of CT manifests in the synergy between these components and their practical application (Halpern, 1998, 2014).

In an attempt to resolve the conceptual discrepancy, an interdisciplinary and international panel of CT experts formulated the Delphi Report (American Philosophical Association [APA], 1990), presenting CT as a construct organized around two dimensions: cognitive abilities and affective dispositions. CT is defined as: Intentional, self-regulatory judgment resulting in interpretation, analysis, evaluation, and inference, as well as an explanation of the evidential, conceptual, methodological, criteriological, or contextual considerations on which that judgment is based (Facione, 1990, p. 3).

This conceptual perspective underscores CT as a multidimensional construct, consolidating the principal components agreed upon in current literature and representing the widely accepted definition of proficient CT (Alsaleh, 2020; Beckie et al., 2001; Dwyer et al., 2014; Miele & Wigfield, 2014; Sorensen & Yankech, 2008; Wechsler et al., 2018). Under this approach, CT comprises six core cognitive skills: interpretation, analysis, evaluation, inference, explanation, and self-regulation, each with its respective sub-skills, with analysis, evaluation, and inference holding particular significance (Dwyer et al., 2014); and two affective dispositions: approach to life and living and approach to specific themes, questions, or problems, along with their sub-components (Facione, 1990, 2011; Ossa-Cornejo et al., 2021; Valenzuela & Nieto, 2008a). The definition of each component is presented below (Table 1).

CT’s Cognitive Abilities Definition (Facione 1990, 2011).

Furthermore, the affective dispositions characterizing proficient critical thinkers are: curiosity regarding diverse issues; acquiring and maintaining well-rounded knowledge; readiness to recognize and capitalize on opportunities for critical thinking; trust in structured deliberative processes; self-assurance in reasoning abilities; receptiveness to diverse perspectives; adaptability in considering alternative viewpoints; comprehension of others’ perspectives; impartiality in evaluating reasoning; honesty in confronting personal biases, prejudices, stereotypes, and inclinations; caution in suspending, formulating, or revising judgments; willingness to reevaluate positions where honest introspection warrants change; clarity in articulating questions or concerns; organization in handling complex tasks; diligence in seeking pertinent information; rationality in selecting and applying standards; attentiveness to current issues; perseverance in the face of challenges; and a degree of precision appropriate to the subject and context (Facione, 1990, 2011).

A literature review revealed prominent instruments for assessing CT based on the Delphi panel’s definition, including the California Critical Thinking Skills Test (CCTST), the Test for Everyday Reasoning (TER), and the Critical Thinking Disposition Inventory (Facione, 2011; Ricketts & Rudd, 2004). However, none of these instruments measures both dimensions of CT simultaneously: the first two evaluate only cognitive skills, while the third focuses solely on the related dispositions.

Other instruments that are not based on the Delphi panel’s framework, such as the Watson-Glaser Critical Thinking Appraisal (Watson & Glaser, 1980), the Ennis-Weir Critical Thinking Essay Test (Werner, 1991), the Cornell Test of Critical Thinking (Ennis & Millman, 2005), the Halpern Critical Thinking Assessment using Everyday Situations (Halpern, 1998), and the Salamanca Critical Thinking Test (Rivas & Saiz, 2012), evaluate CT solely based on its cognitive abilities. Meanwhile, tests like the Motivational Scale of Critical Thinking (EMPC) (Valenzuela & Nieto, 2008b) are grounded solely in motivational dispositions.

Given the contemporary understanding of CT as a synthesis of highly interrelated skills and dispositions operating jointly and complementarily (Bernard et al., 2008; Ossa-Cornejo et al., 2017), the lack of psychometric tests that assess CT as a multidimensional concept comprising cognitive skills and affective dispositions, and notably, the absence of Latin American assessments (Ossa-Cornejo et al., 2017), the present study aimed to design and validate a CT assessment scale based on the theoretical framework provided by the Delphi report.

Method

Design

The present study adopts a quantitative empirical approach with an instrumental design, aiming to develop and validate a critical thinking assessment scale (Ato et al., 2013).

Participants

A non-probabilistic convenience sampling method was employed virtually, resulting in a sample of 258 individuals (55.04% women) aged between 18 and 63 years (

Procedure

Initially, a literature review identified the APA Delphi Panel CT conceptualization as the most appropriate framework (APA, 1990). The primary CT components were delineated, and a specifications table was developed, allocating each factor’s percentage (%) load and the appropriate number of items (see Appendix A). Expert validation was then conducted independently by five psychologists, including three Ph.D. holders and two candidates, all possessing extensive research experience pertinent to the design and subject matter of the present study. Additionally, one expert specialized in assessment and evaluation, while another had considerable expertise in university teaching, research methodology, and psychometrics. The remaining three experts specialized in thinking skills and cognitive development, teaching and consulting in life-skills education, and linguistic and decision-making processes, respectively. The items were evaluated based on relevance, clarity, sufficiency, and necessity using a scoring scheme adapted from Escobar-Pérez and Cuervo-Martínez (2008). The scores underwent analysis using Lawshe’s Content Validity Index (CVI), with values exceeding 0.6 deemed satisfactory (Tristán-López, 2008). Subsequent adjustments were made to the scale based on the evaluation results.
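The content validity step can be illustrated with a minimal sketch. The source does not state which variant of Lawshe's index was computed, so both the classical ratio, (n_e − N/2) / (N/2), and a simple proportion of agreement, n_e / N, are shown as assumptions. The item names, judge counts, and the application of the 0.6 cutoff to the proportion form are hypothetical and serve only as an example.

```python
from typing import Dict


def lawshe_cvr(n_agree: int, n_judges: int) -> float:
    """Classical Lawshe content validity ratio, ranging from -1 to 1."""
    if n_judges <= 0 or not 0 <= n_agree <= n_judges:
        raise ValueError("n_agree must lie between 0 and n_judges")
    half = n_judges / 2
    return (n_agree - half) / half


def proportion_agreement(n_agree: int, n_judges: int) -> float:
    """Share of judges endorsing the item, ranging from 0 to 1."""
    if n_judges <= 0 or not 0 <= n_agree <= n_judges:
        raise ValueError("n_agree must lie between 0 and n_judges")
    return n_agree / n_judges


# Hypothetical data: number of judges (out of 5) rating each item as adequate.
N_JUDGES = 5
CUTOFF = 0.6  # threshold reported in the text; its pairing with this variant is assumed
ratings: Dict[str, int] = {"item_01": 5, "item_02": 4, "item_03": 2}

decisions = {
    item: "keep" if proportion_agreement(n, N_JUDGES) > CUTOFF else "review"
    for item, n in ratings.items()
}

for item, n in ratings.items():
    print(item, round(lawshe_cvr(n, N_JUDGES), 2), decisions[item])
```

With a five-judge panel the index can take only a few distinct values per criterion, so small changes in agreement move an item across the cutoff.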

The scale was administered and validated using the Microsoft Forms tool. Participants were required to confirm eligibility, provide demographic information (age in years, sex, and academic level), and follow instructions for responding to the scale items. Validity and reliability tests were then conducted on the collected data, and a database was established.

Data Analysis

To analyze the internal structure of the test, sample adequacy (KMO), Bartlett’s sphericity coefficient (
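As an illustration of the two factorability checks named above, the following sketch computes an overall Kaiser-Meyer-Olkin (KMO) index and Bartlett's test of sphericity from a respondents-by-items matrix. It is not the authors' analysis: the data are simulated, the function names are hypothetical, and only the sample size of 258 is taken from the text.

```python
import numpy as np
from scipy.stats import chi2


def bartlett_sphericity(data: np.ndarray):
    """Bartlett's test that the item correlation matrix is an identity matrix."""
    n, p = data.shape
    corr = np.corrcoef(data, rowvar=False)
    _, logdet = np.linalg.slogdet(corr)  # log-determinant, numerically stable
    statistic = -(n - 1 - (2 * p + 5) / 6) * logdet
    dof = p * (p - 1) / 2
    return statistic, dof, chi2.sf(statistic, dof)


def kmo_overall(data: np.ndarray) -> float:
    """Overall KMO: squared correlations relative to squared partial correlations."""
    corr = np.corrcoef(data, rowvar=False)
    inv = np.linalg.inv(corr)
    scale = np.sqrt(np.outer(np.diag(inv), np.diag(inv)))
    partial = -inv / scale  # partial correlations given all other items
    off_diag = ~np.eye(corr.shape[0], dtype=bool)
    r2 = np.sum(corr[off_diag] ** 2)
    a2 = np.sum(partial[off_diag] ** 2)
    return r2 / (r2 + a2)


# Simulated example: 258 respondents, 6 items sharing one latent factor.
rng = np.random.default_rng(0)
latent = rng.normal(size=(258, 1))
items = latent + rng.normal(scale=1.0, size=(258, 6))

stat, dof, p_value = bartlett_sphericity(items)
kmo = kmo_overall(items)
print(f"Bartlett chi2={stat:.1f}, df={dof:.0f}, p={p_value:.3g}, KMO={kmo:.2f}")
```

A KMO close to 1 and a significant Bartlett statistic are conventionally read as support for proceeding with factor analysis.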

Reliability analysis involved evaluating McDonald’s ω, Cronbach’s alpha (α), Guttman’s λ6, and Greatest Lower Bound (GLB) statistics for the complete test and each factor. Values exceeding .7 were taken to indicate internal consistency (Chadha, 2009). In addition, sample normality was verified with the Kolmogorov-Smirnov test. Because normality was not found, Pearson’s product-moment correlations (
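Of the reliability coefficients listed, Cronbach's alpha is the simplest to reproduce by hand. The sketch below is only an illustration under stated assumptions: the response matrix is hypothetical, the function name is not from the source, and the other coefficients (ω, λ6, GLB) are not covered because they require a fitted factor model or optimization.

```python
import numpy as np


def cronbach_alpha(scores: np.ndarray) -> float:
    """Cronbach's alpha for a respondents-by-items score matrix."""
    k = scores.shape[1]
    if k < 2:
        raise ValueError("at least two items are required")
    item_variances = scores.var(axis=0, ddof=1)      # variance of each item
    total_variance = scores.sum(axis=1).var(ddof=1)  # variance of the sum score
    return (k / (k - 1)) * (1 - item_variances.sum() / total_variance)


# Hypothetical responses: 5 respondents, 4 Likert-type items.
scores = np.array([
    [4, 5, 4, 5],
    [2, 3, 2, 2],
    [5, 5, 4, 4],
    [1, 2, 1, 2],
    [3, 3, 3, 4],
], dtype=float)

alpha = cronbach_alpha(scores)
print(f"alpha = {alpha:.2f}, meets .7 criterion: {alpha > 0.7}")
```

The same function can be applied to the columns belonging to each factor to obtain per-factor estimates alongside the estimate for the complete test.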

Ethical Considerations

This research received approval from the Research and Ethics Subcommittee of the researchers’ Faculty of Psychology, with record number 158. Furthermore, participants’ rights were upheld throughout the research, as their participation was entirely voluntary, and their dignity, integrity, privacy, and autonomy were all maintained. They were provided with the opportunity to give informed consent before the questionnaire was administered, which included information about the study’s authors, its purpose, justification, the advantages of participating, the procedure to be followed and confidentiality and anonymity agreements (American Psychological Association (APA), 2017).

Participants were assured of no risk to their well-being, in accordance with Article 11 of Resolution 8430 of 1993 (Colombian Health Ministry, 1993), and their data were used strictly for research purposes, with no feedback provided on the results due to the ongoing validation process.

Results

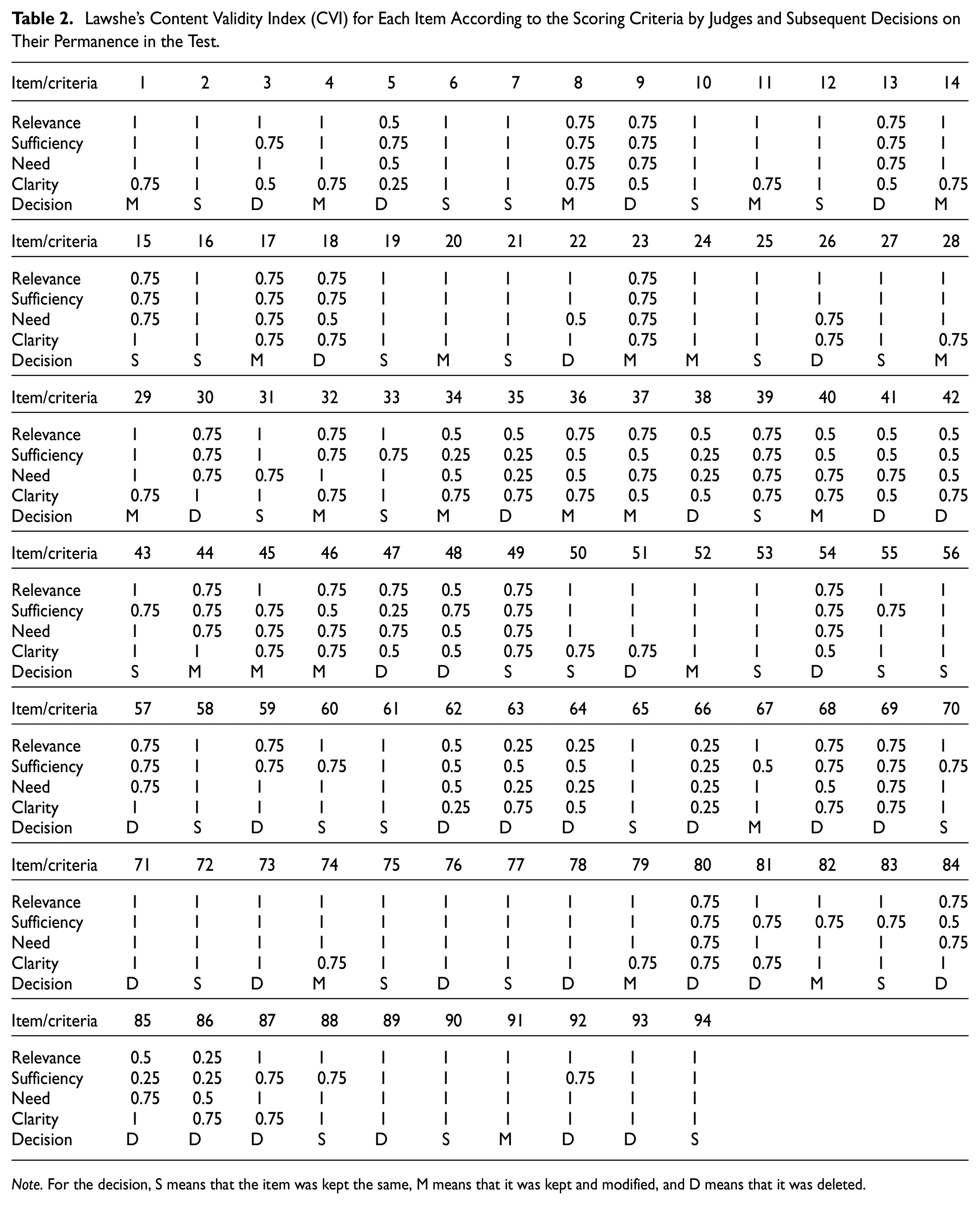

The specifications table, constructed in alignment with the two dimensions of Critical Thinking (CT) proposed by the Delphi report and its sub-components, guided the development of the Critical Thinking Evaluation Scale (CTES) (see Appendix A). Results of the validation process by expert judges of the corresponding items are shown in Table 2.

Lawshe’s Content Validity Index (CVI) for Each Item According to the Scoring Criteria by Judges and Subsequent Decisions on Their Permanence in the Test.

Based on the obtained Content Validity Index, the validation process by expert judges resulted in the deletion of 22 items (CVI < 0.6), the retention of 32 items (CVI > 0.7), and the modification of 25 items based on qualitative feedback from each judge. Two motivational disposition sub-categories were removed because none of their items met the minimum acceptable value on two or more rating criteria. Additionally, items with lower frequency CVI ratings of 1 or 0.75 were deleted to maintain the percentage loadings of the original theoretical proposal. Consequently, the scale comprised 57 items for validation and administration to 258 participants. Factor analysis was conducted to examine the underlying factor structure of the CTES (see Table 3).

Correlation Matrix of the Exploratory Factor Analysis.

The final scale consisted of 17 items distributed across 2 factors: Factor 1 comprised 8 items, while Factor 2 included 9 items. Reliability values for each factor and the overall scale demonstrated high internal consistency and appropriate reliability (see Table 4).

Reliability Statistics of the General Test and Each Factor.

All statistics indicated values exceeding .8, signifying robust internal consistency. Positive and significant correlations were observed within both factors (

Item Hypothetical Elimination and Item-Test Correlation.

According to the results, the cumulative proportion of variance explained by the final scale was 40.2%

Discussion

This study aimed to design and validate the Critical Thinking Evaluation Scale, acknowledging the pivotal role of critical thinking (CT) in 21st-century society, as underscored by various scholars (Alsaleh, 2020; Kocak et al., 2021; Nussbaum et al., 2021; Wechsler et al., 2018). Despite the acknowledged significance of CT, there remains a notable scarcity of psychometric instruments that comprehensively assess it as a multidimensional construct (Ossa-Cornejo et al., 2017). Therefore, our endeavor sought to address this gap by constructing a scale grounded in the theoretical framework provided by the Delphi panel, which integrates the main components of CT agreed upon in the literature: cognitive skills and affective dispositions.

Our methodology involved constructing a specifications table based on CT components, followed by item construction, expert validation, and analysis using Lawshe’s Content Validity Index. After adjustments, the scale was administered to a sample of 258 Colombian individuals. Subsequently, the assumptions of sample adequacy (KMO), Bartlett’s sphericity, and collinearity were confirmed, and exploratory factor and reliability analyses were conducted.

The results yielded a CT evaluation scale comprising 17 items distributed across two factors, demonstrating high indices of general reliability and supporting the accuracy and internal consistency of the test (Chadha, 2009). Factor 1 comprises 8 items and Factor 2 comprises 9. Factor 1, termed Analytical Ability, primarily encompasses cognitive skills related to evaluation and analytical information processing (6 of 7 items), while Factor 2, termed Argumentative Ability, encompasses a distribution between motivational dispositions (3 items) and cognitive skills, reflecting the strategic application of skills and cognitive strategies in generating and utilizing information.

These findings align with established definitions of CT as an active and skillful application, analysis, and evaluation of information (Alsaleh, 2020; Choy & Cheah, 2009; Nussbaum et al., 2021; Paul & Elder, 2003; Paz et al., 2010; Tung & Chan, 2009). Moreover, this scale ensures comprehensive coverage of the construct by incorporating both cognitive skills and affective dispositions within a unified measurement framework, marking a significant contribution to the existing literature. Notably, this scale represents a pioneering effort within the existing literature as the first to encompass CT as a multidimensional construct. The validity of the instrument is supported by its internal structure, as evidenced by the alignment between factor analysis clusters and the theoretical proposal, high explained variance, expert judgment validation, and adequate item-test correlations (Barraza, 2007).

The only initially included component of CT whose items are absent from the scale’s final version after the corresponding analyses is the cognitive skill of self-regulation. However, while some literature suggests including self-regulation as a metacognitive component of CT (Facione, 1990), the scale’s theoretical coverage encompasses this aspect within its broader framework. Moreover, empirical evidence suggests that metacognition, while related, constitutes a distinct cognitive process that enhances the direction and prediction of CT outcomes (Choy & Cheah, 2009; Dawson, 2008; Dwyer, 2011; Dwyer et al., 2014; Ghanizadeh, 2011; Heydarnejad et al., 2021; Kuhn & Dean, 2004; Magno, 2010; Melsert & Bicalho, 2012).

The present study has limitations, including the lack of predictive validity testing and convergent validity analysis. Since this study marks the first instance within the current literature review of designing a scale covering the Delphi panel’s CT conceptualization thoroughly, there was no endeavor to obtain validity evidence based on the response process and other variables. Therefore, it is imperative to conduct predictive validity studies with other variables, such as measuring and correlating CT with academic performance across various knowledge areas or comparing CT-trained and untrained individuals. Similarly, only exploratory factor analyses were performed because the primary aim was to design a scale whose metric qualities were being tested for the first time. Consequently, future research should validate this factorial structure through confirmatory factor analysis with independent samples in diverse contexts. Another limitation of this study is the absence of a convergent validity analysis with other established measures of critical thinking. Although the present study found no instruments that comprehensively cover all dimensions, it is advisable to apply the current scale alongside others to assess the correlation between their measurements.

In conclusion, this research presents a robust and reliable psychometric instrument for evaluating CT in terms of analytical and argumentative skills and dispositions within the Colombian population. The scale was named the Critical Thinking Evaluation Scale (CTES), and an application-ready version and norms for scoring and interpretation are provided, facilitating its use in various contexts to enhance CT skills and motivational dispositions (see Appendices B, C, and D). Furthermore, critical thinking (CT) is a fundamental skill for students, enabling them to effectively plan their learning, evaluate their performance, and monitor their progress (Silva & Rodriguez, 2011; Alwehaibi, 2012). This skill is equally applicable in scientific and business contexts (Lin, 2014). Moreover, within organizations, strong CT abilities facilitate problem identification, contextualization based on complexity, and the application of methodologically sound solutions (Zúñiga, 2015). Thus, enhancing CT proficiency is expected to address the challenge of constructing adequate instruments for measuring and evaluating CT due to the existing conceptual diversity (Dwyer et al., 2014; Ossa-Cornejo et al., 2017). In today’s rapidly evolving information society, the ability to identify individuals with adequate CT skills is more crucial than ever.