Abstract

Public and patient engagement, alongside activities such as knowledge translation and mobilization, is becoming a standard requirement for health sciences and services research funding (Domecq et al., 2014; Frank et al., 2015; Tetroe et al., 2008). While some mature methodologies, such as participatory research, embrace non-researcher involvement in research, new methods are also emerging to encourage public involvement. Crowdsourcing, “an online, distributed problem solving and production model” (Brabham, 2008), is one method through which researchers are engaging the public. The term citizen science, defined as “a form of research collaboration involving members of the public in scientific research projects to address real-world problems” (Wiggins & Crowston, 2011), is frequently used synonymously with crowdsourcing and addresses the same notion of engaging the public in research.

Reviews of the literature across different disciplines have found crowdsourcing being used for data collection, processing, and analysis, as well as for tasks such as problem solving, surveillance/monitoring, and surveying (Crequit et al., 2018; Hossain & Kauranen, 2015; Ranard et al., 2014). Researchers use websites such as TurkPrime, Prolific.co, and Crowdcrafting.org to engage the crowd for the purposes of recruitment, data collection, or data analysis for their studies (Bassi et al., 2020; Crequit et al., 2018; Litman et al., 2017; Peer et al., 2017). Studies on crowdsourcing tend to focus on its use of software, technology, and online platforms, or on its application for the purposes previously noted (Hossain & Kauranen, 2015). There is a need for further exploration to understand how best to use crowdsourcing for research, as there is limited guidance for researchers who undertake crowdsourcing for the purpose of research studies (Buettner, 2015; Law et al., 2017). Numerous authors have identified gaps in the research related to crowdsourcing, including a lack of decision aids to assist researchers using crowdsourcing and a lack of best practice guidelines (Buettner, 2015; Law et al., 2017; Sheehan, 2018).

In this study, we sought to explore crowdsourcing as a research method by understanding how and why it is being used. We used a modified Delphi technique to identify how crowdsourcing is being applied in practice by researchers and how it aligns with specific research methods. We aimed to develop a conceptual framework for crowdsourcing within traditional and existing research approaches, as a first step toward supporting researchers using this method.

Method

The Delphi Technique

The Delphi technique, developed in the 1950s by the RAND Corporation, is a method used to achieve consensus among experts (Okoli & Pawlowski, 2005) and to summarize the array of positions that these experts have taken on a topic (Mullen, 2003). It is also frequently used where little evidence exists, or where the knowledge base is limited. Linstone and Turoff (2002) note that it facilitates group communication to enable collective problem solving. Furthermore, according to the Encyclopedia of Research Design, the Delphi method attempts to understand what

Given that we are looking to identify the perspectives that exist among experts on the use of crowdsourcing for research, and establish consensus around preliminary definitional characteristics, we considered the Delphi technique to be particularly appropriate to our design. Furthermore, the Delphi technique had the potential to provide insights within this exploratory study, which will scaffold the induction of a general model or theory (Steinert, 2009). The exploratory data collected allow for the development of a conceptual model. In addition, a panel study (as opposed to the responses of any individual expert) may provide the most relevant “answers” to our research questions, given the limited numbers of experts in this area.

Identifying the expert panel

According to Rowe and Wright (2001), the composition of a Delphi panel should be heterogeneous, to ensure members’ combined experience and knowledge represents the full research domain. The long-standing debate of who qualifies as an expert for the purpose of a Delphi has resulted in very broad inclusion criteria such as “informed individuals” as well as more narrowly defined criteria, such as “specialists in a field” (Baker et al., 2006). The nascent nature of crowdsourcing in research required the term “expert” to be interpreted broadly, and we elected to include individuals with experience in the application of crowdsourcing for research, as well as individuals who had studied the topic of crowdsourcing itself. Given that the purpose of this study was to identify salient characteristics of crowdsourcing within research settings, we conducted a literature review to create a list of potential expert panelists, comprising researchers and/or academics who either use crowdsourcing in their research methods, or research the topic of crowdsourcing. Figure 1 presents a graphical depiction of how panel members were selected.

Figure 1. Participant identification and recruitment process.

First, a list of published studies was assembled by using the keyword terms “crowdsourc*” and (“medical” or “health”), with the filters “English” and “peer-reviewed.” This search resulted in 275 articles identified in PubMed, and 126 articles in ProQuest, for a total of 401 articles, 15 of which were duplicates. The titles of these articles were then reviewed for relevance, and 154 articles were removed that neither discussed the use of crowdsourcing, nor employed crowdsourcing as a primary research methodology. An additional 99 articles were removed after abstract review: editorials and commentaries, articles that referenced the term crowdsourcing only in a non-substantive manner (primarily in a broader social media context), articles that focused on crowdfunding (which is not considered to be crowdsourcing for the purposes of this study), and articles that did not deploy crowdsourcing for their research. This resulted in a total of 133 articles.

From those articles, email addresses for the first and corresponding authors were located (where publicly available). Although a total of 203 researchers were solicited to participate in this research study, 20 emails “bounced back,” meaning that a maximum of 183 emails were delivered. Of the recipients of these 183 emails, 18 individuals agreed to participate in the study.
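The screening and recruitment counts reported above can be checked with a short tally. The sketch below uses only the figures given in the text; the step labels and variable names are illustrative, and it assumes that the 15 duplicates were removed before title review, which is the reading under which the reported total of 133 articles follows.

```python
# Counts reported in the text; the step labels are illustrative.
screening = [
    ("PubMed records", +275),
    ("ProQuest records", +126),
    ("duplicates removed", -15),        # assumed to precede title review
    ("removed at title review", -154),
    ("removed at abstract review", -99),
]

remaining = 0
for label, change in screening:
    remaining += change
    print(f"{label:<28} {change:+5d} -> {remaining}")

articles_included = remaining

# Recruitment: invitations sent, undeliverable emails, and acceptances.
solicited, bounced, agreed = 203, 20, 18
delivered = solicited - bounced
response_rate = agreed / delivered
print(f"delivered: {delivered}, agreed: {agreed}, "
      f"response rate: {response_rate:.1%}")
```

On these figures, the 18 acceptances correspond to roughly one in ten of the delivered invitations.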

Crowdsourcing Framework

Working from the more than 40 different definitions for the term crowdsourcing, Estellés-Arolas and González-Ladrón-De-Guevara (2012) developed an integrated definition of crowdsourcing which consists of eight discrete characteristics (p. 197):

there is a clearly defined crowd (size and typology – skills/knowledge of crowd);

there exists a task with a clear goal (task-based, what the participant has to do);

the recompense received by the crowd is clear (what do they get in return – material or not);

the crowdsourcer is clearly identified (any entity or individual);

the compensation to be received by the crowdsourcer is clearly defined (what is the benefit to the crowdsourcers);

it is an online assigned process of participative type (type of process);

it uses an open call of variable extent (type of call); and

it uses the internet (medium).

These characteristics serve as a starting point for constructing a framework for understanding crowdsourcing within a research context. In the absence of a commonly agreed-upon definition for crowdsourcing, these characteristics provide a common language to help facilitate an understanding of its application. Despite the information science undertone, the application of these characteristics within a research context was deemed appropriate, given that they were informed by a non-discipline-specific review of the literature. Furthermore, the characteristics were selected because of the comprehensive and integrative process by which the authors developed them (Estellés-Arolas & González-Ladrón-De-Guevara, 2012).

Procedure

The Delphi process includes a minimum of two rounds of questionnaires, with each subsequent round building on the previous responses (Fefer et al., 2016). All forms and letters were reviewed and pre-tested for clarity prior to data collection. We completed two rounds of questionnaires and content analysis to identify domains of consensus across the experts solicited for their opinion on the use of crowdsourcing for research. For both rounds, a mix of question types was used, including open-ended, edit, rank, and rate. The questions for both rounds can be found in the supplemental appendix. For each Delphi round, data were generated and analyzed by the authors of this article. Round 1 questions were general, aiming to gain a broad understanding of the ways in which crowdsourcing is being used in research, as well as its key characteristics. Participants were asked to identify key characteristics of crowdsourcing for research, and to rate the importance of characteristics identified by Estellés-Arolas and González-Ladrón-De-Guevara (2012). The data from the first round were summarized and shared with panel members to use in the next round. Round 2 questions aimed to further understand why researchers were using crowdsourcing and to move toward a framework for using crowdsourcing in research, by trying to improve upon and adapt the characteristics identified by Estellés-Arolas and González-Ladrón-De-Guevara (2012). The threshold for consensus on positions was set at 70% for rating-based questions. A third round was not undertaken, as it was determined that further consensus was unlikely based on the responses in the first two rounds.
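The text states the 70% consensus threshold but not how it was applied to individual ratings. As a minimal sketch of one common way to apply such a threshold, the function below treats consensus as reached when at least 70% of panelists rate an item at or above a cut-off score. The cut-off of 70 on a 0 to 100 scale, the function name, and the example ratings are all assumptions introduced for illustration; they are not the study's procedure or data.

```python
def consensus_reached(ratings, cutoff=70, threshold=0.70):
    """Return (share, reached).

    share   -- proportion of ratings at or above `cutoff`
    reached -- True when that proportion meets `threshold`

    Both `cutoff` and the share-based rule are assumptions; the source
    reports only that the consensus threshold was 70%.
    """
    if not ratings:
        raise ValueError("ratings must not be empty")
    share = sum(r >= cutoff for r in ratings) / len(ratings)
    return share, share >= threshold


# Made-up ratings for illustration only; not data from the study.
example = [90, 85, 70, 95, 60, 80, 75, 100, 40, 88]
share, reached = consensus_reached(example)
print(f"{share:.0%} of ratings at or above the cut-off; consensus: {reached}")
```

An alternative reading, in which the mean rating is compared against 70, would need only the `share` line replaced with an average.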

This study utilized Qualtrics Survey Software to distribute the questionnaires to the expert panelists via email. The use of the software enabled rapid analysis of responses and allowed for the Delphi process to be conducted in an efficient manner. Procedures employed within this study (including both recruitment and informed consent) were approved by the non-medical research ethics board at the University of Western Ontario (protocol # 108655).

Results

Characterizing the Expert Panelists

The characterization of panel expertise is important in the context of the Delphi method, given its reliance on expert opinion. The researchers identified as experts in crowdsourcing were deemed as such based on the nascent nature of the subject matter. Of the 18 respondents who agreed to participate, 15 completed the Round 1 survey and 12 completed the Round 2 survey. Survey participants were a mix of researchers who had used crowdsourcing in their research (

When asked to rate level of experience in the application of crowdsourcing on a scale from 0 to 100, participants’ scores ranged from 21 to 100 (

As demonstrated through these quotes from the panelists, their self-rated experience ranged from applying it for research purposes to focusing on a specific aspect of crowdsourcing.

The Use of Crowdsourcing for Research

Panelists identified numerous uses of crowdsourcing in research, based on the literature and their own experience, including recruiting research participants, data collection, data analysis, and developing interventions. Individually, panelists used crowdsourcing for participant recruitment, data collection, and data analysis. In some instances, the purpose of crowdsourcing in their research studies was tied to the fulfillment of traditional participant or subject roles, such as recruitment and the provision of data:

This type of role includes inviting the crowd to complete tasks such as questionnaires, providing personal information, and undertaking other online activities to generate data for research purposes. For example,

Panel members who undertook clinical or medical quantitative research studies tended to identify these types of uses for crowdsourcing. In this case, where the primary purpose is to access participants, crowdsourcing differs little from other recruitment methods, and thus requires similar considerations with regard to methodological rigor and appropriateness.

Researchers are also using crowdsourcing to engage the crowd in activities such as data collection and analysis, activities that have been more traditionally the role of researchers. Panelists noted,

Other panelists described uses similar to those identified in the literature, such as,

In these instances, the crowd supports the research study through the provision of their knowledge, experience, and skills. There is a deeper level of engagement and perhaps an underlying trust factor that the crowd has the capability to undertake such tasks. Leveraging the data collection and analytical capabilities of the crowd is, however, contingent upon the nature of the research, which ranges from simple tasks such as tracking and monitoring to more complex types of problem solving.

In limited instances, researchers are building capacity through the engagement of the crowd to undertake co-researcher type activities, and providing education and training to the crowd:

While this type of research capacity building is common practice with qualitative research methods such as participatory action research, it was referenced by only one panelist.

The least frequently identified uses of crowdsourcing in research were study and instrument design, with expert panel members citing concerns about the crowd’s lack of expertise and knowledge to undertake such work. Most of the expert panelists noted the distinction between the roles of the crowd versus those of the researchers. This underscores the fact that specific research expertise and skills are required for many studies, and so areas such as study and instrument design, or even data analysis in some instances, may extend beyond the capabilities of the crowd. However, this blurring of roles is common in non-research crowdsourcing activities (Howe, 2009) and was acknowledged by panelists:

In addition, panelists distinguished between the crowd as general members of the public and a crowd of experts. As one panelist noted,

The Benefits and Challenges of Using Crowdsourcing for Research

Panelists were asked why they used crowdsourcing and to identify some of the benefits and challenges associated with its use. Members of the Delphi panel tended to view the crowd as a supplement to the capacity and capabilities of professional researchers; in other words, participants were seen to be an on-demand pool of resources. Benefits and challenges were categorized into five broad themes: process, people, knowledge, data, and experience. Table 1 summarizes panelist responses within those categories.

Table 1. Benefits and Challenges of Using Crowdsourcing in Research.

Based on feedback from the panel, the use of crowdsourcing for research is an effective and efficient process to overcome barriers such as time limitation, volume of data, and costs, regardless of how the crowd is being leveraged.

The Characteristics of Crowdsourcing for Research

In an effort to identify a potential framework for crowdsourcing in research, panelists were asked to indicate the importance of each of the characteristics of crowdsourcing as identified by Estellés-Arolas and González-Ladrón-De-Guevara (2012) in the research context by rating it on a scale of 0 to 100, with 0 being the lowest rating and 100 being the highest. Table 2 summarizes the rating scores and provides the average for each characteristic.

Table 2. Importance of Characteristics of Crowdsourcing in Research.

The only characteristic that panelists agreed was important within the research context was “there exists a task with a clear goal,” which had an average rating of 83.62. When asked to explain the lack of consensus on which characteristics of crowdsourcing are important for research, panelists gave responses in which three common themes emerged: issues related to the definitions of terms, the specific task being assigned to the crowd, and the nature of the study in which crowdsourcing is being applied. On issues related to the definitions of terms and the lack of clarity around language, panelists noted,

Panelists also noted that disagreement over which characteristics of crowdsourcing are important for research could result from the specific task being assigned to the crowd:

Finally, the variation in responses from panelists was also attributed to the nature of the study in which the crowdsourcing was being undertaken:

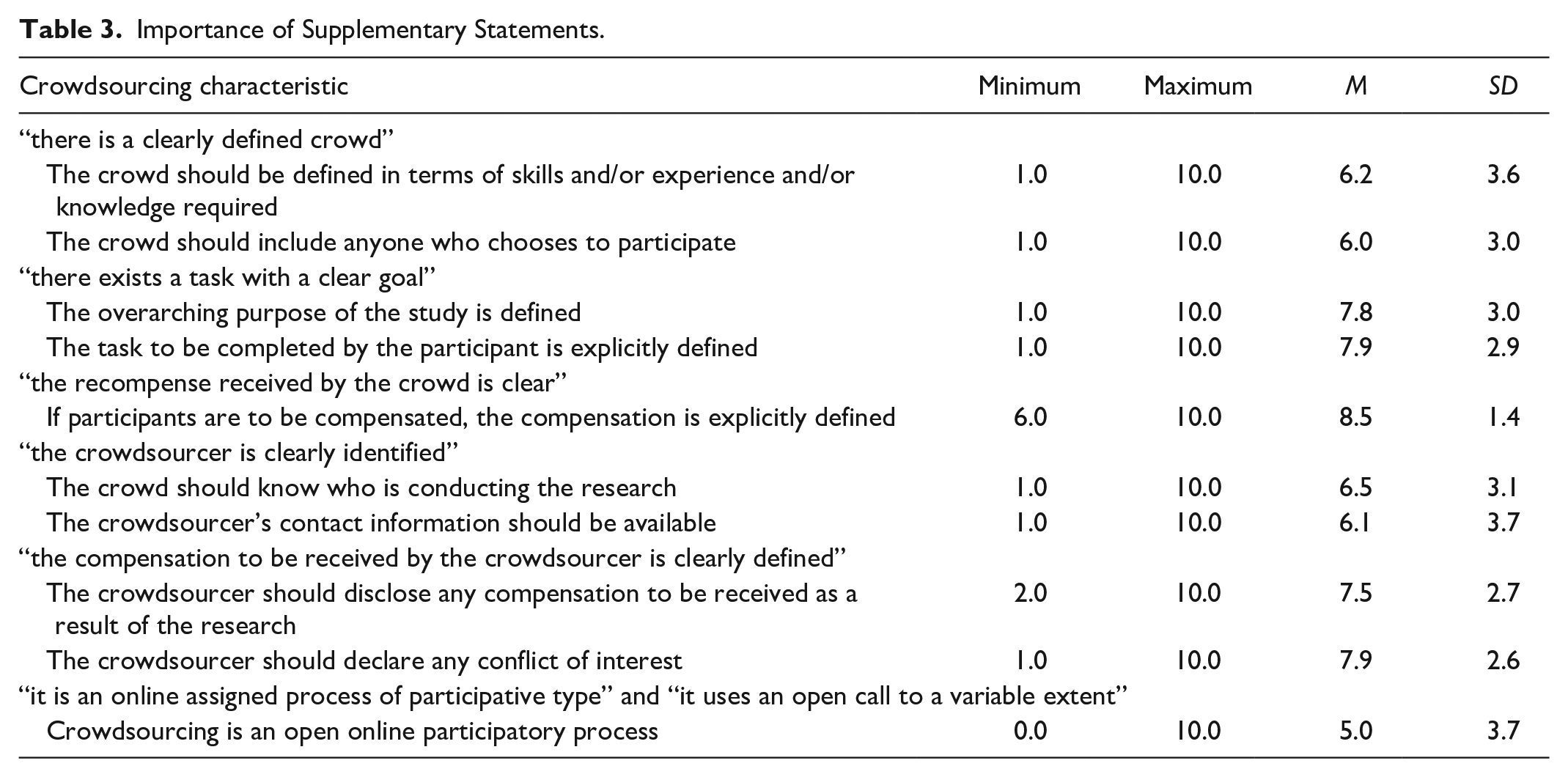

Given the lack of consensus around the characteristics of crowdsourcing as defined by Estellés-Arolas and González-Ladrón-De-Guevara (2012), expert panel members were provided with supplementary descriptors and statements aimed at clarifying each of the characteristics for the research context and asked to rate its importance in relation to the description of the original characteristic on a scale of 1 to 10 (1 being

Importance of Supplementary Statements.

The only characteristics where the panelists thought the supplementary statements improved and clarified the original statements were “there exists a task with a clear goal,” “the recompense received by the crowd is clear,” and “the compensation to be received by the crowdsourcer is clearly defined.” When asked to provide comments and/or edits to each of the supplementary statements, the majority of the comments suggested the supplementary statements did not add to or improve the characteristics.

For the characteristics related to an open call, or using online tools (e.g., the internet), panelists suggested other channels that could be used to facilitate crowdsourcing in research, including texting, audience response in a live setting, in-person events, traditional media, sensor systems, community meetings, and recruitment from public places. As panelists noted,

However, panelists did appear to support the idea of an open call, with comments such as

Again, this raises the matter of composition of the crowd.

Finally, when asked whether the same ethical standards apply when using crowdsourcing in research studies, 67% of the panelists agreed, 8% were uncertain, and 25% disagreed. The panelists who disagreed noted that sometimes crowdsourcing is used because it is easier from a requirements perspective, as it is not considered human subjects research.

Discussion

This modified Delphi study demonstrates a broad range of research applications for crowdsourcing, alongside the various benefits and challenges associated with its use. While no general consensus was achieved on the characteristics of crowdsourcing for research purposes, the findings revealed gaps in knowledge regarding the application of crowdsourcing in research from a methodological and methods perspective. This led us to develop a conceptual framework for researchers to consider when deploying crowdsourcing in their studies, based on whether the research study is quantitative or qualitative and how the use of crowdsourcing would align with existing research paradigms. Recognizing that we are in the early stages of exploring crowdsourcing as a research tool, this framework aims to contextualize crowdsourcing as a research method based on how it is currently being used by researchers within existing forms of inquiry and research paradigms. As such, the discussion also presents considerations related to other methodological questions that arose from the modified Delphi process.

The way in which crowdsourcing is used in research is contingent upon the task that is assigned, and this is fundamentally driven by the needs of the research study. These uses of crowdsourcing can be mapped along a continuum (Figure 2). At one end of the continuum, crowdsourcing is used for basic research purposes such as subject or participant recruitment, while at the other end, crowdsourcing serves as a mechanism for capacity building and co-researcher type activities. Moving from left to right, the level of expertise, skill, and experience required of the crowd increases. Considering the research task with the level of crowd expertise, skill, and experience allows for the role of the crowd to be defined as one of subject/participant, citizen scientist, or co-researchers. Furthermore, these research tasks and roles must be considered in the context of the research methodology—quantitative or qualitative—as each has a different set of implications. The application of crowdsourcing in research should align philosophically and methodologically with the research paradigm in which it is being deployed and therefore should align with the standards of those methods.

Figure 2. Continuum of crowdsourcing in research.

This spectrum aligns with the positivist to critical theory paradigms continuum originally created by Lincoln and Guba (2011), and later modified by Heron and Reason (1997), with the addition of the participatory paradigm. The continuum allows for fluidity between the categories where the complexity of the task dictates where it rests along the continuum. Furthermore, the role of the researcher also evolves along the continuum, from sole conductor of a research study to a more collaborative model that may entail activities such as educating and training the crowd to facilitate their participation.
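The continuum can also be read as an ordered lookup from research task to crowd role. The sketch below is one possible encoding, offered only as an illustration: the placement of each task is inferred from the prose rather than taken from Figure 2, and the rule that a study's role is set by its most demanding task is an assumption, as are all names in the code.

```python
from enum import IntEnum


class CrowdRole(IntEnum):
    # Ordered left to right along the continuum: a higher value means
    # more expertise, skill, and experience is required of the crowd.
    PARTICIPANT = 1
    CITIZEN_SCIENTIST = 2
    CO_RESEARCHER = 3


# Hypothetical task-to-role placement, inferred from the prose.
TASK_ROLE = {
    "participant recruitment": CrowdRole.PARTICIPANT,
    "provision of data": CrowdRole.PARTICIPANT,
    "data collection": CrowdRole.CITIZEN_SCIENTIST,
    "data analysis": CrowdRole.CITIZEN_SCIENTIST,
    "capacity building": CrowdRole.CO_RESEARCHER,
}


def role_for(tasks):
    """Return the most demanding role implied by a set of tasks
    (an assumed rule, not one stated in the article)."""
    return max(TASK_ROLE[t] for t in tasks)


print(role_for(["provision of data", "data analysis"]).name)
```

Encoding the roles as ordered values reflects the point that roles sit along a continuum rather than in separate categories, so a study can be compared or moved along it as its tasks change.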

The task, therefore, will also dictate the composition and size of the crowd. Where the task is complex, for example, developing algorithms for the prediction of disease progression for Amyotrophic Lateral Sclerosis (Kuffner et al., 2015), the task is likely to draw experts in the field who are qualified to address the challenge and have an interest in doing so, thus limiting the size of the crowd. At the opposite end of the spectrum, where the task is more general, such as rating food choices (Turner-McGrievy et al., 2015), the crowd is likely to be larger, with a range of skills and backgrounds. Therefore, it is important for researchers to clearly articulate the goal of the study, the task that is being assigned to the crowd, and how the task relates to the study, so participants can self-select based on what they perceive they can contribute. Furthermore, researchers’ need to invest in crowd capacity building will be determined by the complexity of the task, or a requirement for specific skills.

When panelists’ use of crowdsourcing for research, and its benefits, were cross-referenced with the published literature on the topic, it became apparent that the crowd was rarely (if ever) engaged in a fully participatory, collaborative, co-research approach by the experts solicited to participate in this study. Concepts related to building public capacity and training the crowd, knowledge mobilization, and two-way engagement between professional scientists and citizen scientists appeared to be tertiary objectives. Thus, leveraging the crowd to build capacity for research in the community, and to mobilize knowledge, appear to be underutilized opportunities, particularly given research that suggests that user-driven research can accelerate and improve the innovation adoption process of a solution or new knowledge (Celi et al., 2014).

Definitions of Crowdsourcing for Research

One possible way to consider crowdsourcing is in the context of the research paradigm in which the crowd will be engaged. The paradigm thus defines the roles of the crowd. If the role of the crowd can be defined in generally acceptable research terms (e.g., participant, data collection, analysis, study design), it becomes possible to develop a lexicon or terminology that aligns with the roles and paradigms, from research subject or participant, to citizen scientist, to co-researcher.

One particular characteristic of crowdsourcing, its online nature and use of the internet, warrants mentioning in the context of defining crowdsourcing for research. Despite the vast majority of definitions referencing the online and internet aspects of crowdsourcing, panelists expanded the scope to include other mechanisms and channels, while maintaining the open call component to enable almost anyone who wishes to participate to be able to do so. This expansion aligns with inclusivity and equity principles of this type of research.

Issues of Integrity and Quality

Ideally, the use of crowdsourcing in research studies should have the same demands for integrity and quality as do studies that deploy other methods. When used for the purposes of recruitment, researchers should acknowledge and recognize issues related to sample representativeness, self-selection, and generalizability, where these are important factors based on the research study design. As quantitative and qualitative research methodologies and approaches have differing views on participant recruitment, the way in which each researcher addresses this will be contingent upon his or her area of study. Similarly, issues related to quality of data will likely be addressed according to research methodology or approach. Various methods to ensure quality have, however, been identified, including bringing reported data together with diagnostic or other clinical measures (Chunara et al., 2013), in-house calculations and physician verification (Swan, 2012), and reputation metrics for evaluating user-generated content (McCoy et al., 2014). While research suggests the quality of crowdsourced data is similar to that of non-crowdsourced data (Behrend et al., 2011; Swan, 2012), researchers should build mechanisms to ensure quality into their study design where appropriate.

Adherence to Research Standards

When undertaken for research purposes, crowdsourcing should be held to the same ethical standards as other approaches. The question remains, however, whether the task being assigned positions the crowd as participants, citizen scientists, or co-researchers, and whether this positioning informs how and which ethical practices apply. Ideally, the defined role of the crowd would dictate which ethical practices apply. Where the crowd is actively involved in complex areas of the study, are they research participants and/or researchers? And do the standards of human ethics still apply?

One less murky area is the need for transparency around the benefits for both the participants and researchers. The expert panel identified the need to be explicit in identifying the compensation, monetary or otherwise, for the crowd’s participation as well as for the researchers. Thus, regardless of the

Limitations

There are numerous definitional challenges when considering the use of crowdsourcing in research. Estellés-Arolas and González-Ladrón-De-Guevara (2012) provided a common definition and framework which, despite being framed using an information technology context, can be adapted to other research domains (Bassi et al., 2019). A key limitation of this definition, however, is its limited applicability to crowdsourcing activities that take place beyond online and internet channels. Thus, additional research is required on the application of non-internet-based crowdsourcing for research.

The Delphi panel experts may have interpreted the questions differently based on their own experiences. In some instances, the responses provided reflected a lack of certainty about what the survey questions were asking and how they specifically pertained to the panelists’ work. There was also a range of knowledge and experience in using crowdsourcing for research among the panelists, making it difficult to come to consensus. This problem was exacerbated by the relative novelty of crowdsourcing and the limited body of literature on its use in health-related research.

Directions for Future Research

As crowdsourcing and other methods are explored for the purposes of research, the research context risks becoming lost in the novelty and attractiveness of possibilities presented by information technologies and new communications channels. While these new opportunities should be embraced, this should be done in a way that maintains the integrity of research paradigms. The ease with which researchers have access to the data and capabilities beyond their institutions and communities, through the crowd, should be leveraged in a responsible manner.

Future research should supplement the information uncovered in this study with case studies and interviews of researchers using crowdsourcing. This may provide an opportunity to further explore and examine the implementation of crowdsourcing in specific settings. This additional research could also highlight contextual differences that may depend on the research area in which crowdsourcing is deployed.

Conclusion

As evidenced by the Delphi panelists and the current body of literature, the multitude of purposes for which crowdsourcing is being used for research across various disciplines presents significant opportunities for researchers. Researchers from different fields are using crowdsourcing for everything from quantitative surveys to more qualitative participatory purposes. Furthermore, as a nascent approach, the concept of crowdsourcing is frequently interpreted as being simply an online platform (Bassi et al., 2020). The challenge for researchers, however, is to consider all of the characteristics of crowdsourcing, not limited to the online, technological, or platform considerations, and more holistically consider alignment between theoretical perspective and research methods (Finlay & Ballinger, 2006) to ensure its appropriate use for research. The conceptual framework presented within this article provides researchers with a first step toward considering how they will use crowdsourcing within traditional and existing research approaches.

Supplemental Material

sj-pdf-1-sgo-10.1177_2158244020980751 – Supplemental material for Crowdsourcing for Research: Perspectives From a Delphi Panel

Supplemental material, sj-pdf-1-sgo-10.1177_2158244020980751 for Crowdsourcing for Research: Perspectives From a Delphi Panel by H. Bassi, L. Misener and A. M. Johnson in SAGE Open

